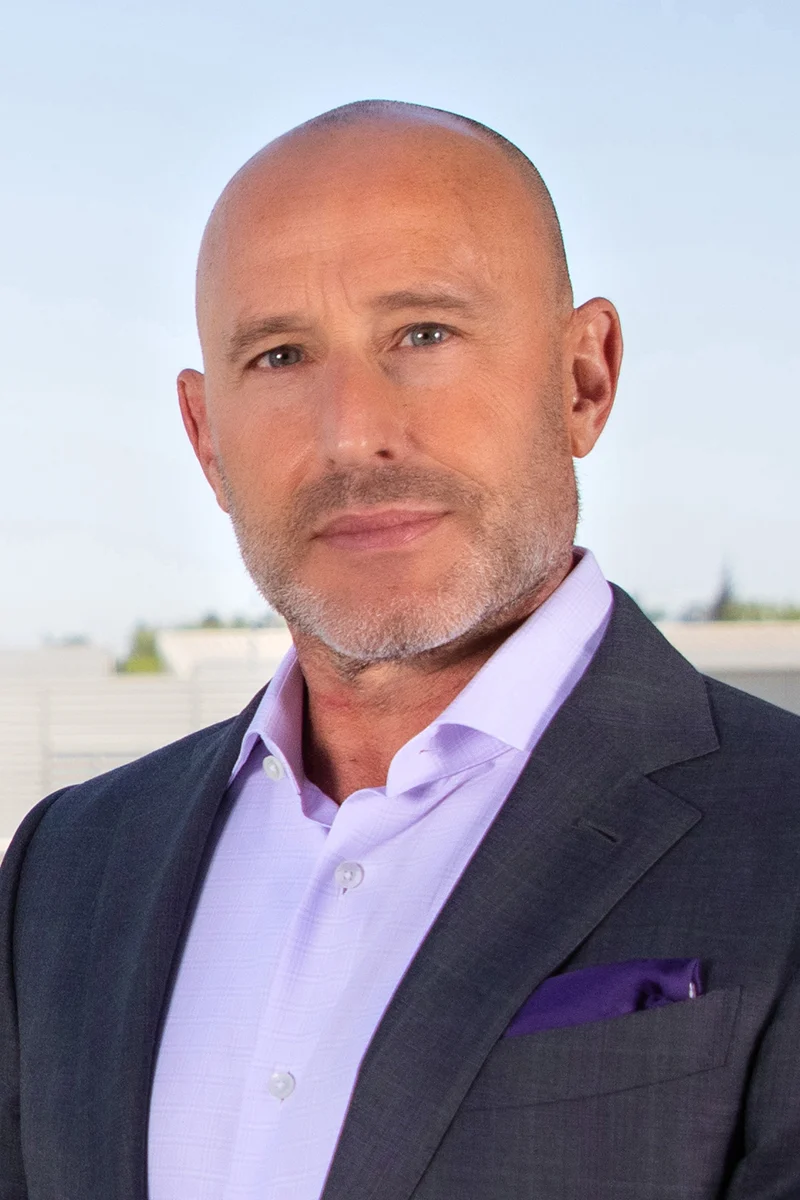

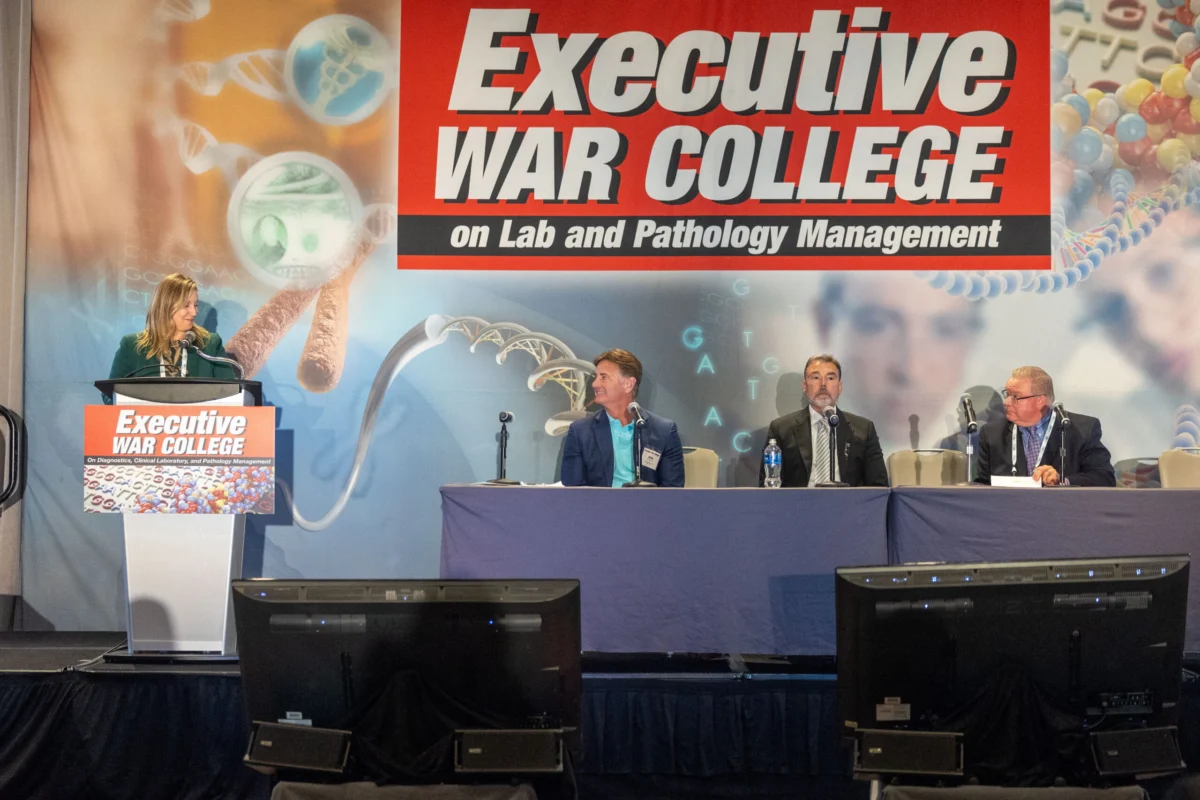

A pronouncement by Mitchell H. Katz, MD, President and CEO of NYC Health + Hospitals, the largest public healthcare system in the United States, has ignited a fierce debate within the medical community over the accelerating integration of artificial intelligence (AI) into diagnostic services. Dr. Katz’s stance, articulated at a recent panel hosted by Crain’s New York Business, signals a readiness to deploy AI to interpret imaging studies such as mammograms and X-rays, with the goal of significantly reducing labor costs amid soaring demand for diagnostic services. This ambition promises substantial efficiencies and expanded access to care, particularly in underserved communities, but it also confronts deep-seated concerns among medical professionals about diagnostic accuracy, patient safety, and the irreplaceable value of human clinical judgment.

The Vision for AI-Driven Radiology

Dr. Katz’s remarks highlighted AI’s current capabilities, stating, "We could replace a great deal of radiologists with AI at this moment, if we are ready to do the regulatory challenge." This declaration underscores a critical juncture where technological advancement meets systemic pressures. NYC Health + Hospitals, a sprawling network comprising 11 acute care hospitals, five skilled nursing facilities, and numerous community-based clinics, serves over one million New Yorkers annually. For such a massive system, the potential for "major savings" through automation is a powerful driver, especially as healthcare costs continue their upward trajectory, with national health expenditure projected to reach $6.8 trillion by 2030, according to the Centers for Medicare & Medicaid Services (CMS).

The proposed model envisions a paradigm shift in which AI assumes the primary role of image interpretation, flagging abnormalities for subsequent review by human radiologists. This "AI-first, specialist-second" approach, Dr. Katz suggested, could not only lower operational costs but also expand access to critical screenings, notably for breast cancer. The current shortage of radiologists, exacerbated by an aging population requiring more imaging and an increasing rate of radiologist burnout, makes the prospect of AI augmentation particularly appealing. The Association of American Medical Colleges (AAMC) has projected a shortage of up to 124,000 physicians by 2034, including up to 48,000 in primary care, with radiology being one of the specialties facing significant workforce strain.

Empirical Support and Early Adopters

While Dr. Katz’s comments represent a bold vision, they are not entirely without precedent or empirical support. Other hospital leaders are already piloting and reporting promising results from AI-assisted diagnostics. David Lubarsky, MD, MBA, CEO of Westchester Medical Center Health Network, shared compelling data regarding his organization’s experience with AI-assisted mammography interpretation. He noted that for low-risk women, if an AI-interpreted test returns negative, the error rate is remarkably low—approximately three false negatives out of 10,000 cases. Dr. Lubarsky asserted, "The technology is actually better than human beings" in these specific low-risk scenarios, providing a powerful testament to AI’s diagnostic precision in certain contexts.
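To put the quoted figure in perspective, the short calculation below converts "three false negatives out of 10,000" into a percentage. It is only an illustrative sketch: it assumes the 10,000 cases are AI-negative reads in low-risk women, which is one reading of Dr. Lubarsky's remark, and the function name is made up for this example. It says nothing about how often the AI catches cancers that are present (sensitivity), which the quoted figure does not address.

```python
def false_omission_rate(false_negatives: int, negative_reads: int) -> float:
    """Share of negative reads that later turn out to be missed positives."""
    if negative_reads <= 0:
        raise ValueError("negative_reads must be positive")
    return false_negatives / negative_reads


# Figures as quoted in the article; treating the denominator as
# AI-negative reads is an assumption made for this illustration.
rate = false_omission_rate(3, 10_000)
npv = 1 - rate  # negative predictive value under the same assumption

print(f"False omission rate: {rate:.2%}")        # 0.03%
print(f"Negative predictive value: {npv:.2%}")   # 99.97%
```

Under that assumption, roughly 3 in every 10,000 women told their result is negative would in fact have a missed finding, which is the number a health system would weigh against the comparable rate for human readers.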

The advancement of AI in medical imaging has been a journey spanning decades, moving from rudimentary pattern recognition in the 1980s to sophisticated deep learning algorithms today. Modern AI tools, powered by vast datasets of medical images and clinical outcomes, can detect subtle anomalies, quantify disease progression, and assist in diagnostic pathways with increasing accuracy. The U.S. Food and Drug Administration (FDA) has already cleared numerous AI-powered medical devices, including algorithms for detecting intracranial hemorrhage on CT scans, identifying pneumothorax on chest X-rays, and assisting in breast cancer detection on mammograms. These clearances signal a growing acceptance and validation of AI’s utility in clinical settings, albeit usually under the supervision of a human clinician.

The Economic Imperative and Workforce Challenges

The drive towards AI integration is fundamentally rooted in the economic pressures and operational challenges facing modern healthcare systems. Beyond the sheer volume of diagnostic tests, which continues to grow annually, hospitals grapple with rising labor costs, recruitment difficulties, and the need to deliver care efficiently across diverse patient populations.

For large public systems like NYC Health + Hospitals, which often serve a disproportionately high number of uninsured or underinsured patients, cost containment is not merely a financial goal but a mission-critical imperative to sustain access to care. AI, by potentially automating high-volume, repetitive tasks, offers a pathway to reallocate human resources to more complex cases, patient consultations, or areas where human empathy and intricate clinical judgment are indispensable. This could mitigate the impact of workforce shortages, reduce turnaround times for diagnostic results, and ultimately enhance patient throughput, thereby improving access to care in areas where specialist availability is limited.

Regulatory Evolution and Ethical Crossroads

The widespread adoption of AI in healthcare, particularly in a primary diagnostic role, necessitates a significant evolution of the existing regulatory framework. Dr. Katz’s call for regulators to permit AI to interpret imaging independently highlights a critical debate: how do we balance innovation and efficiency with patient safety and accountability?

Current regulatory bodies like the FDA typically clear AI as a device or tool to assist physicians, not as an independent practitioner. Shifting to an "AI-only" primary interpretation model would require new frameworks for validation, oversight, and liability. Questions arise concerning:

- Algorithmic Bias: Ensuring AI models are trained on diverse datasets to avoid perpetuating or exacerbating health disparities based on race, gender, or socioeconomic status.

- Continuous Learning: How to regulate AI systems that continuously learn and adapt, potentially altering their performance over time.

- Liability: Who is responsible when an AI system makes an error that results in patient harm: the developer, the hospital, or the overseeing physician (if one exists)?

- Patient Trust: How will patients perceive AI-driven diagnoses, and what level of transparency and informed consent will be required?

Medical ethicists warn that while AI offers immense potential, its implementation must be guided by principles that prioritize patient well-being, equity, and the preservation of the human-centric nature of medicine.

The Pushback: Concerns over Patient Safety and Clinical Judgment

Despite the enthusiasm from some administrators, a significant segment of the medical community, particularly practicing radiologists, expresses profound skepticism and alarm. Mohammed Suhail, MD, of North Coast Imaging, delivered a scathing critique, stating, "Undeniable proof that confidently uninformed hospital administrators are a danger to patients: easily duped by AI companies that are nowhere near capable of providing patient care." Dr. Suhail’s blunt assessment reflects a deep concern that moving too quickly to AI-only reads could have catastrophic consequences. "Any attempt to implement AI-only reads would immediately result in patient harm and death, and only someone with zero understanding of radiology would say something so naive," he asserted.

These criticisms stem from several core arguments:

- Nuance and Context: Human radiologists bring years of clinical experience, intuition, and an understanding of a patient’s entire medical history to interpret an image. AI, while adept at pattern recognition, may lack the ability to synthesize disparate pieces of information, account for atypical presentations, or understand the full clinical context.

- Rare Diseases and Complex Cases: AI models excel with common conditions but may struggle with rare diseases or highly complex, ambiguous cases where subtle findings require expert human interpretation and judgment.

- Diagnostic Uncertainty: Medicine often involves degrees of uncertainty. Radiologists communicate these nuances to referring physicians, guiding further workup or management. Current AI outputs are often binary (positive/negative) and may not convey this critical subtlety.

- Professional Liability: The ethical and legal implications of misdiagnosis by an AI system remain largely unaddressed, creating a significant barrier to widespread independent adoption.

Professional organizations like the American College of Radiology (ACR) generally advocate for AI as an assistant to radiologists, enhancing efficiency and accuracy, rather than a replacement. They emphasize the need for rigorous validation, transparency, and continued human oversight to ensure patient safety and maintain the highest standards of care.

The Parallel for Clinical Laboratories: A Similar Horizon

The discussions surrounding AI in radiology are a harbinger for other diagnostic fields, particularly clinical laboratories. The underlying drivers (cost containment, workforce shortages, and the demand for faster turnaround times) are virtually identical to the pressures facing lab professionals.

Clinical laboratories, the backbone of diagnostic medicine, process billions of tests annually. They too grapple with a significant shortage of skilled medical technologists and pathologists, an aging workforce, and increasing test volumes driven by advancements in personalized medicine and molecular diagnostics. The prospect of an "AI-first, specialist-second" model, or even full automation of certain interpretive tasks, holds immense appeal for lab administrators seeking similar efficiencies.

Potential applications of AI in clinical laboratories mirror those in radiology:

- Digital Pathology: AI algorithms are already being developed and validated to analyze whole slide images, detect cancer cells, grade tumors, and quantify biomarkers, potentially revolutionizing cancer diagnosis and research.

- Automated Workflow and Interpretation: In hematology, AI can assist in classifying blood cells and flagging abnormal findings. In microbiology, AI could help identify pathogens or predict antibiotic resistance patterns. In molecular diagnostics, AI can aid in interpreting complex genomic data.

- Quality Control and Error Reduction: AI can monitor laboratory processes for anomalies, reducing human error and improving quality control.

- Predictive Analytics: AI can analyze vast datasets to predict disease outbreaks, identify at-risk patients, or optimize resource allocation.

If regulators pave the way for reduced physician oversight of AI in imaging, clinical labs could see accelerated adoption of AI-driven decision support, automated result interpretation, and potentially reduced hands-on review in certain testing workflows. This would necessitate a similar debate regarding accuracy, safety, and the evolving role of pathologists and medical technologists.

The Future of Medical Expertise: Augmentation, Not Replacement?

The debate sparked by Dr. Katz’s comments underscores a broader existential question for the medical profession: what is the future of human expertise in an increasingly AI-driven world? While the prospect of AI entirely replacing highly trained specialists elicits strong reactions, many experts believe the more likely scenario is "augmented intelligence"—where AI acts as a powerful co-pilot, enhancing human capabilities rather than supplanting them.

In this augmented future, radiologists and pathologists might spend less time on routine, high-volume image or slide interpretation and more time on complex cases, interdisciplinary collaboration, patient consultation, and research. Their roles would evolve to include overseeing AI systems, validating their outputs, and leveraging AI-generated insights to provide more personalized and precise patient care. This transformation would require new training paradigms for medical students and continuous upskilling for existing professionals, focusing on AI literacy, data science, and critical evaluation of AI outputs.

The journey towards integrating AI into core diagnostic functions is complex, fraught with both unparalleled opportunities and significant risks. As health systems continue to test AI-driven models in radiology, the broader diagnostics industry, including clinical laboratories, must closely observe, prepare, and actively participate in shaping the regulatory and ethical frameworks that will define the future of healthcare. The imperative remains to harness the power of AI to improve patient outcomes and access, while steadfastly defending the continued role of expert human oversight in ensuring quality, safety, and the compassionate delivery of care.
