The pursuit of powerful quantum computers, capable of solving problems intractable for even the most advanced classical machines, has long been hampered by the immense number of physical qubits required to perform reliable computations. However, a new error correction strategy suggests that future atom-based quantum computers might need far fewer physical qubits than previously projected, potentially accelerating their development and adoption. The approach, detailed in recent research, promises to make fault-tolerant quantum computing more attainable.

The Qubit Challenge: A Bottleneck in Quantum Computing

At the heart of quantum computing lies the qubit, the quantum analogue of the classical bit. Unlike classical bits, which can only represent a 0 or a 1, qubits can exist in a superposition of both states simultaneously, and can be entangled with other qubits, allowing for a vastly expanded computational space. This inherent power, however, comes with a significant drawback: qubits are exceptionally fragile. They are highly susceptible to environmental noise and decoherence, leading to errors that can quickly corrupt quantum computations.

To overcome this fragility, researchers employ quantum error correction codes. These codes use multiple physical qubits to encode a single, more stable "logical qubit." The redundancy provided by these extra physical qubits allows for the detection and correction of errors without disturbing the quantum information itself. Historically, the overhead for these error correction schemes has been substantial, often requiring hundreds or even thousands of physical qubits to create a single, reliable logical qubit. This requirement has been a major impediment to building quantum computers with the hundreds or thousands of logical qubits, and therefore potentially millions of physical qubits, needed for truly transformative applications, such as drug discovery, materials science, and complex financial modeling.
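The idea of trading redundancy for reliability can be illustrated with a classical analogue. The sketch below is not the scheme discussed in this article, and it is not a quantum code: real quantum codes cannot simply copy a state and must also handle phase errors. It is a plain repetition code with majority voting, and the function names (`encode`, `decode`, `logical_error_rate`) are chosen here purely for illustration.

```python
from math import comb


def encode(bit: int, n: int = 3) -> list[int]:
    """Spread one logical bit across n physical bits (n should be odd)."""
    return [bit] * n


def decode(bits: list[int]) -> int:
    """Majority vote: correct as long as fewer than half of the bits flipped."""
    return int(sum(bits) > len(bits) / 2)


def logical_error_rate(p: float, n: int) -> float:
    """Probability that more than half of n bits flip,
    assuming each flips independently with probability p."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# A single flipped bit is corrected by the majority vote.
word = encode(1)
word[0] ^= 1
recovered = decode(word)

# More redundancy lowers the logical error rate when p is small.
rate_3 = logical_error_rate(0.1, 3)
rate_5 = logical_error_rate(0.1, 5)
```

With an assumed physical error probability of 0.1, three bits give a logical error rate of roughly 0.028, and five bits lower it further. The cost is the overhead: every logical bit consumes several physical ones, which is the same trade-off that drives qubit counts in quantum error correction.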

A New Paradigm in Error Correction

The recent advancement challenges this long-standing assumption. The new error correction scheme, described in the recent research, demonstrates a dramatically improved ratio of physical qubits to logical qubits. Where traditional methods demand high redundancy, this approach relies on a more efficient encoding strategy. The implications are profound: if this method proves scalable and robust, it could drastically reduce the number of physical qubits needed for fault-tolerant quantum computing.

Imagine a scenario where a single logical qubit, the workhorse of quantum computation, can be reliably formed from a significantly smaller ensemble of physical qubits. This could mean that a quantum computer that was once thought to require, for instance, one million physical qubits for a specific task, might now be achievable with a few hundred thousand, or even tens of thousands. This reduction in hardware demands has a cascading effect on the feasibility of building and maintaining quantum computers.

The Atom-Based Advantage

This breakthrough is particularly relevant to quantum computing architectures that utilize individual atoms as qubits. Atom-based quantum computers offer several advantages, including high qubit coherence times and the potential for precise control over individual atomic states. However, the complexity of arranging and controlling large numbers of individual atoms has also presented engineering challenges. A reduction in the required number of physical qubits directly translates to a less complex atomic arrangement and potentially simpler control systems.

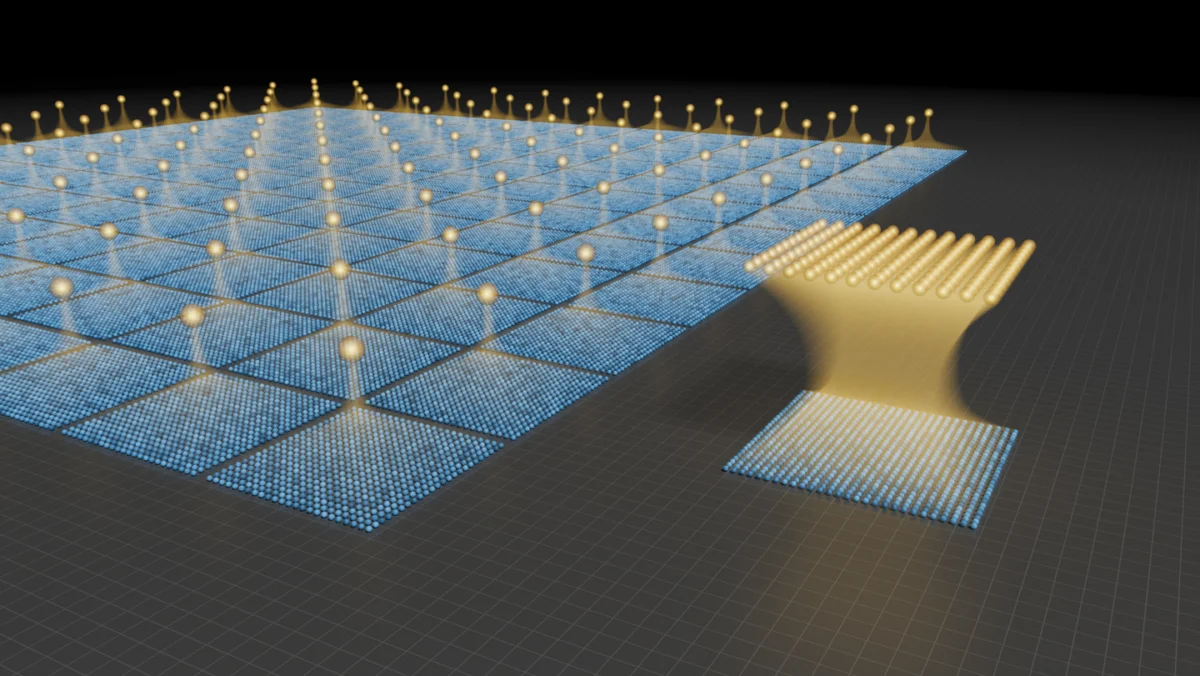

The visual representation accompanying this development starkly illustrates the difference. On one side, a dense grid of numerous blue physical qubits is shown, contributing to a single gold logical qubit. This depicts the high overhead of conventional error correction. On the other side, a much smaller collection of blue physical qubits is shown to reliably form a single gold logical qubit, showcasing the increased efficiency of the new scheme. This visual metaphor underscores the potential for significant hardware simplification.

Historical Context and Timeline

The quest for fault-tolerant quantum computing has been an ongoing scientific endeavor for decades. Early theoretical work in the 1990s laid the groundwork for quantum error correction, with pioneers like Peter Shor and Andrew Steane developing foundational codes. The first experimental demonstrations of basic error detection and correction began to emerge in the late 1990s and early 2000s. However, achieving the low error rates and high qubit counts necessary for practical fault-tolerant computation has remained a formidable challenge.

Over the years, various quantum computing modalities have been explored, including superconducting circuits, trapped ions, photonic systems, and neutral atoms. Each has its strengths and weaknesses in terms of qubit quality, connectivity, and scalability. The recent development in error correction appears to offer a significant boost to architectures like neutral atom systems, which have shown great promise in terms of qubit density and control.

An advance of this kind typically follows years of theoretical work and must then be validated experimentally. The next crucial steps will involve demonstrating the scalability of this new error correction scheme to a larger number of logical qubits and verifying its performance in complex quantum algorithms.

Supporting Data and Future Projections

The core claim rests on the improved ratio of physical qubits to logical qubits. To illustrate the potential impact, consider some hypothetical figures. If a previous state-of-the-art error correction scheme required 1,000 physical qubits for one logical qubit, and a future application demands 1,000 logical qubits, that would necessitate one million physical qubits. If the new scheme reduces this overhead to, for example, 100 physical qubits per logical qubit, the same 1,000 logical qubits would require only 100,000 physical qubits, a tenfold reduction.
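The hypothetical arithmetic above can be written out as a short calculation. This is only a sketch of the illustrative figures in the preceding paragraph, not data from the research; the function name `physical_qubits` and the overhead values are assumptions made for the example.

```python
def physical_qubits(logical_qubits: int, overhead: int) -> int:
    """Total physical qubits, given physical qubits per logical qubit."""
    return logical_qubits * overhead


LOGICAL_QUBITS = 1_000  # hypothetical application size

baseline = physical_qubits(LOGICAL_QUBITS, overhead=1_000)  # 1,000,000
improved = physical_qubits(LOGICAL_QUBITS, overhead=100)    # 100,000
reduction = baseline / improved                             # 10.0

print(f"baseline: {baseline:,} physical qubits")
print(f"improved: {improved:,} physical qubits")
print(f"reduction factor: {reduction:g}x")
```

Because the total scales linearly with the per-qubit overhead, any factor saved in the encoding carries over directly to the size of the whole machine.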

This reduction in physical qubit requirements has direct implications for the cost, complexity, and power consumption of future quantum computers. Building and maintaining quantum hardware is exceptionally expensive: depending on the platform, it can require cryogenic refrigeration, ultra-high-vacuum chambers, precisely stabilized lasers, and sophisticated control electronics. A substantial decrease in the number of qubits needed would make quantum computing more accessible and economically viable.

Reactions and Implications from the Scientific Community

Breakthroughs of this magnitude typically elicit significant interest and careful scrutiny from the broader quantum computing community. Leading researchers in quantum information science, experimental physicists working on various quantum computing platforms, and computer scientists exploring quantum algorithms can be expected to analyze the details of this new error correction technique closely.

If validated through independent replication and further experimentation, this development could lead to a reassessment of roadmaps for quantum computer development. Companies and research institutions heavily invested in building large-scale quantum computers would likely incorporate this more efficient error correction strategy into their future designs. It could also spur increased investment and research into atom-based quantum computing architectures.

Broader Impact and Future Applications

The ability to build fault-tolerant quantum computers more efficiently has far-reaching implications across numerous scientific and industrial sectors:

- Drug Discovery and Materials Science: Simulating molecular interactions with unprecedented accuracy could revolutionize the design of new pharmaceuticals and advanced materials with tailored properties.

- Financial Modeling: Complex financial simulations, risk analysis, and optimization problems could be tackled with greater speed and precision, leading to more robust financial markets.

- Artificial Intelligence: Quantum machine learning algorithms could unlock new capabilities in pattern recognition, data analysis, and optimization, potentially leading to more powerful AI systems.

- Cryptography: Large fault-tolerant quantum computers threaten widely used public-key encryption methods, since Shor’s algorithm can factor large numbers efficiently. This threat is also accelerating the development of quantum-resistant cryptography intended to protect future data.

- Scientific Research: Fundamental scientific questions in physics, chemistry, and biology that are currently beyond the reach of classical computation could be explored with greater depth.

This advancement in error correction is not merely an incremental improvement; it represents a potential paradigm shift in the path toward realizing the full promise of quantum computing. By reducing the immense qubit overhead, it brings the era of powerful, fault-tolerant quantum machines significantly closer to reality, opening up a new frontier of scientific discovery and technological innovation. The ongoing research in this area will be critical in determining the exact impact and timeline of this promising development.
