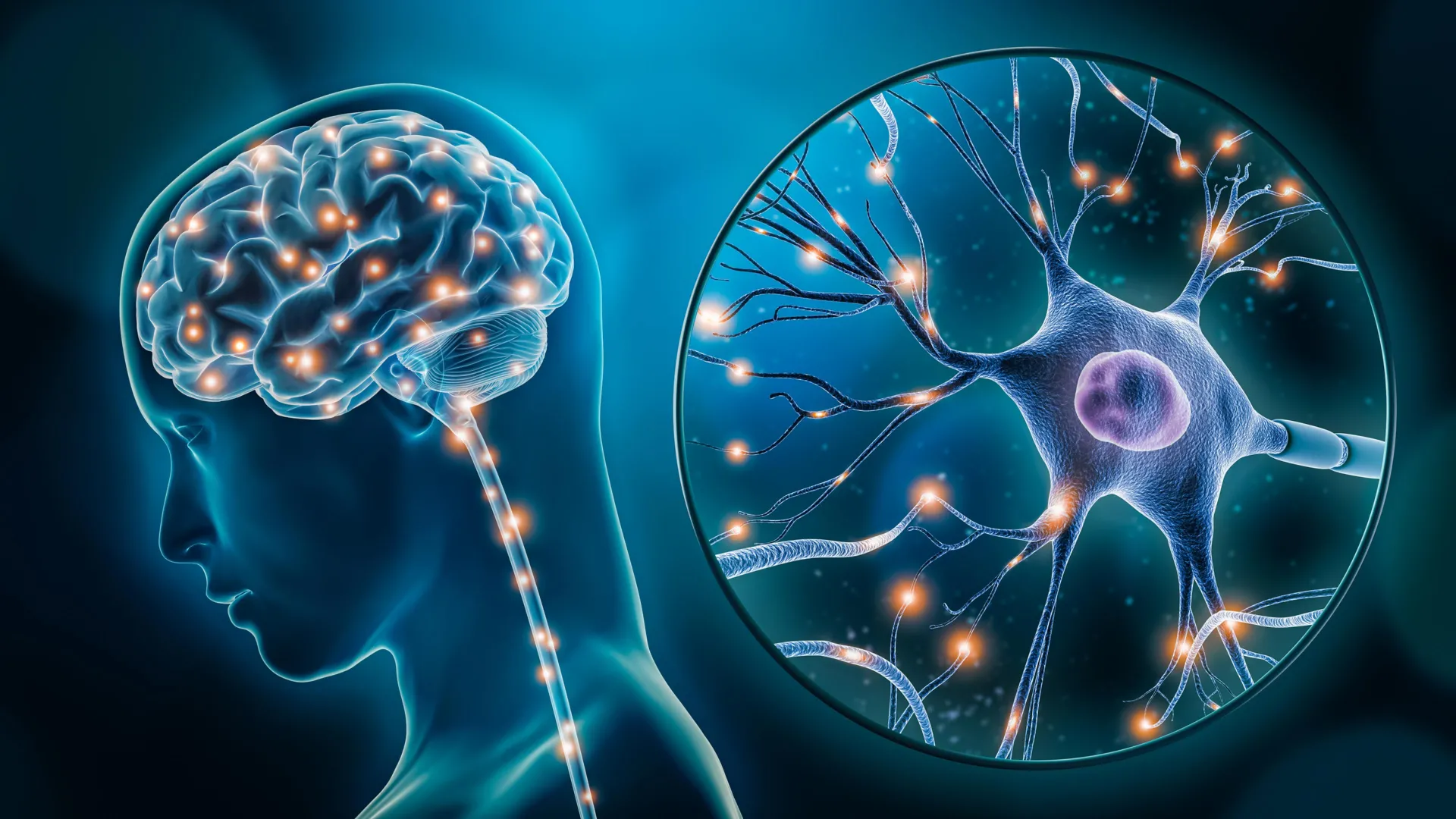

How people speak in daily life has long been studied as a clue to the health of their neurological systems. A study from Baycrest’s Rotman Research Institute, the University of Toronto, and York University now reports evidence that natural speech offers a powerful window into cognitive vitality. The researchers found that subtle features of conversation, specifically the frequency of pauses, the use of filler words such as "um" and "uh," and the speed of word retrieval, serve as markers of executive function. These findings, recently published in high-impact journals, suggest that speech timing and linguistic fluency are not merely personal stylistic choices but are closely tied to the brain’s ability to plan, focus, and manage complex mental tasks.

The Intersection of Linguistics and Cognitive Science

Executive function is a suite of higher-order mental processes that allow people to navigate the complexities of daily life. These include working memory, inhibitory control, and cognitive flexibility. When these functions begin to erode, often due to aging or the onset of neurodegenerative conditions such as Alzheimer’s disease or other forms of dementia, the impact is frequently seen first in one of the most demanding tasks the brain performs: spontaneous speech.

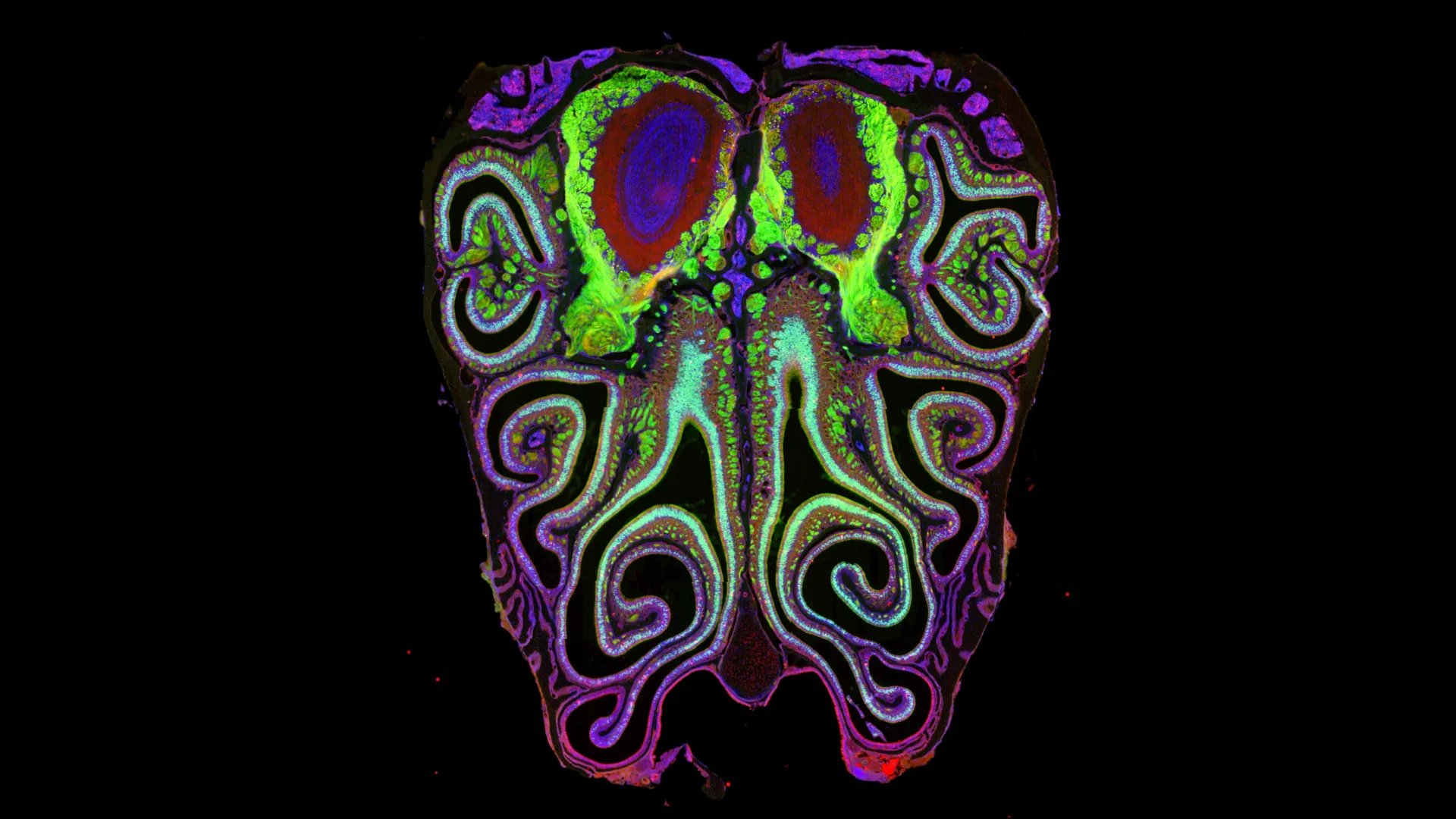

The research team, led by Dr. Jed Meltzer, a Senior Scientist at Baycrest’s Rotman Research Institute, sought to bridge the gap between abstract cognitive testing and real-world functional ability. By analyzing how participants described detailed images, the study moved beyond simple vocabulary tests to observe the brain "in action." The results confirmed that the timing of speech—how long one pauses before a noun or how many fillers are used to bridge a gap in thought—correlates significantly with the strength of an individual’s executive function.

Methodology and the Role of Artificial Intelligence

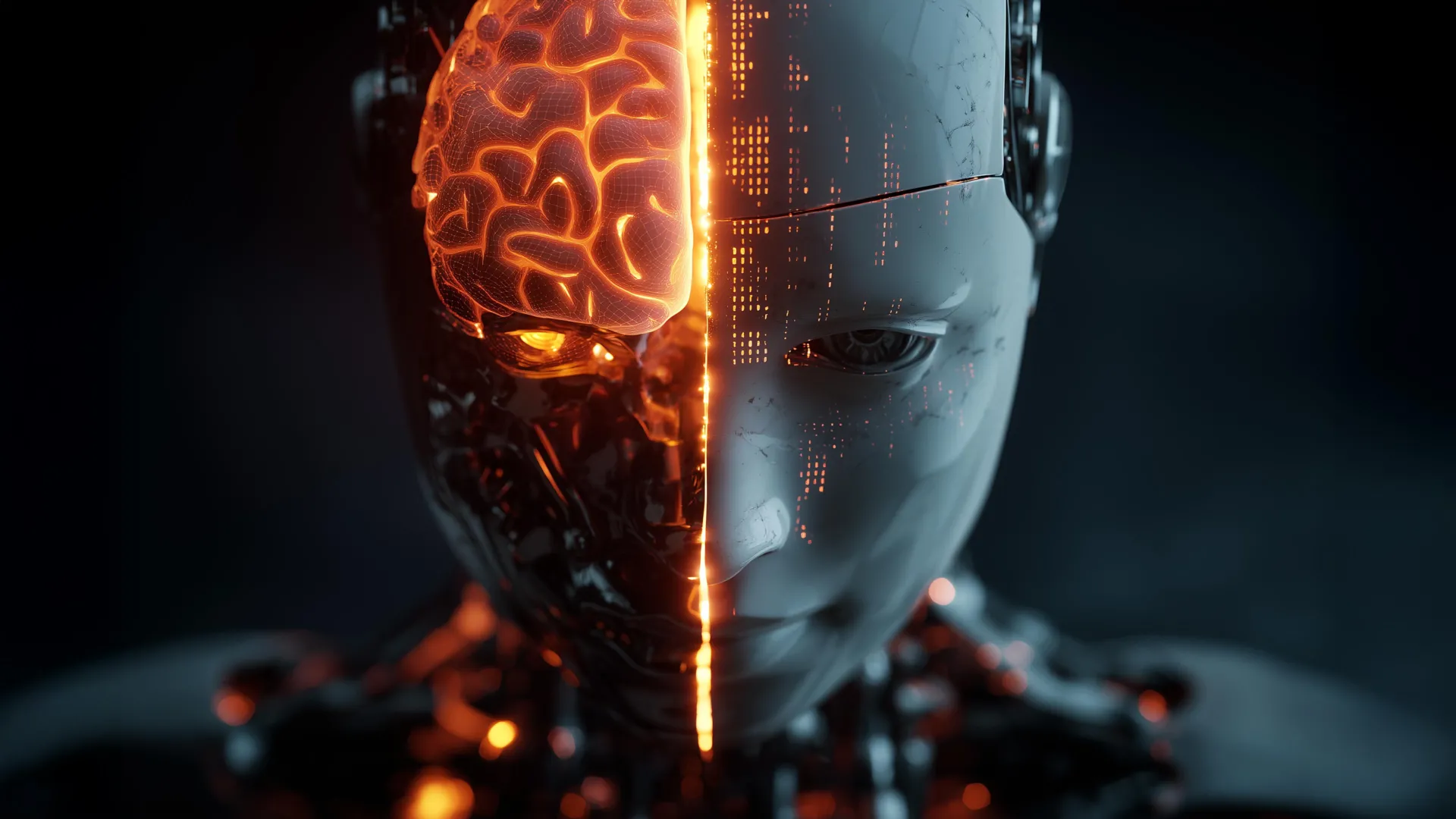

The study combined traditional neuropsychological assessment with computational linguistics. Participants were shown complex visual stimuli and asked to describe them aloud. The recordings were then processed with artificial intelligence (AI) algorithms designed to detect hundreds of acoustic and linguistic features, many of them imperceptible to the human ear.

The AI system focused on "temporal" features of speech. While a listener might notice a long pause, the AI could quantify the duration of every silence to the millisecond, the ratio of speech to pause, and the placement of filler words. These measurements were then cross-referenced with the participants’ scores on standardized executive function tests. The correlation remained robust even after the researchers accounted for demographic variables including age, biological sex, and years of formal education. This suggests that speech timing carries information about executive function that those demographic factors alone do not explain.
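To make the idea of "temporal" features concrete, here is a minimal Python sketch of how pause durations, a speech-to-pause ratio, and a filler rate could be computed from time-aligned words. It is not the study's pipeline. The `Word` structure, the `temporal_features` function, the 0.25-second silence threshold, and the sample timings are all assumptions made for illustration, and the word timestamps are assumed to come from some upstream speech recognizer or forced aligner.

```python
from dataclasses import dataclass

FILLERS = {"um", "uh"}   # the two fillers named in the article
MIN_PAUSE_S = 0.25       # assumed silence threshold, not taken from the study


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the recording
    end: float    # seconds from the start of the recording


def temporal_features(words: list[Word]) -> dict[str, float]:
    """Compute simple timing features from time-aligned words."""
    if not words:
        raise ValueError("need at least one word")
    # A pause is any gap between consecutive words at or above the threshold.
    gaps = [b.start - a.end for a, b in zip(words, words[1:])]
    pauses = [g for g in gaps if g >= MIN_PAUSE_S]
    total = words[-1].end - words[0].start
    pause_time = sum(pauses)
    # Gaps shorter than the threshold are counted as part of speech time.
    speech_time = total - pause_time
    fillers = sum(1 for w in words if w.text.lower() in FILLERS)
    lexical = len(words) - fillers
    return {
        "pause_count": len(pauses),
        "mean_pause_s": pause_time / len(pauses) if pauses else 0.0,
        "speech_to_pause_ratio": (
            speech_time / pause_time if pause_time else float("inf")
        ),
        "filler_rate": fillers / len(words),
        "words_per_minute": 60.0 * lexical / total if total > 0 else 0.0,
    }


# Hypothetical sample: "the um (long silence) dog runs"
sample = [
    Word("the", 0.0, 0.2),
    Word("um", 0.3, 0.6),
    Word("dog", 1.1, 1.5),
    Word("runs", 1.6, 2.0),
]
print(temporal_features(sample))
```

In this toy sample, only the half-second silence before "dog" counts as a pause. The shorter gaps fall below the assumed threshold and are treated as ordinary articulation.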

A New Timeline for Cognitive Assessment

Historically, the assessment of cognitive decline has relied on periodic visits to clinical settings where patients undergo a battery of "pen-and-paper" tests, such as the Montreal Cognitive Assessment (MoCA) or the Mini-Mental State Exam (MMSE). While effective, these methods have inherent limitations. They are often stressful for the patient, time-consuming for the clinician, and subject to the "practice effect," where patients perform better on subsequent tests simply because they have memorized the format.

The shift toward natural speech analysis represents a significant evolution in the timeline of geriatric care. By moving diagnostics from the clinic to the living room, researchers are opening the door to continuous, longitudinal monitoring. The chronology of this research field shows a clear trajectory:

- Late 20th Century: Early observations of "word-finding difficulties" in dementia patients.

- Early 2000s: Development of digital recording tools for linguistic research.

- 2010–2020: The rise of machine learning and Natural Language Processing (NLP) to analyze large datasets.

- 2024: The validation of speech timing as a primary biomarker for executive function, as evidenced by the Baycrest and University of Toronto study.

Supporting Data: Speech Speed and Cognitive Resilience

This study builds on earlier findings, including a notable 2024 study by Wei et al., which reported that older adults who maintain a faster rate of speech tend to show higher levels of cognitive resilience. The data suggest that processing speed, the rate at which the brain can take in, interpret, and respond to information, is reflected in how quickly a person speaks.

In the current study, the researchers found that it was not just the speed of talking, but the efficiency of the pauses that mattered. Individuals with high executive function used pauses strategically or minimally, whereas those with lower scores exhibited "non-fluent" pauses—silences that occurred in the middle of phrases or before simple words, indicating a struggle in the brain’s retrieval mechanism. This data point is crucial because it differentiates between a person who is a "slow talker" by nature and a person whose speech is being interrupted by cognitive "bottlenecks."
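The difference between a naturally slow talker and a speaker whose pauses fall in the wrong places can be illustrated with a small Python sketch. It is only an illustration, not the researchers' method. The `Pause` structure, the `at_phrase_boundary` flag (assumed to come from an upstream parser or human annotator), and the two example speakers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pause:
    duration_s: float
    at_phrase_boundary: bool  # assumed to be supplied by a parser or annotator


def mid_phrase_pause_share(pauses: list[Pause]) -> float:
    """Fraction of total pause time that falls inside phrases."""
    total = sum(p.duration_s for p in pauses)
    if total == 0:
        return 0.0
    inside = sum(p.duration_s for p in pauses if not p.at_phrase_boundary)
    return inside / total


# Two hypothetical speakers with the same total pause time (2.0 seconds).
deliberate = [Pause(1.0, True), Pause(0.75, True), Pause(0.25, False)]
disrupted = [Pause(0.5, True), Pause(0.75, False), Pause(0.75, False)]

print(mid_phrase_pause_share(deliberate))  # 0.125
print(mid_phrase_pause_share(disrupted))   # 0.75
```

Both speakers are silent for the same total time, so a measure based on speed alone would treat them as identical. The share of pause time that interrupts a phrase is what separates them.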

Expert Perspectives and Institutional Reactions

"The message is clear: speech timing is more than just a matter of style, it’s a sensitive indicator of brain health," stated Dr. Jed Meltzer. His perspective is echoed by many in the neurology community who view digital biomarkers as the future of preventative medicine.

Neurologists not involved in the study have noted that these findings could revolutionize how general practitioners screen for early-stage cognitive impairment. If a brief, two-minute speech sample can be analyzed by software to provide a "cognitive score," it could become a standard part of annual physical exams for seniors. This would allow for much earlier detection of decline than is currently possible with traditional methods, which often only catch impairment once it has begun to interfere with activities of daily living.

The Impending Crisis of Dementia and the Need for Early Detection

The urgency of this research is underscored by global health statistics. According to the World Health Organization (WHO), more than 55 million people worldwide are currently living with dementia, and this number is projected to rise to 139 million by 2050 as the global population ages. The economic burden is equally staggering, with costs associated with dementia care exceeding $1.3 trillion annually.

Early detection is the "holy grail" of dementia research. Most current treatments and lifestyle interventions, such as cardiovascular management and cognitive training, work best when started in the "prodromal" or preclinical stage, the period before significant brain damage has occurred. Dr. Meltzer emphasizes that because dementia involves the progressive degeneration of the brain, the ability to slow this decline depends entirely on identifying at-risk individuals as early as possible. Natural speech analysis offers a non-invasive, low-cost, and highly scalable way to do exactly that.

Analysis of Implications: From Clinic to Home

The implications of using AI-driven speech analysis extend far beyond the research lab. In the near future, we may see the integration of these tools into everyday technology. Smartphones and smart-home assistants could, with user consent, monitor speech patterns over months or years, alerting families or physicians to subtle changes that might indicate a need for medical follow-up.
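One simple way such long-term monitoring might work is to compare a person's recent speech measurements against their own earlier baseline. The Python sketch below shows that idea under stated assumptions: the function names, the monthly mean-pause values, and the threshold of two standard deviations are hypothetical choices for illustration, not parameters from the study or from any existing product.

```python
from statistics import mean, stdev


def drift_z_score(baseline: list[float], recent: list[float]) -> float:
    """How far the recent average sits from the baseline, in baseline SDs."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation")
    return (mean(recent) - mean(baseline)) / spread


def needs_follow_up(
    baseline: list[float], recent: list[float], threshold: float = 2.0
) -> bool:
    """Flag when recent pauses are longer than baseline by the threshold or more."""
    return drift_z_score(baseline, recent) >= threshold


# Hypothetical monthly mean pause durations, in seconds, for one person.
baseline_months = [0.40, 0.42, 0.38, 0.41, 0.39]

print(needs_follow_up(baseline_months, [0.41, 0.40]))  # False
print(needs_follow_up(baseline_months, [0.45, 0.47]))  # True
```

Comparing each person with their own history, rather than with a population average, is one way to avoid flagging someone who has always spoken slowly. A real system would also need to handle noisy recordings, illness, fatigue, and consent.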

However, this technological leap also raises questions of privacy and ethics. The use of "unobtrusive" monitoring must be balanced against the patient’s right to privacy and the potential for "diagnostic anxiety." Nevertheless, the clinical benefits are hard to ignore. For patients in rural or underserved areas who lack access to specialized neurology clinics, a speech-based app could serve as a vital link to early intervention.

Future Research and the Path Forward

While the findings from Baycrest, the University of Toronto, and York University are robust, the researchers acknowledge that the journey is far from over. Future studies will need to be longitudinal, following participants over decades to see how speech changes precede the formal diagnosis of Alzheimer’s. There is also a need to diversify the data to include different languages and dialects, ensuring that the AI models are accurate across different cultural and linguistic backgrounds.

Furthermore, the team suggests that speech analysis should not stand alone. When combined with other digital biomarkers—such as gait analysis, sleep tracking, and retinal scans—it could form a comprehensive, multi-modal profile of an individual’s neurological health.

The support for this research by the Mitacs Accelerate program and the Natural Sciences and Engineering Research Council of Canada (NSERC) highlights the importance of public-private partnerships in tackling the challenges of an aging population. As the global medical community shifts its focus from reactive treatment to proactive prevention, the way we speak may become our most important health record.

In summary, the study confirms that our daily conversations are rich with data about our internal mental state. The "ums" and "uhs" that we often ignore are, in fact, the brain’s way of signaling its workload. By harnessing AI to decode these signals, science is moving closer to a future where the earliest signs of brain disease are heard long before they are felt.
