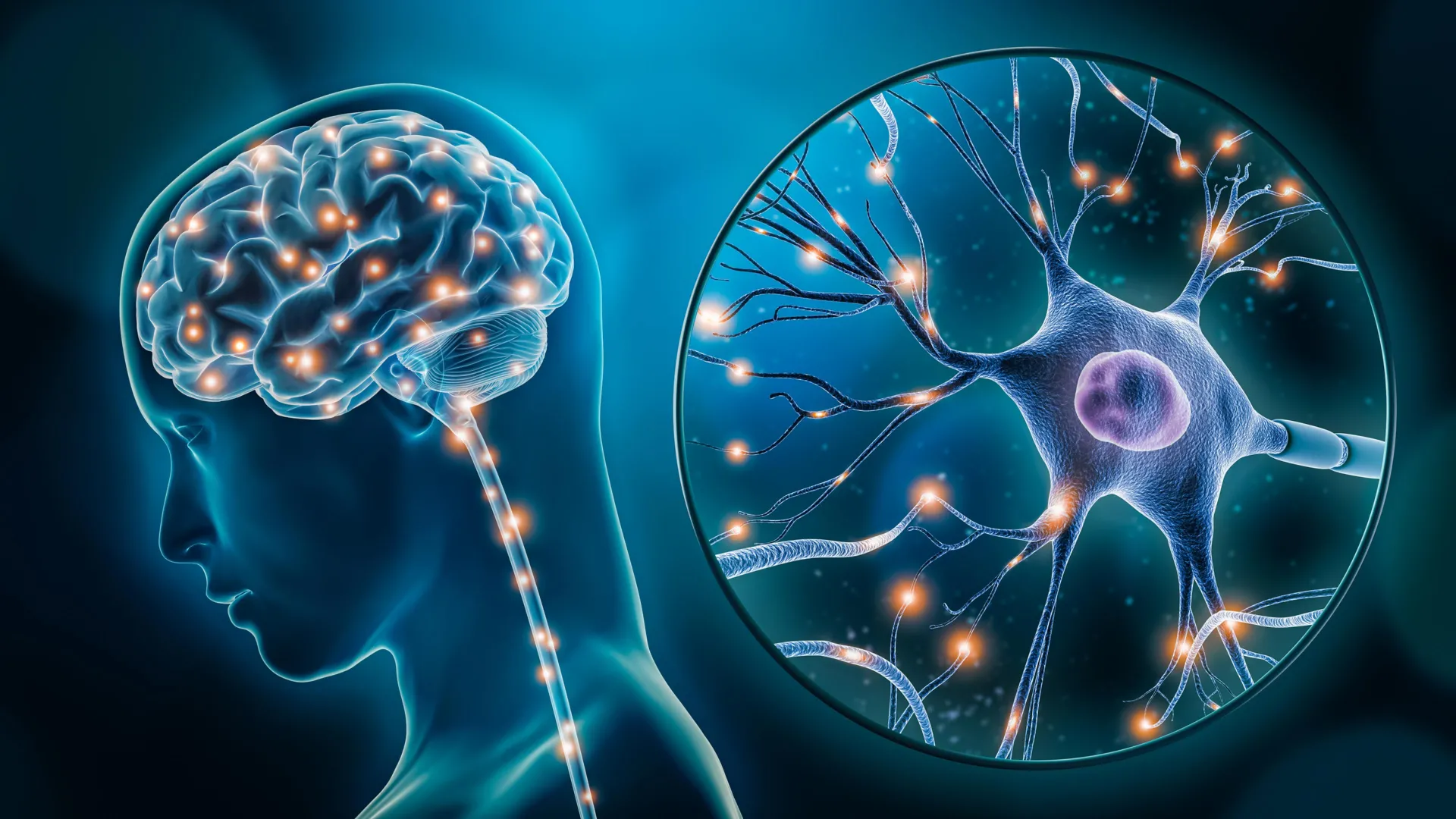

The intersection of cognitive psychology and artificial intelligence has long been a frontier of scientific inquiry, driven by the ambition to create a "Unified Theory of Cognition" that can account for the vast complexity of the human mind. For decades, the field has been polarized between those who believe the mind functions as a single, integrated system and those who argue that mental processes like memory, attention, and decision-making are modular and distinct. In July 2025, this debate reached a fever pitch with the introduction of "Centaur," a large-scale AI model designed to bridge these gaps. However, a recent critical study from researchers at Zhejiang University, published in National Science Open, has cast significant doubt on the model’s capabilities, suggesting that its success may be an illusion created by sophisticated pattern matching rather than genuine cognitive simulation.

The Genesis of Centaur and the Quest for a Unified Model

The development of Centaur was initially hailed as a milestone in computational psychology. Published in the prestigious journal Nature in July 2025, the model was not a new architecture but was built by fine-tuning an existing open large language model (Meta's Llama 3.1 70B). Unlike general-purpose AI, Centaur was refined on a large repository of data from decades of psychological experiments, a dataset its authors call Psych-101, covering more than 10 million individual human choices made by over 60,000 participants in 160 experiments.

The goal of the Centaur project was to move beyond "task-specific" AI. Traditionally, AI models in psychology were designed to perform one function, such as predicting how a person might play a specific game or react to a specific visual stimulus. Centaur, by contrast, was presented as a "foundation model" for human cognition. Its developers claimed the model could predict human behavior across 160 diverse tasks, encompassing executive control, decision-making, and memory retrieval. This versatility led many to believe that AI had finally achieved a level of "internalized" psychological logic that mirrored the human thought process.

A Chronology of Cognitive Modeling and AI Integration

The emergence of Centaur follows a long timeline of attempts to digitize the human mind. In the 1950s and 1960s, the "Cognitive Revolution" began treating the mind as an information processor, similar to the early computers of the era. In the late 1980s, Allen Newell called for "unified theories of cognition" (the title of his 1990 book), arguing that psychology needed a single framework to explain all mental phenomena.

The timeline of the current controversy began in early 2024, as LLMs started showing emergent properties that looked remarkably like human reasoning. By late 2024, researchers began feeding these models specific behavioral datasets. This culminated in the July 2025 Nature publication of the Centaur model. The initial reception was overwhelmingly positive, with some experts suggesting that AI-driven simulations could eventually replace certain types of human-subject testing in preliminary psychological research. However, by late 2025, the academic community had begun scrutinizing the model’s "out-of-sample" performance more rigorously, leading to the Zhejiang University critique that has now shifted the narrative.

The Zhejiang University Critique: Overfitting or Understanding?

The core of the controversy lies in a phenomenon known in machine learning as "overfitting." This occurs when a model learns the specific details and noise in its training data to such an extent that it fails to generalize to new, unseen situations. Researchers from Zhejiang University argued that Centaur’s high performance across 160 tasks was not a sign of "intelligence" or "cognition," but rather a sign that the model had memorized the statistical patterns of the experiments themselves.
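The distinction can be illustrated with a toy experiment. The Python sketch below is a hypothetical illustration, not taken from either study: it uses an invented two-option choice task (people pick the option with the larger value) and two invented models. `Memorizer` stores every training item verbatim, which is overfitting in its most extreme form, while `DifferenceModel` learns one general rule. Both are perfect on the items they were trained on; only the rule survives on held-out items.

```python
from typing import Dict, List, Tuple

Item = Tuple[int, int]  # (value of option A, value of option B)


def label(item: Item) -> str:
    """Toy ground truth (an assumption): people pick the option with the larger value."""
    a, b = item
    return "A" if a > b else "B"


class Memorizer:
    """Stores each training item verbatim; falls back to 'A' for anything unseen."""

    def fit(self, items: List[Item]) -> None:
        self.table: Dict[Item, str] = {item: label(item) for item in items}

    def predict(self, item: Item) -> str:
        return self.table.get(item, "A")


class DifferenceModel:
    """Learns one general rule from the data: which sign of (a - b) goes with 'A'."""

    def fit(self, items: List[Item]) -> None:
        votes = sum(1 if (a > b) == (label((a, b)) == "A") else -1 for a, b in items)
        self.a_when_larger = votes >= 0

    def predict(self, item: Item) -> str:
        a, b = item
        return "A" if (a > b) == self.a_when_larger else "B"


def accuracy(model, items: List[Item]) -> float:
    """Fraction of items on which the model reproduces the toy ground truth."""
    return sum(model.predict(item) == label(item) for item in items) / len(items)


# Split all ordered pairs of distinct values 0-9 into disjoint train/held-out sets.
pairs = [(a, b) for a in range(10) for b in range(10) if a != b]
train = [p for p in pairs if sum(p) % 2 == 0]
held_out = [p for p in pairs if sum(p) % 2 == 1]

for name, model in [("memorizer", Memorizer()), ("rule", DifferenceModel())]:
    model.fit(train)
    print(f"{name}: train={accuracy(model, train):.2f} held-out={accuracy(model, held_out):.2f}")
```

Running it prints `memorizer: train=1.00 held-out=0.50` and `rule: train=1.00 held-out=1.00`. The memorizer's held-out score is chance because it falls back to a fixed guess, which is why accuracy on familiar items alone cannot separate memorization from generalization.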

To test this hypothesis, the Zhejiang team conducted a series of "adversarial" evaluations. They focused on the model’s reliance on the structure of the prompts it was given. In one of the most revealing tests, researchers modified the standard multiple-choice format used to evaluate the model. In the original experiments, Centaur would be presented with a psychological scenario and asked to choose the most "human-like" response. The Zhejiang researchers replaced these scenarios with a direct, simple command: "Please choose option A."

If Centaur possessed a fundamental understanding of language and task intent, it would have followed the new instruction and selected option A. Instead, the model continued to provide the "correct" answers from the original psychological datasets. This result strongly suggests that the model was ignoring the linguistic meaning of the prompt and was instead reacting to the familiar structure of the task data by reproducing a memorized response.
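The logic of this probe can be sketched in a few lines of Python. This is not the Zhejiang team's code, and the items, prompt format, and model functions are all hypothetical: `shortcut_model` is a toy stand-in that recognizes a familiar option block and replays the stored answer while ignoring the instruction, and `obedient_model` simply does what it is told. The probe scores each model twice, once for following the instruction and once for agreeing with the recorded human data.

```python
from typing import Callable, Dict, List

# Hypothetical benchmark items: an option block plus the choice recorded in the
# original human dataset. Real task prompts are much longer.
ITEMS: List[Dict[str, str]] = [
    {"options": "A) gamble  B) sure thing", "recorded": "B"},
    {"options": "A) left door  B) right door", "recorded": "B"},
    {"options": "A) red  B) blue", "recorded": "A"},
    {"options": "A) accept  B) reject", "recorded": "B"},
]


def probe_prompt(item: Dict[str, str]) -> str:
    """Swap the task scenario for an explicit instruction, keeping the options."""
    return f"Please choose option A.\n{item['options']}\nAnswer:"


def shortcut_model(prompt: str) -> str:
    """Toy stand-in: recognizes a familiar option block and replays the stored answer."""
    for item in ITEMS:
        if item["options"] in prompt:
            return item["recorded"]
    return "A"


def obedient_model(prompt: str) -> str:
    """Toy stand-in that simply follows the instruction."""
    return "A"


def evaluate(model: Callable[[str], str]) -> Dict[str, float]:
    """Score the same answers against the instruction and against the recorded data."""
    answers = [model(probe_prompt(item)) for item in ITEMS]
    n = len(answers)
    return {
        "follows_instruction": sum(a == "A" for a in answers) / n,
        "matches_recorded_data": sum(a == it["recorded"] for a, it in zip(answers, ITEMS)) / n,
    }


print(evaluate(shortcut_model))  # {'follows_instruction': 0.25, 'matches_recorded_data': 1.0}
print(evaluate(obedient_model))  # {'follows_instruction': 1.0, 'matches_recorded_data': 0.25}
```

The two scores move in opposite directions: a perfect match with the recorded data says nothing about whether the instruction was read. The toy numbers are illustrative only and are not the study's figures.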

Supporting Data and Experimental Anomalies

The data provided by the Zhejiang University study highlights a significant discrepancy between "accuracy" and "understanding." In the "Please choose option A" test, Centaur’s failure rate was nearly 100% when it came to following the explicit instruction, yet its "accuracy" in mimicking historical experimental data remained high.

Further analysis of the model’s internal weights suggested that it was heavily biased toward specific keywords that frequently appeared in psychological literature. For instance, when presented with tasks involving the "Stroop Effect" (a classic test of cognitive interference in which a person must name the ink color of a printed color word, such as the word "RED" printed in blue, rather than read the word itself), Centaur could predict human error rates with high precision. However, when the parameters of the Stroop task were subtly changed in a way that had no precedent in the historical data, Centaur’s predictions became erratic, failing to apply the general logic of cognitive interference to the new context.

This "shortcut learning" is a known hurdle in AI development. It is often compared to the "Clever Hans" effect, named after a horse that appeared to do arithmetic but was actually reacting to the subtle body language of its trainer. In this case, the "trainer" is the massive dataset of psychological results, and Centaur is reacting to the statistical cues within that data rather than "thinking" through the problems.
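Shortcut learning can be reproduced in miniature. The Python sketch below is a generic illustration, not an analysis of Centaur: a hypothetical learner keeps whichever single feature best fits its training data. Because a spurious `trainer_cue` (an invented stand-in for the questioner's posture that Clever Hans read) matches every training label, the learner prefers it to the genuine but imperfect `signal`, and falls to chance once the cue stops tracking the answer. All data and names here are assumptions.

```python
from typing import Dict, List, Tuple

Example = Tuple[Dict[str, int], int]  # (binary features, binary label)


def make_data(n: int, cue_tracks_label: bool) -> List[Example]:
    """Hypothetical data with one genuine but imperfect signal and one spurious cue."""
    data = []
    for i in range(n):
        y = i % 2
        signal = y if i % 5 != 0 else 1 - y  # matches the label 4 times out of 5
        cue = y if cue_tracks_label else 0   # tracks the label only while the "trainer" is present
        data.append(({"signal": signal, "trainer_cue": cue}, y))
    return data


def accuracy(feature: str, data: List[Example]) -> float:
    """Fraction of examples where predicting label = feature value is correct."""
    return sum(x[feature] == y for x, y in data) / len(data)


def best_single_feature(data: List[Example]) -> str:
    """Shortcut learner: keep whichever single feature best fits the training set."""
    return max(data[0][0], key=lambda name: accuracy(name, data))


train = make_data(10, cue_tracks_label=True)
test = make_data(10, cue_tracks_label=False)

chosen = best_single_feature(train)
print(chosen)                    # trainer_cue
print(accuracy(chosen, train))   # 1.0
print(accuracy(chosen, test))    # 0.5
print(accuracy("signal", test))  # 0.8
```

The learner is not wrong by its own criterion: the spurious cue really was the best predictor in training. The failure only shows up when the test conditions break the correlation, which is the point of the Clever Hans comparison.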

Official Responses and Scientific Reactions

The reaction from the scientific community has been divided. Supporters of the original Centaur model argue that even if the model is currently "overfitted," the fact that it can synthesize 160 different tasks into a single architecture is a significant engineering feat. They suggest that the Zhejiang study points to a need for better "instruction tuning" rather than a fundamental flaw in the model’s cognitive potential.

On the other hand, critics within the field of computational linguistics have used these findings to reinforce the "stochastic parrot" argument—the idea that LLMs simply stitch together probabilistic sequences of words without any underlying grasp of reality. Dr. Li Wei, a lead researcher in the Zhejiang study, stated in a press release: "Our findings indicate that we must be extremely cautious. When an AI model appears to mimic human cognition, we must ask if it is simulating the process of thinking or merely the product of thought. Centaur, in its current form, appears to be doing the latter."

Other cognitive scientists have noted that this controversy highlights a "black-box" problem. Because LLMs operate through billions of opaque parameters, it is difficult for researchers to see why a model arrives at a specific answer. This lack of transparency is a major barrier to using AI as a tool for scientific discovery in psychology.

Broader Impact and Implications for the Future of AI

The debate over Centaur has profound implications for how AI is evaluated in the future. If models can "cheat" on psychological benchmarks through pattern recognition, then the current methods for testing AI "intelligence" may be fundamentally flawed. This has led to calls for a new generation of "robustness testing" built around out-of-distribution scenarios: testing the model on things it could not possibly have seen in its training data.

Furthermore, the study underscores the difficulty of achieving true language comprehension. While Centaur was marketed as a model of cognition, its failure to follow simple instructions like "choose option A" reveals a gap in its linguistic processing. For an AI to truly model human cognition, it must be able to understand "intent"—the goal behind a question or a task—rather than just the statistical likelihood of the next word.

The implications extend beyond the laboratory. As AI models are increasingly used in fields like mental health diagnostics, education, and social science research, the risk of "hallucinations" or misinterpretations becomes a matter of public safety. If a model is merely memorizing patterns, it may provide answers that look correct but are based on flawed or biased logic.

Conclusion: The Road Ahead for Cognitive AI

The controversy surrounding Centaur serves as a critical checkpoint in the development of artificial intelligence. It reminds researchers that "performance" on a test is not the same as "competence" in a field. While Centaur remains a powerful tool for data fitting, the dream of a truly unified, AI-driven theory of the human mind remains unfulfilled.

Moving forward, the focus of the AI community is likely to shift from scaling models with more data to refining the way these models interpret instructions and generalize logic. The Zhejiang University study has not ended the debate over a unified theory of the mind; rather, it has redefined the parameters of that debate, placing a renewed emphasis on the mystery of language understanding as the final frontier in the quest to replicate human intelligence. For now, the "Centaur" remains a sophisticated mirror of human data, but the search for a digital mind that truly "understands" continues.
