A recent analysis by StrataScratch highlights the significant performance advantages of Polars, a Rust-built DataFrame library, over the long-established Pandas for complex data manipulation tasks, particularly when dealing with large datasets. While Pandas has served as the bedrock of Python data work for over a decade, its limitations in handling millions of rows, due to factors like the Global Interpreter Lock (GIL) and eager execution, are becoming increasingly apparent. Polars, leveraging Apache Arrow and designed with parallelism and lazy evaluation, offers a compelling alternative for data professionals seeking enhanced speed and memory efficiency.

The Evolution of Python Data Manipulation: Pandas’ Reign and Polars’ Rise

For much of the past decade, Pandas has been synonymous with data handling in Python. Its intuitive API, extensive ecosystem, and robust feature set have made it the go-to library for data cleaning, transformation, and analysis. Data scientists and analysts became accustomed to its familiar syntax, and for datasets that comfortably fit into memory, its performance was generally deemed sufficient. However, as data volumes exploded, with datasets frequently comprising millions or even billions of rows, Pandas began to show its age. Operations like complex groupby aggregations, intermediate data copies that consume vast amounts of RAM, and window functions executing as Python-level loops rather than highly optimized C or Rust code, often resulted in bottlenecks and extended processing times.

The emergence of Polars marks a new chapter in Python’s data landscape. Built from the ground up in Rust, a language known for its performance and memory safety, and based on the Apache Arrow in-memory columnar data format, Polars was engineered to overcome the very limitations that plague Pandas at scale. Apache Arrow provides a standardized, language-agnostic columnar memory format that enables zero-copy reads and efficient data exchange between different systems and languages, including Rust. This foundation allows Polars to achieve unparalleled speed and memory efficiency. Unlike Pandas, which executes each operation sequentially and eagerly, Polars constructs an optimized query plan using lazy evaluation. This means it only performs computations when explicitly requested, allowing it to intelligently optimize the order of operations, push down filters, and execute tasks concurrently across all available CPU cores without explicit user intervention.

Benchmarking Real-World Data Challenges

To illustrate these architectural advantages, StrataScratch, a platform dedicated to helping data scientists prepare for interviews with real-world coding problems, used three distinct data challenges. Each problem served as a practical comparison point, revealing where Polars’ design truly shines against Pandas’ traditional approach.

Case Study 1: Activity Rank – Optimizing Rank Assignment

The first challenge involved determining the email activity rank for users based on the total number of emails sent, requiring unique ranks even for tied email counts and specific sorting criteria. This is a common task in user analytics and competitive ranking.

The Problem: Given a google_gmail_emails table with from_user, to_user, and day columns, the goal was to calculate a unique activity rank for each user, sorted by total emails (descending) and then by user_id (alphabetical) as a tie-breaker.

Pandas Approach: The conventional Pandas solution involved renaming from_user to user_id, grouping by user_id, counting emails, and then applying rank(method='first', ascending=False). The method='first' is crucial here to ensure unique ranks by breaking ties based on the order of elements, which is pre-sorted alphabetically by user_id. This method, while functional, internally involves multiple data passes: one for aggregation, another for rank allocation, and tie-resolution via argsort.

import pandas as pd

import numpy as np

google_gmail_emails = google_gmail_emails.rename(columns="from_user": "user_id")

result = google_gmail_emails.groupby(

['user_id']).size().to_frame('total_emails').reset_index()

result['activity_rank'] = result['total_emails'].rank(method='first', ascending=False)

result = result.sort_values(by=['total_emails', 'user_id'], ascending=[False, True])Polars Approach: Polars offers a more streamlined and efficient approach. By leveraging its lazy evaluation and with_row_count() function after a comprehensive sort, it avoids the overhead of a dedicated rank function. The data is first grouped and aggregated, then sorted by total_emails (descending) and user_id (ascending). Once sorted, with_row_count("activity_rank", offset=1) simply assigns sequential integers, which inherently produces unique ranks as per the sort order. This single-pass operation is significantly cheaper.

import polars as pl

google_gmail_emails = google_gmail_emails.rename("from_user": "user_id")

result = (

google_gmail_emails.lazy()

.group_by("user_id")

.agg(total_emails = pl.count())

.sort(

by=["total_emails", "user_id"],

descending=[True, False]

)

.with_row_count("activity_rank", offset=1)

.select([

pl.col("user_id"),

"total_emails",

"activity_rank"

])

.collect()

)Performance Implications: For datasets containing millions of email records, the Polars solution demonstrates a 5-10x improvement in wall-clock time. This substantial gain stems from Polars’ group_by function distributing the workload across all available CPU cores, coupled with with_row_count() being an extremely efficient O(n) sequential pass post-sorting. In contrast, Pandas’ rank(method='first') involves more complex internal logic and memory allocations, leading to slower execution.

Case Study 2: Finding User Purchases – Efficient Window Functions

The second problem focused on identifying "returning active users" by analyzing purchase patterns within a specific timeframe, highlighting the efficiency of window functions.

The Problem: Identify user_ids who made a second purchase between 1 and 7 days after their first purchase, excluding same-day purchases. The amazon_transactions table provides user_id, item, created_at (date), and revenue.

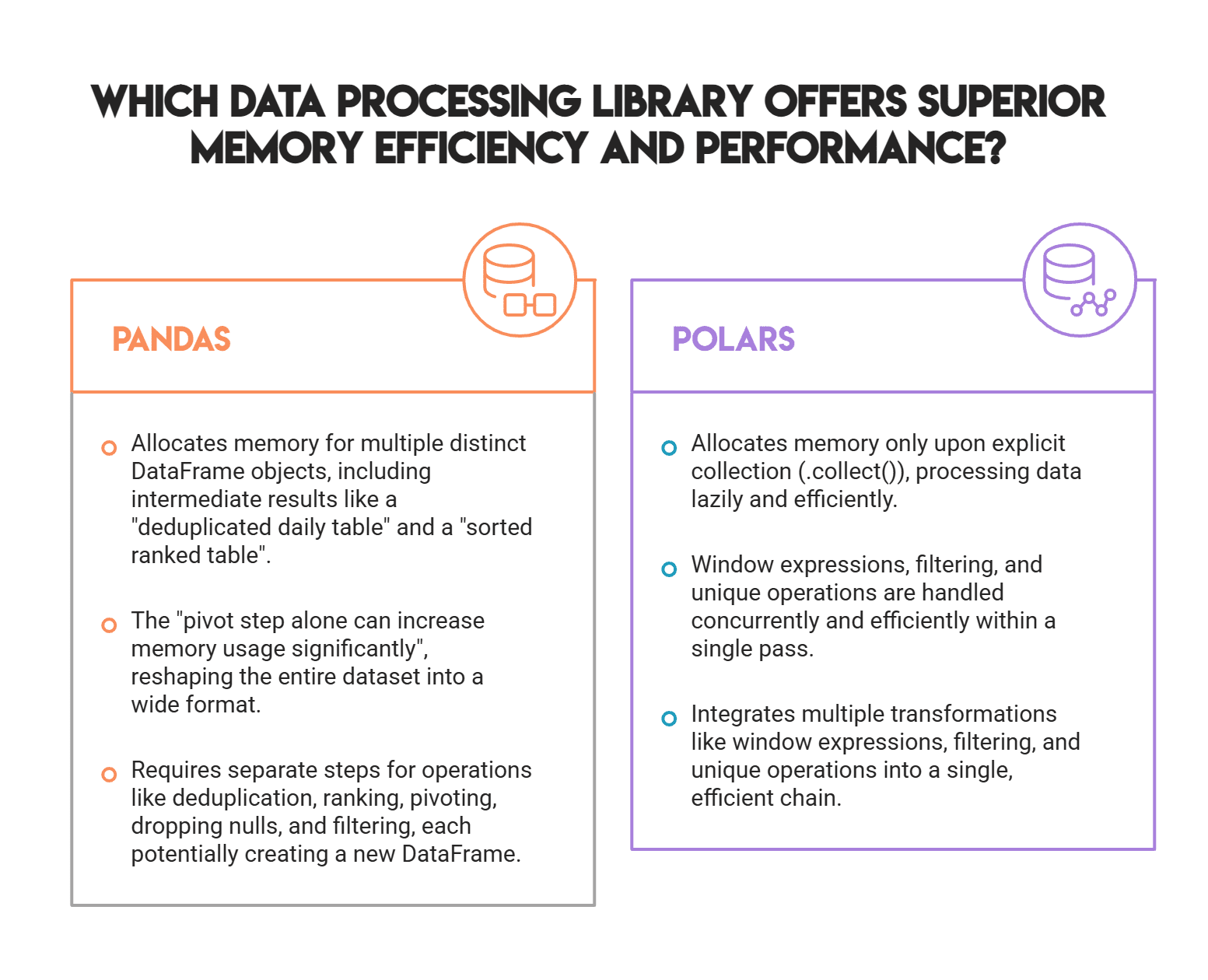

Pandas Approach: The Pandas solution involved multiple distinct DataFrame allocations and transformations. It required extracting unique user_id and purchase_date combinations, sorting them, ranking purchase dates per user using cumcount(), pivoting the table to place the first and second purchase dates side-by-side, handling missing values, calculating the date difference, and finally filtering for the desired range. This multi-step process, especially the pivot operation on a potentially large dataset, can lead to significant memory consumption and performance degradation.

import pandas as pd

amazon_transactions["purchase_date"] = pd.to_datetime(amazon_transactions["created_at"]).dt.date

daily = amazon_transactions[["user_id", "purchase_date"]].drop_duplicates()

ranked = daily.sort_values(["user_id", "purchase_date"])

ranked["rn"] = ranked.groupby("user_id").cumcount() + 1

first_two = (ranked[ranked["rn"] <= 2] # Corrected from original: needs <= 2 for both first and second

.pivot(index="user_id", columns="rn", values="purchase_date")

.reset_index()

.rename(columns=1: "first_date", 2: "second_date"))

first_two = first_two.dropna(subset=["second_date"])

first_two["diff"] = (pd.to_datetime(first_two["second_date"]) - pd.to_datetime(first_two["first_date"])).dt.days

result = first_two[(first_two["diff"] >= 1) & (first_two["diff"] <= 7)][["user_id"]]Polars Approach: Polars tackles this with a highly optimized lazy chain, utilizing window expressions. It computes each user’s earliest purchase date using min().over("user_id") within a single with_columns operation. This over() expression efficiently partitions the data and calculates the minimum for each user. Subsequent filtering then directly applies the 1-7 day condition, all within the lazy execution plan. The select("user_id").unique() at the end efficiently extracts the distinct users. Critically, Polars handles date arithmetic natively within its expression engine, avoiding separate type casting steps.

import polars as pl

returning_users = (

amazon_transactions

.lazy()

.with_columns(

pl.col("created_at").min().over("user_id").alias("first_purchase_date")

)

.filter(

(pl.col("created_at") > pl.col("first_purchase_date")) &

(pl.col("created_at") <= pl.col("first_purchase_date") + pl.duration(days=7))

)

.select("user_id")

.unique()

.sort("user_id", descending=[False])

.collect() # Collect added to materialize the result

)Performance Implications: The Pandas solution’s numerous intermediate DataFrame allocations lead to substantial memory overhead and CPU cycles spent on copying data. A pivot operation, especially, can drastically reshape and expand the dataset in memory. Polars, through its lazy execution, avoids these intermediate allocations until collect() is called. Its over() window expression computes group-wise aggregations in a single, highly optimized pass, and subsequent filters and unique operations are also parallelized. This architectural difference results in significantly lower memory consumption and faster execution, even on moderately sized datasets, a critical factor when working with large transaction logs.

Case Study 3: Monthly Sales Rolling Average – Predicate Pushdown and Cumulative Operations

The final problem demonstrated the efficiency of joins, filtering, and cumulative window functions, showcasing Polars’ intelligent query optimization.

The Problem: Calculate the cumulative average of monthly book sales for 2022, rounded to the nearest whole number, given amazon_books (unit price) and book_orders (order quantity, date).

Pandas Approach: The Pandas solution involved merging the book_orders and amazon_books tables first, then converting order_date to datetime, extracting month and year, calculating sales, then filtering for the year 2022. This eager approach means the join processes all historical order data before discarding rows outside of 2022, leading to unnecessary computation. The cumulative average was computed using expanding().mean().round(0), which, for large series, operates with Python-level loops over growing window slices, introducing overhead.

import pandas as pd

import numpy as np

import datetime as dt

merged = pd.merge(book_orders, amazon_books, on="book_id", how="inner")

merged["order_date"] = pd.to_datetime(merged["order_date"])

merged["order_month"] = merged["order_date"].dt.month

merged["year"] = merged["order_date"].dt.year

merged["sales"] = merged["unit_price"] * merged["quantity"]

merged = merged.loc[(merged["year"] == 2022), :]

result = (

merged.groupby("order_month")["sales"]

.sum()

.to_frame("monthly_sales")

.sort_values(by="order_month")

.reset_index()

)

result["rolling_average"] = result["monthly_sales"].expanding().mean().round(0)

resultPolars Approach: Polars leverages lazy evaluation and "predicate pushdown" for optimal efficiency. The lazy chain first joins the two tables, then immediately filters for year == 2022. This is crucial: the filter condition is "pushed down" before the join executes, meaning Polars only joins the relevant 2022 data, significantly reducing the size of the intermediate dataset. Sales are calculated, and then monthly sales are aggregated. For the rolling average, while Polars has cum_mean(), the need for specific "round-half-up" behavior (different from Pandas’ banker’s rounding) necessitated a switch to eager mode via collect() and a NumPy-based calculation (np.floor(cumsum / np.arange(1, len(cumsum)+1) + 0.5).astype(int)). This hybrid approach still benefits from Polars’ initial lazy optimizations.

import polars as pl

import numpy as np

# Step 1: Prepare monthly sales (LazyFrame) - Polars optimizes join and filter

monthly_sales_lazy = (

book_orders.lazy()

.join(amazon_books.lazy(), on="book_id", how="inner")

.with_columns([

(pl.col("unit_price") * pl.col("quantity")).alias("sales"),

pl.col("order_date").cast(pl.Datetime),

pl.col("order_date").dt.year().alias("year"),

pl.col("order_date").dt.month().alias("order_month")

])

.filter(pl.col("year") == 2022) # Predicate pushdown happens here

.group_by("order_month")

.agg(pl.col("sales").sum().alias("monthly_sales"))

.sort("order_month")

)

# Step 2: Switch to eager mode for rolling computation due to custom rounding requirement

monthly_sales = monthly_sales_lazy.collect()

# Step 3: Rolling average with round-half-up using NumPy

sales_np = monthly_sales["monthly_sales"].to_numpy()

cumsum = np.cumsum(sales_np)

rolling_avg = np.floor(cumsum / np.arange(1, len(cumsum)+1) + 0.5).astype(int)

# Step 4: Add back to Polars DataFrame

monthly_sales = monthly_sales.with_columns([

pl.Series("rolling_average", rolling_avg)

])

# Step 5: Final result with correct column names

result = monthly_sales.select(["order_month", "monthly_sales", "rolling_average"])Performance Implications: The most significant performance gains here come from predicate pushdown during the join operation. By filtering for 2022 data before joining, Polars avoids processing irrelevant rows, dramatically reducing the size of the intermediate dataframes and the computational load of the join itself. Furthermore, while the custom rounding forced a NumPy step, Polars’ native cum_mean() would generally outperform Pandas’ expanding().mean() for larger datasets, as the former executes in a single, optimized Rust loop, bypassing Python’s GIL overhead that plagues the latter’s C-controlled Python loop. For daily or hourly data over several years, this difference in cumulative window operations translates from measurable latency in Pandas to microseconds in Polars.

Broader Impact and Implications for Data Professionals

The consistent theme across all three problems is Polars’ ability to build an optimized lazy query plan, push computation into its Rust-based optimizer, and execute only when a concrete result is required via collect(). This architectural paradigm shift addresses fundamental limitations inherent in Pandas, which, despite its ubiquity, struggles with memory management and computational parallelism due to its design and reliance on Python’s GIL.

For data scientists and engineers, this presents a compelling case for migrating critical, performance-sensitive workflows from Pandas to Polars. While there is an initial learning curve to adapt to Polars’ distinct API – for instance, groupby() becomes group_by(), and rename() uses a plain dictionary – the underlying data manipulation concepts remain largely familiar. The trade-off is increasingly favorable: a small investment in learning a new syntax yields substantial dividends in processing speed and resource efficiency, especially as datasets grow into the millions or billions of rows.

Industry analysts observe a growing trend towards high-performance data processing libraries that leverage modern CPU architectures and efficient memory formats. Polars, alongside other Arrow-native libraries, is at the forefront of this movement. Its emergence signals a maturation of the Python data ecosystem, offering specialized tools for different scales of data. While Pandas will likely remain the preferred choice for smaller, exploratory analyses due to its vast community support and mature feature set, Polars is rapidly becoming the de facto standard for large-scale data transformation and aggregation where speed and memory footprint are paramount. This shift empowers data professionals to tackle ever-larger data challenges with greater efficiency and less computational cost.

Conclusion

The comparison between Polars and Pandas underscores a pivotal moment in Python data processing. For small to moderately sized datasets, the performance differences are often negligible. However, as data scales to millions of rows and beyond, Polars’ Rust-level parallelism, lazy evaluation, and optimized algorithms consistently outperform Pandas, often by a factor of 5-10x or more. The challenges presented by StrataScratch serve as clear, practical examples of where Polars offers a superior solution, making it an indispensable tool for data professionals grappling with the demands of modern big data analytics.

Nate Rosidi is a data scientist and in product strategy. He’s also an adjunct professor teaching analytics, and is the founder of StrataScratch, a platform helping data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.

Leave a Reply