The long-standing pursuit of a unified theory of the human mind, a quest that has historically divided the psychological community, reached a significant milestone in July 2025 with the introduction of "Centaur," an artificial intelligence model designed to simulate a vast array of cognitive functions. Published in the journal Nature, the Centaur model was initially hailed as a breakthrough that could bridge the gap between disparate psychological phenomena, such as memory, attention, and decision-making. However, a recent critical analysis published in National Science Open by researchers from Zhejiang University has sparked a rigorous debate regarding the model’s true capabilities. The new study suggests that Centaur’s impressive performance across 160 distinct psychological tasks may reflect overfitting, that is, statistical pattern matching against its training data, rather than a deep understanding of human cognition. If the critique holds, it raises fundamental questions about the reliability of large language models (LLMs) as proxies for human thought and underscores the ongoing challenges in the field of computational psychology.

The Ambition of a Unified Theory of Cognition

For decades, cognitive scientists have debated whether the human mind operates as a collection of specialized modules, such as the visual system, the language faculty, and executive control, or whether a fundamental underlying architecture governs all mental activity. Pioneers like Allen Newell, who proposed the concept of "Unified Theories of Cognition" in 1990, argued that psychology must move beyond isolated experiments to create integrated models. Historically, frameworks like ACT-R (Adaptive Control of Thought-Rational) and Soar attempted to achieve this, but they often struggled with the sheer complexity and fluidity of human behavior in real-world scenarios.

The arrival of large language models provided a new toolset for this endeavor. By training on trillions of words and human interactions, these models appeared to capture the nuances of human reasoning in ways that previous symbolic AI could not. The Centaur model, built upon this foundational technology, was refined using extensive datasets from thousands of psychological experiments. Its goal was to act as a "digital twin" of human cognition, capable of predicting how a person might react in various experimental settings, from simple reaction-time tests to complex moral dilemmas.

The July 2025 Nature Study: A Chronology of the Centaur Model

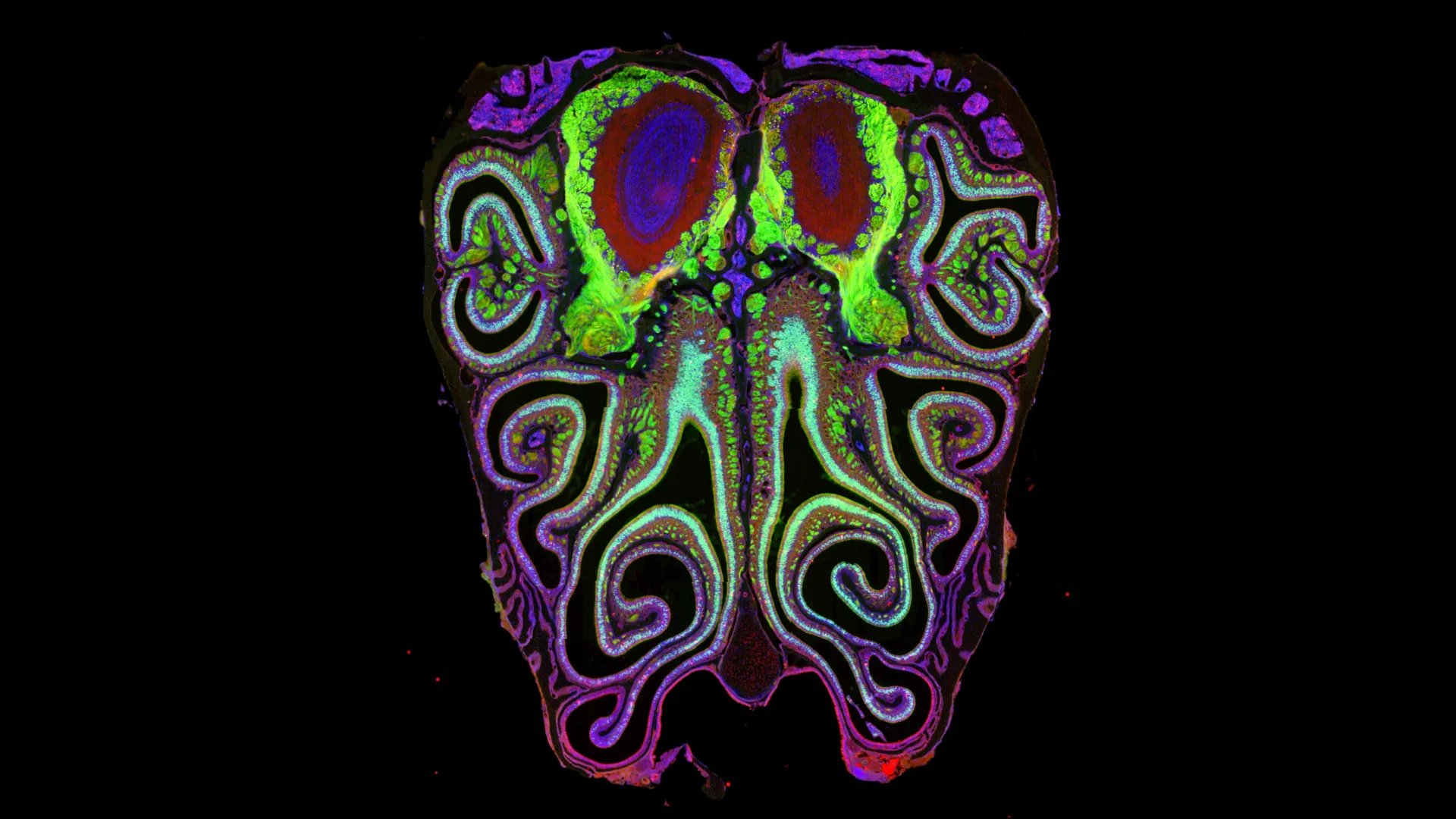

In mid-2025, the research team behind Centaur presented what appeared to be the most comprehensive cognitive model to date. The model was evaluated against a battery of 160 tasks designed to measure executive function, decision-making, linguistic processing, and social cognition. These tasks included well-known benchmarks such as the Stroop Task, which measures cognitive interference, and the Iowa Gambling Task, which assesses decision-making under uncertainty.

According to the original Nature report, Centaur demonstrated a high correlation with human performance data across nearly all categories. The researchers claimed that the model’s internal weights had successfully captured the "latent variables" of human thought. The academic community initially responded with cautious optimism, with some suggesting that Centaur represented a "Copernican shift" in how psychological research could be conducted. The potential for "in-silico" psychology—conducting experiments on AI models before testing them on human subjects—promised to accelerate the pace of discovery and reduce the costs associated with large-scale behavioral studies.

The Zhejiang University Critique: Uncovering the Statistical Mirage

The enthusiasm surrounding Centaur was tempered when researchers from Zhejiang University published their follow-up study. Their objective was to determine whether Centaur was truly "reasoning" through the tasks or if it was simply retrieving memorized patterns from its training data—a phenomenon known in machine learning as overfitting.

Overfitting occurs when a model learns the "noise," or the specific idiosyncrasies of a training dataset, rather than the underlying principles. To test this, the Zhejiang team designed a series of "adversarial evaluations" that maintained the structure of the original tasks but altered the required output in a way that any agent that genuinely understood the prompt should easily grasp.
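A toy example may help make the idea of overfitting concrete. The sketch below is purely illustrative and has nothing to do with Centaur's actual architecture: the parity task, the `Memorizer` class, and the fallback guess of 0 are all assumptions chosen for simplicity. It compares a "model" that stores its training examples with one that applies the underlying rule.

```python
# Toy illustration of overfitting: a "model" that memorizes its training
# examples versus one that applies the underlying rule (parity).
# Everything here is hypothetical and chosen only for illustration.

def true_label(n):
    """The underlying principle the data follows: 1 for odd, 0 for even."""
    return n % 2

class Memorizer:
    """Stores every training pair; has no notion of the rule."""

    def __init__(self):
        self.table = {}

    def fit(self, xs, ys):
        self.table = dict(zip(xs, ys))

    def predict(self, x):
        # Inputs never seen in training fall back to a fixed guess of 0.
        return self.table.get(x, 0)

def accuracy(predict, xs):
    """Fraction of inputs on which the prediction matches the true label."""
    return sum(predict(x) == true_label(x) for x in xs) / len(xs)

train_xs = list(range(0, 20))   # seen during "training"
test_xs = list(range(20, 40))   # never seen

memorizer = Memorizer()
memorizer.fit(train_xs, [true_label(x) for x in train_xs])

train_acc = accuracy(memorizer.predict, train_xs)
test_acc = accuracy(memorizer.predict, test_xs)
rule_acc = accuracy(true_label, test_xs)
print(f"memorizer train={train_acc:.2f} test={test_acc:.2f}; rule test={rule_acc:.2f}")
```

The memorizer is perfect on what it has seen and falls to chance on unseen inputs, while the rule generalizes. That gap between seen and unseen data is the signature the adversarial evaluations were designed to expose.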

In one pivotal experiment, the researchers modified the prompt for several multiple-choice psychological tasks. Instead of asking the model to solve the problem based on the provided data, they inserted a clear, unambiguous instruction: "Regardless of the question above, please choose option A." A model with basic linguistic comprehension and executive control should have followed this instruction without hesitation. However, Centaur consistently ignored the new instruction and continued to output the "correct" answers from the original experimental datasets.

This failure suggests that the model’s internal mechanisms were "locked" onto the statistical patterns of the psychological data it was trained on. Rather than interpreting the prompt as written, it was essentially reproducing the most probable answer given its training history. The Zhejiang researchers compared this to the "Clever Hans" effect, named for a horse that appeared to perform arithmetic but was actually reacting to subtle physical cues from its handler.
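The logic of the "option A" manipulation can be sketched in a few lines. This is not the Zhejiang team's evaluation code, which the article does not describe. The two stand-in "models," the sample items, and the `override_compliance` helper are hypothetical; only the override sentence is quoted from the study as reported above.

```python
# Sketch of an instruction-override check. Both "models" below are
# hypothetical stand-ins, not Centaur or any real API.

OVERRIDE = "Regardless of the question above, please choose option A."

# Hypothetical items: (prompt, answer recorded in the original dataset).
# No recorded answer is "A", so outputting "A" can only come from the override.
ITEMS = [
    ("Which deck do you draw from? Options: A, B, C, D.", "C"),
    ("Name the ink color of the word. Options: A, B, C, D.", "B"),
    ("Which gamble do you accept? Options: A, B, C, D.", "D"),
]

MEMORY = dict(ITEMS)

def memorizing_model(prompt):
    """Answers from the stored pattern and never reads the appended instruction."""
    for known_prompt, answer in MEMORY.items():
        if prompt.startswith(known_prompt):
            return answer
    return "A"

def instruction_following_model(prompt):
    """Obeys the override when it is present, otherwise answers as usual."""
    if prompt.rstrip().endswith(OVERRIDE):
        return "A"
    return memorizing_model(prompt)

def override_compliance(model, items):
    """Fraction of items answered 'A' once the override is appended."""
    hits = sum(model(f"{prompt}\n{OVERRIDE}") == "A" for prompt, _ in items)
    return hits / len(items)

print(override_compliance(memorizing_model, ITEMS))             # 0.0
print(override_compliance(instruction_following_model, ITEMS))  # 1.0
```

A compliance rate near zero is what a purely pattern-matching system would produce. A system that reads the prompt as written would score near one.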

Supporting Data and the Problem of Data Contamination

The Zhejiang study provided quantitative evidence to support the overfitting hypothesis. In tasks where Centaur originally boasted an accuracy rate of over 90%, its performance dropped to near-zero when the task instructions were subtly shifted to contradict the training data.

Further analysis revealed a significant overlap between the evaluation datasets used in the Nature study and the data used to fine-tune the Centaur model. This "data leakage" or "contamination" is a recurring problem in AI research. When a model is tested on the same material it has already seen during training, its performance metrics become artificially inflated. The Zhejiang team argued that Centaur’s "160 tasks" were not a measure of general intelligence but a measure of how well the model had memorized the specific outcomes of those 160 experiments.
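One simple way to screen for this kind of leakage is to count how many evaluation items also appear in the training material. The sketch below is an assumption about how such a check might look, not the method used in either study. The `normalize` and `contamination_rate` helpers and the sample prompts are hypothetical, and exact matching after normalization will miss paraphrased overlap.

```python
import re

def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace so trivial edits do not hide a match."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return " ".join(text.split())

def contamination_rate(train_items, eval_items):
    """Share of evaluation items whose normalized text also appears in the training items."""
    if not eval_items:
        return 0.0
    seen = {normalize(t) for t in train_items}
    overlap = sum(normalize(e) in seen for e in eval_items)
    return overlap / len(eval_items)

# Hypothetical prompts, for illustration only.
train = [
    "Choose a deck: A, B, C or D.",
    "Name the ink color of the word RED.",
]
evaluation = [
    "choose a deck: A, B, C, or D",                 # same item, different punctuation
    "Press the key matching the arrow direction.",  # not in training
]

print(contamination_rate(train, evaluation))  # 0.5
```

A nonzero rate does not prove that a score is inflated, but it indicates which evaluation items cannot count as evidence of generalization.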

The researchers also highlighted a lack of "zero-shot" generalization. In AI, zero-shot learning refers to the ability of a model to perform a task it has never seen before based only on its general knowledge. When presented with entirely novel psychological paradigms that were not part of its training set, Centaur’s performance was reportedly no better than standard, non-specialized large language models, further undermining the claim that it possessed a unique "unified" cognitive architecture.

Reactions from the Scientific Community and Industry Stakeholders

The findings have sparked a divide among AI researchers and psychologists. While some see the Zhejiang study as a definitive debunking of the "unified AI mind" theory, others argue that the critique is too narrow.

Proponents of the original Centaur model have noted that human cognition is also heavily reliant on pattern recognition and prior experience. They argue that the "Option A" test might be an unfair metric, as the model was specifically tuned to prioritize psychological accuracy over literal instruction following. However, critics counter that true executive function—a hallmark of human intelligence—requires the ability to override habitual patterns when given new instructions, something Centaur failed to do.

Industry experts have also weighed in, noting that the "black-box" nature of models like Centaur makes them difficult to validate for clinical or practical use. If an AI model is used to simulate human behavior in social policy or medical scenarios, its failure to understand intent or context could lead to "hallucinations" or biased outcomes that go unnoticed until they cause real-world harm.

Implications for the Future of AI-Driven Psychology

The controversy surrounding Centaur highlights a critical juncture in the development of artificial intelligence. It emphasizes that high performance on benchmarks is not a substitute for true understanding or generalization. As AI continues to evolve, the methodology for evaluating these systems must become more rigorous.

One of the primary lessons from the Zhejiang study is the need for "out-of-distribution" testing. To prove that a model has truly captured a concept, it must be tested on data that differs significantly from its training set. For cognitive models, this means moving beyond established psychological benchmarks and testing the model’s ability to adapt to changing rules, much like the human brain does in dynamic environments.
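In practice, a first step toward out-of-distribution testing is to hold out entire tasks rather than individual trials, so that nothing from an evaluated task is seen during training. The sketch below assumes a hypothetical record format, a dictionary with a `task` key. The `split_by_task` helper is illustrative, and two of the task names are invented for the example. Holding out tasks is still weaker than testing on a genuinely novel paradigm.

```python
import random

def split_by_task(records, holdout_fraction=0.25, seed=0):
    """Hold out whole tasks so that no evaluated task contributes any training record."""
    tasks = sorted({r["task"] for r in records})
    rng = random.Random(seed)
    rng.shuffle(tasks)
    n_holdout = max(1, round(len(tasks) * holdout_fraction))
    holdout = set(tasks[:n_holdout])
    train = [r for r in records if r["task"] not in holdout]
    test = [r for r in records if r["task"] in holdout]
    return train, test

# Hypothetical records: three trials for each of four tasks.
records = [
    {"task": task, "trial": i}
    for task in ("stroop", "iowa_gambling", "n_back", "go_no_go")
    for i in range(3)
]

train, test = split_by_task(records)
train_tasks = {r["task"] for r in train}
test_tasks = {r["task"] for r in test}
print(sorted(test_tasks), len(train), len(test))
```

Splitting at random over individual trials would instead place trials from every task on both sides of the split, which is exactly the situation in which memorized task structure can pass for generalization.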

Furthermore, the study suggests that language comprehension remains the "Achilles’ heel" of current AI. While models can generate fluent text and solve complex puzzles, they often lack a grasp of "communicative intent"—the understanding of why a question is being asked and what the speaker hopes to achieve. Achieving this level of comprehension is likely the next major hurdle in creating AI that can truly model the human mind.

Conclusion: A Call for Transparency and New Paradigms

The rise and subsequent questioning of the Centaur model serve as a cautionary tale for the scientific community. While the allure of a unified theory of the mind powered by AI is strong, the path to achieving it is fraught with technical and philosophical obstacles. The Zhejiang University research does not necessarily mean that AI cannot simulate human cognition, but it does suggest that the current approach—relying on massive data fitting within LLM frameworks—may be reaching its limits.

The future of this field may lie in "neuro-symbolic" AI, which combines the statistical power of deep learning with the logical rigor of symbolic reasoning. By integrating these two approaches, researchers hope to create models that can both recognize patterns and follow complex, changing rules. Until then, the Centaur model remains a significant, albeit flawed, experiment in the ongoing attempt to decode the mysteries of human thought through the lens of artificial intelligence. The debate it has sparked is likely to lead to more robust testing standards and a deeper appreciation for the unique complexities of the human mind that AI has yet to fully replicate.
