Google has introduced TurboQuant, a groundbreaking algorithmic suite and library poised to revolutionize the efficiency of large language models (LLMs) and vector search engines, critical components of modern retrieval-augmented generation (RAG) systems. This novel technology compresses cache memory to as little as 3 bits per stored value, without necessitating model retraining or sacrificing crucial accuracy, a significant leap forward in the quest for more sustainable and scalable AI.

The Urgent Need for AI Efficiency: Tackling Computational Bottlenecks

The exponential growth of large language models has brought about unprecedented capabilities, but also significant computational challenges. Modern LLMs, with their vast parameter counts and ever-expanding context windows, demand immense memory and processing power. A primary bottleneck in LLM inference is the management of the key-value (KV) cache. This "digital cheat sheet" stores the key and value tensors computed for every previous token, so the model can reuse them at each generation step instead of recomputing them. As context lengths grow, the KV cache scales linearly with the number of tokens, leading to soaring memory consumption, reduced throughput, and increased operational costs. This challenge is particularly acute in enterprise-level applications and RAG systems, where real-time, long-form interactions are paramount, and the integration of LLMs with high-dimensional vector search engines further compounds the memory demands.
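The linear scaling can be made concrete with a back-of-the-envelope estimate. The sketch below assumes a standard decoder-only transformer that caches one key tensor and one value tensor per layer; the function name and the model dimensions are illustrative assumptions, not figures from any specific model or from the TurboQuant library.

```python
def kv_cache_bytes(seq_len, num_layers, num_kv_heads, head_dim,
                   bits_per_value=16, batch_size=1):
    """Estimate KV cache size in bytes for a decoder-only transformer."""
    # Two tensors (keys and values) per layer, one vector per KV head per token.
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value / 8


# Hypothetical model dimensions, chosen only for illustration.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (4_096, 32_768):
    fp16 = kv_cache_bytes(seq_len, **config)
    three_bit = kv_cache_bytes(seq_len, bits_per_value=3, **config)
    print(f"{seq_len:>6} tokens: FP16 {fp16 / 2**20:8.1f} MiB, "
          f"3-bit {three_bit / 2**20:8.1f} MiB")
```

Doubling the sequence length doubles the estimate, and the bit width acts as a constant multiplier, which is why lowering it pays off most at long context lengths.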

Traditional vector quantization (VQ) techniques have been employed to mitigate these issues by reducing the size of text vectors. However, many existing methods often introduce their own set of problems, such as a "memory overhead" for storing quantization constants or the necessity of computing full-precision constants on small data blocks. These additional computational steps can partly undermine the very purpose of compression, making the search for truly efficient quantization a persistent industry goal. Furthermore, many early quantization efforts, while effective at reducing model size, often came at the cost of accuracy or required extensive fine-tuning and retraining, adding to development time and resource expenditure. The drive for a solution that offers extreme compression without these trade-offs has become a strategic imperative for leading AI developers.
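To see where that overhead comes from, consider a conventional block-wise scheme in which every block of values is stored alongside its own scale factor. The sketch below is a generic symmetric absmax quantizer written in plain Python, not the implementation of any particular library; the block sizes and the 16-bit scale width are assumptions made for illustration.

```python
def quantize_block(block, bits):
    """Symmetric absmax quantization of one block to signed integer codes."""
    qmax = 2 ** (bits - 1) - 1                         # e.g. 7 for 4-bit codes
    scale = max(abs(x) for x in block) / qmax or 1.0   # avoid a zero scale
    codes = [round(x / scale) for x in block]
    return codes, scale                                # the scale must be stored too


def dequantize_block(codes, scale):
    """Map integer codes back to approximate real values."""
    return [c * scale for c in codes]


def effective_bits(bits, block_size, scale_bits=16):
    """Bits per value once the per-block scale is counted."""
    return bits + scale_bits / block_size


block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
codes, scale = quantize_block(block, bits=4)
restored = dequantize_block(codes, scale)
max_err = max(abs(a - b) for a, b in zip(block, restored))

print(codes, round(scale, 4), round(max_err, 4))
print(effective_bits(bits=4, block_size=32))  # 4.5 bits per value
print(effective_bits(bits=3, block_size=16))  # 4.0 bits per value
```

With 16-value blocks, a nominal 3-bit format costs 4 bits per value once the scales are included, a third more than the headline figure. Smaller blocks improve accuracy but make this overhead worse.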

TurboQuant in Detail: A Two-Stage Breakthrough

TurboQuant distinguishes itself by optimally tackling the memory overhead issue through an innovative two-stage process, aided by two complementary techniques: Block-wise Quantization and Lossless Compression. At its core, TurboQuant eliminates the expensive data normalization typically required in traditional quantization approaches. Instead of pre-calculating and storing full-precision quantization constants for each block, TurboQuant dynamically adjusts the scale factor for each block on the fly. This adaptive approach ensures that each block of data is optimally quantized without the need for additional memory overhead for storing scaling factors, a critical design choice that contributes significantly to its efficiency.

The method focuses on quantizing the KV cache, which is often a major memory hog during inference. By performing quantization at the 3-bit level, TurboQuant dramatically shrinks the memory footprint of these critical components. The significance of 3-bit quantization cannot be overstated; while 8-bit or 4-bit quantization has become increasingly common, pushing down to 3 bits while maintaining accuracy represents a substantial engineering feat. This deep compression is achieved without requiring any retraining of the underlying language model, making it a drop-in solution for existing deployments. The result is a system that promises to unlock unprecedented levels of efficiency, allowing for larger context windows, faster inference, and reduced operational costs for AI applications.
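Because 3 bits do not align with byte boundaries, storing 3-bit codes compactly requires bit-packing: eight codes fit exactly into three bytes. The following is a minimal sketch of that idea in plain Python. It is a generic packing routine with hypothetical function names, not TurboQuant's actual storage layout, which the article does not describe.

```python
def pack_3bit(codes):
    """Pack integers in [0, 7] into bytes, 8 codes per 3 bytes."""
    acc, nbits, out = 0, 0, bytearray()
    for c in codes:
        if not 0 <= c <= 7:
            raise ValueError("3-bit codes must be in [0, 7]")
        acc |= c << nbits          # place the code above the pending bits
        nbits += 3
        while nbits >= 8:          # flush every complete byte
            out.append(acc & 0xFF)
            acc >>= 8
            nbits -= 8
    if nbits:                      # flush the final partial byte
        out.append(acc & 0xFF)
    return bytes(out)


def unpack_3bit(data, count):
    """Recover `count` 3-bit codes from packed bytes."""
    acc, nbits, codes = 0, 0, []
    for byte in data:
        acc |= byte << nbits
        nbits += 8
        while nbits >= 3 and len(codes) < count:
            codes.append(acc & 0b111)
            acc >>= 3
            nbits -= 3
    return codes


codes = [5, 0, 7, 3, 1, 6, 2, 4] * 16     # 128 values, e.g. one head vector
packed = pack_3bit(codes)
assert unpack_3bit(packed, len(codes)) == codes
print(len(packed), "bytes packed vs", len(codes) * 2, "bytes in FP16")
```

For a 128-value vector this yields 48 bytes instead of 256, the 16/3 ratio expected when moving from 16-bit to 3-bit storage.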

A Chronology of Compression: Paving the Way for TurboQuant

The journey towards efficient AI models has been a long and incremental one, with TurboQuant representing a significant milestone. Early efforts in model compression date back to the nascent stages of neural networks, with techniques like pruning and weight sharing emerging in the 1990s. These methods aimed to reduce model complexity and size but often struggled with maintaining performance.

The modern era of deep learning, characterized by massive models, reignited interest in compression. Initially, models were predominantly trained and deployed using 32-bit floating-point precision (FP32). As computational demands soared, a shift towards lower precision became inevitable. The introduction of 16-bit floating-point (FP16 or bfloat16) for training and inference marked a major step, offering a good balance between precision and computational efficiency, significantly reducing memory bandwidth requirements and accelerating computations on specialized hardware.

Following this, 8-bit integer quantization (INT8) gained prominence, particularly for inference tasks. Techniques like NVIDIA’s TensorRT and various post-training quantization (PTQ) methods allowed models to run with INT8 precision, often with minimal accuracy degradation and substantial speedups. However, moving below 8-bit, especially to 4-bit or even lower, presented considerable challenges. Maintaining model accuracy at such low bit depths typically required specialized training techniques like quantization-aware training (QAT), which added complexity and time to the development cycle. Moreover, the memory overhead associated with storing quantization parameters for fine-grained, block-wise quantization often negated some of the gains.

Google’s TurboQuant emerges from this historical context as a breakthrough precisely because it overcomes these long-standing hurdles. Launched recently, it builds upon years of research into efficient AI, offering a solution that pushes the boundaries of compression to 3 bits for KV cache while bypassing the need for retraining and maintaining accuracy. This positions TurboQuant not just as an incremental improvement but as a pivotal development in the ongoing timeline of AI efficiency, directly addressing the limitations that have plagued sub-8-bit quantization for years.

Benchmarking TurboQuant: Performance Beyond Local Constraints

To provide a tangible understanding of TurboQuant’s capabilities, Google has provided a practical Python code example for local evaluation. This benchmark, executable in environments like Google Colab with a T4 GPU, conceptually compares unquantized vectors against TurboQuant’s fast compression. The setup involves loading a moderately sized LLM, TinyLlama/TinyLlama-1.1B-Chat-v1.0, using 16-bit floating-point precision (torch.float16) for efficiency on modern hardware. A simulated long input prompt, "Explain the history of the universe in great detail. " repeated 20 times, is used to mimic large context windows where TurboQuant’s benefits become most apparent.

The run_unified_benchmark function measures execution time and memory usage during text generation, toggling TurboQuant’s 3-bit KV compression. The initial local test results with the TinyLlama model and the specified prompt were as follows:

--- THE VERDICT ---

Baseline (FP16) Cache: 42.45 MB

TurboQuant (3-bit) Cache: 7.86 MB

Speedup: 0.61x

Memory Saved: 34.59 MB

These results immediately highlight a significant achievement: a compression ratio of approximately 5.4x for the KV cache memory footprint. This means TurboQuant reduced the cache memory by over 80%, from 42.45 MB to just 7.86 MB. However, the observed speedup of 0.61x means the quantized run was slower than the baseline in this local environment, which may seem counterintuitive. This discrepancy is crucial to understand and is entirely consistent with the expected behavior of TurboQuant under specific conditions.
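The reported figures can be checked with a few lines of arithmetic. The helper below is a hypothetical convenience function, and it assumes that "speedup" means baseline time divided by TurboQuant time, which the benchmark output does not state explicitly.

```python
def summarize(baseline_mb, quantized_mb, speedup):
    """Derive ratio, savings, and relative runtime from benchmark figures."""
    ratio = baseline_mb / quantized_mb
    saved_mb = baseline_mb - quantized_mb
    saved_pct = 100 * saved_mb / baseline_mb
    # A speedup below 1 means the quantized run took longer:
    # relative runtime = 1 / speedup (assumed definition).
    relative_runtime = 1 / speedup
    return ratio, saved_mb, saved_pct, relative_runtime


ratio, saved_mb, saved_pct, rel = summarize(42.45, 7.86, 0.61)
print(f"compression ratio: {ratio:.2f}x")                      # 5.40x
print(f"memory saved: {saved_mb:.2f} MB ({saved_pct:.1f}%)")   # 34.59 MB (81.5%)
print(f"relative runtime: {rel:.2f}x baseline")                # 1.64x
print(f"ideal FP16 to 3-bit ratio: {16 / 3:.2f}x")             # 5.33x
```

Under that assumption, a 0.61x speedup corresponds to the quantized run taking roughly 1.64 times as long as the baseline, while the measured compression ratio sits close to the 16/3 expected from the bit widths alone.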

The true transformative performance of TurboQuant is realized in large-scale, enterprise-level scenarios with extensive context lengths and powerful hardware accelerators. The local TinyLlama benchmark, while useful for demonstrating memory savings, operates on a sequence that is short relative to the massive-scale scenarios TurboQuant is designed to optimize. In such smaller-scale local tests, the overhead of applying the quantization logic, even if efficient, can outweigh the benefits of reduced memory traffic, especially if the primary bottleneck is not memory bandwidth but other computational factors.

Google’s experimental results, based on an enterprise-level cluster of H100 GPUs and long-form RAG prompts containing over 32,000 tokens, paint a much clearer picture. In these demanding environments, memory traffic becomes the dominant bottleneck. By dramatically reducing the KV cache size, TurboQuant significantly alleviates this constraint, leading to a throughput increase of up to 8x compared with unquantized 32-bit keys. This stark contrast underscores the trade-off between memory bandwidth and compute latency; while the memory footprint reduction is universal, the speedup becomes profound only when memory bandwidth is the primary limiting factor, as it is in high-throughput, large-context AI deployments.
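A simple bandwidth-bound model illustrates why the same compression can slow down a small test yet speed up a large one. The sketch assumes that each decode step streams the model weights and the entire KV cache from memory once and that quantization adds a fixed per-step cost. Every number below is hypothetical and chosen only to show the trend; none of it reproduces Google's measurements.

```python
def decode_step_ms(weight_gb, kv_gb, bandwidth_gb_per_s, overhead_ms=0.0):
    """Estimated time for one decode step when memory traffic dominates."""
    transfer_ms = (weight_gb + kv_gb) / bandwidth_gb_per_s * 1000
    return transfer_ms + overhead_ms


def speedup(weight_gb, kv_gb, bandwidth_gb_per_s, ratio, overhead_ms):
    """Baseline step time divided by step time with a compressed KV cache."""
    baseline = decode_step_ms(weight_gb, kv_gb, bandwidth_gb_per_s)
    quantized = decode_step_ms(weight_gb, kv_gb / ratio, bandwidth_gb_per_s,
                               overhead_ms)
    return baseline / quantized


# Hypothetical scenarios: (label, weights in GB, KV cache in GB, bandwidth in GB/s).
scenarios = [
    ("small model, short context", 2.0, 0.04, 300),
    ("large batch, long context", 2.0, 60.0, 3000),
]
results = {}
for label, w, kv, bw in scenarios:
    results[label] = speedup(w, kv, bw, ratio=16 / 3, overhead_ms=2.0)
    print(f"{label}: {results[label]:.2f}x")
```

In the first scenario the KV cache is a tiny share of the traffic, so the fixed overhead dominates and the result falls below 1x. In the second, the cache dominates the traffic and shrinking it yields a clear gain. Real kernels overlap computation with memory access, so this model only captures the direction of the effect.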

Further local experimentation, repeating the input string 200 times and setting max_new_tokens=250, demonstrated this scaling effect on memory savings:

--- THE VERDICT ---

Baseline (FP16) Cache: 421.44 MB

TurboQuant (3-bit) Cache: 79.02 MB

Speedup: 0.57x

Memory Saved: 342.42 MB

Here, the memory savings scale proportionately with the increased context length, showcasing a consistent 5.3x compression ratio. While the local speedup remains below 1x, these results confirm that TurboQuant’s 3-bit memory savings hold as the context grows, which matters most in environments where memory is a critical resource.

Industry Reactions and Broader Implications

The unveiling of TurboQuant is expected to be met with considerable enthusiasm across the AI industry. Industry analysts and researchers are likely to highlight its potential to democratize access to powerful LLMs by lowering their operational footprint. For developers, the "zero retraining" aspect is a game-changer, eliminating a significant barrier to adopting more efficient models. This allows existing models to be seamlessly integrated with TurboQuant, reducing time-to-market for optimized applications.

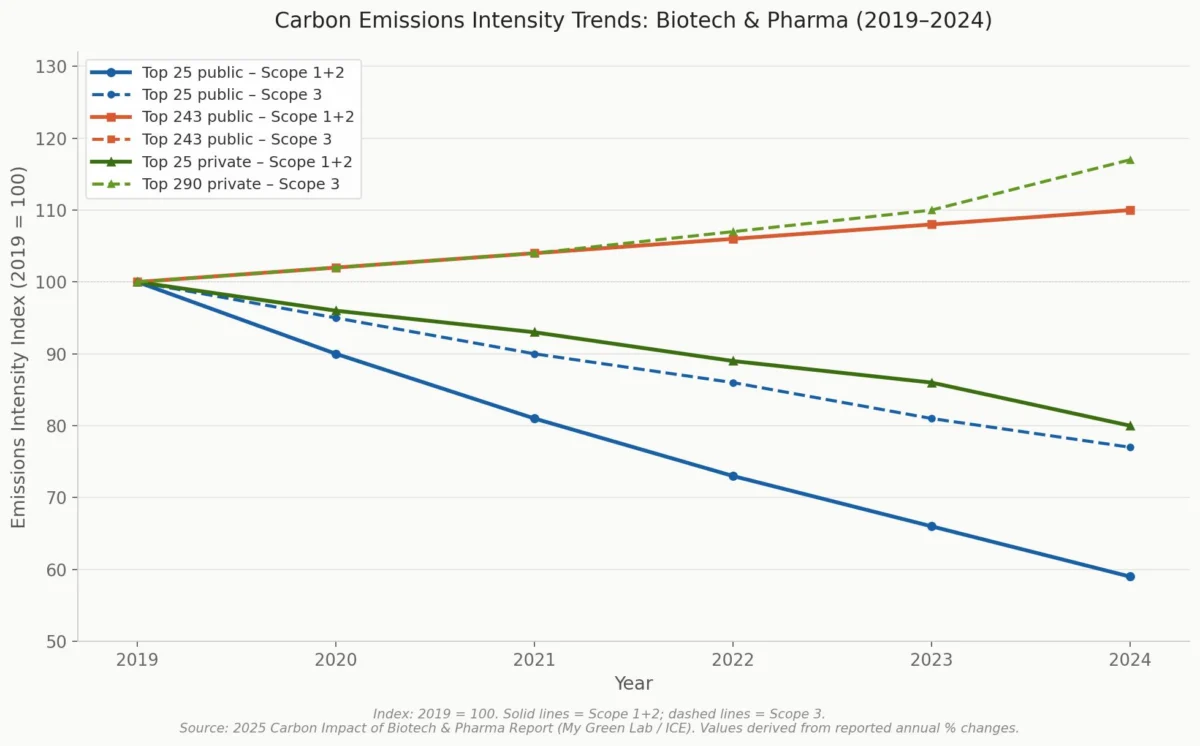

Cloud providers and enterprises running large-scale AI inference workloads stand to benefit immensely from the economic implications. Reduced memory consumption directly translates to lower cloud computing costs and higher throughput on existing hardware, leading to substantial savings on infrastructure and energy. This efficiency gain is particularly vital for organizations deploying RAG systems, where the speed and cost-effectiveness of retrieving and generating information directly impact user experience and business viability.

The broader implications extend beyond cost savings. TurboQuant facilitates the deployment of more complex AI models in resource-constrained environments, such as edge devices, mobile phones, and embedded systems. This opens up new avenues for on-device AI applications that require low latency and high privacy, where data processing happens locally without relying on distant cloud servers. Furthermore, by making AI inference more energy-efficient, TurboQuant contributes to the ongoing efforts to make artificial intelligence more sustainable and environmentally friendly.

Challenges and Future Outlook

While TurboQuant marks a significant advancement, the path forward involves continuous optimization and broader integration. One immediate challenge will be ensuring widespread adoption across various AI frameworks and hardware platforms. Google’s release of TurboQuant as a library is a strong step, but ongoing development will be needed to ensure seamless compatibility with evolving LLM architectures and specialized AI accelerators.

Future research might explore extending TurboQuant’s extreme compression beyond the KV cache to other parts of the LLM architecture, potentially unlocking even greater efficiency gains. The AI community will also be keen to see how TurboQuant performs with an even wider array of LLM sizes, types, and downstream tasks, ensuring its robustness and generalizability. As the demand for larger, more capable, yet simultaneously more efficient AI models continues to grow, innovations like TurboQuant will be crucial in balancing performance with practical deployment considerations.

Conclusion

Google’s TurboQuant represents a pivotal moment in the evolution of AI efficiency. By achieving 3-bit KV cache compression without sacrificing accuracy or requiring model retraining, it directly addresses some of the most pressing challenges facing large language models and RAG systems today. While local benchmarks offer a glimpse into its memory-saving prowess, the true power of TurboQuant lies in its ability to deliver up to 8x performance increases in demanding, enterprise-scale environments. This innovation promises to make advanced AI more accessible, cost-effective, and sustainable, reshaping the landscape of AI development and deployment for years to come.
