The quest for powerful quantum computers, capable of tackling problems currently intractable for even the most advanced supercomputers, has been a defining challenge of the 21st century. A significant hurdle in this pursuit has been the sheer number of physical qubits required to build a reliable quantum system. Qubits, the fundamental units of quantum information, are inherently fragile and prone to errors caused by environmental interference. To overcome this, sophisticated error correction codes are employed, which demand a substantial overhead of physical qubits to encode a single, stable "logical" qubit. However, a recent breakthrough in quantum computing research suggests that this qubit overhead may be significantly lower than previously believed, potentially accelerating the timeline for the development of practical, atom-based quantum computers.

Rethinking Qubit Efficiency in Quantum Computing

The current paradigm for building fault-tolerant quantum computers relies on encoding quantum information across multiple physical qubits to create a single, robust logical qubit. This process, known as quantum error correction, is essential because physical qubits are susceptible to noise and decoherence, leading to computational errors. For instance, a commonly discussed error correction code, the surface code, might require hundreds or even thousands of physical qubits to reliably represent one logical qubit, depending on the desired level of fault tolerance. At a ratio of a thousand to one, a quantum computer with one million logical qubits would need about a billion physical qubits.

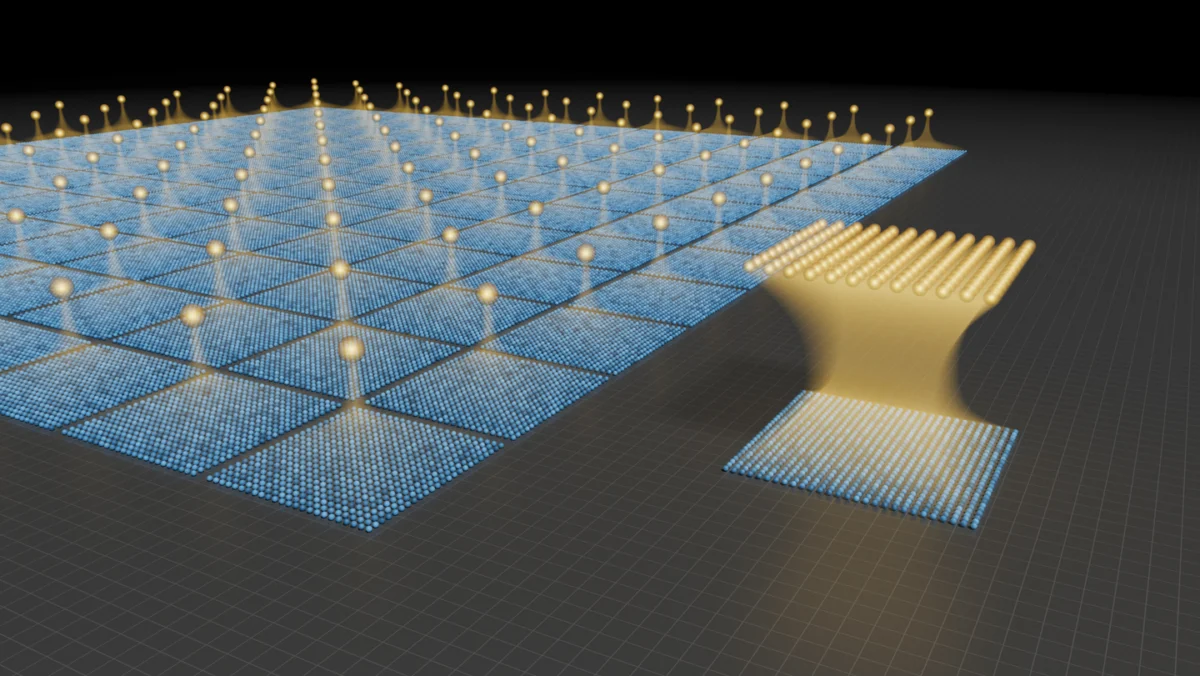

The new research, spearheaded by a team of scientists, proposes a novel approach to quantum error correction that drastically reduces this overhead. By leveraging a more efficient encoding scheme, the researchers suggest that it may be possible to encode several logical qubits jointly in one block of physical qubits, so that each logical qubit needs significantly fewer physical qubits than previously thought. This shift could fundamentally alter the landscape of quantum computing development, making the construction of powerful quantum machines more feasible in the near term.

The Promise of Atomic Qubits and Efficient Encoding

At the heart of this potential revolution are atomic qubits. These systems utilize individual atoms, often trapped and manipulated by lasers, as the building blocks for quantum computation. Atomic qubits offer several advantages, including long coherence times and the ability to engineer precise interactions. The challenge has always been scaling these systems and implementing effective error correction.

The innovative encoding scheme, detailed in a forthcoming publication, reportedly allows for a more compact and efficient representation of logical qubits. Instead of dedicating a large group of physical qubits to a single logical qubit, this new method enables a single physical qubit to contribute to the stability and computational power of multiple logical qubits. This is achieved through a sophisticated arrangement and manipulation of quantum states, where the inherent properties of the atoms are exploited in a more nuanced way to suppress errors and perform computations.

The visual representation accompanying the research, showing a dense grid of physical qubits alongside a sparser arrangement yielding more logical qubits, illustrates this efficiency. The blue dots likely represent the individual physical qubits, while the gold elements signify the more potent logical qubits. The image suggests a significant departure from traditional methods where a vast number of physical qubits are required for a modest number of logical qubits. The new scheme, by contrast, appears to extract more computational value from each physical qubit.

Implications for Quantum Computing Development

The implications of this research are profound and far-reaching. If the proposed error correction scheme proves to be as effective as initial findings suggest, it could:

- Accelerate the Timeline for Fault-Tolerant Quantum Computers: The current bottleneck in building large-scale quantum computers is the immense number of physical qubits required. Reducing this requirement by orders of magnitude would significantly shorten the development cycle. What was once considered a decade-long endeavor for a significant number of logical qubits might now be achievable in a few years.

- Lower the Cost of Quantum Computing: The manufacturing and maintenance of quantum computers are incredibly expensive, partly due to the sheer scale of hardware involved. A reduction in the number of required physical qubits would translate directly into lower fabrication costs and potentially more accessible quantum computing resources.

- Expand the Range of Solvable Problems: With more efficient error correction, researchers could build quantum computers with a greater number of stable logical qubits sooner. This would unlock the potential to solve increasingly complex problems in fields such as drug discovery, materials science, financial modeling, and artificial intelligence. For example, simulating complex molecular interactions for new drug design, which is often impractical to do accurately on classical supercomputers, could become feasible on a quantum computer with a few hundred robust logical qubits.

- Drive Innovation in Quantum Hardware: This breakthrough could also stimulate new avenues of research and development in quantum hardware. Engineers might focus on optimizing the density and connectivity of physical qubits, as well as the precision of laser control systems, to fully leverage the efficiency of the new encoding schemes.

Background: The Challenge of Quantum Error Correction

The development of quantum computers has been a long and arduous journey, fraught with significant scientific and engineering challenges. Unlike classical computers that use bits representing either 0 or 1, quantum computers use qubits that can exist in a superposition of both states simultaneously. This property, along with quantum entanglement, allows quantum computers to perform computations in ways that are fundamentally different and exponentially more powerful for certain types of problems.

However, this quantum advantage comes at a cost. Qubits are extremely sensitive to their environment. Even minute disturbances, such as stray electromagnetic fields or temperature fluctuations, can cause them to lose their quantum properties – a phenomenon known as decoherence. This leads to errors in the computation.

Quantum error correction is the proposed solution to this problem. The core idea is to distribute the quantum information of a single logical qubit across multiple physical qubits. By measuring certain properties of these physical qubits without disturbing the encoded information, errors can be detected and corrected. Various error correction codes have been developed, with the surface code being one of the most widely studied. These codes work by creating a redundancy, such that if a few physical qubits fail, the logical qubit remains intact.
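The redundancy idea can be illustrated with a deliberately simplified, classical stand-in: a three-bit repetition code that protects against bit flips only. This is a sketch, not the scheme from the research and not a full quantum code (real codes such as the surface code also handle phase errors and measure parities with extra qubits). The function names are made up for this example.

```python
import random

def encode(bit):
    """Store one logical bit redundantly in three physical bits."""
    return [bit, bit, bit]

def apply_noise(bits, p, rng):
    """Flip each physical bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in bits]

def syndrome(bits):
    """Parities of neighbouring pairs. They locate a single flip
    without revealing the encoded value itself."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])

# Which physical bit to flip back for each syndrome (None = no error seen).
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(bits):
    """Apply the correction suggested by the syndrome."""
    fixed = list(bits)
    position = CORRECTION[syndrome(bits)]
    if position is not None:
        fixed[position] ^= 1
    return fixed

def decode(bits):
    """After correction all three bits agree, so read any one of them."""
    return bits[0]

def logical_error_rate(p, trials=100_000, seed=0):
    """Estimate how often the logical bit is wrong after one noisy round."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = apply_noise(encode(0), p, rng)
        if decode(correct(noisy)) != 0:
            failures += 1
    return failures / trials
```

A single flipped bit is always repaired; the logical bit is lost only when two or more of the three physical bits flip in the same round. That is why the logical error rate falls below the physical one when the physical rate is small, and also why the protection costs extra hardware.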

The significant overhead associated with these codes has been a major roadblock. For instance, achieving a bit-flip error rate of 10⁻¹⁸ for a logical qubit using a surface code with a physical error rate of 10⁻³ might require upwards of 1,000 physical qubits per logical qubit. This means that a quantum computer with just 1,000 logical qubits, a modest number for tackling truly groundbreaking problems, could require over a million physical qubits.
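To see where figures like these come from, the sketch below uses a rule of thumb often quoted for the surface code: the logical error rate falls roughly as prefactor × (p / p_threshold)^((d + 1) / 2), where d is the code distance. The threshold of 1%, the prefactor of 0.1, and the count of 2d² − 1 physical qubits per logical qubit (a rotated surface code patch) are assumptions chosen for illustration, not numbers taken from the research.

```python
def surface_code_distance(p_phys, p_target, p_threshold=1e-2, prefactor=0.1):
    """Smallest odd distance d for which the heuristic
    prefactor * (p_phys / p_threshold) ** ((d + 1) / 2) is at most p_target.

    The formula and its default constants are rough assumptions,
    not an exact model of any particular device.
    """
    if not 0 < p_phys < p_threshold:
        raise ValueError("physical error rate must be below the threshold")
    if p_target <= 0:
        raise ValueError("target error rate must be positive")
    ratio = p_phys / p_threshold
    d = 3
    while prefactor * ratio ** ((d + 1) / 2) > p_target:
        d += 2  # surface code distances are conventionally odd
    return d

def physical_qubits_per_logical(d):
    """Assumed layout: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1

if __name__ == "__main__":
    d = surface_code_distance(p_phys=1e-3, p_target=1e-18)
    print(d, physical_qubits_per_logical(d))
```

With a physical error rate of 10⁻³ and a target of 10⁻¹⁸, this heuristic lands at a distance in the low thirties and roughly two thousand physical qubits per logical qubit, in line with the "upwards of 1,000" quoted above.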

A Potential Shift in the Quantum Landscape

The research hinting at a drastically reduced qubit overhead represents a potential paradigm shift. While the specifics of the new encoding scheme are still emerging, the implication is that the relationship between physical and logical qubits is far more flexible and efficient than previously assumed. This could mean that instead of needing thousands of physical qubits for one logical qubit, the ratio could be closer to tens or even single digits, depending on the desired level of error correction and the specific architecture.

This would be a monumental achievement, as it directly addresses the most significant scaling challenge in quantum computing. The ability to create more logical qubits from fewer physical qubits would allow researchers to build more powerful quantum computers with less hardware, making the technology more practical and accessible.
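The effect of the ratio on total hardware is simple arithmetic, sketched below. The ratios are the round numbers used in this article (thousands, tens, single digits), not measured results, and the helper names are hypothetical.

```python
def physical_qubits_needed(logical_qubits, physical_per_logical):
    """Total physical qubits for a machine with the given average overhead."""
    if logical_qubits < 0 or physical_per_logical <= 0:
        raise ValueError("counts must be non-negative and the ratio positive")
    return logical_qubits * physical_per_logical

def overhead_table(logical_qubits, ratios):
    """Map each assumed overhead ratio to the resulting machine size."""
    return {r: physical_qubits_needed(logical_qubits, r) for r in ratios}

if __name__ == "__main__":
    # 1,000 logical qubits at several illustrative overhead ratios.
    for ratio, total in overhead_table(1_000, [1_000, 100, 10, 5]).items():
        print(f"{ratio:>5} physical per logical -> {total:>9,} physical qubits")
```

For 1,000 logical qubits, moving from 1,000 physical qubits each to 10 each shrinks the machine from a million physical qubits to ten thousand.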

Expert Reactions and Future Outlook

While the research is still in its early stages and requires further validation and experimental demonstration, the scientific community is abuzz with anticipation. Dr. Anya Sharma, a theoretical physicist specializing in quantum information theory, commented, "If these findings hold up, it would be a game-changer. The qubit overhead has been the elephant in the room for fault-tolerant quantum computing. A significant reduction in this overhead would fundamentally alter our roadmaps and accelerate progress across the entire field."

The chief executive of one quantum computing company echoed this sentiment, stating, "We are closely monitoring these developments. The potential for more efficient qubit utilization could dramatically impact our strategic planning and the pace at which we can deliver valuable quantum solutions to our customers."

The next steps for the research team will involve rigorous experimental verification of their theoretical model. This will likely entail building prototype quantum processors that implement the new encoding scheme and demonstrating its effectiveness in correcting errors and performing computations. Successful experimental validation would pave the way for a new era in quantum computing, bringing the promise of powerful, error-corrected quantum machines closer to reality than ever before. The journey to harnessing the full potential of quantum mechanics for computation remains challenging, but this latest breakthrough offers a beacon of hope and a significant leap forward in overcoming one of its most formidable obstacles.
