The adage "garbage in, garbage out" (GIGO) holds firmly in machine learning: model performance depends critically on the quality and characteristics of the input data. Data scientists frequently encounter scenarios where sophisticated algorithms yield suboptimal results, not because the models are flawed, but because the underlying data violates the statistical assumptions those models rely on. Fitting a linear regression on highly collinear features or running an ANOVA on groups with unequal variances are classic examples of practices that lead to ineffective models and unreliable insights. Visual exploratory data analysis (EDA) techniques, such as scatter plots and histograms, offer valuable preliminary insights, but they are often insufficient for the rigorous validation needed to confirm that data is suitable for downstream analysis or machine learning models. This is where specialized tools become useful, bridging the gap between intuitive data exploration and formal statistical validation.

The Imperative of Rigorous Exploratory Data Analysis

Exploratory Data Analysis (EDA) is more than just an initial glance at a dataset; it is a foundational process that allows data scientists to understand the underlying structure, identify patterns, detect anomalies, and test hypotheses. Historically, EDA relied heavily on descriptive statistics and graphical representations. However, as data complexity grows and the stakes of machine learning applications rise, the need for a more statistically rigorous approach has become paramount. Industry reports consistently highlight data quality issues as a major impediment to successful AI implementation, with studies estimating that poor data quality costs businesses billions annually. A significant portion of a data scientist’s time—often reported to be between 60% and 80%—is dedicated to data cleaning and preparation, much of which is informed by the insights gleaned during EDA.

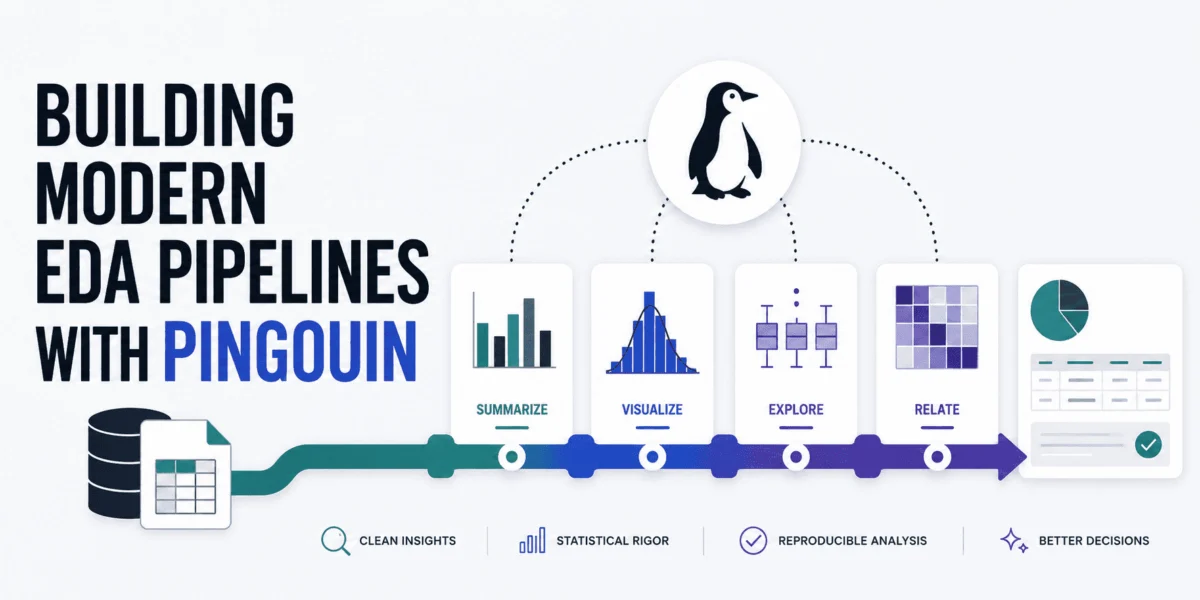

The challenge lies in efficiently performing these rigorous checks without extensive manual coding or juggling multiple libraries. Traditional statistical packages often lack the seamless integration with data manipulation tools that modern data scientists require. Conversely, general-purpose data libraries might not offer the depth of statistical tests needed for comprehensive validation. This is precisely the void that libraries like Pingouin aim to fill, acting as a crucial intermediary between the powerful data structuring capabilities of pandas and the advanced statistical functionalities of SciPy. By streamlining the execution of complex statistical tests, Pingouin empowers data professionals to construct solid, automated EDA pipelines that systematically validate several important data properties, thereby safeguarding the integrity of subsequent analyses and models.

Initial Setup and Data Acquisition

To embark on building a holistic pipeline for rigorous statistical EDA, the first step involves setting up the necessary Python environment. This typically entails installing the Pingouin library, alongside pandas for data handling, if they are not already present:

!pip install pingouin pandas

Once installed, these key libraries are imported into the working environment. For demonstration purposes, a widely accessible open dataset is used, one containing various properties of wine samples and their corresponding quality ratings. This dataset provides a practical context for applying the statistical tests, representing the kind of real-world data a data scientist might encounter.

import pandas as pd

import pingouin as pg

# Loading the wine dataset from an open dataset GitHub repository

url = "https://raw.githubusercontent.com/gakudo-ai/open-datasets/refs/heads/main/wine-quality-white-and-red.csv"

df = pd.read_csv(url)

# Displaying the first few rows to understand our features

df.head()

The initial inspection of the dataset’s head rows confirms successful data loading and provides a preliminary understanding of the features, such as ‘fixed acidity’, ‘volatile acidity’, ‘citric acid’, ‘pH’, and ‘alcohol’, which are crucial for assessing wine quality. These features will serve as the basis for the statistical validation processes.

Validating Univariate Normality: A Cornerstone Assumption

One of the most frequently encountered assumptions in traditional statistical modeling is that continuous variables, or the errors of a model fitted to them, follow a normal (Gaussian) distribution. Parametric tests such as t-tests and ANOVA assume approximately normal data within groups, inference in linear regression assumes normally distributed residuals rather than normally distributed features, and Gaussian Naive Bayes assumes each feature is normally distributed within each class. Violations of normality can lead to inaccurate p-values, unreliable confidence intervals, and ultimately misleading conclusions.

Pingouin simplifies the process of checking univariate normality across multiple features simultaneously using its pg.normality() function, which applies the Shapiro-Wilk test by default. The Shapiro-Wilk test has good power against a wide range of departures from normality and is generally preferred for small to medium-sized samples. It can be run on larger datasets, but SciPy's implementation, which Pingouin calls, warns that p-values may be inaccurate above 5,000 observations, and at that size even practically negligible deviations tend to be flagged as significant, so the W statistic deserves as much attention as the p-value.

# Selecting a subset of continuous features for normality checks

features = ['fixed acidity', 'volatile acidity', 'citric acid', 'pH', 'alcohol']

# Running the normality test

normality_results = pg.normality(df[features])

print(normality_results)

The output for the selected wine features reveals a consistent pattern:

| | W | pval | normal |
| --- | --- | --- | --- |
| fixed acidity | 0.879789 | 2.437973e-57 | False |
| volatile acidity | 0.875867 | 6.255995e-58 | False |
| citric acid | 0.964977 | 5.262332e-37 | False |
| pH | 0.991448 | 2.204049e-19 | False |
| alcohol | 0.953532 | 2.918847e-41 | False |

In this case, the ‘normal’ column clearly indicates ‘False’ for all evaluated features, accompanied by extremely low p-values (much less than the conventional significance level of 0.05). This outcome signifies a clear rejection of the null hypothesis that the data is normally distributed. It is important to note that this result does not imply an error in the data itself; rather, it highlights intrinsic characteristics of the dataset. Detecting non-normality early in the EDA phase is crucial. It signals that in subsequent data preprocessing steps, transformations such as log-transformations, square root transformations, or Box-Cox transformations might be necessary. These techniques aim to reshape the distribution of the raw data, making it more ‘normal-like’ and thus more suitable for models that explicitly assume Gaussian distribution, thereby enhancing model accuracy and interpretability.
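To make the effect of such transformations concrete, the following is a minimal sketch rather than part of the original workflow. It uses synthetic right-skewed data (an assumption, chosen so the example runs without downloading anything) and SciPy's `shapiro` and `boxcox` functions to compare the Shapiro-Wilk W statistic before and after each transformation.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(42)
# Synthetic, strictly positive, right-skewed values (an assumption for
# illustration, not a column from the wine dataset)
skewed = rng.lognormal(mean=0.0, sigma=0.8, size=500)

candidates = {
    "raw": skewed,
    "log": np.log(skewed),               # requires values > 0
    "sqrt": np.sqrt(skewed),             # requires values >= 0
    "box-cox": stats.boxcox(skewed)[0],  # requires values > 0; lambda is estimated
}

# A Shapiro-Wilk W statistic closer to 1 means the sample looks more normal
w_stats = {}
for name, values in candidates.items():
    result = stats.shapiro(values)
    w_stats[name] = result.statistic
    print(f"{name:8s} W={result.statistic:.4f}  p={result.pvalue:.3g}")
```

On real columns the best transformation depends on the shape of each feature, so it is worth re-running the normality check after transforming instead of assuming the problem is solved.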

Assessing Multivariate Normality: Beyond Individual Features

While univariate normality assesses each feature in isolation, many advanced statistical techniques and machine learning models require that the combination of features follows a multivariate normal distribution. This is particularly relevant for methods like multivariate analysis of variance (MANOVA), structural equation modeling, and certain types of classification algorithms that model the joint distribution of features. Multivariate normality implies that each variable is normally distributed individually, and all linear combinations of the variables are also normally distributed. Furthermore, it implies that the relationships between variables are linear.

Pingouin facilitates this complex check with its pg.multivariate_normality() function, which implements the Henze-Zirkler (HZ) test. The HZ test is a powerful omnibus test for multivariate normality, sensitive to various departures from normality, including skewness and kurtosis.

# Henze-Zirkler multivariate normality test

multivariate_normality_results = pg.multivariate_normality(df[features])

print(multivariate_normality_results)

The execution of this test on the wine dataset yields:

HZResults(hz=np.float64(23.72107048442373), pval=np.float64(0.0), normal=False)

The result normal=False and a p-value reported as 0.0 (that is, too small to distinguish from zero at floating-point precision) strongly indicate that the combined set of features does not adhere to a multivariate normal distribution. This is expected: multivariate normality requires each feature to be normal on its own, and every feature already failed the univariate test. The finding has significant implications for model selection. When multivariate normality does not hold, parametric models that assume it might produce unreliable results. In such scenarios, non-parametric or distribution-agnostic machine learning models often become more robust alternatives. Tree-based ensembles such as Random Forests and gradient boosting machines (GBMs, including XGBoost) are less sensitive to the underlying distribution of the data and can often perform well even when normality assumptions are violated. This insight from rigorous EDA guides the data scientist towards a more appropriate model family, preventing wasted effort on models ill-suited to the data’s inherent properties.
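The Henze-Zirkler test returns a single verdict and does not show where the departure comes from. As a complementary diagnostic (not part of the original workflow), one common approach is to look at squared Mahalanobis distances, which approximately follow a chi-square distribution with as many degrees of freedom as there are features when the data is multivariate normal. The sketch below uses synthetic data and a hypothetical helper named `mahalanobis_sq`; both are assumptions for illustration.

```python
import numpy as np
from scipy import stats

def mahalanobis_sq(X):
    """Squared Mahalanobis distance of each row from the sample mean."""
    X = np.asarray(X, dtype=float)
    centered = X - X.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(X, rowvar=False))
    # Row-wise quadratic form: centered_i' * inv_cov * centered_i
    return np.einsum("ij,jk,ik->i", centered, inv_cov, centered)

rng = np.random.default_rng(0)
n, p = 2000, 3
gaussian = rng.multivariate_normal(np.zeros(p), np.eye(p), size=n)
heavy_tailed = rng.standard_t(df=3, size=(n, p))  # heavier tails than a Gaussian

# Under multivariate normality, roughly 1% of rows should exceed this cutoff
cutoff = stats.chi2.ppf(0.99, df=p)
shares = {}
for name, X in [("gaussian", gaussian), ("heavy-tailed", heavy_tailed)]:
    shares[name] = float((mahalanobis_sq(X) > cutoff).mean())
    print(f"{name}: {shares[name]:.2%} of rows beyond the 99% chi-square cutoff")
```

A share well above 1% points to heavy tails or outliers as the source of the violation, which can inform whether outlier handling or a transformation is the more sensible next step.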

Ensuring Homoscedasticity: The Principle of Equal Variances

Homoscedasticity, a term often daunting in its pronunciation but simple in its concept, refers to the condition where the variance of the errors (or residuals) in a regression model is constant across all levels of the independent variables. In simpler terms, it means that the spread of the data points around the regression line is roughly the same throughout the range of predictions. This property is crucial for the validity of many statistical tests and regression models, including ordinary least squares (OLS) regression, ANOVA, and t-tests. When homoscedasticity is violated, a condition known as heteroscedasticity arises, leading to inefficient parameter estimates, incorrect standard errors, and consequently, unreliable hypothesis tests and confidence intervals.

Pingouin’s implementation of Levene’s test (pg.homoscedasticity()) offers a robust method to check for equal variances across different groups. Unlike Bartlett’s test, Levene’s test is less sensitive to departures from normality, making it suitable for a wider range of datasets. In this context, we assess whether the variance of ‘alcohol’ content is equal across different ‘quality’ groups of wine.

# Levene's test for equal variances across groups

# 'dv' is the target, dependent variable, 'group' is the categorical variable

homoscedasticity_results = pg.homoscedasticity(data=df, dv='alcohol', group='quality')

print(homoscedasticity_results)

The result of the Levene’s test for the wine dataset is:

| | W | pval | equal_var |
| --- | --- | --- | --- |
| levene | 66.338684 | 2.317649e-80 | False |

The equal_var column showing False and an extremely low p-value (indicating statistical significance) confirms the presence of heteroscedasticity. This means that the variance of ‘alcohol’ content is not constant across the different ‘quality’ categories of wine. Detecting heteroscedasticity is a critical finding that necessitates corrective action in downstream analyses. For instance, when training regression models, employing robust standard errors (e.g., White’s heteroscedasticity-consistent standard errors) can provide more accurate inference by adjusting for the unequal variances. Alternatively, data transformations, weighted least squares regression, or generalized least squares can be considered to mitigate the impact of heteroscedasticity, ensuring that the model’s conclusions remain statistically sound.
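To illustrate what heteroscedasticity-consistent standard errors do, here is a minimal NumPy sketch of White's HC0 estimator on synthetic data whose noise grows with the predictor. The data, variable names, and the choice of the HC0 variant are assumptions for illustration; in practice a statistics library would normally be used instead of hand-written linear algebra.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 5000
x = rng.uniform(0.0, 10.0, size=n)
# Noise whose spread grows with x, so the data is heteroscedastic by construction
y = 2.0 + 0.5 * x + rng.normal(0.0, 0.05 * x**2 + 0.1, size=n)

X = np.column_stack([np.ones(n), x])   # design matrix with an intercept column
xtx_inv = np.linalg.inv(X.T @ X)
beta = xtx_inv @ X.T @ y               # ordinary least squares coefficients
residuals = y - X @ beta

# Classical covariance sigma^2 (X'X)^-1, valid only under constant variance
sigma2 = residuals @ residuals / (n - X.shape[1])
classical_se = np.sqrt(np.diag(sigma2 * xtx_inv))

# White (HC0) sandwich estimator: (X'X)^-1 X' diag(e^2) X (X'X)^-1
meat = X.T @ (X * residuals[:, None] ** 2)
robust_se = np.sqrt(np.diag(xtx_inv @ meat @ xtx_inv))

print("coefficients :", beta)
print("classical SE :", classical_se)
print("robust SE    :", robust_se)
```

The coefficient estimates are identical in both cases; only the standard errors change, which is why robust errors repair inference without altering the fitted model.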

Investigating Sphericity: A Test for Correlated Variances

Sphericity is another statistical assumption, relevant mainly to repeated-measures ANOVA and certain multivariate analyses. It holds when the variances of the differences between all possible pairs of within-subject conditions (or variables, in a multivariate context) are equal. Equivalently, Mauchly's test checks whether the covariance matrix of the orthonormalized contrasts between conditions is proportional to an identity matrix. This should not be confused with Bartlett's test of sphericity, which tests whether the correlation matrix of the variables themselves is an identity matrix and is the check commonly run before dimensionality reduction techniques like Principal Component Analysis (PCA). If sphericity holds, it suggests a simpler covariance structure, potentially simplifying analysis. If it is violated, it indicates unequal variances or more complex interdependencies.

Pingouin’s pg.sphericity() function performs Mauchly’s test for sphericity, a widely used method to assess this assumption.

# Mauchly's sphericity test

sphericity_results = pg.sphericity(df[features])

print(sphericity_results)

The outcome for the selected wine features is:

SpherResults(spher=False, W=np.float64(0.004437706589942777), chi2=np.float64(35184.26583883276), dof=9, pval=np.float64(0.0))

The result spher=False and a p-value reported as 0.0 indicate a clear violation of sphericity: the variances of the differences between pairs of the chosen features are not equal. This is unsurprising here, because the five features are measured on very different scales and were not collected as repeated measurements of the same quantity, so the rejection by itself says little about data quality. It also does not, on its own, demonstrate that the features are correlated: Mauchly's null hypothesis concerns the covariance of the contrasts between variables, and unequal variances alone are enough to reject it. The question that matters for dimensionality reduction, whether the correlation matrix differs from an identity matrix, is the one addressed by Bartlett's test of sphericity and by the correlation matrix examined in the next section. If the features were uncorrelated, PCA would be largely ineffective because there would be no shared variance to capture and reduce. Where meaningful correlations are present, PCA can reduce the number of features while retaining most of the essential information, especially if the dataset were much wider and feature reduction was a primary goal. Read this way, a seemingly unhelpful result still points towards the next useful check.
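The hypothesis of an identity correlation matrix is the one tested by Bartlett's test of sphericity, which is a different test from Mauchly's. As a hedged sketch, the function below implements the standard Bartlett statistic with NumPy and SciPy; the function name `bartlett_sphericity` and the synthetic data are assumptions for illustration and are not part of Pingouin's API.

```python
import numpy as np
from scipy import stats

def bartlett_sphericity(X):
    """Bartlett's test that the correlation matrix of X is an identity matrix."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    corr = np.corrcoef(X, rowvar=False)
    _, logdet = np.linalg.slogdet(corr)  # log of the determinant, numerically stable
    chi2 = -(n - 1 - (2 * p + 5) / 6) * logdet
    dof = p * (p - 1) // 2
    pval = stats.chi2.sf(chi2, dof)
    return chi2, dof, pval

rng = np.random.default_rng(3)
shared = rng.normal(size=(500, 1))
# Four synthetic features that all load on one shared factor, hence correlated
correlated = shared + 0.5 * rng.normal(size=(500, 4))

chi2_stat, dof, pval = bartlett_sphericity(correlated)
print(f"chi2={chi2_stat:.1f}, dof={dof}, p={pval:.3g}")
```

A small p-value here indicates that the features share variance, which is the condition under which PCA has something to compress.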

Detecting Multicollinearity: A Threat to Interpretability

Multicollinearity refers to a phenomenon in which two or more predictor variables in a multiple regression model are highly correlated with each other. While not inherently a "problem" for a model’s predictive power (as long as the model is not overfitted), high multicollinearity can severely undermine the interpretability of individual predictor effects. It makes it difficult to ascertain the independent contribution of each predictor to the dependent variable, leading to unstable coefficient estimates, inflated standard errors, and difficulty in assessing statistical significance. For interpretable models like linear regression, this can be a significant drawback.

Pingouin’s pg.rcorr() function offers a convenient way to compute a correlation matrix: the lower triangle holds the correlation coefficients, and the upper triangle marks their statistical significance with asterisks, which is a valuable visual aid for quick assessment.

# Calculating a Pearson correlation matrix with significance stars

correlation_matrix = pg.rcorr(df[features], method='pearson')

print(correlation_matrix)

The output correlation matrix is presented as follows:

| | fixed acidity | volatile acidity | citric acid | pH | alcohol |
| --- | --- | --- | --- | --- | --- |
| fixed acidity | - | *** | *** | *** | *** |
| volatile acidity | 0.219 | - | *** | *** | ** |
| citric acid | 0.324 | -0.378 | - | *** | |
| pH | -0.253 | 0.261 | -0.33 | - | *** |
| alcohol | -0.095 | -0.038 | -0.01 | 0.121 | - |

Pingouin’s rcorr enhances the standard pandas corr() output by adding asterisks to indicate statistical significance: * for p < 0.05, ** for p < 0.01, and *** for p < 0.001. The blank cell for ‘citric acid’ and ‘alcohol’ marks a correlation that is not significant at the 0.05 level. While most correlations are statistically significant, it’s crucial to distinguish statistical significance from practical significance, because with a large sample even very weak correlations earn asterisks. Multicollinearity becomes a serious concern when the absolute value of the correlation coefficient between predictors is high, typically exceeding 0.8 or 0.9. In the provided output, none of the pairwise correlations reach this threshold. For instance, ‘fixed acidity’ and ‘citric acid’ show a correlation of 0.324, and ‘volatile acidity’ and ‘citric acid’ show -0.378. These values, while statistically significant, are not dangerously large in magnitude. This suggests that the five selected features largely provide unique, non-overlapping information, minimizing concerns about severe multicollinearity for models that rely on independent predictor effects. Pairwise correlations cannot reveal collinearity that involves three or more predictors jointly, so a measure such as the variance inflation factor (VIF) is a useful complement. Should high multicollinearity be detected, mitigation strategies include feature selection (removing one of the highly correlated variables), dimensionality reduction (e.g., PCA), or regularization techniques (e.g., Ridge Regression) that can handle correlated predictors.
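A pairwise threshold such as 0.8 can miss collinearity that only appears when several predictors are combined. The following sketch, which is an addition and not part of the original workflow, computes variance inflation factors (VIFs) as the diagonal of the inverse correlation matrix. The helper name `variance_inflation_factors` and the synthetic data are assumptions for illustration.

```python
import numpy as np

def variance_inflation_factors(X):
    """VIF of each column, taken from the diagonal of the inverse correlation matrix."""
    corr = np.corrcoef(np.asarray(X, dtype=float), rowvar=False)
    return np.diag(np.linalg.inv(corr))

rng = np.random.default_rng(5)
n = 1000
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
# x3 is almost exactly x1 + x2, yet it is only moderately correlated with each one alone
x3 = x1 + x2 + 0.1 * rng.normal(size=n)
X = np.column_stack([x1, x2, x3])

corr = np.corrcoef(X, rowvar=False)
max_pairwise = np.abs(corr[np.triu_indices(3, k=1)]).max()
vifs = variance_inflation_factors(X)

print(f"largest pairwise |r|: {max_pairwise:.3f}")
print("VIFs:", np.round(vifs, 1))
```

A VIF above roughly 5 to 10 is a commonly used warning level. In this synthetic case every pairwise correlation stays below 0.8 while the VIFs are far above that range, which is exactly the situation a correlation matrix alone would not flag.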

Building Automated EDA Pipelines: A Strategic Advantage in Data Science

The systematic application of these statistical tests with Pingouin underscores a pivotal shift in modern data science: the move towards automated, rigorous EDA pipelines. Manually performing these checks for every new dataset or model iteration is time-consuming and prone to human error. By integrating Pingouin’s functions into automated workflows, data scientists can ensure consistent data quality checks, enhance reproducibility, and significantly boost efficiency.

Automated EDA pipelines offer several strategic advantages:

- Consistency and Reproducibility: Ensures that the same set of statistical validations is applied uniformly across all projects, leading to consistent data quality standards.

- Efficiency: Reduces the manual effort and time spent on data validation, allowing data scientists to focus on more complex modeling and interpretation tasks.

- Scalability: Easily adaptable to large datasets and complex data landscapes, making it feasible to perform comprehensive checks on big data.

- Proactive Issue Detection: Identifies potential data issues early in the project lifecycle, preventing them from propagating into flawed models and misleading insights.

- Integration with MLOps: Automated EDA can be seamlessly integrated into MLOps (Machine Learning Operations) pipelines, serving as a critical gatekeeping step before model training, deployment, and monitoring.

The ability to quickly identify and understand data characteristics—whether it’s non-normality, heteroscedasticity, or moderate multicollinearity—empowers data scientists to make informed decisions about data preprocessing, feature engineering, and ultimately, the selection of the most appropriate machine learning algorithms. This proactive approach minimizes the risk of deploying models built on shaky statistical foundations, thereby improving the reliability and trustworthiness of AI solutions.

Broader Impact and Implications for AI Success

The journey through the wine dataset, despite revealing numerous statistical violations, serves as a powerful testament to the value of rigorous EDA. The objective was not to find a "perfect" dataset, but to illustrate how to systematically identify potential data issues and understand their implications. Detecting these challenges—and knowing how to address them—before committing to a downstream machine learning analysis is infinitely more valuable than building a potentially flawed model and discovering its weaknesses much later.

The implications of adopting such robust EDA practices extend far beyond individual projects:

- For Businesses: Leads to more reliable AI-driven insights, better strategic decision-making, reduced operational risks associated with faulty models, and an improved return on investment (ROI) from AI initiatives.

- For Data Scientists: Enhances professional confidence in model outputs, streamlines workflows, and fosters a deeper understanding of data nuances, elevating their skill set.

- The Future of Data Science: Contributes to a growing trend towards greater transparency, accountability, and statistical rigor in the development and deployment of AI systems, addressing critical concerns in areas like explainable AI (XAI) and ethical AI.

Pingouin, as an open-source Python library, democratizes access to advanced statistical testing, enabling data professionals to move beyond superficial data exploration. Through its intuitive API and comprehensive suite of functions, it facilitates the construction of modern EDA pipelines that are not only efficient but also statistically sound. By systematically validating data properties, data scientists can make more informed choices regarding data transformations, model selection, and ultimately, build machine learning models that are robust, reliable, and truly reflective of the underlying data. This commitment to data quality at the earliest stages of a project is a non-negotiable prerequisite for achieving sustained success in the rapidly evolving landscape of artificial intelligence and machine learning.

Iván Palomares Carrascosa is a distinguished leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He is dedicated to training and guiding others in effectively harnessing AI in real-world applications.
