Patient engagement with personal health data is being transformed by rapid advances in artificial intelligence (AI). A growing number of individuals are bypassing traditional medical consultations and instead feeding their diagnostic lab results into AI-powered tools for interpretation. This shift reflects a broader movement toward patient autonomy and self-management in healthcare, but it also introduces a complex array of challenges and opportunities for clinical laboratories, physicians, and regulatory bodies alike. The central dilemma is that these AI interpretations are unvalidated, which raises serious questions about accuracy, patient safety, and the fundamental role of expert medical guidance.

The Genesis of Patient-Driven Interpretation: A Shift in Healthcare Dynamics

The emergence of AI as a personal health interpreter is not an isolated phenomenon but rather the latest evolution in a decades-long trajectory towards greater patient access and control over health information. Historically, medical test results were the exclusive domain of physicians, who acted as gatekeepers and primary interpreters. However, the advent of the internet, followed by patient portals and direct-to-consumer (DTC) lab testing services, began to democratize access to health data. Services allowing individuals to order their own blood tests or genetic screens, such as those that gained prominence in the 2010s, paved the way for a more proactive patient base eager to understand their biomarkers without necessarily going through a doctor’s visit first.

This evolving dynamic created a demand for simplified, understandable explanations of complex medical jargon. Lab reports, often filled with technical terms, reference ranges, and abbreviations, can be daunting for the average person. Recognizing this gap, a new ecosystem of AI-driven startups and wellness companies has rapidly emerged. These entities are capitalizing on the inherent complexity of diagnostic data and the perceived lack of time physicians have for detailed explanations. Many now offer subscription-based services, often priced from a few dollars to hundreds of dollars annually, that promise to translate intricate lab data into digestible summaries, highlight potential health concerns, and even suggest "next steps" or lifestyle modifications. As reported by Mashable, this growing market marks a significant shift in how consumers interact with their diagnostic information, with AI interpretation frequently preceding any professional medical consultation.

The Unsettling Reality: Unvalidated AI and the Accuracy Crisis

Despite the allure of instant, personalized insights, the core issue plaguing AI-driven lab interpretation tools is a critical lack of rigorous clinical validation. Unlike medical devices or pharmaceuticals, which undergo extensive testing and regulatory review before reaching the market, most AI models currently offered for lab result interpretation have not been specifically benchmarked against gold-standard clinical outcomes. This absence of validation means there is no standardized framework to measure their accuracy at scale, nor robust, peer-reviewed data to confirm their reliability.

Early evidence and expert consensus point to significant concerns regarding the accuracy of these tools. AI models, particularly large language models (LLMs) adapted for health interpretation, may misinterpret biomarkers, overlook critical findings in a panel of results, or generate recommendations that are not clinically sound. For instance, an AI might flag a subtle elevation in a liver enzyme as a serious issue when, in the context of other normal markers and a patient’s medical history, it could be clinically insignificant or transient. Conversely, an AI might miss a pattern of several slightly abnormal markers that, when considered together by a human expert, indicate an emerging health problem.

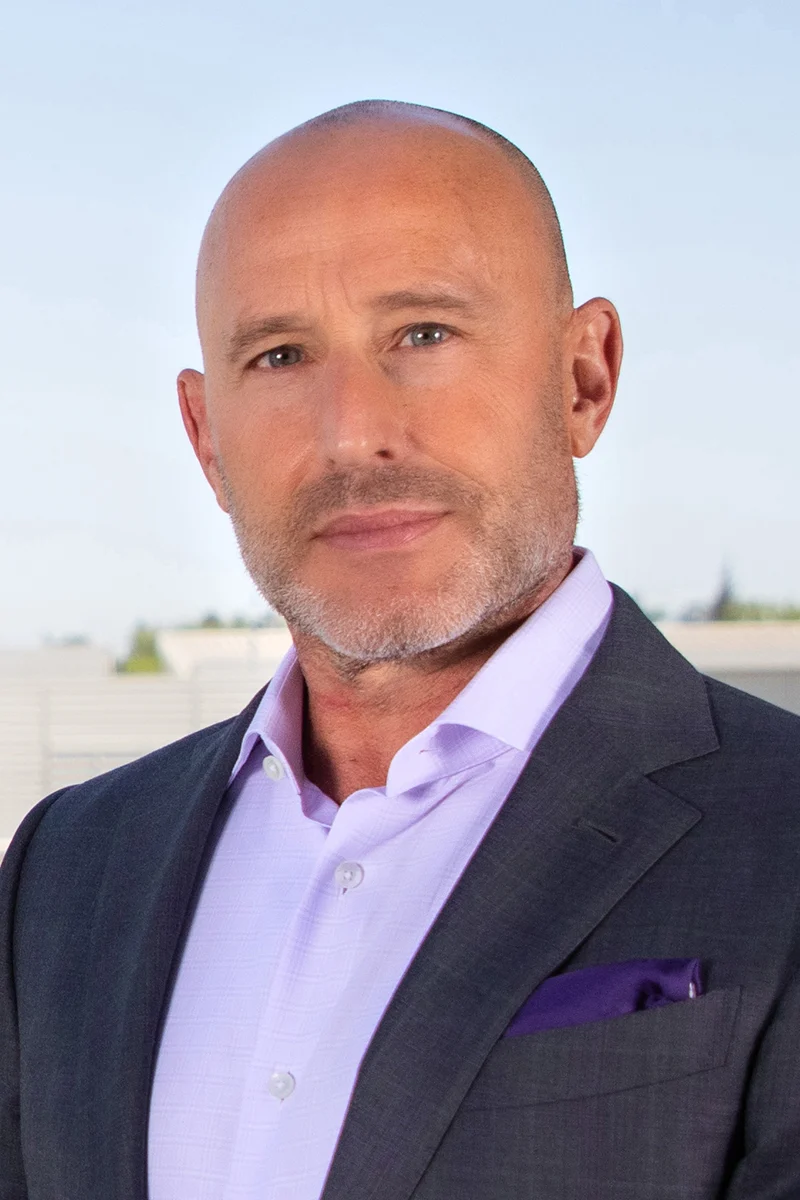

John Whyte, MD, MPH, CEO of the American Medical Association (AMA), has voiced strong skepticism regarding the reliability of these AI tools. "Physicians are [not always] the best communicators," Whyte candidly admitted, acknowledging the time constraints and communication challenges often faced by medical professionals. "I wish we were, and [that we] had more time." However, he emphasized that there is currently "no strong clinical evidence showing AI can reliably interpret blood test results or generate accurate, personalized health recommendations." Without such evidence, it is difficult to ascertain whether these paid AI services offer any demonstrable advantage over free chatbots or, more importantly, over the nuanced, context-aware guidance of a trained physician. "I think you have to be skeptical about some of the claims," Whyte cautioned, underscoring the potential for misleading or even harmful advice.

The complexity of laboratory results often extends beyond simple numerical values. Factors such as patient demographics, existing medical conditions, medications, lifestyle, and even the time of day a sample was taken can significantly influence results. Human clinicians are trained to integrate these multifactorial elements into a holistic interpretation, a capability that current AI models struggle to replicate reliably without extensive, context-rich training data and sophisticated reasoning capabilities. Errors, therefore, are more likely to occur in complex or ambiguous cases, potentially leading to unnecessary anxiety, redundant or inappropriate follow-up testing, delayed diagnoses, or even misdiagnosis.

The Regulatory Vacuum: A Challenge for Oversight

Adding another layer of complexity is the significant regulatory gap surrounding these AI tools. The rapid pace of AI development has largely outstripped the ability of regulatory bodies, such as the Food and Drug Administration (FDA) in the United States, to establish clear guidelines and oversight. Generally, the FDA considers any software that provides interpretation leading to a diagnosis or informs clinical management decisions to be a medical device. This classification would typically necessitate rigorous pre-market review, including evidence of safety and effectiveness, before the product could be legally marketed.

However, many AI tools for lab interpretation are marketed as "wellness aids" or "information providers" rather than diagnostic tools, often with disclaimers that they do not provide medical advice. This strategic positioning allows developers to circumvent the stringent regulatory pathways designed for medical devices. Consumers, often unaware of the nuances of FDA oversight or the implications of such disclaimers, may implicitly trust these tools as authoritative medical sources. The lack of clear regulatory frameworks creates a hazardous environment where unvalidated tools can proliferate, potentially endangering public health. The challenge for regulators is to develop agile frameworks, for example by adapting the "Software as a Medical Device" (SaMD) guidance, that can effectively evaluate AI products without stifling innovation while keeping patient safety paramount.

A Fragmented Market: Pricing, Value, and Opportunity

The market for AI-driven lab result interpretation is currently characterized by its fragmentation and a wide spectrum of pricing models, reflecting both the commercial opportunity and the inherent uncertainty regarding true clinical value. At the more accessible end, some platforms offer freemium models, providing basic explanations for free while charging a few dollars per month (typically $4-$8) for more advanced insights, personalized dashboards, or trend analysis. These services often position themselves as tools for "health literacy" rather than medical diagnosis.

Moving up the pricing scale, wellness-focused companies frequently bundle AI interpretation with direct lab testing services and, in some cases, a clinician review. These comprehensive packages can command significantly higher prices, often starting at $199 per individual test or upwards of $500 annually for ongoing biomarker tracking and interpretation. The value proposition here often includes a perceived "concierge" experience and more integrated health management.

For clinical laboratories and enterprise solutions, the pricing model typically shifts to pay-per-report or per-biomarker, where costs might be mere cents per analyte but scale significantly with volume. This B2B model often focuses on leveraging AI to streamline internal processes, enhance efficiency, or provide supplementary information to clinicians, rather than direct patient interpretation.

This wide pricing spectrum underscores a critical disconnect: the cost of an AI interpretation service does not yet clearly correlate with validated clinical performance or proven patient outcomes. Consumers are left to navigate a market where perceived value, ease of use, and marketing claims often overshadow evidence-based efficacy. For clinical laboratories, this presents a dual challenge and opportunity. On one hand, it highlights a potential revenue stream and a way to enhance patient engagement. On the other, it necessitates careful consideration of how to integrate AI responsibly, ensuring that commercial interests do not compromise the integrity of diagnostic information or patient safety.

Implications for Clinical Laboratories: Adapting to a New Paradigm

The rise of AI-driven result interpretation presents clinical laboratories with both immediate challenges and long-term strategic imperatives. Labs, traditionally focused on the accurate and timely generation of results, are now compelled to adapt their roles to a more patient-centric environment.

One crucial implication is the intensified need for clearer, more patient-friendly reporting. If patients are accessing their results independently, the language used in lab reports must evolve beyond technical jargon. Labs may need to invest in digital platforms that offer simplified explanations, visual aids, and context-sensitive information alongside the raw data. This could involve developing in-house AI tools to generate patient-facing summaries or partnering with validated third-party platforms.

Improved patient communication strategies are also paramount. Labs cannot simply provide data; they must empower patients to understand it correctly. This might involve offering easily accessible educational resources, FAQs, or even direct lines of communication for general inquiries about results (while carefully avoiding providing medical advice). The objective is to enhance health literacy and guide patients toward appropriate medical follow-up when necessary, rather than allowing them to rely solely on unvalidated AI.

The development and integration of more accessible digital tools within the laboratory ecosystem become essential. This could include secure patient portals with enhanced functionalities, or even AI-powered internal tools designed to assist lab professionals in identifying critical results more efficiently or flagging potential areas of concern for physicians. The goal should be to augment, not replace, human expertise.

Furthermore, clinical laboratories hold a critical responsibility in advocating for and participating in the validation of AI tools. As the custodians of diagnostic accuracy, labs are uniquely positioned to collaborate with AI developers, clinicians, and regulatory bodies to establish robust validation frameworks and contribute real-world data for benchmarking AI performance. This proactive engagement is vital to ensure that AI applications meet the same rigorous standards applied to other medical technologies.

The shift towards patient-driven data interpretation also underscores the imperative for laboratories to reinforce their fundamental role: ensuring accuracy, providing clinical context, and facilitating the appropriate use of diagnostic information. While AI may enhance patient engagement and initial understanding, laboratories remain the bedrock of reliable diagnostics. They must continue to emphasize that AI interpretations are supplementary, never a replacement for a qualified medical professional who can integrate lab results with a comprehensive understanding of the patient’s medical history, physical examination, and other clinical factors.

The Physician’s Evolving Role: Context, Time, and Trust

The physician’s role in this new landscape is also being redefined. Dr. Whyte’s acknowledgment of physician communication challenges highlights a core reason patients seek AI alternatives: the desire for more time and clearer explanations than a typical office visit might afford. However, the physician brings an irreplaceable element to the interpretation of lab results: context. A human doctor can integrate seemingly disparate pieces of information, including a patient’s symptoms, lifestyle, family history, medication list, and even emotional state, to form a comprehensive diagnostic picture. This holistic approach is currently beyond the capabilities of even the most advanced AI.

Physicians are likely to find themselves needing to address AI-generated interpretations brought to them by patients, which could add a layer of complexity to consultations. This necessitates that medical professionals remain informed about the capabilities and limitations of these AI tools. There is an opportunity for AI to serve as a supportive tool for physicians, perhaps by pre-analyzing complex data sets, identifying potential trends, or generating patient-friendly summaries that physicians can then review and personalize. The key lies in leveraging AI to augment clinical practice, not to replace the critical thinking and empathetic communication that define quality medical care. Building and maintaining patient trust in the face of readily available AI "answers" will be a central challenge.

Looking Ahead: The Future of Health Literacy and Responsible AI Integration

The phenomenon of patients turning to AI for lab result interpretation is more than a fleeting trend; it signifies a permanent shift in how individuals interact with their health information. The path forward requires a multi-pronged approach involving all stakeholders.

For AI developers, the onus is on pursuing rigorous clinical validation and transparency regarding the limitations of their tools. Clear disclaimers and a commitment to evidence-based efficacy, rather than just market capture, will be essential for long-term credibility.

For regulatory bodies, developing agile and comprehensive frameworks that can keep pace with technological innovation while safeguarding public health is an urgent priority. This includes clarifying the classification of AI health tools and enforcing appropriate standards.

For clinical laboratories, the challenge is to embrace innovation while upholding their core mission of accuracy and clinical relevance. This means investing in patient-centric reporting, enhancing communication, and actively participating in the validation and responsible deployment of AI in diagnostics.

Ultimately, the goal must be to strike a delicate balance between empowering patients with information and ensuring that this information is accurate, contextually relevant, and leads to appropriate healthcare decisions. AI has the potential to significantly enhance health literacy and patient engagement, but only if its application is guided by stringent scientific validation, robust ethical considerations, and a collaborative effort from the entire healthcare ecosystem. The journey toward fully integrating AI into diagnostic interpretation is still in its early stages, marked by both immense promise and considerable peril, demanding careful navigation to protect the integrity of patient care.

—Janette Wider
