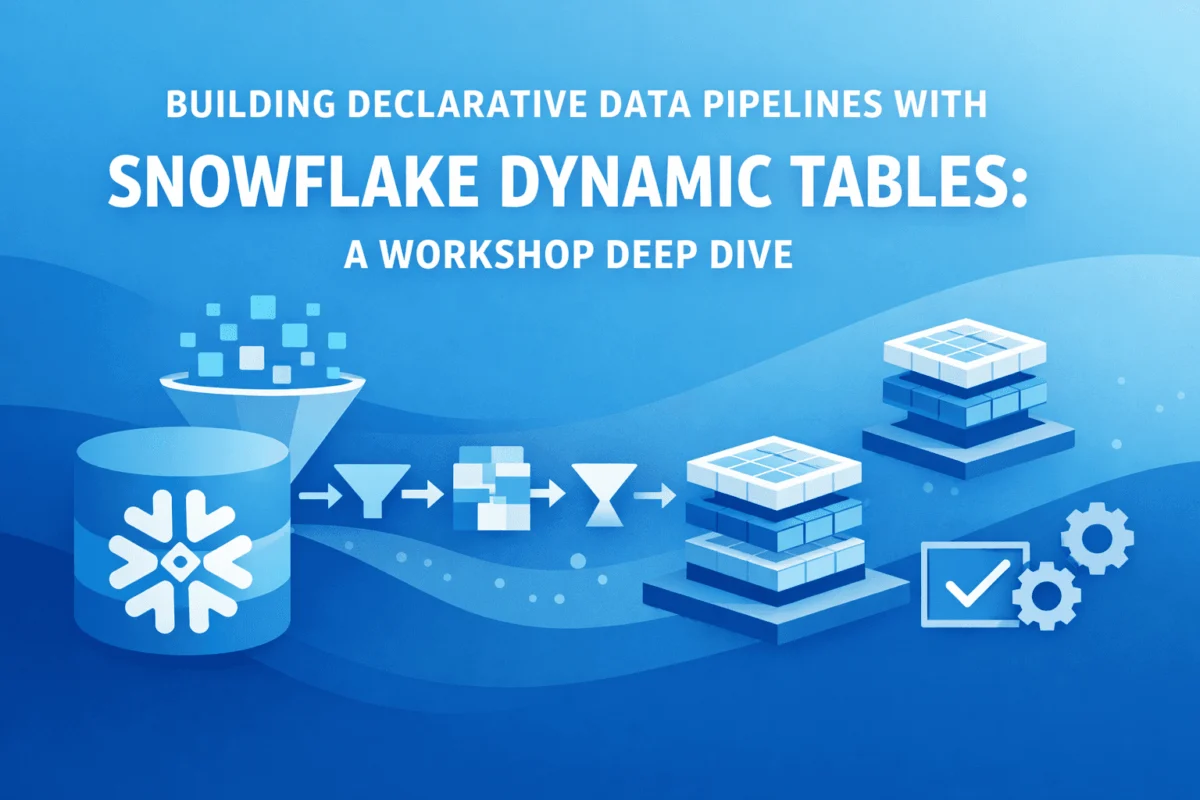

Data engineering is shifting toward declarative programming as a way to simplify data infrastructure. A recent hands-on workshop hosted by Snowflake made the case in practice, walking participants through building declarative data pipelines with Snowflake’s Dynamic Tables. It showed how modern data platforms simplify complex Extract, Transform, Load (ETL) workflows, and it drew a broad mix of data practitioners, from students to seasoned engineers, who wanted to understand what declarative approaches mean for their data transformation processes.

The Evolution of Data Engineering and the Declarative Promise

Traditionally, data pipelines have been developed in an imperative, procedural style in which every step of data transformation and movement between stages must be coded explicitly. The process is verbose, error-prone, and costly to maintain. Data engineers often find themselves writing intricate orchestration scripts, managing dependencies manually, and debugging complex refresh schedules across disparate tools. This imperative style works, but it often diverts engineering talent from higher-value tasks such as data modeling, quality assurance, and business logic implementation.

The declarative paradigm offers an alternative. Instead of dictating how data transformations should occur, data engineers specify what the desired end state of the data should be. This abstraction layer is where Dynamic Tables in Snowflake shine. They encapsulate and automate refresh logic, dependency tracking, and incremental updates, tasks that would otherwise consume many hours of manual coding and debugging. The shift reduces the cognitive load on developers, allowing them to focus on the logical integrity and business value of their data, and it shrinks the surface area for the bugs that frequently plague traditional ETL implementations. The growing adoption of cloud-native data warehouses, a market projected to exceed $100 billion in value by the end of the decade, provides fertile ground for such innovations as enterprises seek more agile and scalable data solutions.

A Structured Journey: Mapping the Workshop Architecture and Learning Path

The Snowflake workshop was designed as a progressive learning journey, guiding participants from foundational setup through pipeline monitoring, data quality checks, and AI features over seven modules. Each module built on the preceding one, mirroring the logical progression of real-world data pipeline development, so that learners understood not only individual concepts but also how they connect within a larger data ecosystem.

Establishing the Data Foundation: Module 1

The initial phase of the workshop focused on establishing a robust data foundation within the Snowflake environment. Participants began by provisioning a Snowflake trial account, a quick and accessible entry point to the platform. Following this, a precisely crafted setup script was executed to create the necessary infrastructural components. This included the setup of two distinct virtual warehouses: one designated for handling raw, ingested data, and another optimized for analytical workloads, ensuring efficient resource allocation and cost management.

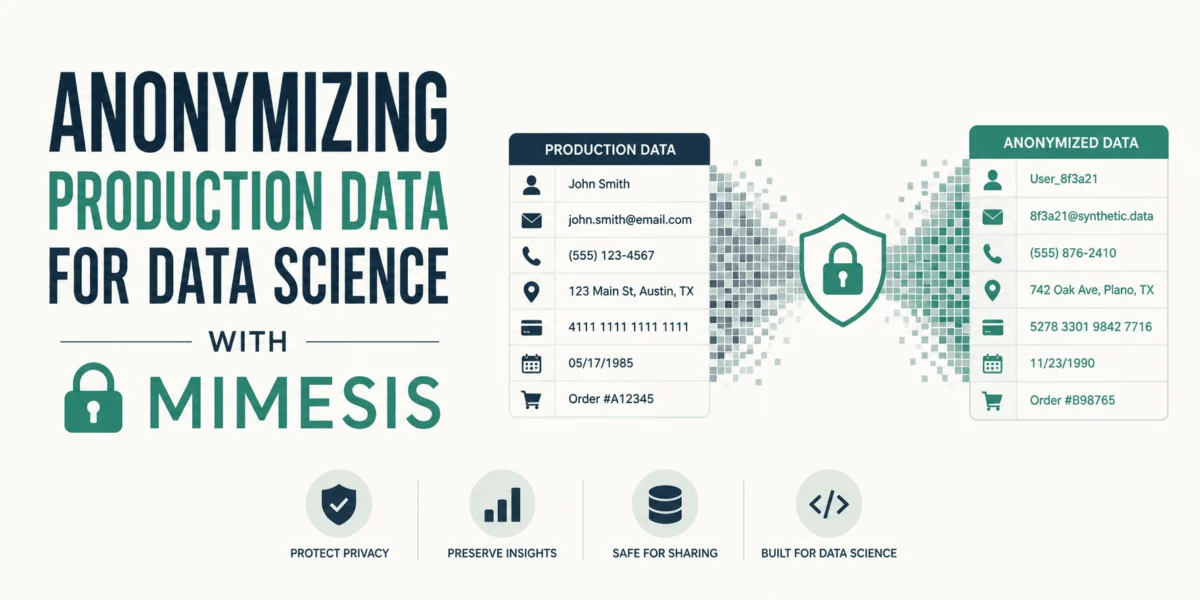

Crucially, the workshop eliminated the common hurdle of sourcing and preparing external datasets by leveraging Snowflake’s extensibility. Participants utilized Python User-Defined Table Functions (UDTFs) in conjunction with the popular Faker library to generate realistic, synthetic datasets. This ingenious approach provided 1,000 customer records complete with spending limits, 100 product records detailing stock levels, and a substantial volume of 10,000 order transactions spanning the previous 10 days. The use of Python UDTFs showcased Snowflake’s robust capabilities for integrating external programming logic directly within the data platform, allowing participants to concentrate entirely on pipeline mechanics without being sidetracked by data acquisition and initial preparation. The deliberately chosen data volumes were large enough to simulate real-world processing characteristics and refresh behaviors, yet sufficiently small to allow for rapid completion of hands-on exercises, thus optimizing the learning curve.
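The generator pattern described above can be sketched in plain Python. This is a minimal sketch, not the workshop's setup script: the class names, columns, and value ranges are hypothetical, and the standard-library random module stands in for Faker so the block runs without extra packages. The handler shape, a class whose process method yields one tuple per output row, follows the usual convention for Python UDTF handlers.

```python
import random
from datetime import date, timedelta


class CustomerGenerator:
    """Hypothetical UDTF-style handler: process() yields one tuple per row."""

    def process(self, num_rows: int, seed: int = 42):
        rng = random.Random(seed)  # seeded so repeated runs produce the same rows
        for customer_id in range(1, num_rows + 1):
            # Faker would supply a realistic name here; this is a placeholder.
            name = f"Customer {customer_id:04d}"
            spending_limit = round(rng.uniform(500, 10_000), 2)
            yield (customer_id, name, spending_limit)


class OrderGenerator:
    """Hypothetical handler producing orders spread over the previous N days."""

    def process(self, num_rows: int, num_customers: int, num_products: int,
                days_back: int = 10, seed: int = 7):
        rng = random.Random(seed)
        today = date.today()
        for order_id in range(1, num_rows + 1):
            order_date = today - timedelta(days=rng.randint(1, days_back))
            yield (
                order_id,
                rng.randint(1, num_customers),  # customer_id
                rng.randint(1, num_products),   # product_id
                rng.randint(1, 5),              # quantity
                order_date.isoformat(),
            )


# Volumes taken from the workshop description: 1,000 customers, 10,000 orders.
customers = list(CustomerGenerator().process(1_000))
orders = list(OrderGenerator().process(10_000, num_customers=1_000, num_products=100))
```

Seeding the generator keeps the synthetic data reproducible, which makes it easier to compare results between runs of the same exercise.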

Creating the First Dynamic Tables: Module 2

Module two plunged participants into the core concept of Dynamic Tables. This hands-on segment involved the creation of staging tables, which are critical intermediate steps in any robust data pipeline. Participants learned to transform raw customer data by systematically renaming columns and casting data types using standard SQL SELECT statements, all seamlessly encapsulated within Dynamic Table definitions. A key parameter introduced here was target_lag=downstream, a powerful feature demonstrating automatic refresh coordination. This intelligent mechanism ensures that tables refresh only when required by their dependent downstream tables, effectively eliminating the need for complex, rigid scheduling logic typically managed by external orchestration tools.
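One way to build intuition for the downstream setting is a toy model in which a table without its own time-based lag inherits the tightest lag among the tables that consume it. The sketch below is a simplified illustration under that assumption, not Snowflake's scheduler; the table names and the PIPELINE structure are hypothetical.

```python
from typing import Optional

# Hypothetical pipeline: table -> (target lag, consumers of this table).
# A lag is either a number of minutes or the string "DOWNSTREAM".
PIPELINE = {
    "stg_customers": ("DOWNSTREAM", ["fct_customer_orders"]),
    "stg_orders": ("DOWNSTREAM", ["fct_customer_orders"]),
    "fct_customer_orders": (5, []),
}


def effective_lag(table: str, pipeline: dict) -> Optional[int]:
    """Return the lag in minutes that a table must meet in this toy model.

    A table with an explicit lag uses it directly. A DOWNSTREAM table takes
    the smallest effective lag among its consumers, or None when nothing
    downstream has a time-based lag (so nothing drives a refresh).
    """
    lag, consumers = pipeline[table]
    if lag != "DOWNSTREAM":
        return lag
    consumer_lags = [effective_lag(c, pipeline) for c in consumers]
    consumer_lags = [l for l in consumer_lags if l is not None]
    return min(consumer_lags) if consumer_lags else None


for name in PIPELINE:
    print(name, effective_lag(name, PIPELINE))
```

In this model the staging tables never need their own schedule: tightening or relaxing the lag on the final table changes how fresh everything upstream has to be.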

The module further explored the practical handling of semi-structured data, a common challenge in modern data environments. For the orders table, participants gained expertise in parsing nested JSON structures, leveraging Snowflake’s native VARIANT data type and intuitive path notation. This practical exercise illustrated how Dynamic Tables can declaratively manage semi-structured data transformations, extracting crucial elements like product IDs, quantities, prices, and dates from complex JSON purchase objects and flattening them into a tabular format. The ability to perform such sophisticated transformations within the same declarative framework that handles traditional relational data proved particularly valuable for participants accustomed to working with modern API-driven data sources.
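The flattening step can be illustrated outside Snowflake with the standard json module. This is a sketch of the idea only: the field names (purchase, product_id, quantity, price, purchase_date) are assumptions chosen to match the elements named above, not the workshop's actual schema.

```python
import json
from datetime import date

# Hypothetical raw row: the purchase details arrive as a JSON string.
raw_orders = [
    {
        "customer_id": "17",
        "purchase": json.dumps({
            "product_id": 42,
            "quantity": "3",
            "price": "19.99",
            "purchase_date": "2024-05-01",
        }),
    },
]


def flatten_order(row: dict) -> dict:
    """Parse the nested purchase object and emit one flat, typed record."""
    purchase = json.loads(row["purchase"])
    return {
        "customer_id": int(row["customer_id"]),
        "product_id": purchase.get("product_id"),  # may be missing, so None
        "quantity": int(purchase["quantity"]),
        "price": float(purchase["price"]),
        "purchase_date": date.fromisoformat(purchase["purchase_date"]),
    }


flat_orders = [flatten_order(row) for row in raw_orders]
print(flat_orders[0])
```

Each output record has one typed column per extracted element, which is the tabular shape the staging table exposes to later stages.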

Chaining Tables to Build a Data Pipeline: Module 3

Elevating the complexity, Module three demonstrated the fundamental principle of table chaining, a cornerstone of multi-stage data pipelines. Participants progressed to creating a fact table, a central component in dimensional modeling, which judiciously joined the two staging Dynamic Tables previously established. This fact table for customer orders effectively combined granular customer information with their historical purchase data through a left join operation. The resulting schema adhered to established dimensional modeling principles, creating a highly optimized structure suitable for high-performance analytical queries and seamless integration with Business Intelligence (BI) tools.
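For readers less familiar with join semantics, the sketch below shows what a left join from customers to orders produces: every customer appears at least once, and customers without orders get empty order columns. The column names and sample rows are hypothetical.

```python
def left_join(customers, orders, order_columns=("product_id", "quantity")):
    """Left-join customers to orders on customer_id."""
    # Index orders by customer so each customer lookup is a dictionary access.
    orders_by_customer = {}
    for order in orders:
        orders_by_customer.setdefault(order["customer_id"], []).append(order)

    joined = []
    for customer in customers:
        matches = orders_by_customer.get(customer["customer_id"], [])
        if not matches:
            # No orders: keep the customer and fill order columns with None.
            joined.append({**customer, **{col: None for col in order_columns}})
        for order in matches:
            joined.append({**customer, **{col: order[col] for col in order_columns}})
    return joined


customers = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Grace"},
]
orders = [
    {"customer_id": 1, "product_id": 42, "quantity": 3},
    {"customer_id": 1, "product_id": 7, "quantity": 1},
]

fact_rows = left_join(customers, orders)
for row in fact_rows:
    print(row)
```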

It was in this module that the power of the declarative nature became profoundly evident. In contrast to traditional methods that would necessitate writing complex orchestration code to guarantee the staging tables refresh before the fact table, the Dynamic Table framework autonomously managed these intricate dependencies. When source data changes, Snowflake’s intelligent optimizer automatically determines the optimal refresh sequence and executes it with precision, entirely without manual intervention. Participants immediately grasped the immense value proposition: multi-table pipelines that would traditionally demand dozens, if not hundreds, of lines of complex orchestration code were instead elegantly defined purely through declarative SQL table definitions, significantly reducing development time and enhancing maintainability.
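The ordering problem that the platform takes off the engineer's hands is a topological sort of the dependency graph. The following sketch uses Python's graphlib (3.9+) to derive a valid refresh order; the table names are hypothetical, and this illustrates the general technique rather than how Snowflake implements it.

```python
from graphlib import TopologicalSorter

# Hypothetical dependencies: table -> set of tables it reads from.
DEPENDENCIES = {
    "stg_customers": {"raw_customers"},
    "stg_orders": {"raw_orders"},
    "fct_customer_orders": {"stg_customers", "stg_orders"},
}


def refresh_order(dependencies: dict) -> list:
    """Return the tables in an order where every table follows its inputs."""
    return list(TopologicalSorter(dependencies).static_order())


order = refresh_order(DEPENDENCIES)
print(order)
```

In an imperative pipeline this ordering is typically hand-maintained in an orchestrator; in the declarative version it falls out of the SELECT statements, because each table definition already names its inputs.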

Visualizing Data Lineage: Module 4

A standout feature of the workshop was the seamless, built-in data lineage visualization. By navigating to the intuitive Catalog interface and selecting the ‘Graph’ view for their newly created fact table, participants were presented with a clear, visual representation of their entire data pipeline as a directed acyclic graph (DAG). This powerful visualization transparently depicted the flow of data from raw source tables, through the intermediate staging Dynamic Tables, all the way to the final fact table. This provided immediate and profound insight into data dependencies and the various transformation layers applied. The automatic generation of such comprehensive lineage documentation directly addressed a chronic pain point in traditional data pipelines, where lineage often required separate, costly tools or manual documentation that quickly became outdated and unreliable, leading to governance and debugging challenges.

Managing Advanced Pipelines

Monitoring and Tuning Performance: Module 5

Module five shifted focus to the crucial operational aspects of managing production-grade data pipelines. Participants learned to query the information_schema.dynamic_table_refresh_history() function, a vital resource for inspecting refresh execution times, quantifying data change volumes, and identifying potential errors. This rich metadata provides the essential observability required for robust production pipeline management. The ability to query refresh history using standard SQL meant that participants could readily integrate monitoring data into existing dashboards and alerting systems, bypassing the need to learn new, proprietary monitoring tools.
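Once refresh history rows have been fetched, summarizing them for a dashboard or alert is straightforward. The sketch below works on hard-coded sample records; the field names (state, refresh_start_time, refresh_end_time, rows_changed) are assumptions loosely modeled on the kind of metadata described above, not the exact output columns of the function.

```python
from datetime import datetime
from statistics import mean

# Hypothetical refresh history records for one dynamic table.
history = [
    {"state": "SUCCEEDED",
     "refresh_start_time": datetime(2024, 5, 1, 12, 0, 0),
     "refresh_end_time": datetime(2024, 5, 1, 12, 0, 8),
     "rows_changed": 120},
    {"state": "SUCCEEDED",
     "refresh_start_time": datetime(2024, 5, 1, 12, 5, 0),
     "refresh_end_time": datetime(2024, 5, 1, 12, 5, 12),
     "rows_changed": 80},
    {"state": "FAILED",
     "refresh_start_time": datetime(2024, 5, 1, 12, 10, 0),
     "refresh_end_time": datetime(2024, 5, 1, 12, 10, 1),
     "rows_changed": 0},
]


def summarize(rows: list) -> dict:
    """Aggregate refresh counts, failures, durations, and change volume."""
    succeeded = [r for r in rows if r["state"] == "SUCCEEDED"]
    durations = [
        (r["refresh_end_time"] - r["refresh_start_time"]).total_seconds()
        for r in succeeded
    ]
    return {
        "refreshes": len(rows),
        "failed": sum(1 for r in rows if r["state"] == "FAILED"),
        "avg_seconds": mean(durations) if durations else None,
        "rows_changed": sum(r["rows_changed"] for r in succeeded),
    }


summary = summarize(history)
print(summary)
```

A non-zero failed count or a rising average duration are the kinds of signals a team might wire into existing alerting.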

The workshop also covered freshness tuning by altering the target_lag parameter. Starting from the ‘downstream’ setting used in the earlier modules, participants switched to a specific time interval, such as ‘5 minutes’. This lets data engineers balance data freshness requirements against computational costs: a shorter lag keeps data fresher but triggers refreshes more often, which consumes more compute. Participants experimented with different lag settings, observing firsthand how the system responded and gaining intuition about the tradeoffs between near-real-time data availability and resource consumption, a common challenge in large-scale data operations.

Implementing Data Quality Checks: Module 6

The integration of data quality checks represented a crucial pattern for building production-ready pipelines. Participants modified their fact table definition to incorporate filtering logic, specifically a WHERE clause designed to exclude records with null product IDs. This approach demonstrated declarative quality enforcement, ensuring that only valid orders propagated through the pipeline. The filtering logic was automatically applied during each refresh cycle, guaranteeing data integrity. The workshop emphasized that embedding quality rules directly within table definitions makes them an intrinsic part of the pipeline’s contract, rendering data validation transparent, auditable, and inherently maintainable, a significant improvement over external, disconnected validation scripts.
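The rule itself is a simple predicate applied to every row on each refresh. A minimal Python equivalent, with hypothetical column names and sample rows, might look like this:

```python
def split_by_quality(rows: list) -> tuple:
    """Separate rows with a product_id from rows where it is missing or None."""
    valid = [r for r in rows if r.get("product_id") is not None]
    rejected = [r for r in rows if r.get("product_id") is None]
    return valid, rejected


# Hypothetical fact rows; the second one has no product attached.
fact_rows = [
    {"customer_id": 1, "product_id": 42, "quantity": 3},
    {"customer_id": 2, "product_id": None, "quantity": 1},
    {"customer_id": 3, "product_id": 7, "quantity": 2},
]

valid_rows, rejected_rows = split_by_quality(fact_rows)
print(len(valid_rows), "valid,", len(rejected_rows), "rejected")
```

One consequence worth keeping in mind: when such a filter is applied to the result of a left join, customers with no orders are excluded as well, because their product ID is null after the join.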

Extending with Artificial Intelligence Capabilities: Module 7

Module seven broadened the scope by introducing Snowflake Intelligence and Cortex capabilities, showcasing the seamless integration of artificial intelligence (AI) features directly within data engineering workflows. Participants explored the Cortex Playground, connecting it to their meticulously engineered orders table and enabling natural language queries against their rich purchase data. This powerful demonstration highlighted the accelerating convergence of data engineering and AI, where well-structured, clean data pipelines become immediately accessible and queryable through intuitive conversational interfaces. The effortless integration between engineered data assets and cutting-edge AI tools illustrated how modern data platforms are effectively dismantling the traditional barriers between data preparation and advanced analytical consumption, paving the way for data-driven insights to be democratized across an organization.

Validating and Certifying Skills

The culmination of the workshop was an innovative autograding system. This automated verification mechanism meticulously validated each participant’s implementation, ensuring that learners had successfully completed all pipeline components and met the specified requirements. Successful completion led to the earning of a Snowflake badge, providing tangible and verifiable recognition of their newly acquired skills. The autograder checked for correct table structures, accurate transformations, and appropriate configuration settings, instilling confidence in participants that their implementations adhered to professional industry standards and best practices.

Key Takeaways for Data Engineering Practitioners

The Snowflake workshop underscored several critical patterns and advantages for modern data engineering:

- Declarative Simplicity: The fundamental shift from imperative "how" to declarative "what" dramatically simplifies pipeline development, allowing engineers to focus on business logic rather than orchestration minutiae.

- Automated Dependency Management: Dynamic Tables eliminate the need for manual dependency tracking and complex scheduling, significantly reducing human error and maintenance overhead.

- Built-in Incremental Processing: Where a table’s query supports incremental refresh, the platform processes only changed data rather than recomputing the full result, which reduces compute usage and shortens refresh cycles.

- Integrated Observability and Lineage: Native tools for monitoring refresh history and visualizing data lineage provide immediate insights, enhancing debugging, governance, and compliance efforts.

- Scalability and Performance: Leveraging Snowflake’s cloud-native architecture, Dynamic Tables inherently scale to handle growing data volumes and complex transformations without manual intervention.

- Enhanced Data Quality: The ability to embed data quality rules directly within table definitions ensures consistent enforcement and higher data integrity throughout the pipeline.

- AI-Ready Data Assets: Structured pipelines become immediate assets for AI/ML initiatives, enabling faster time-to-insight through integrated intelligence features like Snowflake Cortex.
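The incremental processing takeaway in the list above can be made concrete with a small sketch. It maintains a per-customer spending total and compares a full recomputation against an update that touches only newly arrived orders. The data is hypothetical, and the sketch handles appended rows only; updates and deletes would need additional bookkeeping.

```python
from collections import defaultdict


def full_refresh(orders: list) -> dict:
    """Recompute every customer's total from all orders."""
    totals = defaultdict(float)
    for o in orders:
        totals[o["customer_id"]] += o["quantity"] * o["price"]
    return dict(totals)


def incremental_refresh(totals: dict, new_orders: list) -> dict:
    """Update existing totals using only the orders added since last refresh."""
    updated = dict(totals)
    for o in new_orders:
        updated[o["customer_id"]] = (
            updated.get(o["customer_id"], 0.0) + o["quantity"] * o["price"]
        )
    return updated


existing = [
    {"customer_id": 1, "quantity": 2, "price": 10.0},
    {"customer_id": 2, "quantity": 1, "price": 2.5},
]
arrived = [
    {"customer_id": 1, "quantity": 1, "price": 10.0},
    {"customer_id": 3, "quantity": 4, "price": 2.5},
]

totals = full_refresh(existing)
totals_after = incremental_refresh(totals, arrived)
print(totals_after)
```

The incremental path reads two rows instead of four here; with large tables and small change sets, that difference is where the compute savings come from.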

Evaluating Broader Implications for the Data Ecosystem

This workshop model and the underlying technology it showcased represent a profound shift in the capabilities and expectations of modern data platforms. As cloud data warehouses continue to integrate more sophisticated declarative features, the core skill requirements for data engineers are undergoing a significant evolution. The emphasis is shifting away from deep expertise in intricate orchestration frameworks and manual refresh scheduling towards a greater focus on robust data modeling, meticulous data quality design, and the precise implementation of business logic. This reduced dependency on infrastructure-specific expertise simultaneously lowers the barrier to entry for analytics professionals aspiring to transition into more data engineering-centric roles, fostering a more versatile and agile workforce.

The innovative synthetic data generation approach, utilizing Python UDTFs, also highlights a crucial emerging pattern for internal training and development environments. By embedding the capability for realistic data generation directly within the platform itself, organizations can rapidly create isolated, self-contained learning environments. This circumvents the need to expose sensitive production data or manage complex, external dataset provisions. This pattern is particularly invaluable for organizations operating under stringent data privacy regulations, such as GDPR or CCPA, which severely restrict the use of real customer data in non-production environments.

For organizations evaluating modern data engineering approaches, the Dynamic Tables pattern offers several advantages: reduced development time for new pipelines, a lower maintenance burden for existing workflows, and built-in handling of dependency management and incremental processing. The declarative model also makes pipeline creation accessible to a broader group of SQL-proficient analysts who may lack extensive programming backgrounds. Cost efficiency can improve as well, because the system can process only changed data, where the query supports incremental refresh, rather than performing resource-intensive full refreshes, and compute resources automatically scale based on workload demands.

The workshop’s logical progression, from simple staging transformations to multi-table pipelines incorporating advanced monitoring and quality controls, provides a practical and actionable template for adopting these patterns within production environments. A sensible adoption path for teams exploring declarative pipeline development would involve starting with non-critical staging transformations, gradually adding incremental joins and aggregations, and then layering in essential observability and robust quality checks. Organizations can confidently pilot this approach with less critical pipelines before strategically migrating mission-critical workflows, thereby building expertise and confidence incrementally.

As global data volumes continue to grow and data pipelines become more complex, declarative frameworks that automate the mechanical, repetitive aspects of data engineering are poised to become standard industry practice. This will free data practitioners to dedicate their time and expertise to the strategic aspects of data architecture, advanced analytics, and the direct delivery of business value. The Snowflake workshop demonstrated that this technology has matured beyond early-adopter status and is ready for mainstream enterprise adoption across a wide range of industries and use cases.

Rachel Kuznetsov, an expert with a Master’s in Business Analytics, is renowned for her ability to tackle complex data puzzles and her relentless pursuit of fresh challenges. She is deeply committed to demystifying intricate data science concepts and actively explores the multifaceted impact of AI on daily life, documenting her continuous learning journey to inspire and educate others. She can be found on LinkedIn, sharing her insights and experiences.
