Data validation, often perceived as a fundamental check for missing values or duplicate records, is undergoing a profound evolution. In today’s complex data landscapes, where data streams at unprecedented volumes and velocities, traditional quality assessments are proving insufficient. Modern datasets harbor insidious issues – semantic inconsistencies, temporal anomalies, structural drift, and subtle breaks in referential integrity – that evade rudimentary checks. These advanced data quality challenges pose significant threats to data-driven decision-making, operational efficiency, and the reliability of analytical models. Recognizing this critical gap, a new suite of sophisticated Python scripts has emerged, offering robust solutions to safeguard data integrity by delving into the contextual, logical, and relational nuances of information.

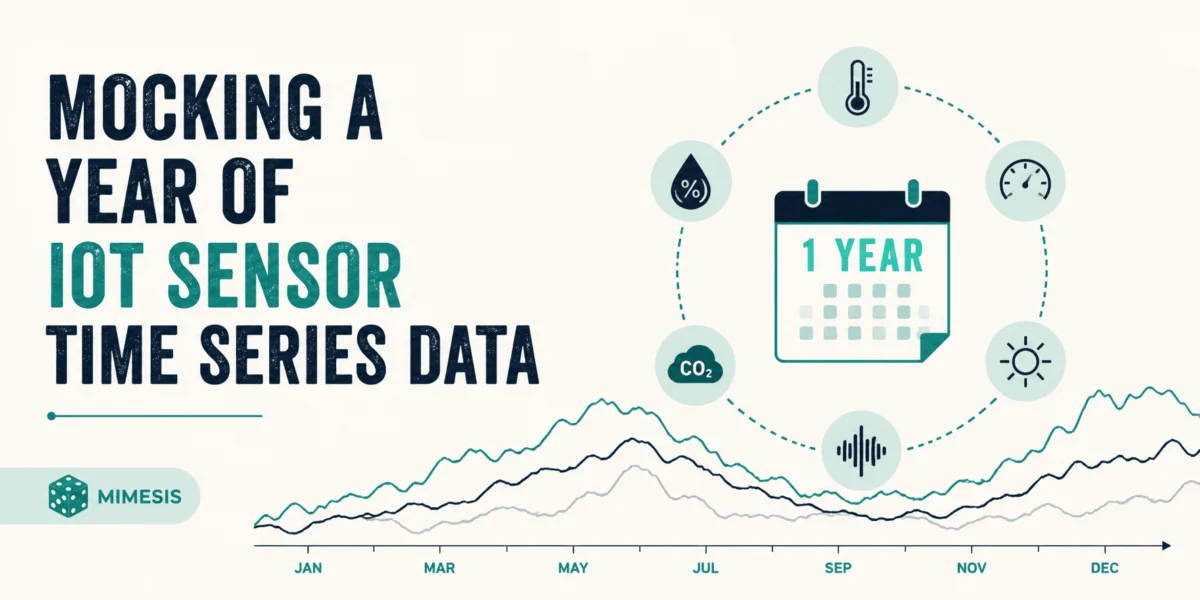

The growing complexity of enterprise data environments, spanning diverse sources from IoT sensors to customer relationship management (CRM) systems, has made data validation a mission-critical function. While null checks and uniqueness constraints remain foundational, the real-world impact often stems from data that appears superficially correct but is logically flawed. A purchase order dated in the future yet already marked as delivered, or a time-series record indicating an impossible change in value, can pass basic validation yet completely disrupt supply chains or financial forecasts. These subtle yet pervasive errors call for automated, context-aware validation frameworks. Python, with its rich ecosystem of data manipulation and analysis libraries, has become the preferred tool for developing these advanced validation mechanisms, enabling data professionals to identify and fix issues before they lead to operational disruptions, financial losses, or flawed strategic insights. The following sections detail five such Python scripts, each designed to tackle a distinct category of advanced data validation challenges.

Validating Time-Series Continuity and Patterns: Ensuring Temporal Integrity

The Problem of Temporal Anomalies in Critical Datasets

Time-series data forms the backbone of numerous critical applications, from financial trading and climate modeling to industrial IoT monitoring and healthcare diagnostics. The inherent assumption with time-series data is a logical, sequential progression of events or measurements. However, this assumption is frequently violated in real-world scenarios. Gaps in expected sequences, timestamps that inexplicably jump forward or backward, sensor readings that fail to register within defined intervals, or event sequences that occur out of their logical order are common pain points. These "temporal anomalies" are not merely cosmetic flaws; they fundamentally corrupt predictive models, distort trend analyses, and can lead to erroneous operational decisions. For instance, in predictive maintenance for industrial machinery, a missing sensor reading might mask an impending equipment failure, while in financial markets, an out-of-sequence trade record could invalidate complex algorithmic strategies. The financial services industry alone faces billions in potential losses due to data quality issues, with temporal inconsistencies being a significant contributor.

A Pythonic Approach to Temporal Integrity

The specialized Python script developed for time-series validation offers a comprehensive solution to these challenges. Its core function is to establish and enforce the temporal integrity of time-series datasets. This involves a multi-faceted approach:

- Missing Timestamp Detection: Identifying absent data points in sequences where continuous measurements are expected, crucial for maintaining data completeness.

- Gap and Overlap Identification: Pinpointing periods where data collection either ceased unexpectedly or where records overlap, indicating potential data duplication or system errors.

- Out-of-Sequence Record Flagging: Detecting instances where timestamps do not adhere to a strictly increasing (or decreasing, depending on context) order, which can severely impact causality analysis.

- Seasonal Pattern and Frequency Validation: Ensuring that data aligns with expected periodicities and sampling rates, critical for applications relying on seasonality (e.g., retail sales, energy consumption).

- Timestamp Manipulation and Backdating Detection: Identifying records where timestamps appear to have been altered to fit a narrative or cover up delays, a common issue in audit trails and compliance-sensitive data.

- Impossible Velocity Checks: A sophisticated feature that flags instances where values change at a rate faster than physically or logically possible within the given time interval (e.g., a temperature reading jumping from 0°C to 1000°C in a second in a stable environment, or a stock price moving by an implausible percentage within a microsecond without market-moving news).
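
Two of the checks listed above, out-of-sequence flagging and impossible velocity detection, are simple enough to sketch with the standard library alone. This is a minimal illustration rather than the script itself: the function names, the sample readings, and the threshold of 5 units per second are all assumptions made for the example.

```python
from datetime import datetime


def find_out_of_sequence(timestamps):
    """Return indices whose timestamp is not strictly later than the previous one."""
    return [i for i in range(1, len(timestamps))
            if timestamps[i] <= timestamps[i - 1]]


def find_impossible_velocity(timestamps, values, max_rate_per_second):
    """Return indices where |change in value| / elapsed seconds exceeds the threshold."""
    flagged = []
    for i in range(1, len(values)):
        elapsed = (timestamps[i] - timestamps[i - 1]).total_seconds()
        if elapsed <= 0:
            continue  # ordering problems are reported by find_out_of_sequence
        rate = abs(values[i] - values[i - 1]) / elapsed
        if rate > max_rate_per_second:
            flagged.append(i)
    return flagged


# Hypothetical sensor feed: the fourth timestamp goes backwards,
# and the third reading jumps by almost 1000 degrees in one second.
ts = [datetime(2024, 1, 1, 0, 0, s) for s in (0, 1, 2, 1, 4)]
temps = [20.0, 20.5, 1000.0, 21.0, 21.5]

out_of_order = find_out_of_sequence(ts)
too_fast = find_impossible_velocity(ts, temps, max_rate_per_second=5.0)
print(out_of_order, too_fast)
```

The velocity threshold is the domain-specific part: it has to come from someone who knows what rate of change is physically or logically plausible for the metric.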

Underlying Mechanisms and Broader Implications

The script operates by analyzing timestamp columns to infer the dataset’s expected frequency (e.g., hourly, daily, minutely). It then employs algorithms to detect deviations from this inferred frequency, flagging gaps or unexpected jumps. For sequence validation, it leverages sorting and comparison techniques. Domain-specific velocity checks are implemented by defining thresholds for acceptable rates of change for particular metrics. The detection of seasonality violations often involves statistical methods or pattern matching against historical norms. The output is a detailed report that not only highlights temporal anomalies but also assesses their potential business impact, allowing organizations to prioritize remediation efforts. According to industry reports, organizations with robust time-series validation can improve forecasting accuracy by up to 20%, leading to better resource allocation and reduced operational risks. Implementing such a script transforms time-series data from a potential liability into a reliable asset for predictive analytics and operational intelligence.
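
As a rough sketch of the frequency inference and gap detection described above, the example below takes the most common spacing between consecutive timestamps as the expected interval and reports every stretch that exceeds it. It assumes the timestamps are already sorted, and the function names and sample data are hypothetical; a production script would more likely lean on pandas for this.

```python
from collections import Counter
from datetime import datetime, timedelta


def infer_interval(timestamps):
    """Infer the expected sampling interval as the most common spacing."""
    deltas = [b - a for a, b in zip(timestamps, timestamps[1:])]
    return Counter(deltas).most_common(1)[0][0]


def find_gaps(timestamps, interval):
    """Return (gap_start, gap_end, missing_count) for each spacing larger than interval."""
    gaps = []
    for prev, curr in zip(timestamps, timestamps[1:]):
        if curr - prev > interval:
            missing = (curr - prev) // interval - 1
            gaps.append((prev, curr, missing))
    return gaps


# Hypothetical hourly readings with hours 4 and 5 missing.
start = datetime(2024, 1, 1)
readings = [start + timedelta(hours=h) for h in (0, 1, 2, 3, 6, 7, 8)]

interval = infer_interval(readings)
gaps = find_gaps(readings, interval)
print(interval, gaps)
```

Using the most common spacing rather than the mean keeps a handful of large gaps from distorting the inferred frequency.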

Checking Semantic Validity with Business Rules: Enforcing Logical Coherence

The Insidious Nature of Semantic Violations

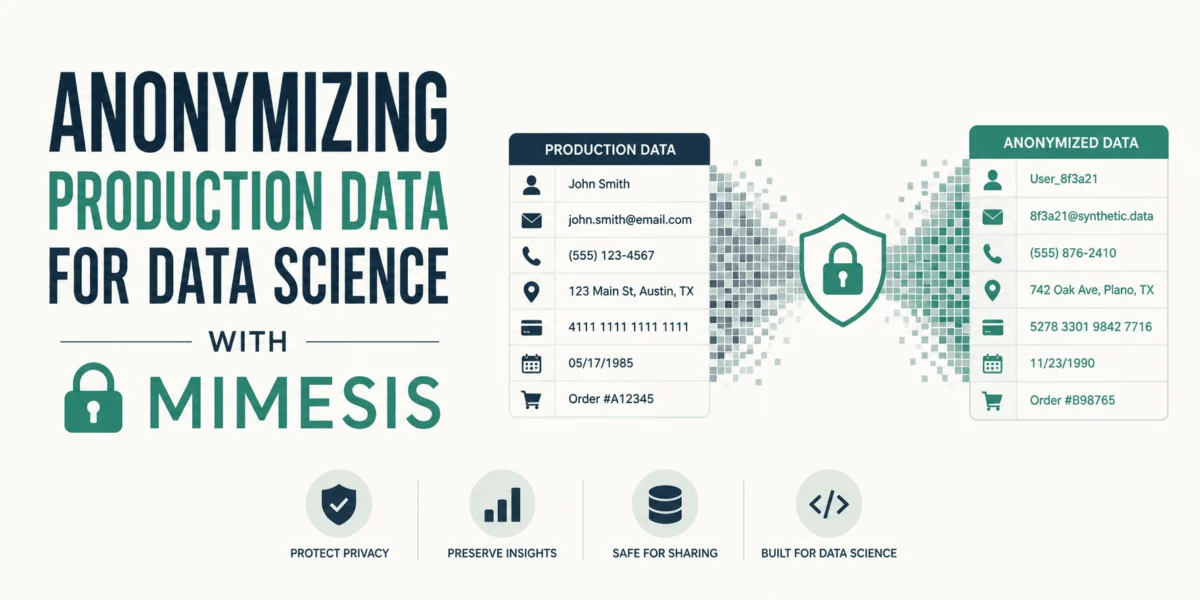

Semantic validity goes beyond the superficial correctness of individual data points; it scrutinizes the logical coherence and meaningfulness of data combinations within the context of defined business rules. A field might correctly store a date, but if that date represents a completed delivery for a purchase order issued in the future, the data is semantically invalid. Similarly, an account flagged as a "new customer" despite having a five-year transaction history presents a glaring semantic contradiction. These violations are particularly insidious because they pass basic type and range checks, appearing "clean" to automated systems. Yet, they fundamentally break business logic, leading to erroneous financial reports, incorrect customer segmentation, compliance failures, and compromised operational workflows. The cost of poor data quality, often driven by semantic inconsistencies, is estimated to run into trillions of dollars annually across global enterprises.

A Sophisticated Rule Engine for Business Logic

This Python script is engineered to validate data against complex business rules and intricate domain knowledge. It provides a robust framework for:

- Multi-Field Conditional Logic: Evaluating complex "if-then" or "AND/OR" conditions across multiple fields (e.g., "IF customer_status is ‘active’ AND account_balance is negative THEN flag_for_review").

- Validation of Stages and Temporal Progression: Ensuring that data reflects a logical progression through defined business workflows (e.g., an order cannot be "shipped" before it is "processed").

- Mutually Exclusive Category Enforcement: Verifying that categories designed to be distinct do not overlap (e.g., a product cannot be both "new" and "discontinued" simultaneously in the same inventory record).

- Flagging Logically Impossible Combinations: Identifying data combinations that defy common sense or established domain principles (e.g., a recorded age that exceeds what the person’s date of birth allows).
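
The stage-progression check in the list above can be pictured as a lookup against a table of allowed transitions. The sketch below is one way to do it; the state names and the `ALLOWED_TRANSITIONS` table are invented for illustration and would need to mirror the real workflow.

```python
# Hypothetical order workflow: each state maps to the states allowed to follow it.
ALLOWED_TRANSITIONS = {
    "created": {"processed", "cancelled"},
    "processed": {"shipped", "cancelled"},
    "shipped": {"delivered"},
    "delivered": set(),
    "cancelled": set(),
}


def invalid_transitions(history):
    """Return (position, from_state, to_state) for every disallowed step."""
    problems = []
    for i in range(1, len(history)):
        prev, curr = history[i - 1], history[i]
        if curr not in ALLOWED_TRANSITIONS.get(prev, set()):
            problems.append((i, prev, curr))
    return problems


good = invalid_transitions(["created", "processed", "shipped", "delivered"])
bad = invalid_transitions(["created", "shipped", "processed"])
print(good)
print(bad)
```

An order that is "shipped" before it is "processed" shows up as a disallowed step, along with its position in the history.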

Implementation and Transformative Impact

The script’s efficacy lies in its ability to accept business rules defined in a declarative format, often as a configuration file or a set of easily readable expressions. This abstraction allows business analysts, not just developers, to contribute to and maintain the validation logic. It then evaluates these complex conditional rules across multiple data fields, performing cross-record and intra-record checks. It is particularly adept at validating state transitions and workflow progressions, ensuring that business events unfold in a prescribed order. The script also incorporates industry-specific domain rules, making it adaptable to various sectors like finance (e.g., anti-money laundering rules), healthcare (e.g., patient eligibility criteria), or manufacturing (e.g., bill of materials validation). Upon detecting violations, it generates comprehensive reports, categorizing issues by rule type, severity, and estimated business impact, facilitating targeted data remediation. Implementing such a semantic validator helps organizations move from reactive error correction to proactive data governance, significantly reducing operational errors, ensuring regulatory compliance, and fostering greater trust in analytical outputs.
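
To make the idea of declaratively defined rules concrete, here is a small sketch in which each rule is a plain data entry with a name, a severity, and a predicate. The rule names, field names, and sample records are assumptions for the example; a fuller version would probably load the rules from a configuration file rather than define them inline.

```python
from datetime import date

# Hypothetical rule set. Each predicate returns True when a record VIOLATES the rule.
RULES = [
    {"name": "active_negative_balance", "severity": "medium",
     "violates": lambda r: r["customer_status"] == "active" and r["account_balance"] < 0},
    {"name": "delivery_before_order", "severity": "high",
     "violates": lambda r: r["delivery_date"] < r["order_date"]},
    {"name": "new_and_discontinued", "severity": "high",
     "violates": lambda r: r["is_new"] and r["is_discontinued"]},
]


def validate(records, rules=RULES):
    """Evaluate every rule against every record and collect the violations."""
    violations = []
    for idx, record in enumerate(records):
        for rule in rules:
            if rule["violates"](record):
                violations.append(
                    {"row": idx, "rule": rule["name"], "severity": rule["severity"]}
                )
    return violations


records = [
    {"customer_status": "active", "account_balance": 120.0,
     "order_date": date(2024, 3, 1), "delivery_date": date(2024, 3, 5),
     "is_new": True, "is_discontinued": False},
    {"customer_status": "active", "account_balance": -40.0,
     "order_date": date(2024, 3, 10), "delivery_date": date(2024, 3, 2),
     "is_new": False, "is_discontinued": False},
]

violations = validate(records)
for v in violations:
    print(v)
```

Keeping rules as data means a new check is one more entry in the list, and the severity field feeds directly into the kind of categorized report described above.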

Detecting Data Drift and Schema Evolution: Maintaining Data Pipeline Resilience

The Silent Threat of Data Drift

In dynamic data environments, the structure and statistical properties of data are rarely static. Data drift, a phenomenon where the characteristics of incoming data change over time, often goes unnoticed until it causes significant downstream failures. This can manifest in various ways: new columns appearing or existing ones disappearing from a schema, subtle shifts in data types (e.g., a numeric field suddenly accepting text), expansion or contraction of value ranges, or the emergence of new categories in supposedly fixed categorical fields. These changes, if undocumented or unmanaged, can silently break data pipelines, invalidate assumptions underpinning machine learning models, render business intelligence dashboards inaccurate, and lead to months of corrupted data accumulating before detection. The impact is profound: degraded model performance, flawed business decisions, and substantial engineering effort to retroactively fix issues. Surveys suggest that data drift is a leading cause of machine learning model decay, affecting over 70% of models in production.

Proactive Monitoring with Drift Detection

This Python script is designed to act as an early warning system, continuously monitoring datasets for both structural and statistical drift. Its key functionalities include:

- Schema Change Tracking: Automatically detecting additions or removals of columns, and changes in data types.

- Distribution Shift Detection: Identifying statistically significant shifts in the distribution of numeric and categorical data (e.g., average customer age changing, or a new product category gaining prominence).

- New Value Identification: Flagging the appearance of previously unseen values in categorical columns that are expected to have a fixed set of options.

- Data Range and Constraint Changes: Monitoring for alterations in the minimum/maximum values of numeric fields or other defined data constraints.

- Statistical Property Divergence: Alerting when key statistical properties (e.g., mean, median, standard deviation) of data columns diverge from established baselines.
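
The structural checks in this list, schema change tracking and new value identification, come down to set comparisons against a stored baseline. The following sketch assumes rows arrive as dictionaries and infers each column's type from its first non-null value; the helper names and the sample rows are hypothetical.

```python
def infer_schema(rows):
    """Infer {column: type_name} from the first non-None value seen in each column."""
    schema = {}
    for row in rows:
        for col, val in row.items():
            if val is not None and col not in schema:
                schema[col] = type(val).__name__
    return schema


def diff_schema(baseline, current):
    """Compare two {column: type_name} mappings."""
    shared = set(baseline) & set(current)
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "retyped": sorted(c for c in shared if baseline[c] != current[c]),
    }


def unseen_values(baseline_values, current_values):
    """Return categorical values present now but absent from the baseline."""
    return sorted(set(current_values) - set(baseline_values))


# Hypothetical batches: 'tier' disappears, 'channel' appears, 'amount' turns into text.
baseline_rows = [{"id": 1, "amount": 9.5, "tier": "gold"}]
current_rows = [{"id": 2, "amount": "9.50", "channel": "web"}]

schema_diff = diff_schema(infer_schema(baseline_rows), infer_schema(current_rows))
new_tiers = unseen_values(["gold", "silver"], ["gold", "platinum"])
print(schema_diff, new_tiers)
```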

Technical Underpinnings and Strategic Advantages

The script’s operational methodology involves creating baseline profiles of a dataset’s structure and statistical properties at a known good state. Subsequently, it periodically compares current incoming data against these baselines. To quantify drift, it employs statistical distance measures such as Kullback-Leibler (KL) divergence (an asymmetric measure of how one probability distribution diverges from a second, expected distribution) and Wasserstein distance (a metric for comparing probability distributions, particularly effective for continuous data and sensitive to shifts in shape and location). It maintains a comprehensive change history, allowing for trend analysis of data evolution. Significance testing is applied to distinguish genuine drift from random noise, reducing false positives. The script generates detailed drift reports, complete with severity levels and recommended actions, enabling data engineers and ML practitioners to address issues proactively. By implementing a drift detector, organizations can ensure the ongoing health and reliability of their data pipelines, prevent costly model degradation, and maintain the integrity of their analytical insights, thereby enhancing the resilience of their entire data ecosystem.
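
For intuition about the two measures named above, the sketch below computes KL divergence for a categorical column and a one-dimensional Wasserstein distance for a numeric one, using only the standard library. It makes simplifying assumptions: tiny additive smoothing to avoid division by zero, and equally sized numeric samples so that the Wasserstein distance reduces to the mean absolute difference between sorted values. A real script would more plausibly rely on a library such as SciPy, and the sample data here is invented.

```python
import math
from collections import Counter


def kl_divergence(baseline, current, smoothing=1e-9):
    """KL(current || baseline) over categorical observations, in nats."""
    categories = set(baseline) | set(current)
    b_counts, c_counts = Counter(baseline), Counter(current)
    b_total = len(baseline) + smoothing * len(categories)
    c_total = len(current) + smoothing * len(categories)
    total = 0.0
    for cat in categories:
        p = (c_counts[cat] + smoothing) / c_total  # current distribution
        q = (b_counts[cat] + smoothing) / b_total  # expected (baseline) distribution
        total += p * math.log(p / q)
    return total


def wasserstein_1d(a, b):
    """Wasserstein-1 distance between two equally sized 1-D samples."""
    if len(a) != len(b):
        raise ValueError("this sketch assumes equally sized samples")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


# Hypothetical categorical column: the mix shifts from 50/50 to 90/10.
baseline_cat = ["a"] * 50 + ["b"] * 50
current_cat = ["a"] * 90 + ["b"] * 10
kl = kl_divergence(baseline_cat, current_cat)

# Hypothetical numeric column: every customer age shifts up by five years.
w = wasserstein_1d([30, 32, 34, 36], [35, 37, 39, 41])
print(round(kl, 3), w)
```

The Wasserstein result is expressed in the column's own units (here, years), which makes it easier to set a meaningful alert threshold than the unitless KL value.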

Validating Hierarchical and Graph Relationships: Ensuring Structural Integrity

The Complexities of Hierarchical Data Integrity

Hierarchical data structures, fundamental to representing organizational charts, product taxonomies, supply chain dependencies, and network topologies, demand stringent validation to maintain their logical integrity. The most critical issue is the presence of circular references – situations where a parent points to a child, which in turn (directly or indirectly) points back to the original parent. This creates an infinite loop, corrupting recursive queries, distorting hierarchical aggregations, and potentially causing system crashes in applications that traverse these structures. Other common problems include self-referencing bills of materials, cyclic taxonomies, parent-child inconsistencies (e.g., a child existing without a valid parent), orphaned nodes (nodes with no connection to the main hierarchy), or disconnected subgraphs. Such structural flaws undermine the very foundation of hierarchical data, making it unreliable for reporting, decision-making, and operational processes. For complex supply chains, a single cyclic dependency can halt production or lead to incorrect inventory valuations.

A Python Script for Graph and Tree Structure Validation

This advanced Python script is specifically designed to validate the structural integrity of graph and tree-like relationships embedded within relational data. Its comprehensive capabilities include:

- Circular Reference Detection: Identifying and flagging any cycles within parent-child relationships, ensuring that hierarchies remain acyclic.

- Hierarchy Depth Limit Enforcement: Validating that the depth of any given hierarchy does not exceed predefined business limits, preventing overly complex or erroneous structures.

- Directed Acyclic Graph (DAG) Validation: Ensuring that directed relationships (e.g., task dependencies in a workflow) maintain their acyclic nature, crucial for correct sequencing and execution.

- Orphaned Node and Disconnected Subgraph Detection: Identifying records that exist but are not properly linked into the main hierarchical structure.

- Root and Leaf Node Conformance: Validating that designated root nodes (top of the hierarchy) and leaf nodes (end of the hierarchy) adhere to specific business rules or properties.

- Many-to-Many Relationship Constraints: Checking the validity and cardinality of complex relationships where multiple parents can link to multiple children.
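
Several of these checks can be sketched for the simplest case, a tree stored as child-to-parent links. The example below uses a breadth-first traversal from the roots to compute depths, then reports orphans (nodes whose parent is missing), nodes beyond a depth limit, and nodes that cannot be reached from any root. The function name, the sample hierarchy, and the depth limit of 2 are assumptions for illustration.

```python
from collections import defaultdict, deque


def analyze_hierarchy(parent_of, max_depth):
    """parent_of maps each node to its parent, with None marking a root."""
    children = defaultdict(list)
    roots, orphans = [], []
    for node, parent in parent_of.items():
        if parent is None:
            roots.append(node)
        elif parent not in parent_of:
            orphans.append(node)  # parent record does not exist
        else:
            children[parent].append(node)

    # Breadth-first traversal from the roots assigns a depth to every reachable node.
    depth = {}
    queue = deque((root, 0) for root in roots)
    while queue:
        node, d = queue.popleft()
        depth[node] = d
        queue.extend((child, d + 1) for child in children[node])

    return {
        "orphans": sorted(orphans),
        "too_deep": sorted(n for n, d in depth.items() if d > max_depth),
        # Never reached from a root: members of a cycle or descendants of an orphan.
        "unreachable": sorted(set(parent_of) - set(depth) - set(orphans)),
    }


# Hypothetical org chart with a missing parent ("ghost") and a two-node cycle.
parent_of = {
    "ceo": None, "vp": "ceo", "mgr": "vp", "dev": "mgr",
    "temp": "ghost", "x": "y", "y": "x",
}
report = analyze_hierarchy(parent_of, max_depth=2)
print(report)
```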

Advanced Algorithms and Visual Reporting

The script works by dynamically building graph representations from the relational data, treating records as nodes and relationships (e.g., foreign keys) as edges. It then employs well-established graph theory algorithms, such as depth-first search (DFS) or Tarjan’s algorithm, for efficient cycle detection. Breadth-first traversals are used to validate hierarchy depth and identify connectivity issues. The script can identify strongly connected components within what should be acyclic graphs, pinpointing clusters of interdependent nodes that form a cycle. Furthermore, it validates node properties at each hierarchy level, ensuring consistency throughout the structure. A significant feature is its ability to generate visual representations of problematic subgraphs, providing data stewards with an intuitive understanding of the structural violations and their exact location within the data. By providing clear insights into these complex structural issues, this script empowers organizations to maintain accurate organizational structures, reliable product configurations, and robust dependency management systems, which are vital for operational stability and accurate analytics.
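
The depth-first approach to cycle detection mentioned above can be sketched as a three-color traversal: a node is gray while it is on the current path, and reaching a gray node again means a cycle has been found. This is an illustrative version with a hypothetical `find_cycle` helper, not the script's actual implementation; it is recursive, so very deep graphs would need an iterative rewrite.

```python
def find_cycle(edges):
    """Return one cycle as a list of nodes (first == last), or None if acyclic."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
        graph.setdefault(dst, [])

    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, fully explored
    color = {node: WHITE for node in graph}
    path = []

    def visit(node):
        color[node] = GRAY
        path.append(node)
        for nxt in graph[node]:
            if color[nxt] == GRAY:
                # Back edge: the cycle runs from nxt's position on the path to here.
                return path[path.index(nxt):] + [nxt]
            if color[nxt] == WHITE:
                found = visit(nxt)
                if found:
                    return found
        color[node] = BLACK
        path.pop()
        return None

    for node in graph:
        if color[node] == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


# Hypothetical bill of materials in which part C lists part A as a component.
cyclic = find_cycle([("A", "B"), ("B", "C"), ("C", "A"), ("C", "D")])
acyclic = find_cycle([("A", "B"), ("B", "C"), ("A", "C")])
print(cyclic, acyclic)
```

Returning the offending path, rather than a simple yes or no, gives data stewards the exact records to inspect.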

Validating Referential Integrity Across Tables: Ensuring Relational Cohesion

The Foundational Crisis of Broken Referential Integrity

Referential integrity is a cornerstone of relational database design, ensuring that relationships between tables are consistently maintained. It dictates that every non-null foreign key value in a child table must have a corresponding primary key value in the parent table. When this principle is violated, or when the relationships themselves are poorly controlled, the consequences are severe and pervasive:

- Orphaned Child Records: Records in a child table that reference a non-existent or deleted parent record (e.g., an order item without a corresponding order, or an employee record referencing a department that no longer exists).

- References to Nonexistent Parents: Similar to orphaned children, but from the perspective of the child, pointing to an invalid parent.

- Invalid Codes and IDs: Foreign keys containing values that do not exist in the domain of the parent primary key.

- Uncontrolled Cascade Deletes: Situations where deleting a parent record might inadvertently delete numerous child records without proper oversight, leading to irreversible data loss.

- Circular References Across Tables: Complex interdependencies where tables indirectly reference each other in a loop, making data management and deletion incredibly difficult.

These violations lead to corrupted joins, inaccurate reports, broken queries, and a fundamental erosion of trust in the data’s reliability. According to various data quality benchmarks, referential integrity issues are among the most common and damaging data quality problems, costing businesses significant time and resources in remediation.

A Comprehensive Cross-Table Validation Framework

This Python script offers a robust solution for rigorously validating foreign key relationships and ensuring cross-table consistency, even across multiple disparate data files or database connections. Its core capabilities include:

- Orphaned Record Detection: Identifying both orphaned child records (missing parents) and orphaned parent records (parents with no children, if business rules dictate otherwise).

- Cardinality Constraint Validation: Verifying one-to-one, one-to-many, or many-to-many cardinality rules between related tables (e.g., ensuring each employee has only one manager record in a specific context).

- Composite Key Uniqueness Checks: Validating that combinations of multiple columns forming a composite primary key remain unique, and that every composite foreign key combination matches an existing parent key.

- Cascade Delete Impact Analysis: Simulating the impact of potential cascade deletes before they are executed, providing a crucial risk assessment tool.

- Inter-Table Circular Reference Identification: Detecting complex loops of foreign key relationships across multiple tables that can hinder database operations.
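
Of the capabilities listed, cascade delete impact analysis is perhaps the least obvious to picture. One way to simulate it is to start from the row to be deleted and repeatedly follow foreign keys to collect every dependent row, without touching the data. The sketch below assumes every foreign key cascades on delete; the table layout, the `cascade_impact` helper, and the sample data are all hypothetical.

```python
from collections import deque


def cascade_impact(tables, foreign_keys, table, key):
    """Count the rows per table that deleting tables[table][key] would remove.

    tables: {table_name: {primary_key: row_dict}}
    foreign_keys: list of (child_table, fk_column, parent_table)
    Assumes every foreign key is declared with cascading deletes.
    """
    doomed = {table: {key}}
    queue = deque([(table, key)])
    while queue:
        parent_table, parent_key = queue.popleft()
        for child_table, fk_col, ref_table in foreign_keys:
            if ref_table != parent_table:
                continue
            for pk, row in tables[child_table].items():
                if (row.get(fk_col) == parent_key
                        and pk not in doomed.setdefault(child_table, set())):
                    doomed[child_table].add(pk)
                    queue.append((child_table, pk))
    return {name: len(keys) for name, keys in doomed.items()}


tables = {
    "customers": {1: {"name": "Ada"}, 2: {"name": "Bo"}},
    "orders": {10: {"customer_id": 1}, 11: {"customer_id": 1}, 12: {"customer_id": 2}},
    "order_items": {100: {"order_id": 10}, 101: {"order_id": 10},
                    102: {"order_id": 11}, 103: {"order_id": 12}},
}
foreign_keys = [
    ("orders", "customer_id", "customers"),
    ("order_items", "order_id", "orders"),
]

impact = cascade_impact(tables, foreign_keys, "customers", 1)
print(impact)
```

Because already-collected rows are skipped, the traversal also terminates when tables reference each other in a loop.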

Operational Mechanics and Data Trust Enhancement

The script functions by loading a primary dataset and all its related reference tables, effectively creating a temporary, in-memory data model. It then performs joins or uses efficient lookup mechanisms to verify that every foreign key value in a child table correctly maps to an existing primary key value in its respective parent table. It specifically checks for cardinality rules, ensuring that the number of related records adheres to predefined constraints. For composite keys, it validates that the combination of constituent columns is unique and consistent. The script’s ability to analyze potential cascade delete impacts is particularly valuable, offering a preventative measure against accidental data loss. It generates comprehensive reports that detail all referential integrity violations, including affected row counts and the specific foreign key values that failed validation. By consistently applying these checks, organizations can ensure their relational data remains cohesive, accurate, and trustworthy, forming a solid foundation for all data-driven activities. This ultimately reduces the risk of data corruption, streamlines database maintenance, and significantly improves the reliability of analytical and operational systems.
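
The core lookup described above can be sketched with a set of parent keys and a counter. This minimal example assumes rows are dictionaries and treats a null foreign key as "no reference" rather than a violation; the function names, table contents, and the limit of one child per parent are assumptions for illustration. Composite keys would work the same way with tuples of column values in place of single values.

```python
from collections import Counter


def find_orphans(child_rows, fk_column, parent_keys):
    """Return (row_index, fk_value) for child rows whose non-null FK has no parent."""
    parent_keys = set(parent_keys)
    return [
        (i, row[fk_column])
        for i, row in enumerate(child_rows)
        if row[fk_column] is not None and row[fk_column] not in parent_keys
    ]


def cardinality_violations(child_rows, fk_column, max_children):
    """Return {parent_key: child_count} for parents with more children than allowed."""
    counts = Counter(
        row[fk_column] for row in child_rows if row[fk_column] is not None
    )
    return {key: n for key, n in counts.items() if n > max_children}


# Hypothetical tables: department 3 does not exist, and department 1 has two employees.
departments = [{"dept_id": 1}, {"dept_id": 2}]
employees = [
    {"emp_id": 1, "dept_id": 1},
    {"emp_id": 2, "dept_id": 3},
    {"emp_id": 3, "dept_id": None},
    {"emp_id": 4, "dept_id": 1},
]

orphans = find_orphans(employees, "dept_id", (d["dept_id"] for d in departments))
crowded = cardinality_violations(employees, "dept_id", max_children=1)
print(orphans, crowded)
```

Returning the failing key values alongside row positions matches the kind of report described above, where both the affected rows and the offending foreign key values are listed.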

The Imperative of Advanced Data Validation in Modern Enterprises

Data validation has moved far beyond rudimentary checks for nulls and duplicates. As data proliferates and becomes increasingly integral to every facet of business operations, the subtle, context-dependent, and relational data quality issues addressed by these five Python scripts have emerged as critical challenges. Semantic violations, temporal anomalies, structural drift, and referential integrity breaks are no longer minor inconveniences; they represent fundamental threats to data-driven decision-making, compliance, and competitive advantage.

The development and deployment of such advanced validation scripts signify a paradigm shift in data governance and data quality management. They underscore an industry trend towards proactive, automated, and intelligent data quality frameworks that integrate seamlessly into modern data pipelines (DataOps) and machine learning workflows (MLOps). This proactive approach catches problems at the point of ingestion or transformation, rather than allowing them to fester and corrupt downstream analyses or models.

For organizations navigating complex data landscapes, the strategic imperative is clear: adopt and adapt these advanced validation techniques. The recommended approach involves:

- Prioritization: Start by identifying the most pressing data quality pain points within your specific domain and implement the relevant script first.

- Customization: Establish baseline profiles and configure validation rules tailored to your unique business logic and data characteristics.

- Integration: Embed these validation processes as integral components of your data pipeline, ensuring continuous monitoring and immediate alerting.

- Thresholding: Configure alerting thresholds that are appropriate for your specific use case, balancing sensitivity with the avoidance of alert fatigue.

The economic implications of robust data quality are substantial. Studies consistently demonstrate that high-quality data leads to improved operational efficiency, reduced regulatory risks, enhanced customer experiences, and more accurate business insights, ultimately driving significant return on investment. Conversely, the cost of poor data quality, including lost revenue, increased operational expenses, and reputational damage, can be staggering. By embracing these advanced Python validation scripts, data professionals are not just cleaning data; they are fortifying the very foundation of their organization’s digital intelligence, ensuring that the data fueling their operations is not only abundant but also reliable and trustworthy.

Happy validating!

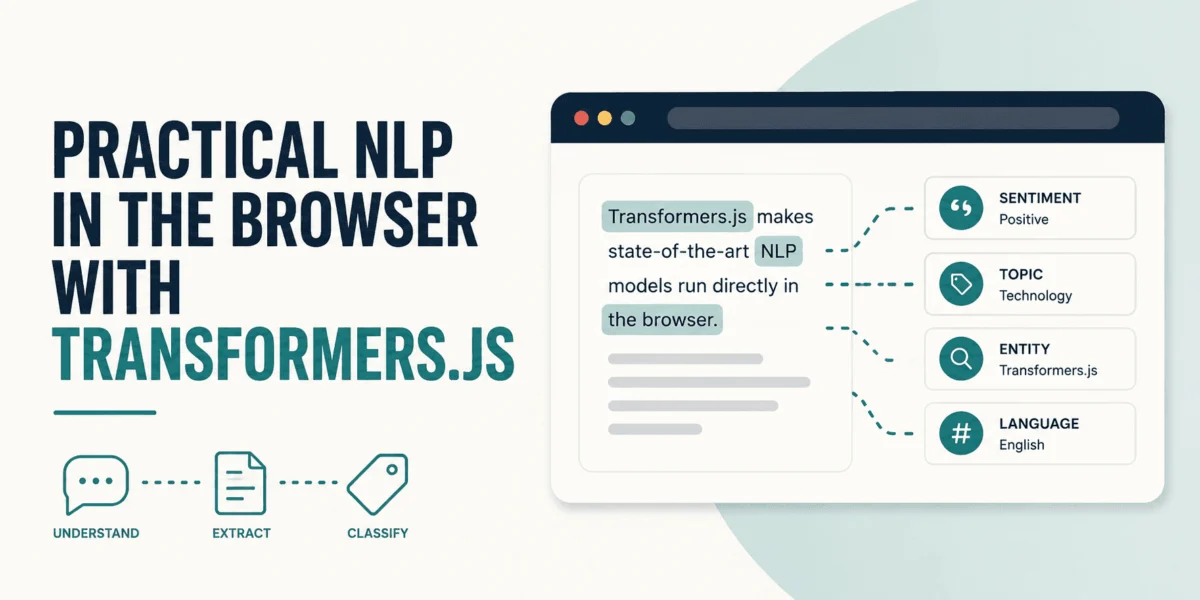

Bala Priya C, a respected developer and technical writer, is at the forefront of sharing practical insights into data science and programming. Her work consistently bridges the gap between complex technical concepts and actionable solutions for the developer community, leveraging her expertise at the intersection of mathematics, programming, data science, and content creation. Bala’s contributions, particularly in areas like DevOps, natural language processing, and data science, empower practitioners to tackle real-world challenges effectively.
