The burgeoning landscape of artificial intelligence has seen a significant acceleration in the development of sophisticated AI agents, largely driven by the emergence of powerful frameworks such as LangChain and CrewAI. These tools have democratized the creation of autonomous systems capable of complex reasoning and action. However, the journey from conceptualization to deployment for these agents is often fraught with practical challenges. Developers frequently encounter hurdles like restrictive API rate limits from cloud providers, the intricate management of high-dimensional data, and the inherent security risks of exposing local development servers to the public internet during prototyping. These operational complexities can slow down development cycles, inflate costs, and introduce inconsistencies across development environments.

In response to these challenges, containerization with Docker has become a practical default for AI agent developers. Docker isolates dependencies, standardizes environments, and makes essential infrastructure components straightforward to deploy. Instead of paying for cloud services during prototyping, or burdening the host machine with conflicting software versions, developers can provision most of the infrastructure an agent needs with a single command per service. This keeps environments consistent and reduces the overhead of setting up a complex development stack.

This article delves into five pivotal Docker containers that represent a cornerstone for any serious AI agent developer’s toolkit, offering practical solutions to common development pain points and fostering a more efficient, cost-effective, and secure development workflow.

The Strategic Advantage of Docker in AI Agent Development

The foundational premise of AI agent development often involves orchestrating multiple services: large language models for reasoning, databases for memory, and various APIs for interaction with external systems. Historically, setting up these interconnected components has been a complex, time-consuming endeavor, prone to dependency conflicts and environmental discrepancies. Docker addresses this by encapsulating applications and their dependencies into portable, self-sufficient units known as containers. This ensures that an agent’s infrastructure runs consistently across different environments, from a developer’s local machine to a production server. For AI agents, which thrive on predictable environments and consistent access to their underlying tools, Docker provides an unparalleled level of reliability and control. It mitigates "works on my machine" syndrome, allowing developers to focus on the agent’s logic rather than environmental plumbing.

1. Ollama: Running Local Language Models for Private, Cost-Effective Reasoning

The reliance on cloud-based Large Language Models (LLMs) like those offered by OpenAI, Google, or Anthropic, while powerful, introduces several considerations for AI agent developers, including cumulative costs, potential latency, and data privacy concerns. Each API call incurs a charge, and for agents that perform numerous iterative tasks, these costs can quickly escalate. Furthermore, sending proprietary or sensitive data to external APIs raises significant privacy questions, especially in enterprise contexts.

Ollama emerges as a crucial open-source solution, empowering developers to execute a diverse range of open-source LLMs—such as Meta’s Llama 3, Mistral AI’s Mistral, or Microsoft’s Phi models—directly on their local hardware. By containerizing Ollama, developers can maintain a pristine host system while effortlessly switching between different LLM architectures and versions without the need for complex Python environment management. This isolation is particularly beneficial when experimenting with various models to find the optimal fit for specific agentic tasks, such as grammar correction, sentiment analysis, or data classification, where a full-scale cloud LLM might be overkill or too expensive.

Why It Matters for Agentic Developers: The ability to provide an AI agent with a "brain" that operates entirely within a developer’s own infrastructure is a significant capability. It matters most for enterprise-grade agents handling confidential or proprietary datasets, where data governance and security are non-negotiable. Running the ollama/ollama image with its port published provides a local HTTP endpoint, including an OpenAI-compatible API, which agent code can call for text generation, reasoning, or decision-making without data leaving the local environment. This improves privacy and also reduces development costs by minimizing paid API calls during intensive prototyping.

Initiating a Quick Start: To deploy and activate a model like Mistral via the Ollama container, the following command establishes the container, maps the necessary port, and ensures model persistence on the local filesystem:

docker run -d -v ollama:/root/.ollama -p 11434:11434 --name ollama ollama/ollama

Following the container’s successful launch, a specific model must be pulled and initiated from within the container’s environment:

docker exec -it ollama ollama run mistral

Utility for Agentic Developers: With Ollama operational, AI agents can be configured to direct their LLM requests to http://localhost:11434. This provides a local, API-compatible endpoint that facilitates rapid prototyping, accelerates iteration cycles, and keeps sensitive operational data on the developer’s machine, addressing privacy and cost concerns from the outset.
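As a rough illustration of what "directing LLM requests to http://localhost:11434" can look like, the sketch below calls Ollama's /api/generate endpoint using only the Python standard library. It assumes the container from the docker run command above is running and that the mistral model has already been pulled; the function names are hypothetical and not part of any framework.

```python
import json
import urllib.request

# Port published by the docker run command shown earlier in this section.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "mistral", timeout: float = 120.0) -> str:
    """Send the prompt to the local Ollama container and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.load(resp)
    # With "stream": False, Ollama returns one JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

Calling generate("Classify the sentiment of: 'The delivery was late again.'") would then return the model's reply as a plain string. Frameworks such as LangChain ship their own Ollama integrations, so a hand-rolled client like this is mainly useful for understanding what happens on the wire.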

2. Qdrant: The Vector Database for Semantic Long-Term Memory

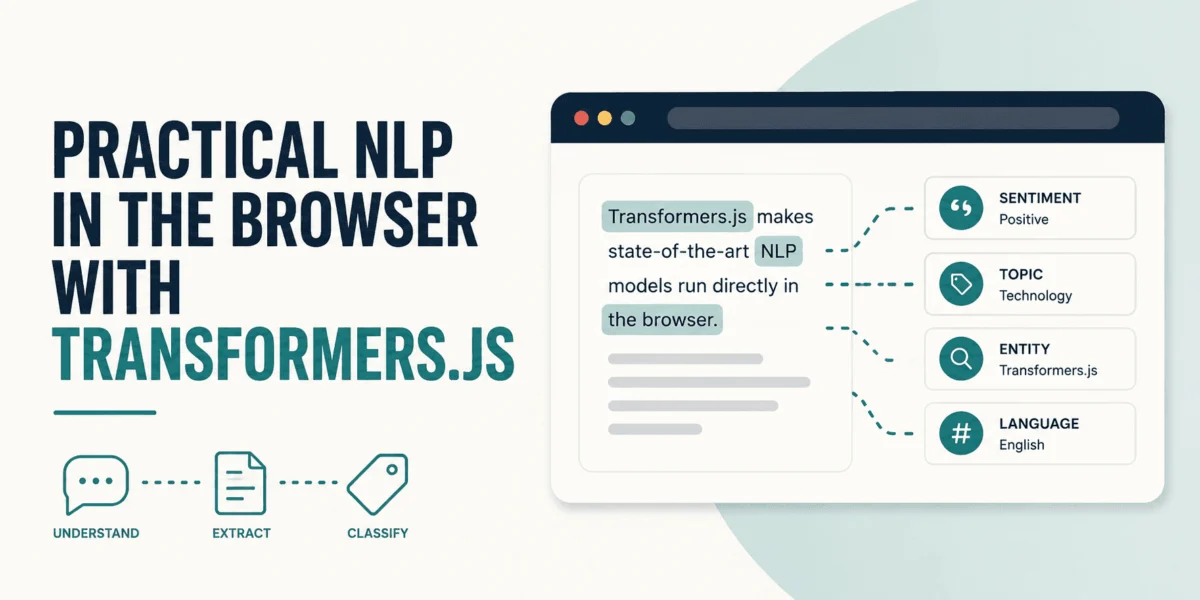

For AI agents to exhibit truly intelligent and adaptive behavior, they require robust memory capabilities—the ability to recall past interactions, contextual information, and domain-specific knowledge. Traditional databases are ill-equipped to handle the semantic search required for such memory. This is where vector databases become indispensable. These specialized databases store numerical representations of text, images, or other data (known as embeddings), enabling agents to perform highly efficient similarity searches based on meaning rather than keywords.
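To make "similarity search based on meaning" concrete, here is a toy sketch of the comparison a vector database performs. The three-dimensional vectors are invented for illustration; real embedding models emit hundreds or thousands of dimensions, and a production database uses approximate indexes rather than the brute-force scan shown here.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query: list[float], memory: dict[str, list[float]], top_k: int = 1) -> list[str]:
    """Rank stored texts by cosine similarity to the query vector (brute force)."""
    ranked = sorted(
        memory.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]


# Hypothetical stored "memories" with made-up embeddings.
MEMORY = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.1, 0.9, 0.0],
    "office address": [0.0, 0.1, 0.9],
}
```

A query vector such as [0.8, 0.2, 0.0] points in nearly the same direction as the "refund policy" entry, so most_similar ranks that entry first even though no keywords are compared.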

Qdrant stands out as a high-performance, open-source vector database, meticulously engineered in Rust for speed and reliability. It offers both gRPC and REST APIs, making it versatile for various integration patterns. Deploying Qdrant within a Docker container instantly provides a production-grade, scalable memory system for AI agents, abstracting away the complexities of its underlying infrastructure.

Why It Matters for Agentic Developers: Building a Retrieval-Augmented Generation (RAG) agent, a common and powerful architecture, necessitates the efficient storage and rapid retrieval of document embeddings. Qdrant serves as the agent’s long-term memory, a repository of knowledge that extends beyond the immediate context window of an LLM. When an agent receives a query, it can transform that query into a vector embedding, query Qdrant for semantically similar vectors representing relevant knowledge fragments, and then integrate this retrieved context into its prompt to the LLM. This process significantly enhances the agent’s ability to provide accurate, contextually rich, and relevant responses. Containerizing Qdrant ensures this critical memory layer is decoupled from the agent’s core application logic, fostering a more resilient and modular architecture. This separation allows for independent scaling and management of the memory component, which is vital as an agent’s knowledge base grows.

Initiating a Quick Start: Qdrant can be launched with a single Docker command, exposing its API and dashboard on port 6333 and its gRPC interface on port 6334:

docker run -d -p 6333:6333 -p 6334:6334 qdrant/qdrant

Upon successful execution, the agent can connect to localhost:6333. Note that this command mounts no volume, so stored vectors do not reliably survive removal of the container; mounting a volume at /qdrant/storage keeps them. As the agent "learns" new information, it can generate embeddings of this knowledge and store them in Qdrant. Subsequently, when engaging in new conversations or tasks, the agent can search this knowledge base, retrieve pertinent "memories," and incorporate them into its decision-making and response generation. Other notable vector databases in the ecosystem include Pinecone, Weaviate, and Chroma, each with its own strengths, but Qdrant offers a robust open-source option for local development.
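The store-then-search loop described above can be sketched against Qdrant's REST API on port 6333. This is a minimal sketch under stated assumptions: the collection name agent_memory and the helper names are hypothetical, the embeddings are assumed to come from a separate model, and the points/search endpoint is used for brevity (newer Qdrant releases also expose a more general query endpoint, so check the documentation for the version in use).

```python
import json
import urllib.request

QDRANT_URL = "http://localhost:6333"
COLLECTION = "agent_memory"  # hypothetical collection name


def _request(method: str, path: str, payload: dict) -> urllib.request.Request:
    """Build a JSON request against the local Qdrant REST API."""
    return urllib.request.Request(
        f"{QDRANT_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method=method,
    )


def create_collection_request(vector_size: int) -> urllib.request.Request:
    """Create a collection whose vectors are compared by cosine similarity."""
    body = {"vectors": {"size": vector_size, "distance": "Cosine"}}
    return _request("PUT", f"/collections/{COLLECTION}", body)


def upsert_request(point_id: int, vector: list[float], text: str) -> urllib.request.Request:
    """Store one embedding together with the text it was computed from."""
    point = {"id": point_id, "vector": vector, "payload": {"text": text}}
    return _request("PUT", f"/collections/{COLLECTION}/points", {"points": [point]})


def search_request(query_vector: list[float], limit: int = 3) -> urllib.request.Request:
    """Ask for the stored points closest to the query embedding."""
    body = {"vector": query_vector, "limit": limit, "with_payload": True}
    return _request("POST", f"/collections/{COLLECTION}/points/search", body)


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Execute a request and decode the JSON reply; matches are under "result"."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)
```

An agent would call send(create_collection_request(...)) once, send(upsert_request(...)) whenever it learns something, and send(search_request(...)) before prompting the LLM. The official qdrant-client package wraps the same operations and is likely the better choice beyond a prototype.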

3. n8n: Orchestrating Complex Agentic Workflows and Integrations

AI agents are designed to interact with the real world, which often means connecting to a myriad of external services—email platforms, CRM systems like HubSpot, communication tools such as Slack, or various enterprise applications. Manually coding API integrations for each of these services can be an arduous, error-prone, and time-consuming process, diverting valuable development resources from the agent’s core intelligence.

n8n (pronounced "n-eight-n") provides a sophisticated yet accessible solution as a fair-code workflow automation tool. It empowers developers to visually construct intricate workflows that connect disparate services without writing extensive custom integration code. By running n8n locally within a Docker container, developers can design and implement complex automation sequences—for example, "If an agent identifies a sales lead based on a customer inquiry, automatically add the lead to HubSpot and dispatch a Slack notification to the sales team." This graphical approach significantly streamlines the integration process, enhancing developer productivity and reducing the technical debt associated with custom API wrappers.

Initiating a Quick Start: To ensure the persistence of created workflows, it is advisable to mount a Docker volume. The following command sets up n8n using SQLite as its default database, making it immediately functional:

docker run -d --name n8n -p 5678:5678 -v n8n_data:/home/node/.n8n n8nio/n8n

Utility for Agentic Developers: The strategic advantage of n8n for agentic development lies in its ability to externalize and simplify the "hands" of the agent, that is, its interaction layer with external systems. An AI agent can be designed to simply call a predefined n8n webhook URL, transmitting relevant data. n8n then assumes responsibility for orchestrating the complex, multi-step logic of interacting with various third-party APIs. This architectural separation cleanly demarcates the agent’s "brain" (the LLM and its reasoning capabilities) from its "hands" (the integration and action execution). This modularity not only makes the agent more manageable and robust but also allows for rapid modification of external integrations without altering the core agent logic. Developers can access the n8n visual editor at http://localhost:5678 to begin constructing automation workflows that augment their agents’ capabilities.
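The "agent calls a webhook" pattern can be sketched as follows. It assumes a workflow that starts with a Webhook node configured for POST on the path new-lead (a hypothetical name) and that the workflow is active; while a workflow is still being edited, n8n serves the same path under /webhook-test/ instead of /webhook/.

```python
import json
import urllib.request

N8N_URL = "http://localhost:5678"
WEBHOOK_PATH = "new-lead"  # hypothetical path set on the workflow's Webhook node


def build_webhook_request(lead: dict) -> urllib.request.Request:
    """Build the POST request that hands a detected lead over to n8n."""
    return urllib.request.Request(
        f"{N8N_URL}/webhook/{WEBHOOK_PATH}",
        data=json.dumps(lead).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def notify_n8n(lead: dict, timeout: float = 30.0) -> int:
    """Trigger the workflow and return the HTTP status code n8n replies with."""
    with urllib.request.urlopen(build_webhook_request(lead), timeout=timeout) as resp:
        return resp.status
```

The agent only needs to know the URL and the shape of the JSON it sends, for example notify_n8n({"name": "Ada", "email": "ada@example.com"}). Everything after that, such as the HubSpot and Slack steps, lives in the n8n workflow and can change without touching agent code.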

4. Firecrawl: Bridging Agents to Real-World Web Data

A fundamental requirement for many AI agents, particularly those focused on research, content aggregation, or real-time information retrieval, is the ability to ingest and process data from the public web. However, directly feeding raw HTML or JavaScript-rendered web pages to an LLM is highly inefficient and often yields poor results due to extraneous elements like advertisements, navigation bars, and complex scripting. LLMs require clean, semantically structured text, ideally in formats like Markdown, for optimal comprehension.

Firecrawl addresses this critical need by providing an API service designed to transform web content into LLM-ready data. It can crawl a given URL, intelligently render JavaScript, and meticulously convert the page’s core content into clean Markdown or structured data formats. Crucially, Firecrawl automatically identifies and removes boilerplate content, ensuring that the agent receives only the most relevant information. Running Firecrawl locally via Docker provides an invaluable advantage, bypassing the usage limitations and potential costs associated with its cloud-hosted counterpart.

Initiating a Quick Start: Firecrawl is composed of multiple services, including the main API, Redis for job queuing, and a Playwright service for browser automation. Consequently, it is deployed using a docker-compose.yml file. Developers can set it up by cloning its repository and starting the compose stack (the repository’s self-hosting guide also describes environment variables to set in a .env file before the first start):

git clone https://github.com/mendableai/firecrawl.git

cd firecrawl

docker compose up

Utility for Agentic Developers: Firecrawl gives AI agents the ability to ingest and process live web data efficiently. Consider a research agent tasked with synthesizing information from multiple online sources. This agent can programmatically invoke its local Firecrawl instance to fetch specific web pages. Firecrawl then processes these pages, extracting clean text. The agent can then chunk this text into manageable segments, generate embeddings for each segment, and store them in its Qdrant vector database. This workflow enables the agent to build a dynamic, real-time knowledge base from the web, significantly enhancing its ability to provide up-to-date information. It transforms the agent from a passive information processor into an active web explorer, making it an essential tool for any agent requiring external, current data.
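The fetch-then-chunk part of that workflow might look like the sketch below. Several details are assumptions to verify against the Firecrawl repository: that the self-hosted API listens on port 3002, that the /v1/scrape endpoint is available in the checked-out version, and that authentication is disabled for the local instance. The chunking function is a deliberately simple character-based splitter, not Firecrawl functionality.

```python
import json
import urllib.request

# Assumed default address of a self-hosted Firecrawl API; adjust if your
# compose configuration publishes a different port.
FIRECRAWL_URL = "http://localhost:3002"


def build_scrape_request(url: str) -> urllib.request.Request:
    """Build a request asking Firecrawl to return one page as Markdown."""
    payload = {"url": url, "formats": ["markdown"]}
    return urllib.request.Request(
        f"{FIRECRAWL_URL}/v1/scrape",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def scrape_markdown(url: str, timeout: float = 120.0) -> str:
    """Fetch a page through the local Firecrawl instance and return its Markdown."""
    with urllib.request.urlopen(build_scrape_request(url), timeout=timeout) as resp:
        body = json.load(resp)
    return body["data"]["markdown"]


def chunk_text(text: str, max_chars: int = 500, overlap: int = 50) -> list[str]:
    """Split text into overlapping character windows ready for embedding."""
    if overlap >= max_chars:
        raise ValueError("overlap must be smaller than max_chars")
    step = max_chars - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + max_chars])
        if start + max_chars >= len(text):
            break  # the last window already reached the end of the text
    return chunks
```

Each chunk returned by chunk_text(scrape_markdown(...)) would then be embedded and written to the vector store. Splitting on headings or sentences usually gives better retrieval than fixed character windows; the fixed-size version is used here only to keep the example short.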

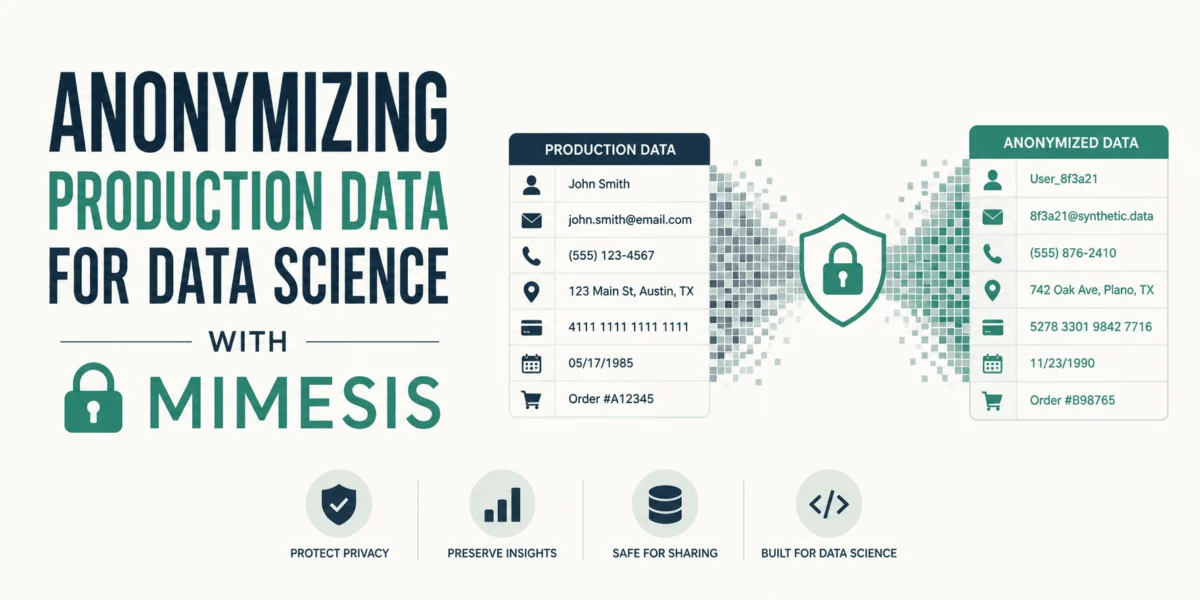

5. PostgreSQL and pgvector: Unifying Structured Data and Vector Memory for Stateful Agents

While vector databases excel at semantic search and long-term memory based on embeddings, many AI agent applications also require the robust capabilities of a traditional relational database for managing structured data. This often includes user profiles, transaction histories, configuration settings, or the agent’s internal state. Historically, this has necessitated running separate systems: a vector database for semantic memory and a SQL database for structured data. This dual-database architecture can introduce complexity, potential inconsistencies, and increased operational overhead.

PostgreSQL, a highly respected and powerful open-source relational database, offers an elegant solution to this dilemma through its pgvector extension. This extension seamlessly integrates vector embeddings directly into PostgreSQL, allowing developers to store both structured data (e.g., a user’s name, age, preferences) and high-dimensional vector embeddings (e.g., conversation history embeddings, user interaction patterns) within the same database instance. This unified approach provides the best of both worlds, enabling sophisticated hybrid searches—for instance, "Find all conversations from users in New York about refunds that are semantically similar to this new support ticket."

Initiating a Quick Start: The standard PostgreSQL Docker image does not include the pgvector extension by default. To leverage this combined capability, a specialized image that pre-integrates pgvector is required. An image provided by the pgvector organization itself is ideal:

docker run -d --name postgres-pgvector -p 5432:5432 -e POSTGRES_PASSWORD=mysecretpassword pgvector/pgvector:pg16

The image ships with pgvector installed, but the extension still has to be enabled once in each database that uses it, by running CREATE EXTENSION vector; in that database.

Utility for Agentic Developers: This combined PostgreSQL and pgvector setup is a strong backend for stateful AI agents. A stateful agent, by definition, must remember its past interactions and maintain an internal state to provide coherent and personalized experiences. With this unified database, an agent can write its "memories" (vector embeddings of interactions, observations, or learned facts) and its internal operational state (e.g., current task, user context, flags) into the same database where the broader application data resides. This keeps data consistent across the system, simplifies the architectural footprint, and supports queries that blend traditional relational logic with semantic search. For agents that require a persistent, nuanced understanding of their environment and users, this integrated database solution is invaluable.
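The hybrid query mentioned earlier (conversations from users in New York about refunds, ranked by similarity to a new ticket) could be expressed roughly as follows. The conversations table, its columns, and the three-dimensional embedding are hypothetical and chosen only to keep the example small. The helper assumes a DB-API style connection that uses %s placeholders, as the psycopg drivers do; no driver is imported here.

```python
# Schema sketch: relational columns and an embedding column in one table.
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS conversations (
    id        bigserial PRIMARY KEY,
    user_city text NOT NULL,
    topic     text NOT NULL,
    body      text NOT NULL,
    embedding vector(3)  -- toy dimension; match your embedding model in practice
);
"""

# Relational filters narrow the rows; pgvector's <=> operator (cosine
# distance) orders what remains, smallest distance first.
HYBRID_SEARCH_SQL = """
SELECT id, body, embedding <=> %s::vector AS cosine_distance
FROM conversations
WHERE user_city = %s AND topic = %s
ORDER BY cosine_distance
LIMIT %s;
"""


def to_vector_literal(values) -> str:
    """Format numbers as pgvector's text representation, e.g. [1.0,2.5,0.0]."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


def find_similar(conn, ticket_embedding, city: str, topic: str, limit: int = 5):
    """Run the hybrid query on an open connection and return the matching rows."""
    cur = conn.cursor()
    try:
        params = (to_vector_literal(ticket_embedding), city, topic, limit)
        cur.execute(HYBRID_SEARCH_SQL, params)
        return cur.fetchall()
    finally:
        cur.close()
```

With a connection to the container started above, find_similar(conn, embedding, "New York", "refunds") returns the closest matching conversations in one round trip, which is the practical benefit of keeping both kinds of data in the same database.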

Broader Implications for AI Agent Development

The strategic adoption of Docker for managing these five essential components has profound implications for the AI agent development lifecycle and the broader landscape of AI innovation. Firstly, it significantly lowers the barrier to entry for developers, enabling them to experiment with cutting-edge AI architectures without substantial upfront cloud infrastructure investment. This fosters greater innovation and democratizes access to powerful tools.

Secondly, the enhanced privacy and security offered by local execution of LLMs and data storage within controlled environments are critical for sensitive applications in healthcare, finance, and defense, where data sovereignty is paramount. The ability to prototype and even deploy agents that handle confidential information without exposing it to third-party cloud services is a game-changer for enterprise AI adoption.

Thirdly, the consistency and portability afforded by Docker containers translate into more robust and reliable AI systems. Developers can be confident that an agent tested locally will behave identically in staging or production environments, minimizing debugging time and deployment risks. This operational efficiency is crucial as AI agents become more deeply embedded in critical business processes.

Finally, by compartmentalizing core services—LLM inference, long-term memory, external integrations, web data ingestion, and structured data management—Docker promotes a modular architectural pattern. This modularity facilitates easier updates, scaling of individual components, and the integration of new technologies as the AI landscape continues to evolve. It allows developers to swap out components (e.g., try a new LLM, switch vector databases) with minimal disruption to the overall agent system. This agility is key in a rapidly advancing field like AI.

Wrapping Up: The Future of Agentic Development with Docker

The journey to building sophisticated, intelligent AI agents does not inherently demand an exorbitant cloud budget or a sprawling infrastructure team. The vibrant Docker ecosystem provides a suite of production-grade alternatives that are perfectly capable of running efficiently and effectively on a developer’s laptop, or scaling seamlessly to more powerful local or cloud environments when needed.

By integrating these five pivotal Docker containers into their development workflow, AI agent developers equip themselves with a powerful and comprehensive toolkit, fundamentally transforming their capabilities:

- Ollama: Enables cost-effective, private, and high-speed local inference with open-source Large Language Models, empowering agents with their own "brain."

- Qdrant: Provides agents with robust, scalable, and semantically searchable long-term memory, crucial for contextual awareness and knowledge retrieval.

- n8n: Simplifies complex external integrations and workflow automation, allowing agents to interact with the real world through a visual, no-code interface.

- Firecrawl: Grants agents the ability to intelligently ingest and process clean, LLM-ready data from dynamic web pages, fueling research and real-time information gathering.

- PostgreSQL with pgvector: Offers a unified and powerful backend for managing both structured application data and high-dimensional vector embeddings, creating truly stateful and intelligent agents.

The synergy of these tools, all orchestrated through Docker, allows developers to bypass common hurdles and accelerate the development cycle. Begin by spinning up these containers, configure your LangChain or CrewAI agents to interact with these local endpoints, and witness your AI creations transcend their limitations, coming to life with enhanced intelligence, memory, and interaction capabilities. This approach represents a paradigm shift, placing unprecedented control and flexibility directly into the hands of AI agent developers.

Further Reading

- Ollama Docker Hub: https://hub.docker.com/r/ollama/ollama

- Qdrant Documentation: https://qdrant.tech/documentation/overview/

- n8n Docker Hub: https://hub.docker.com/r/n8nio/n8n

- Firecrawl GitHub: https://github.com/mendableai/firecrawl

- pgvector GitHub: https://github.com/pgvector/pgvector

Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.
