Software development, particularly in Python programming and data science, has long been plagued by environment dependency problems. Code that runs flawlessly on one machine often fails on another because of different Python versions, conflicting virtual environments, mismatched system-level packages, or operating system differences. Debugging these inconsistencies can consume more time than writing the code itself. Docker has become a widely adopted answer to the problem: a platform that has reshaped how applications and data projects are packaged, distributed, and executed.

Docker addresses this by packaging an application together with its runtime environment (the specific Python version, all required dependencies, system libraries, and the operating system’s userland configuration) into a single portable unit called an image. Containers launched from this immutable image behave the same way on a local development machine, a teammate’s workstation, or a remote cloud server, provided the host runs a compatible Docker engine and CPU architecture. The one piece not packaged is the kernel, which containers share with the host. This largely eliminates the laborious debugging of environmental inconsistencies and lets teams focus on shortening development and deployment cycles.

The adoption of containerization, led by Docker, has become a cornerstone of modern development practice, and it is especially relevant for data scientists and machine learning engineers who work in complex ecosystems. One 2023 industry report claimed that over 70% of organizations using AI and machine learning had integrated containerization into their MLOps pipelines, which points to Docker’s role in operationalizing data projects. The platform’s versatility shows in a range of practical applications, from containerizing a standalone Python script to orchestrating multi-service data pipelines and scheduling routine data-fetching jobs.

The Foundational Step: Containerizing a Python Script for Reliability

At its core, Docker’s utility for data professionals begins with ensuring the reliable execution of individual Python scripts. Consider a common scenario in data preparation: a script designed to clean raw sales data, removing duplicates, imputing missing values, and saving a refined output. In traditional setups, distributing such a script alongside its requirements.txt file often led to "it works on my machine" conundrums due to subtle variations in dependency versions or system libraries.

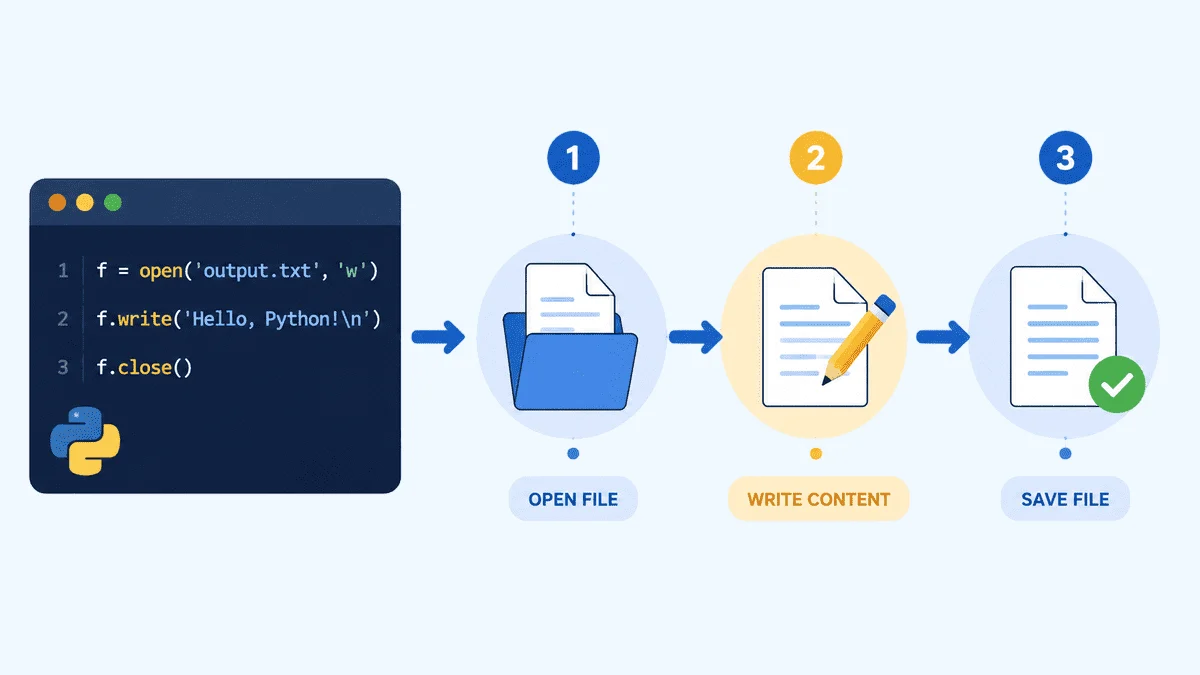

With Docker, this process is streamlined. A Dockerfile acts as a blueprint that defines the steps for building an isolated environment. It typically starts from a minimal Python base image (e.g., python:3.11-slim), sets a working directory, copies a requirements.txt file with pinned versions, installs the dependencies, and only then copies the main script. This ordering matters because of Docker’s layer caching: each instruction produces a cached layer, and a layer is rebuilt only when its own inputs or an earlier layer change. When only the script is edited, the cached dependency layer is reused and the dependency installation does not run again, which significantly shortens rebuilds.
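To make the ordering argument concrete, the Python sketch below models the build cache in a deliberately simplified way (an assumption, not Docker’s actual implementation): each layer’s cache key hashes the previous layer’s key, the instruction text, and the contents of any files the instruction copies, so one changed key invalidates every later layer. The step lists mirror the Dockerfile steps described above, and the script name clean_sales.py is hypothetical.

```python
import hashlib


def layer_keys(steps, files):
    """Simplified cache model (an assumption, not Docker's real algorithm).

    A layer's key hashes its parent's key, its instruction text, and the
    contents of the files it copies, so a changed key also changes every
    key after it.
    """
    keys, parent = [], ""
    for instruction, copied in steps:
        h = hashlib.sha256(parent.encode())
        h.update(instruction.encode())
        for name in copied:
            h.update(files[name].encode())
        parent = h.hexdigest()
        keys.append(parent)
    return keys


def rebuilt_steps(steps, old_files, new_files):
    """List the instructions whose layers would be rebuilt after a file edit."""
    old = layer_keys(steps, old_files)
    new = layer_keys(steps, new_files)
    return [instr for (instr, _), a, b in zip(steps, old, new) if a != b]


# Dependencies installed before the script is copied.
deps_first = [
    ("FROM python:3.11-slim", []),
    ("WORKDIR /app", []),
    ("COPY requirements.txt .", ["requirements.txt"]),
    ("RUN pip install --no-cache-dir -r requirements.txt", []),
    ("COPY clean_sales.py .", ["clean_sales.py"]),
]

# Everything copied before dependencies are installed.
copy_all_first = [
    ("FROM python:3.11-slim", []),
    ("WORKDIR /app", []),
    ("COPY . .", ["requirements.txt", "clean_sales.py"]),
    ("RUN pip install --no-cache-dir -r requirements.txt", []),
]

v1 = {"requirements.txt": "pandas==2.2.0\n", "clean_sales.py": "print('v1')\n"}
v2 = {**v1, "clean_sales.py": "print('v2')\n"}  # only the script changes

print(rebuilt_steps(deps_first, v1, v2))      # ['COPY clean_sales.py .']
print(rebuilt_steps(copy_all_first, v1, v2))  # ['COPY . .', 'RUN pip install --no-cache-dir -r requirements.txt']
```

With dependencies installed first, editing the script invalidates only the final COPY layer; copying everything up front drags the expensive pip install layer into every rebuild.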

For instance, a data cleaning script using the Pandas library would pin its exact Pandas version (e.g., pandas==2.2.0) in requirements.txt, ideally alongside transitive dependencies such as NumPy, and the Docker image bakes in that exact environment. When the container runs, a local data directory can be bind-mounted into it (for example with docker run -v), so the script reads raw data from the host and writes cleaned data back. The data persists outside the container while the processing itself stays isolated. Every execution therefore uses the same validated environment on any host that can run the image, which supports reproducibility, a paramount concern in data science.
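A minimal sketch of such a cleaning script follows. The file layout (raw_sales.csv in, clean_sales.csv out), the function names, and the imputation rules (column median for numeric gaps, the placeholder "unknown" for text) are illustrative assumptions rather than a prescribed method. The data directory is passed as a command-line argument so it can point at the bind-mounted path inside the container.

```python
import sys
from pathlib import Path

import pandas as pd


def clean_sales(df: pd.DataFrame) -> pd.DataFrame:
    """Drop duplicate rows, then fill missing values with illustrative rules."""
    cleaned = df.drop_duplicates().reset_index(drop=True)
    for col in cleaned.columns:
        if pd.api.types.is_numeric_dtype(cleaned[col]):
            # Hypothetical rule: numeric gaps get the column median.
            cleaned[col] = cleaned[col].fillna(cleaned[col].median())
        else:
            # Hypothetical rule: text gaps are labelled rather than dropped.
            cleaned[col] = cleaned[col].fillna("unknown")
    return cleaned


def run(data_dir: Path) -> Path:
    """Read raw_sales.csv from the mounted directory and write clean_sales.csv beside it."""
    raw = pd.read_csv(data_dir / "raw_sales.csv")
    out_path = data_dir / "clean_sales.csv"
    clean_sales(raw).to_csv(out_path, index=False)
    return out_path


if __name__ == "__main__":
    if len(sys.argv) != 2:
        print("usage: clean_sales.py DATA_DIR", file=sys.stderr)
    else:
        print(f"wrote {run(Path(sys.argv[1]))}")
```

Inside the container, the image’s command could then be python clean_sales.py /app/data, with the host’s data folder mounted at /app/data (both paths hypothetical).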

Elevating Deployment: Serving Machine Learning Models with FastAPI

Beyond batch processing, a significant challenge in data science is deploying trained machine learning models as accessible services. Organizations increasingly need models available through HTTP APIs, so that other applications or front-end services can send input data and receive real-time predictions. FastAPI, a modern, high-performance Python framework for building APIs, pairs naturally with Docker in this context.

FastAPI’s built-in input validation, powered by Pydantic, rejects malformed requests (missing fields or wrong types) with a 422 response before they reach the model. Combined with Docker, the entire model-serving application (the FastAPI server, the pre-trained model artifact such as a model.pkl file, and all Python dependencies) can be packaged into a single self-contained image. This sidesteps most of the complexity of managing server environments, dependency conflicts, and differing operating systems when deploying models to production.

A typical Dockerfile for an ML API copies the model artifact directly into the image so that the container is self-sufficient. The application, served by an ASGI server such as Uvicorn, exposes endpoints such as /predict for inference and /health for readiness checks; the latter lets load balancers and orchestration platforms like Kubernetes route traffic only to instances that are ready. This containerized approach keeps model behavior consistent, simplifies scaling, and fits naturally into broader microservices architectures. Some industry analyses report that containerizing ML models has cut deployment times by up to 40% for many enterprises, shortening time-to-market for new AI-powered features.

Orchestrating Complexity: Multi-Service Pipelines with Docker Compose

Real-world data projects seldom operate in isolation; they are usually components of larger, interconnected systems. A typical data pipeline might involve a PostgreSQL database for storage, a Python script that loads data into it, and a dashboard application that visualizes the results, all of which must interact reliably. Managing these services individually adds considerable operational overhead. Docker Compose addresses this by defining and running multi-container Docker applications from a single configuration.

Docker Compose allows developers to declare an entire application stack within a single docker-compose.yml file. Each service (e.g., db, loader, dashboard) runs in its own isolated container but communicates over a private, Docker-managed network using service names as hostnames. This simplifies network configuration and promotes a microservices architecture, where each component can be developed, deployed, and scaled independently.

Crucially, Docker Compose lets you declare inter-service dependencies and health checks. For instance, a data loader script should only attempt to connect to the database once the database service is ready to accept connections. Setting depends_on with condition: service_healthy on the loader enforces this startup order and prevents a common race condition, provided the db service defines a healthcheck (for PostgreSQL, typically one that runs pg_isready). Persistent named volumes (e.g., pgdata for PostgreSQL) keep critical data intact when containers are stopped, removed, and recreated, unless the volume itself is deleted, for example with docker compose down -v. Together, these features make it considerably easier for data engineers to stand up complex data stacks that behave the same on every machine.
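On the loader side, the Compose service name simply becomes the database host. The sketch below assumes a service named db and the environment variable names shown (all hypothetical), and wraps the first connection in a retry with exponential backoff. This complements rather than replaces the healthcheck: depends_on only gates startup, so a retry also covers a database that is briefly unavailable whenever the loader connects.

```python
import os
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def dsn_from_env() -> str:
    """Build a libpq-style DSN; "db" is the assumed Compose service name, used as the hostname."""
    host = os.environ.get("DB_HOST", "db")
    port = os.environ.get("DB_PORT", "5432")
    dbname = os.environ.get("POSTGRES_DB", "sales")  # hypothetical database name
    user = os.environ.get("POSTGRES_USER", "postgres")
    return f"host={host} port={port} dbname={dbname} user={user}"


def connect_with_retry(
    connect: Callable[[], T],
    attempts: int = 5,
    delay: float = 1.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
) -> T:
    """Call connect() until it succeeds, doubling the wait after each failure.

    In real code, narrow retry_on to the driver's connection error.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return connect()
        except retry_on:
            if attempt == attempts:
                raise  # give up and surface the last error
            time.sleep(delay)
            delay *= 2
    raise AssertionError("unreachable")
```

With psycopg2 installed, for example, a loader might call connect_with_retry(lambda: psycopg2.connect(dsn_from_env()), retry_on=(psycopg2.OperationalError,)). libpq reads the password from the PGPASSWORD environment variable, so it need not appear in the DSN.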

Automating Tasks: Scheduling Jobs with a Cron Container

For data tasks that require periodic execution, such as hourly data fetches from an API, daily report generation, or weekly aggregations, a dedicated cron container offers a lightweight solution without the complexity of a full-fledged orchestration platform like Apache Airflow. This pattern suits smaller-scale recurring jobs where simple scheduled execution is sufficient.

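A cron container packages the Python script (e.g., fetch_data.py), its dependencies, and a traditional crontab file into a Docker image. Its Dockerfile typically installs cron, copies the script and the crontab file, registers the schedule, and then starts cron in the foreground (cron -f). Running cron in the foreground is critical: Docker keeps a container alive only while its main process runs, so a cron daemon that forks into the background would let the container exit immediately. Note also that cron starts jobs with a minimal environment that does not include the container’s environment variables, so the crontab should call the interpreter by its absolute path (/usr/local/bin/python in the official Python images) and pass any settings explicitly.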

By mounting a local output directory into the container, the scheduled script can write its results (e.g., timestamped CSV files) directly to the host filesystem, where they persist and remain easy to access for further processing or archival. Redirecting output in the crontab (e.g., >> /var/log/fetch.log 2>&1) captures both stdout and stderr in a log file, which can be read with docker exec or placed on a mounted volume, making it easier to monitor runs and troubleshoot failures. This containerized cron approach offers a dependable, isolated mechanism for automating routine data tasks and reducing manual intervention.
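A minimal fetch_data.py along these lines is sketched below. The JSON response shape, the prices_ file prefix, and the command-line interface (URL and output directory passed as arguments, so the job does not depend on environment variables) are assumptions for illustration. Each run writes a UTC-timestamped CSV into the mounted directory and prints a one-line summary that the crontab redirection sends to the log.

```python
from __future__ import annotations

import csv
import json
import sys
import urllib.request
from datetime import datetime, timezone
from pathlib import Path


def fetch_records(url: str) -> list[dict]:
    """Download a JSON array of flat objects (assumed response shape)."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return json.load(resp)


def write_snapshot(records: list[dict], out_dir: Path, now: datetime | None = None) -> Path:
    """Write records to a UTC-timestamped CSV so every run keeps its own file."""
    now = now or datetime.now(timezone.utc)
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"prices_{now:%Y%m%dT%H%M%SZ}.csv"  # hypothetical file prefix
    # Union of keys across records, so rows missing a field get an empty cell.
    fieldnames = sorted({key for row in records for key in row})
    with path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(records)
    # stdout is appended to the log file by the crontab's >> redirection.
    print(f"{now.isoformat()} wrote {len(records)} rows to {path}")
    return path


if __name__ == "__main__":
    if len(sys.argv) != 3:
        # stderr also reaches the log because of 2>&1 in the crontab entry.
        print("usage: fetch_data.py URL OUT_DIR", file=sys.stderr)
    else:
        write_snapshot(fetch_records(sys.argv[1]), Path(sys.argv[2]))
```

A matching crontab entry might look like 0 * * * * /usr/local/bin/python /app/fetch_data.py URL /data >> /var/log/fetch.log 2>&1, where URL, /app, and /data are placeholders.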

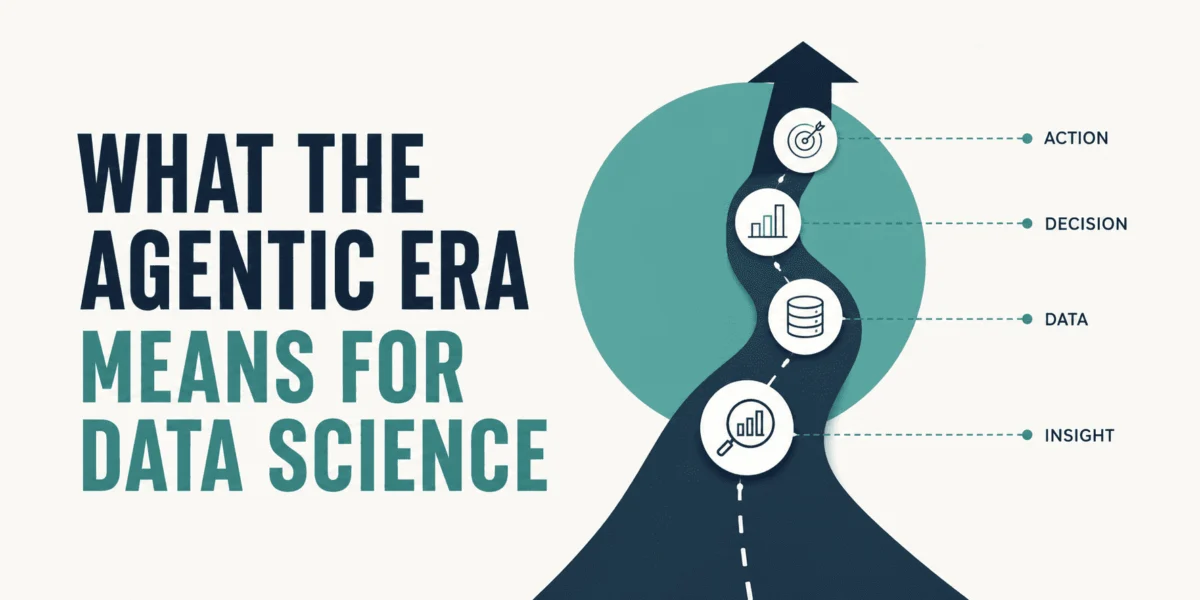

Broader Implications and Industry Trajectory

The widespread adoption of Docker across Python and data projects signals a shift toward more reproducible, scalable, and collaborative development practices. For data scientists, it means less time spent on environment setup and more on modeling and analysis. For MLOps engineers, container images are a common building block of CI/CD pipelines, since the same image can be tested and then promoted to deployment unchanged. Organizations benefit from faster development cycles, lower operational overhead, and more reliable data infrastructure.

Containerization also improves collaboration. When every team member builds from the same Dockerfile or pulls the same image, they all work with the same dependencies and runtime regardless of their local machine configuration, which minimizes integration issues across the development lifecycle. Docker also integrates well with cloud platforms: major providers offer extensive support for containerized applications through services such as AWS ECS/EKS, Google Cloud Run/GKE, and Azure Container Instances/AKS, making it easier to scale and manage data applications on elastic cloud infrastructure.

However, Docker is not a panacea for every Python workload, and in some cases its overhead outweighs its benefits. For very simple single-script tasks that run infrequently and have minimal dependencies, setting up and maintaining a Docker image may be overkill. Workloads that need direct hardware access, such as GPU training, require extra setup: on NVIDIA hardware, for example, the host needs the NVIDIA Container Toolkit and containers must be started with GPU access enabled (docker run --gpus). For local exploratory data analysis or quick prototyping, where immediate execution matters more than strict isolation, a traditional virtual environment often suffices.

Despite these caveats, Docker’s role in the data science and Python ecosystems continues to grow. Its ability to encapsulate complexity, support reproducibility, and simplify deployment across diverse environments makes it a core tool for modern data professionals. As data projects grow in complexity and scale, Docker is likely to remain central to efficient, reliable, and collaborative development and operations.
