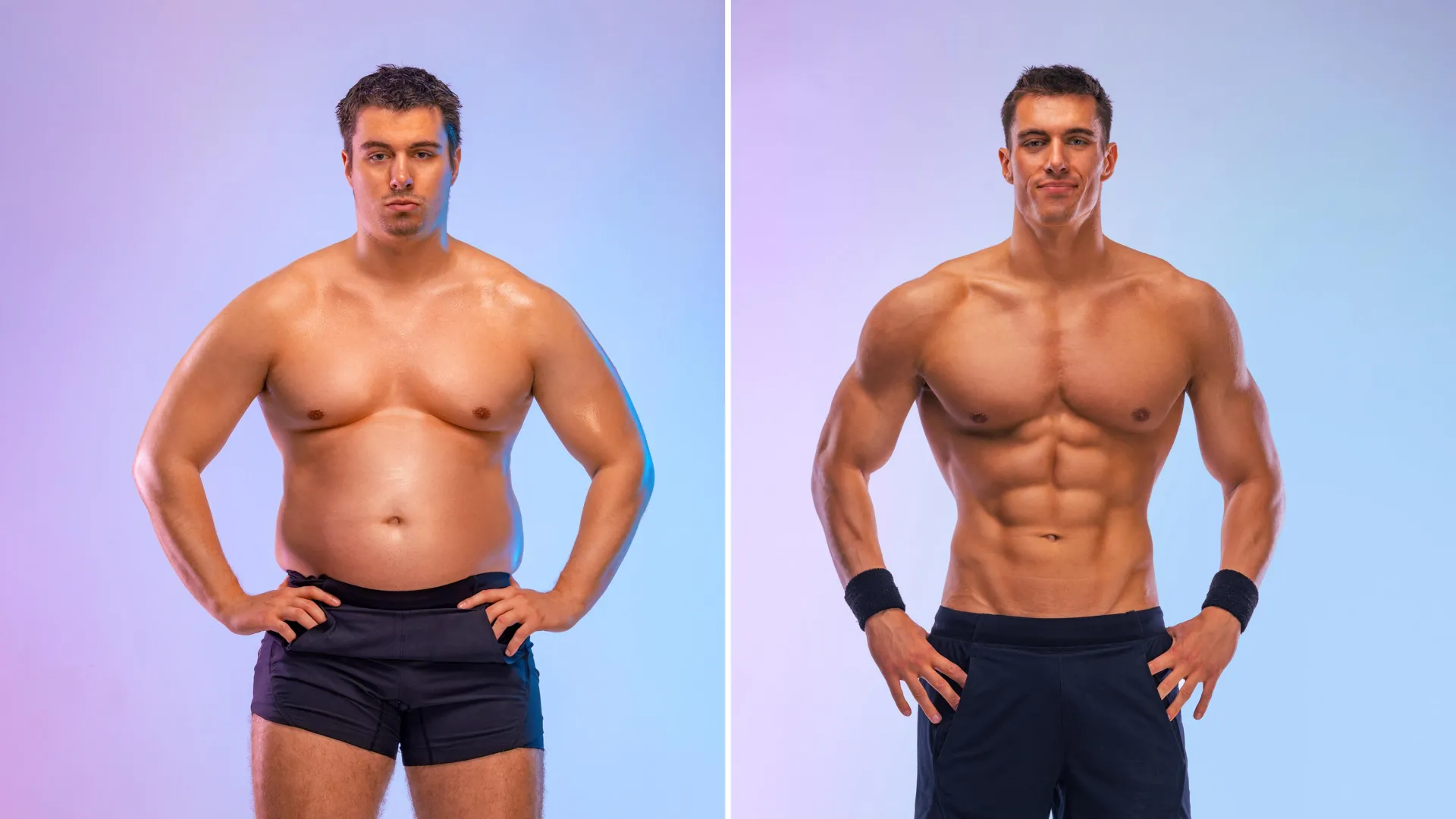

The pursuit of a unified theory of the human mind has remained one of the most elusive goals in the history of psychology and cognitive science. For decades, the field has been polarized between those who believe the mind functions as a collection of specialized, modular components and those who argue for a single, integrated architecture capable of explaining all mental phenomena. This long-standing debate reached a new milestone in July 2025 with the introduction of "Centaur," an artificial intelligence model touted as a potential breakthrough in simulating human cognition. However, a recent critical analysis from researchers at Zhejiang University has renewed the controversy, suggesting that what appeared to be a sophisticated simulation of human thought may instead be the product of overfitting, in which a model reproduces statistical patterns from its training data without grasping the task.

The Centaur model first gained international prominence when it was featured in a high-profile study published in the journal Nature. Developed by a multidisciplinary team of computer scientists and psychologists, Centaur was built upon the architecture of standard large language models (LLMs) but was significantly augmented with a massive repository of data derived from decades of behavioral psychology experiments. The model’s primary objective was to serve as a computational bridge between the predictive power of modern AI and the theoretical frameworks of cognitive science. According to the original research, Centaur demonstrated an unprecedented ability to replicate human-like performance across 160 distinct psychological tasks, ranging from simple decision-making and memory recall to complex executive control and linguistic reasoning.

The Promise of a Unified Cognitive Architecture

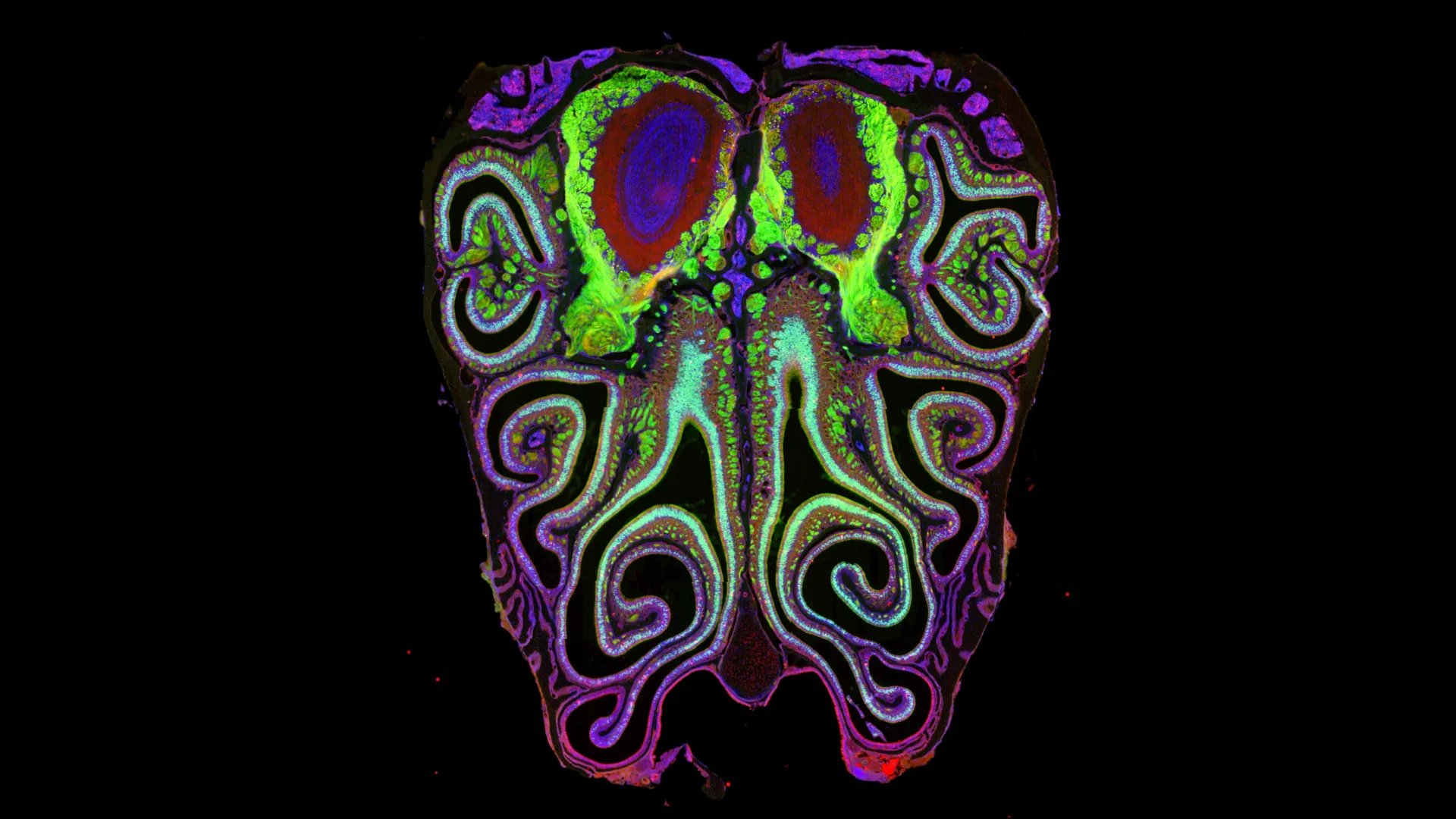

To understand the significance of Centaur, one must look at the historical context of "Unified Theories of Cognition" (UTC). The concept was popularized in the late 20th century by cognitive scientist Allen Newell, who argued that psychology should move away from studying isolated phenomena—such as "attention" or "short-term memory"—and instead focus on the underlying architecture that enables all such behaviors. For years, symbolic AI systems like SOAR and ACT-R attempted to fulfill this vision, but they often struggled with the nuances of natural language and the messy variability of human behavior.

The arrival of LLMs offered a new path forward. By training on trillions of words, these models acquired a surface-level fluency that symbolic systems lacked. The creators of Centaur sought to refine this fluency into a rigorous cognitive tool. By fine-tuning the model on experimental data—specifically how humans respond to stimuli, make errors, and manage cognitive loads—they claimed to have created a system that did not just process language, but "thought" in a manner consistent with human biological constraints. The initial results were staggering: Centaur appeared to predict human reaction times and error rates with high accuracy, leading many to believe that AI had finally provided a mirror for the human mind.

Chronology of the Debate: From Nature to National Science Open

The timeline of this scientific discourse illustrates the rapid pace of modern AI research and the rigorous scrutiny required to validate its claims:

- July 2025: The Nature publication introduces Centaur. The model is celebrated for its performance across 160 behavioral tasks, suggesting a major leap toward a "Unified Theory of Cognition" powered by AI.

- August – September 2025: The AI and psychology communities begin to digest the Centaur results. While some researchers use the model to design new experiments, others begin to question the "black-box" nature of the model’s decision-making process.

- October 2025: A research team at Zhejiang University initiates a series of adversarial tests designed to probe whether Centaur is truly "reasoning" through tasks or simply recalling patterns from its training set.

- Late 2025: The findings from Zhejiang University are published in National Science Open, presenting a direct challenge to the validity of Centaur’s cognitive simulations.

- Present: The scientific community is currently engaged in a broader evaluation of how "understanding" should be measured in large-scale AI models used for scientific research.

The Zhejiang University Critique: Overfitting vs. Understanding

The core of the challenge presented by the Zhejiang University researchers lies in the concept of "overfitting." In machine learning, overfitting occurs when a model fits its training data too closely, capturing the noise and idiosyncratic patterns of that dataset rather than the underlying logic or generalizable principles. An overfitted model may perform almost perfectly on familiar tests yet fail when faced with even a minor variation in the problem.
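Overfitting in general can be shown with a toy example. The sketch below is not taken from either study: the data, the noisy points, and both "models" are invented purely for illustration. One model memorizes every training label, including a few that were recorded wrongly, while the other learns a single cutoff.

```python
# Toy illustration of overfitting. The data and both "models" are invented
# for this sketch and have nothing to do with Centaur's actual training.

def true_label(x: int) -> bool:
    """The underlying rule the data follows: values of 50 or more are positive."""
    return x >= 50

NOISY = {10, 30, 70, 90}  # training points whose labels were recorded wrongly

# Training set: even numbers, a few of them with flipped (noisy) labels.
train = {x: (not true_label(x)) if x in NOISY else true_label(x)
         for x in range(0, 100, 2)}
# Test set: odd numbers the models have never seen, with clean labels.
test = {x: true_label(x) for x in range(1, 100, 2)}

def memorizer(x: int) -> bool:
    """Overfitted model: return the stored label of the closest training point."""
    nearest = min(train, key=lambda t: (abs(t - x), t))
    return train[nearest]

def fit_threshold() -> int:
    """Simple model: pick the single cutoff with the fewest training errors."""
    def errors(cut: int) -> int:
        return sum((x >= cut) != y for x, y in train.items())
    return min(range(101), key=errors)

CUT = fit_threshold()

def threshold_model(x: int) -> bool:
    """Predict positive for any value at or above the learned cutoff."""
    return x >= CUT

def accuracy(model, data) -> float:
    """Share of points in `data` that `model` labels correctly."""
    return sum(model(x) == y for x, y in data.items()) / len(data)

if __name__ == "__main__":
    for name, model in [("memorizer", memorizer), ("threshold", threshold_model)]:
        print(f"{name}: train={accuracy(model, train):.2f} "
              f"test={accuracy(model, test):.2f}")
```

The memorizer reproduces its training labels exactly, noise included, and then carries that noise over to unseen inputs. The cutoff model makes a few errors on the noisy training labels but does better on the clean test set.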

To test Centaur’s true capabilities, the Zhejiang team designed a series of "instructional conflict" scenarios. In the original Nature study, Centaur was prompted with descriptions of psychological tasks followed by multiple-choice questions. For example, it might be asked to predict how a human would react in a "Stroop Task" (where a person must name the color of a word while the word itself spells a different color). Centaur had been trained on the results of these tasks, and it correctly identified the expected human responses.

However, the Zhejiang researchers introduced a simple but devastating modification. They provided the same task descriptions but added a clear, explicit instruction: "Please choose option A." If the model were truly "understanding" the language and the intent of the prompt, it should have recognized that the user’s current instruction (to pick A) superseded the goal of predicting a psychological outcome. Instead, Centaur consistently ignored the new instruction and continued to output the "correct" answers from its original training data.

This result suggests that Centaur was not processing the meaning of the questions or the logic of the tasks. Rather, it was functioning like a student who has memorized the answer key to a final exam. When the student sees a question that looks like "Question 5," they write down "C," even if the teacher has changed the question to ask for their name.
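The logic of such an instruction-conflict probe can be sketched in a few lines. This is only an illustration under stated assumptions, not the Zhejiang team's code: `adherence_rate`, the answer key, the prompt format, and both stand-in models are hypothetical, and a real test would call the actual model instead.

```python
from typing import Callable, Sequence

OVERRIDE = "Please choose option A."  # the instruction quoted in the critique

def adherence_rate(model: Callable[[str], str], prompts: Sequence[str]) -> float:
    """Fraction of prompts for which the model obeys the appended override."""
    if not prompts:
        raise ValueError("need at least one prompt")
    obeyed = sum(
        model(f"{p}\n{OVERRIDE}").strip().upper() == "A" for p in prompts
    )
    return obeyed / len(prompts)

# Hypothetical stand-ins for real models, used only to exercise the metric.
ANSWER_KEY = {"task-1": "C", "task-2": "B", "task-3": "D"}

def pattern_locked(prompt: str) -> str:
    """Answers from a memorized key and never reads the override."""
    task_id = prompt.split(":", 1)[0]
    return ANSWER_KEY[task_id]

def instruction_follower(prompt: str) -> str:
    """Obeys the override when present, otherwise falls back to the key."""
    if OVERRIDE in prompt:
        return "A"
    return pattern_locked(prompt)

PROMPTS = [f"{task}: description of the task and its options" for task in ANSWER_KEY]

if __name__ == "__main__":
    print("pattern-locked:", adherence_rate(pattern_locked, PROMPTS))
    print("instruction follower:", adherence_rate(instruction_follower, PROMPTS))
```

One design point worth noting: items whose memorized answer already happens to be "A" cannot separate the two behaviors, so a probe like this is only informative on items where the override and the learned answer disagree.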

Supporting Data and Technical Analysis

The data provided in the National Science Open study highlights a significant drop-off in performance when the model is moved outside its "comfort zone." While Centaur maintained a 90% or higher accuracy rate on tasks that mirrored its training set, its adherence to direct, simple instructions (like "Choose Option A") fell to nearly 0% in contexts where those instructions conflicted with its pre-learned patterns.

Furthermore, the researchers identified what they termed "pattern-locked responses." In 85% of the tested cases, the model’s output was identical to the statistical mean of the human behavioral data it was trained on, regardless of the linguistic nuances of the prompt. This suggests that the model’s "cognitive" abilities may amount to little more than a high-dimensional lookup table.

This phenomenon points to a broader issue in AI evaluation: the "Stochastic Parrot" problem. Critics argue that because LLMs are trained to predict the most likely next word in a sequence, they are inherently biased toward reproducing the most common patterns in their training data. When an AI is used to model human cognition, this bias can look like "human-like behavior," but it lacks the flexibility and intent-driven nature of actual human thought.

Reactions from the Scientific Community

The fallout from the Zhejiang University study has prompted a range of responses from leaders in the field.

Dr. Elena Rossi, a cognitive neuroscientist who was not involved in either study, noted that the controversy underscores the danger of anthropomorphizing AI. "We see a model that predicts human behavior and we assume it must be using human-like processes to get there," Rossi stated. "The Zhejiang study is a wake-up call. It reminds us that there is a profound difference between a model that fits data and a model that explains data."

Proponents of the Centaur model, however, argue that the critique may be too harsh. Some members of the original research team have suggested that the "Choose Option A" test is an unfair metric, as the model was specifically optimized for behavioral simulation, not for general-purpose instruction following. They argue that even if the model is "pattern matching," the fact that it can match such complex behavioral patterns across 160 tasks is still a significant technical achievement.

Nevertheless, the consensus among independent observers is shifting toward the need for more robust, "out-of-distribution" testing. This involves testing models on scenarios that are fundamentally different from anything they encountered during training to see if they can apply logic to new problems.
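One common way to approximate out-of-distribution testing is to hold out entire tasks, so that nothing from the evaluated task appears in training. The sketch below assumes a hypothetical record layout of (task name, prompt, human response) and made-up sample records; it illustrates the splitting idea only and is not the protocol used in either study.

```python
from collections import defaultdict
from typing import Iterator

# (task name, prompt, human response): a hypothetical schema for illustration.
Record = tuple[str, str, str]

def leave_one_task_out(
    records: list[Record],
) -> Iterator[tuple[str, list[Record], list[Record]]]:
    """Yield (held_out_task, train, test) so no test task appears in train."""
    by_task: dict[str, list[Record]] = defaultdict(list)
    for rec in records:
        by_task[rec[0]].append(rec)
    for task in sorted(by_task):
        train = [r for t, rs in by_task.items() if t != task for r in rs]
        yield task, train, by_task[task]

# Made-up records, only to show the shape of the splits.
RECORDS: list[Record] = [
    ("stroop", "prompt 1", "red"),
    ("stroop", "prompt 2", "blue"),
    ("recall", "prompt 3", "7 items"),
    ("gamble", "prompt 4", "safe option"),
]
SPLITS = list(leave_one_task_out(RECORDS))

if __name__ == "__main__":
    for task, train, test in SPLITS:
        print(f"held out {task}: {len(train)} train, {len(test)} test")
```

Holding out a task guards against memorized items, but a held-out task can still resemble the remaining ones, so a split like this is a lower bar than testing on scenarios that are fundamentally different from the training data.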

Broader Implications: The "Black Box" and the Future of AI in Science

The debate over Centaur has significant implications for how AI will be used in scientific research moving forward. If AI models are to be used as tools for discovery, whether to help us understand the brain, develop new medicines, or predict social trends, researchers must be able to trust that the models are doing more than reproducing patterns from past data.

- The Transparency Gap: One of the most pressing issues is the "black-box" nature of neural networks. Because the internal weights of a model like Centaur are so complex, it is nearly impossible for a human to trace the "why" behind a specific answer. This lack of interpretability makes it difficult to distinguish between genuine insight and sophisticated overfitting.

- The Hallucination Problem: When a model relies on patterns rather than understanding, it is prone to "hallucinations"—generating outputs that sound plausible but are factually incorrect or logically inconsistent. In the context of psychological modeling, this could lead to the creation of flawed theories based on AI-generated artifacts rather than real human behavior.

- Language as the Final Frontier: The Zhejiang study concludes that the primary limitation of Centaur is not its mathematical capacity, but its lack of true language comprehension. On this reading, understanding "intent," the reason why someone is asking a question, remains a capacity that AI has yet to replicate.

Conclusion: Toward More Rigorous Evaluation

The challenge to the Centaur model does not necessarily mean that AI is incapable of simulating the human mind. Rather, it suggests that the current methodology of relying on massive datasets and pattern recognition may have reached the limit of what it can demonstrate about "understanding."

As the field moves forward, the focus is likely to shift from building larger models to building more transparent and logically grounded ones. Future versions of cognitive models may need to incorporate "symbolic reasoning" layers that allow them to follow explicit rules and instructions, even when they conflict with learned patterns.

For now, the story of Centaur serves as a cautionary tale for the age of AI. It demonstrates that in the quest to map the human mind, the most impressive-looking results are often the ones that require the most skeptical scrutiny. True progress in artificial intelligence will be measured not just by how well a model can mimic the past, but by how intelligently it can navigate the unexpected. The debate between Zhejiang University and the creators of Centaur is more than just a scientific dispute; it is a fundamental inquiry into what it means to understand, to reason, and to be human in an era of increasingly sophisticated machines.
