The landscape of artificial intelligence applied to software development is undergoing a profound transformation, moving beyond mere code suggestions to fully autonomous execution. This paradigm shift, often termed "agentic coding," promises an era where AI assistants not only propose solutions but actively implement, test, debug, and deploy entire projects. At the forefront of this emerging wave is Goose, a free and open-source AI agent developed by Block Inc., designed to automate complex engineering tasks directly within a developer’s local environment. Goose represents a significant leap, empowering data scientists and software engineers to delegate multi-step workflows, thereby enhancing productivity and accelerating innovation.

The evolution of AI in software development has been a progressive journey, beginning with rudimentary autocompletion features in Integrated Development Environments (IDEs) decades ago. This was followed by more sophisticated context-aware code suggestions, exemplified by tools like GitHub Copilot, which leverage large language models (LLMs) to generate snippets, functions, or even entire classes based on natural language prompts and surrounding code. While these tools have undeniably boosted developer efficiency, their primary mode of operation remained advisory. Developers would receive suggestions and then manually integrate, test, and debug the generated code. Agentic AI, however, introduces a qualitative change. Instead of just suggesting code, an agentic system like Goose can comprehend a high-level goal, break it down into actionable steps, interact with the operating system, run terminal commands, manage dependencies, execute code, identify errors, and self-correct—all without continuous human intervention. This fundamental shift from "intelligent assistant" to "autonomous teammate" marks a new chapter in human-computer interaction for engineering tasks.

At its core, Goose is engineered as an open-source, reusable AI agent designed for local machine execution. This architecture distinguishes it significantly from many cloud-dependent AI coding assistants. Developed with a strong emphasis on transparency and community contribution by Block Inc., Goose’s entire codebase is publicly available on its GitHub repository, fostering an environment of collaborative development and peer review. Its ability to operate within the actual development environment, rather than being confined to a text editor, is crucial. This means Goose can interact directly with the file system, execute arbitrary terminal commands, and integrate with external Application Programming Interfaces (APIs). This deep environmental interaction allows Goose to manage entire workflows, from initial project setup and dependency installation to code generation, testing, and deployment, handling tasks that previously required a developer’s manual orchestration across multiple tools and interfaces.
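
The plan, execute, observe, and retry cycle that such an agent runs can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Goose's actual implementation: `run_agent`, `run_step`, and `propose_fix` are hypothetical names, and `propose_fix` is a hard-coded stand-in for the LLM call that would normally read the error output and suggest a corrected command.

```python
from __future__ import annotations

import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    command: list[str]
    returncode: int
    output: str


def run_step(command: list[str]) -> StepResult:
    """Run one command and capture stdout and stderr for the next decision."""
    proc = subprocess.run(command, capture_output=True, text=True)
    return StepResult(command, proc.returncode, proc.stdout + proc.stderr)


def propose_fix(failed: StepResult) -> list[str] | None:
    """Hypothetical stand-in for the model: map an observed error to a new command."""
    if "ZeroDivisionError" in failed.output:
        return [sys.executable, "-c", "print(10 / 2)"]
    return None  # no idea how to recover


def run_agent(plan: list[list[str]], max_retries: int = 2) -> list[StepResult]:
    """Execute each planned step, retrying with a proposed fix when a step fails."""
    history: list[StepResult] = []
    for command in plan:
        result = run_step(command)
        history.append(result)
        retries = 0
        while result.returncode != 0 and retries < max_retries:
            fix = propose_fix(result)
            if fix is None:
                break
            result = run_step(fix)
            history.append(result)
            retries += 1
        if result.returncode != 0:
            break  # give up on the rest of the plan and report the failure
    return history


# A two-step plan whose second step fails and is then corrected.
plan = [
    [sys.executable, "-c", "print('setup ok')"],
    [sys.executable, "-c", "print(10 / 0)"],
]
history = run_agent(plan)
for step in history:
    print(step.returncode, step.output.strip().splitlines()[-1])
```

The important design point is that every command's exit code and output are fed back into the next decision, which is what separates an agent from a one-shot code generator.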

Several key differentiators underscore Goose’s position in the burgeoning field of agentic AI. Foremost among these is local control. Because the agent runs directly on the user’s machine, file access, command execution, and project state stay in the local environment rather than in a vendor-hosted workspace. This addresses a significant concern for enterprises and individual developers wary of exposing intellectual property or client data. The guarantee has a limit, though: when Goose is configured with a hosted LLM provider, the prompts and whatever code context they contain are still sent to that provider’s API, so only a locally hosted model keeps everything on the machine. Local execution can also give more immediate feedback in iterative development cycles, since commands run against the real working tree with no upload or sync step, although response time is still bounded by the latency of the model itself.

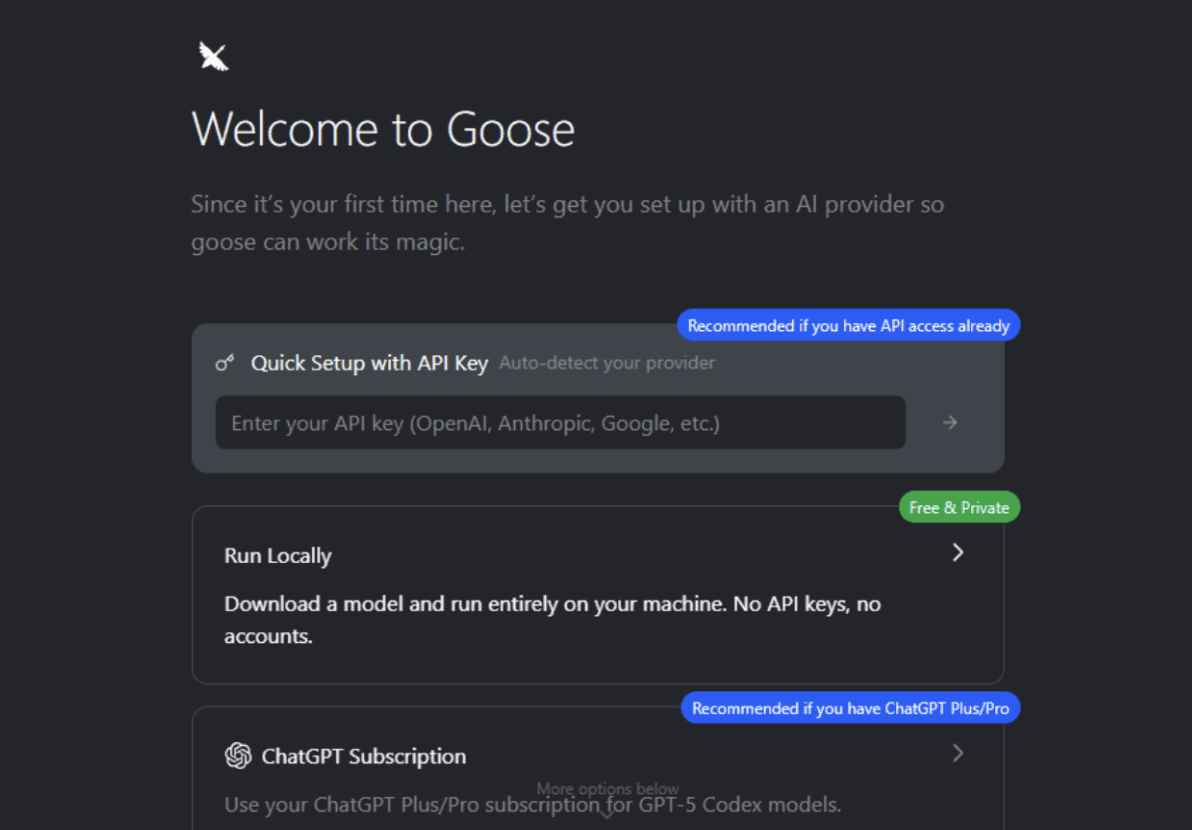

Another critical advantage is Goose’s flexibility with Large Language Models (LLMs). Designed to be LLM-agnostic, Goose allows users to configure their preferred LLM provider, such as OpenAI or Anthropic, by simply supplying an API key during setup. This flexibility future-proofs the agent and empowers users to choose models based on performance, cost-effectiveness, or specific capabilities. As the LLM landscape rapidly evolves, with new models emerging and existing ones improving, Goose users can seamlessly switch to leverage the latest advancements without being locked into a single provider. This also opens the door for potential integration with local, open-source LLMs, further enhancing privacy and control for users who prefer entirely on-premise AI solutions.
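
One way to picture an LLM-agnostic design is a small interface that every provider adapter satisfies, selected by name and configured through an API key. The sketch below is an assumption for illustration only: `ChatProvider`, `EchoProvider`, `PROVIDERS`, `load_provider`, and the `<NAME>_API_KEY` variable convention are hypothetical and do not describe Goose's internals. `EchoProvider` is an offline stub so the example runs without network access.

```python
from __future__ import annotations

import os
from dataclasses import dataclass
from typing import Callable, Mapping, Protocol


class ChatProvider(Protocol):
    """The only surface the agent depends on, regardless of vendor."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Offline stub; a real adapter would call the vendor's API here."""

    model: str
    api_key: str

    def complete(self, prompt: str) -> str:
        return f"[{self.model}] {prompt}"


# Hypothetical registry mapping a provider name to an adapter factory.
PROVIDERS: dict[str, Callable[[str], ChatProvider]] = {
    "openai": lambda key: EchoProvider("openai-model", key),
    "anthropic": lambda key: EchoProvider("anthropic-model", key),
}


def load_provider(name: str, env: Mapping[str, str] | None = None) -> ChatProvider:
    """Build the named provider, reading its key from <NAME>_API_KEY (assumed convention)."""
    env = os.environ if env is None else env
    if name not in PROVIDERS:
        raise ValueError(f"unknown provider: {name}")
    key_var = f"{name.upper()}_API_KEY"
    if key_var not in env:
        raise KeyError(f"missing environment variable: {key_var}")
    return PROVIDERS[name](env[key_var])


provider = load_provider("anthropic", {"ANTHROPIC_API_KEY": "test-key"})
print(provider.complete("Summarize the failing test."))
```

Because the rest of the agent only calls `complete`, switching vendors becomes a configuration change rather than a code change.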

The open-source empowerment driving Goose’s development is also a significant asset. Being open-source underpins its transparency, allowing developers to inspect its inner workings, understand its decision-making processes, and contribute to its enhancement. This community-driven model often leads to faster iteration, more robust features, and greater adaptability to diverse use cases than proprietary alternatives. For Block Inc., contributing Goose as an open-source project aligns with a broader industry trend of fostering collaborative innovation in AI and establishing open standards that can benefit the entire developer ecosystem.

For data scientists, the implications of agentic coding with Goose are particularly profound. Their daily work frequently involves a complex interplay of repetitive, multi-step tasks that necessitate interaction with various tools, libraries, and data sources. Goose can become an invaluable asset in streamlining these workflows:

- Automated ETL Pipeline Setup: A data scientist could instruct Goose to "create a Python script that connects to a SQL database, extracts specific tables, performs basic cleaning, and loads the processed data into a CSV file." Goose would then write the script, handle library installations (e.g., `pandas`, `sqlalchemy`), execute it, and report on success or failure, debugging as needed.
- Exploratory Data Analysis (EDA): Tasks like generating descriptive statistics, creating various plots (histograms, scatter plots, box plots), and identifying correlations can be fully delegated. A prompt like "Analyze the `customer_data.csv` file, generate summary statistics, identify missing values, and visualize the distribution of ‘age’ and ‘income’ columns, saving plots to a dedicated ‘eda_reports’ folder" could be executed autonomously.
- Model Training and Evaluation: From setting up a machine learning environment to writing boilerplate code for model training, cross-validation, and performance evaluation, Goose can handle the scaffolding. "Train a scikit-learn Logistic Regression model on `titanic_dataset.csv`, perform 5-fold cross-validation, and report accuracy and precision scores" becomes a single instruction.
- Environment Setup and Dependency Management: A common headache for data scientists is managing virtual environments and ensuring all necessary libraries are installed. Goose can take an instruction like "Set up a new Python virtual environment, install `numpy`, `scipy`, `matplotlib`, and `jupyterlab`, then launch JupyterLab," significantly reducing setup time.
- Reproducible Research: By automating the exact steps of data loading, processing, model training, and visualization, Goose can help ensure that experiments are easily reproducible, a cornerstone of sound scientific practice.
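
To make the ETL prompt above concrete, here is a sketch of the kind of script an agent might produce. It is an illustration, not output captured from Goose. To stay self-contained it assumes an in-memory SQLite database and the standard-library `csv` module in place of a production SQL server with `pandas` and `sqlalchemy`; the `customers` table and its columns are invented sample data.

```python
import csv
import io
import sqlite3


def extract(conn):
    """Pull the rows of interest from the database as dictionaries."""
    cursor = conn.execute("SELECT id, name, income FROM customers")
    columns = [description[0] for description in cursor.description]
    return [dict(zip(columns, row)) for row in cursor]


def clean(rows):
    """Drop rows with a missing income and strip stray whitespace from names."""
    return [
        {**row, "name": row["name"].strip()}
        for row in rows
        if row["income"] is not None
    ]


def load(rows, out):
    """Write the cleaned rows as CSV to any file-like object."""
    writer = csv.DictWriter(out, fieldnames=["id", "name", "income"])
    writer.writeheader()
    writer.writerows(rows)


# Invented sample data standing in for a real source database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT, income REAL)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, " Ada ", 5200.0), (2, "Grace", None), (3, "Alan ", 4100.0)],
)

buffer = io.StringIO()  # swap for open("out.csv", "w", newline="") to write a file
load(clean(extract(conn)), buffer)
print(buffer.getvalue())
```

Keeping extract, clean, and load as separate functions gives an agent (or a reviewer) small units to test and rerun when one stage fails.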

Beyond data science, Goose’s capabilities extend to broader software engineering and DevOps workflows. Software engineers could leverage it for automated bug fixing by feeding it error logs, enabling it to propose and test code changes. Quality assurance (QA) processes could benefit from automated test script generation and execution. DevOps teams might use Goose to generate Infrastructure as Code (IaC) templates, configure CI/CD pipelines, or even manage cloud resource provisioning based on high-level architectural descriptions. The ability to interact with the terminal and external APIs makes Goose a formidable orchestrator for many development and operational tasks that traditionally require manual scripting and vigilance.

A pivotal element of Goose’s architecture, underpinning its extensibility and future potential, is the Model Context Protocol (MCP). MCP is an open standard, introduced by Anthropic, that allows Goose to connect to any server that implements the protocol. Conceptually, MCP servers act as "skills" or "tools" that can be dynamically added to Goose’s repertoire. This design fosters a flexible and powerful ecosystem. Imagine Goose, through MCP, connecting to:

- Data Warehouses: An MCP server for Snowflake or BigQuery could allow Goose to autonomously query, transform, and manage data within these systems.

- Cloud Providers: An MCP server for AWS, Azure, or Google Cloud could enable Goose to provision resources, deploy applications, or manage services.

- CI/CD Systems: Integration with GitHub Actions or GitLab CI via an MCP server could allow Goose to trigger builds, deploy code, or monitor pipeline status.

- Code Analysis Tools: Connecting to SonarQube or other static analysis tools could empower Goose to not only write code but also ensure its quality and adherence to best practices.

This ecosystem transforms Goose from a powerful local agent into a central orchestrator capable of integrating and automating across an entire development and data workflow stack. The decision by Block Inc. to build Goose on MCP, an open standard introduced by Anthropic, rather than on a proprietary plugin format is critical. It promotes interoperability and reduces vendor lock-in: a server written once can be used by any MCP-compatible client, which encourages a diverse marketplace of specialized tools and services. This vision aligns with the broader movement towards modular, composable software systems, where specialized components collaborate to achieve complex objectives. If open standards like MCP continue to gain adoption, they are likely to be an important driver of innovation in the agentic AI space, allowing for greater customization and community-driven expansion of capabilities.
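
To show roughly what "a server that exposes tools" means on the wire, the sketch below handles two MCP-style JSON-RPC 2.0 requests: `tools/list` to advertise tools and `tools/call` to invoke one. It is heavily simplified and makes several assumptions: it skips the initialization handshake and the transport layer, and the `run_query` tool, its schema, and its canned reply are invented for illustration. A real server would normally be built with an official MCP SDK.

```python
import json


def run_query(sql: str) -> str:
    """Hypothetical tool handler; a real one would query a data warehouse."""
    return f"pretend result for: {sql}"


# Tool catalogue: name -> metadata advertised to the client, plus the handler.
TOOLS = {
    "run_query": {
        "description": "Run a read-only SQL query (illustrative stub).",
        "inputSchema": {
            "type": "object",
            "properties": {"sql": {"type": "string"}},
            "required": ["sql"],
        },
        "handler": run_query,
    }
}


def handle(message: str) -> str:
    """Dispatch one JSON-RPC request string and return the response string."""
    request = json.loads(message)
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        tool = TOOLS[params["name"]]
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # -32601 is the standard JSON-RPC "method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request.get("id"), "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "run_query", "arguments": {"sql": "SELECT 1"}},
}
print(handle(json.dumps(call)))
```

The agent first lists the available tools, passes their names and schemas to the model, and then issues `tools/call` whenever the model decides a tool is needed.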

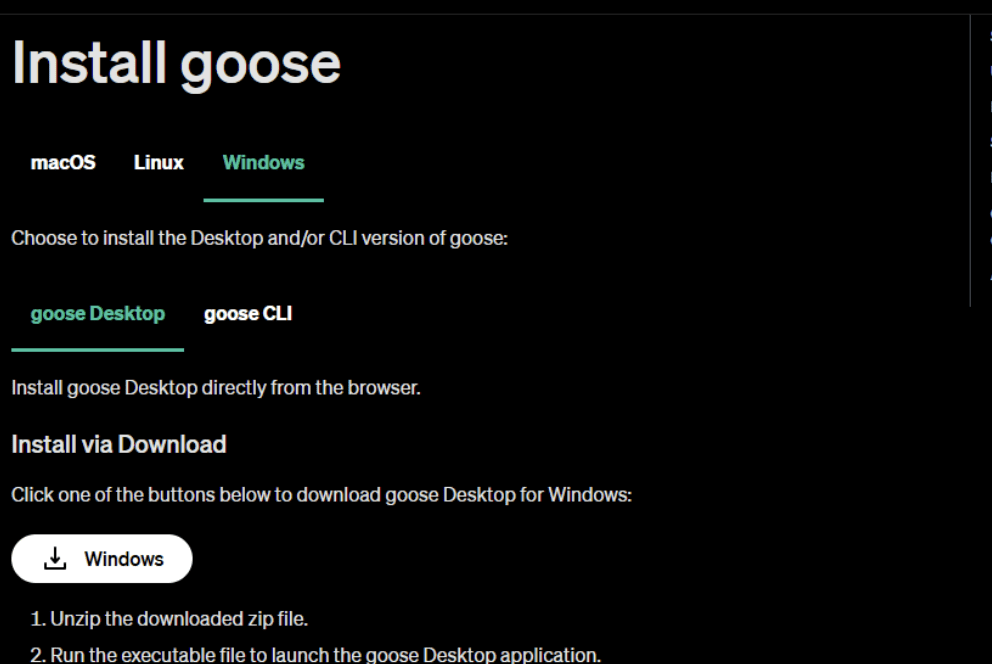

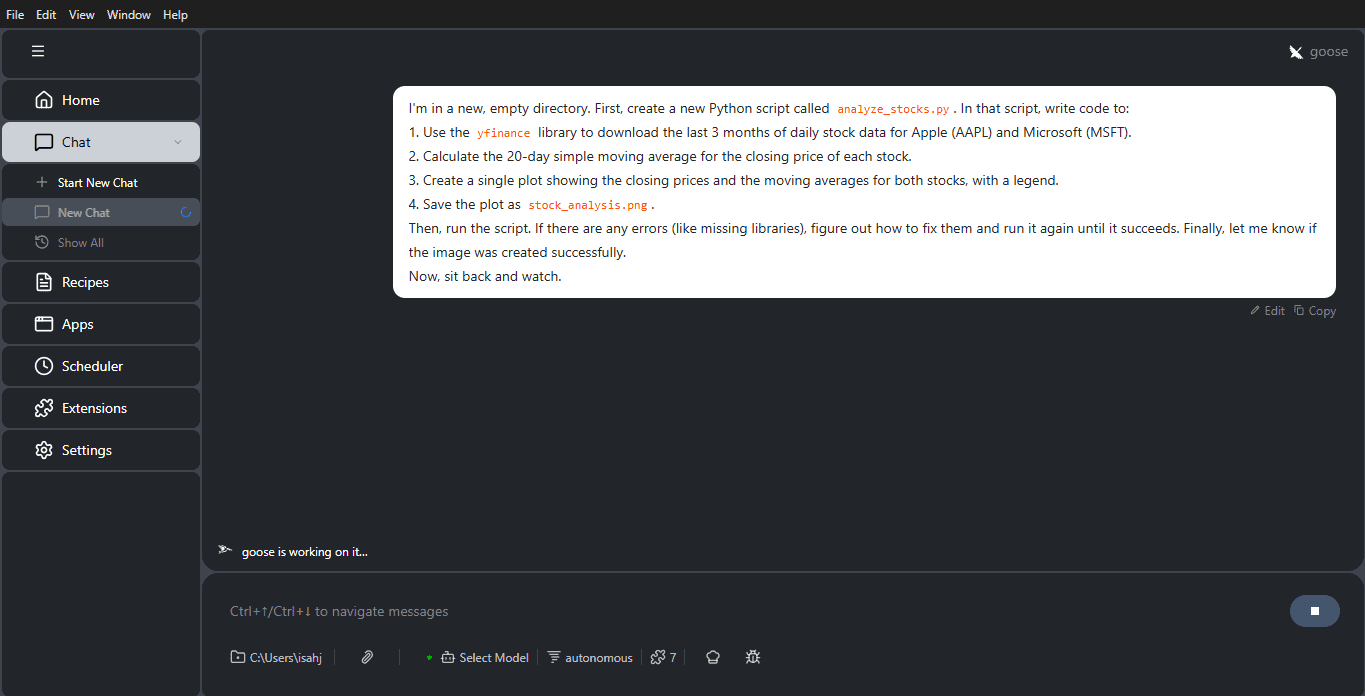

For developers eager to experience this new paradigm, getting started with Goose is designed to be straightforward. Installation typically involves downloading a desktop application installer for macOS, Linux, or Windows directly from the Goose website or its GitHub releases page. Once installed, the initial setup guides users through configuring their LLM provider, requiring an API key. This initial configuration is flexible, allowing users to switch providers or adjust settings later via a configuration file. The user experience centers around a chat interface where developers provide natural language prompts, similar to interacting with a human colleague. For instance, the task of generating a Python script to download stock data, calculate moving averages, plot the results, and save the image—while also handling missing library installations and debugging—can be accomplished with a single, detailed instruction. Goose then iteratively executes commands, installs necessary packages like yfinance and matplotlib, writes and runs the Python script, and verifies the output, reporting back on the successful creation of the plot. This multi-step, self-correcting process illustrates the core power of agentic coding in action.
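
The numerical core of that stock example is the moving average itself. The sketch below shows one way to compute a simple moving average using only the standard library. It deliberately omits the `yfinance` download and the `matplotlib` plot so that it runs without third-party packages, and the closing prices are made-up sample values. `simple_moving_average` is a hypothetical helper name, not something Goose is known to generate.

```python
from collections import deque


def simple_moving_average(prices, window):
    """Return the trailing mean over `window` prices; None until the window fills."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    recent = deque(maxlen=window)
    running_total = 0.0
    for price in prices:
        if len(recent) == window:
            running_total -= recent[0]  # value about to be evicted by append()
        recent.append(price)
        running_total += price
        averages.append(running_total / window if len(recent) == window else None)
    return averages


closes = [10.0, 11.0, 12.0, 13.0, 14.0]  # made-up closing prices
print(simple_moving_average(closes, 3))
```

Keeping a running total makes each update constant-time, which matters little for five prices but avoids re-summing the window over long price histories.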

Despite its immense potential, agentic AI, including Goose, presents certain challenges and considerations. The effectiveness of an agent heavily relies on the clarity and specificity of the prompt. "Prompt engineering" becomes a critical skill, requiring users to articulate tasks unambiguously and provide sufficient context. Ambiguous instructions can lead to unexpected or inefficient outcomes. While running locally offers privacy benefits, complex, resource-intensive tasks can demand significant computational resources from the local machine, potentially impacting performance. The security implications of granting an AI agent extensive access to a local development environment, including file system and terminal commands, necessitate a high degree of trust and careful configuration. Users must be mindful of the permissions granted and the potential risks, although Goose’s open-source nature helps mitigate these concerns through transparency. Furthermore, debugging agent failures can sometimes be complex; understanding why an agent chose a particular path or encountered an error might require delving into its internal logs or understanding its reasoning process. Finally, as with all AI technologies, ethical considerations around responsible AI development, potential biases inherited from LLMs, and the impact on human roles in software development remain ongoing discussions that the community must address.
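
One common mitigation for the terminal-access risk is to check each proposed command against an allowlist before it runs. The sketch below is a deliberately naive illustration of that idea and is not Goose's permission mechanism: `ALLOWED_PROGRAMS`, `SHELL_METACHARACTERS`, and `is_permitted` are hypothetical, and a production guard would need sandboxing, path restrictions, and human approval for risky actions.

```python
import shlex

# Hypothetical policy: only these programs may be launched by the agent.
ALLOWED_PROGRAMS = {"python", "pip", "git", "ls"}

# Characters that chain, redirect, or substitute commands in a shell.
SHELL_METACHARACTERS = set(";|&><`$")


def is_permitted(command_line: str) -> bool:
    """Allow a command only if its program is allowlisted and it has no shell operators."""
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return False  # unbalanced quotes: refuse rather than guess
    if not tokens or tokens[0] not in ALLOWED_PROGRAMS:
        return False
    return not any(SHELL_METACHARACTERS & set(token) for token in tokens)


for candidate in ["git status", "pip install numpy", "rm -rf /", "ls && rm -rf x"]:
    print(candidate, "->", "run" if is_permitted(candidate) else "ask the user")
```

Denying by default and escalating to the user keeps the agent useful for routine commands while making destructive ones an explicit human decision.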

In conclusion, agentic coding represents a significant evolutionary step in how developers interact with artificial intelligence, shifting from a model of passive assistance to active, autonomous delegation. Goose, as an accessible, free, and open-source agent, is poised to play a pivotal role in democratizing this powerful paradigm. Its ability to run locally, offer LLM-agnostic flexibility, and extend capabilities through the open MCP standard positions it as a robust solution for a wide array of engineering challenges. For data scientists, in particular, Goose promises to be an invaluable tool for automating tedious, multi-step tasks, accelerating prototyping, and fostering greater reproducibility in their work. The future of software development will undoubtedly involve increasingly sophisticated autonomous agents, and Goose stands as an early, yet powerful, testament to this transformative potential. As the technology matures and the ecosystem around MCP expands, developers across all disciplines are encouraged to explore Goose and experience firsthand the future of coding.
