MEDVi, a telehealth startup recently held up by The New York Times as a prime example of an AI-driven billion-dollar business, is now at the center of a fast-escalating controversy. It faces a formal warning letter from the U.S. Food and Drug Administration (FDA), accusations of widespread deceptive marketing built on AI-generated personas, and a series of class-action lawsuits. Founded by L.A. entrepreneur Matthew Gallagher, the company had boasted of unprecedented growth, projecting $1.8 billion in sales by 2026 with just two full-time employees, Gallagher and his brother Elliot, supported by contractors and agencies. A tangle of regulatory concerns, legal challenges, and journalistic investigations has since cast serious doubt on those claims and on the integrity of its operations.

The Rise and Rapid Reckoning of an AI "Unicorn"

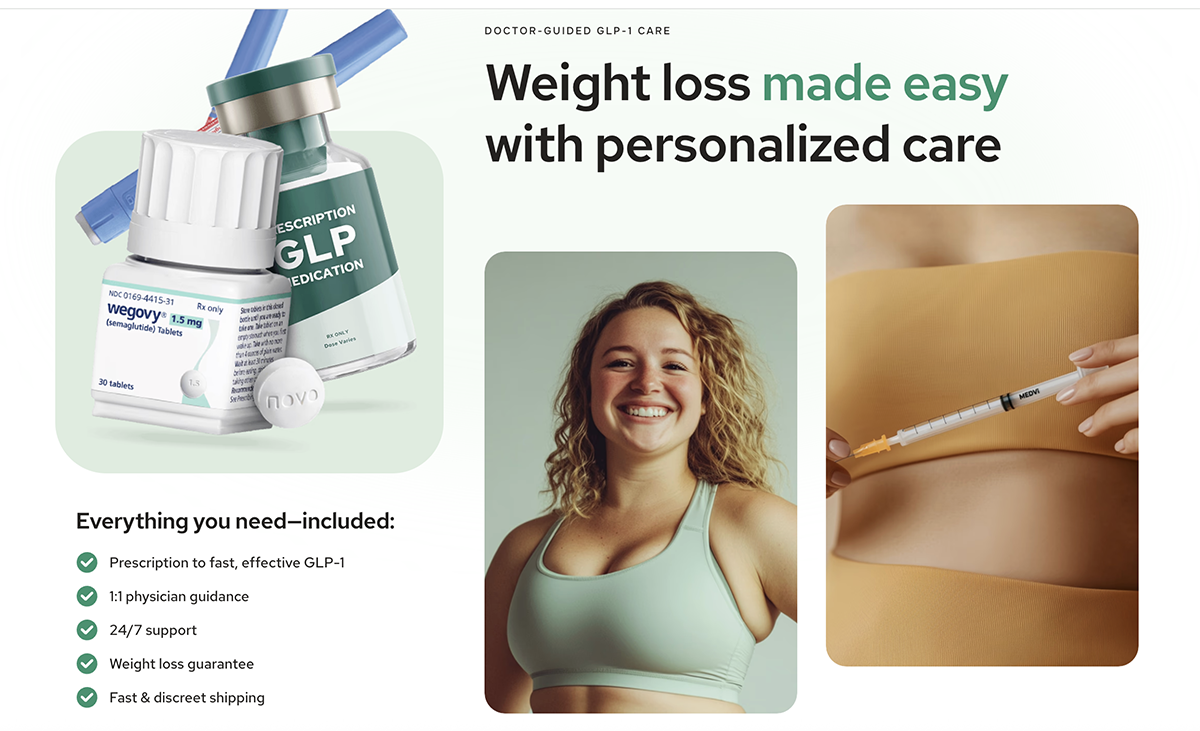

In 2024, OpenAI CEO Sam Altman famously predicted that a single entrepreneur using artificial intelligence could build a business worth more than one billion dollars. Matthew Gallagher appeared to be aiming to fulfill that prediction with MEDVi, a telehealth platform specializing in GLP-1 drugs and other pharmaceuticals. Gallagher had publicly declared MEDVi "the fastest growing company in history" on his LinkedIn profile, a claim he has since removed. On April 2, 2026, The New York Times published a profile presenting MEDVi as a validation of Altman’s vision, detailing Gallagher’s reliance on AI services such as ChatGPT, Claude, Grok, Midjourney, and Runway to build the company. The Times, which said it was given access to MEDVi’s financials to verify revenue and profits, described how the firm outsourced core operations such as physician consultations, pharmacy services, shipping, and compliance to platforms like CareValidate and OpenLoop Health. The result was a portrait of a hyper-efficient, AI-powered juggernaut disrupting the pharmaceutical and telehealth industries.

However, the celebratory tone was short-lived, quickly giving way to a cascade of revelations that challenged MEDVi’s legitimacy and ethical practices. The unfolding events reveal a company operating at the precarious edge of regulatory compliance and marketing ethics, raising serious questions about the role of AI in healthcare and consumer protection in the digital age.

FDA Delivers a Stern Warning

Just six weeks before The New York Times’ glowing profile, on February 20, 2026, the FDA issued a warning letter to MEDVi, LLC, citing misbranding of the compounded drugs at the core of its revenue. The FDA specifically flagged MEDVi’s website for falsely implying that the company itself compounded the semaglutide and tirzepatide it sold. The agency also found that claims such as "Same active ingredient as Wegovy® and Ozempic®" and "Same active ingredient as Mounjaro® and Zepbound®" falsely suggested that these compounded products had been approved or evaluated by the FDA. It warned that failure to correct these violations could lead to serious enforcement action, including product seizure or injunction, and noted that the violations cited were not an exhaustive list of potential issues.

In an April 8 statement, MEDVi tried to deflect the FDA’s warning, asserting that the cited website, medvi.io, belonged to an affiliate marketing agency and that MEDVi itself had "never received a letter from the FDA." That claim contradicts the FDA’s official letter, which was addressed to "MEDVi, LLC dba MEDVi" at its registered Delaware address, was sent to MEDVi’s corporate email address, and specifically referenced MEDVi-branded claims found on medvi.io. The gap between the company’s public denial and the FDA’s documented communication raises serious questions about the transparency and accountability of MEDVi’s leadership.

Pervasive Deceptive Marketing and AI-Generated Personas

Both before and after the FDA’s warning, several journalistic investigations exposed a pattern of deceptive marketing by MEDVi, much of it extending beyond the issues regulators had identified.

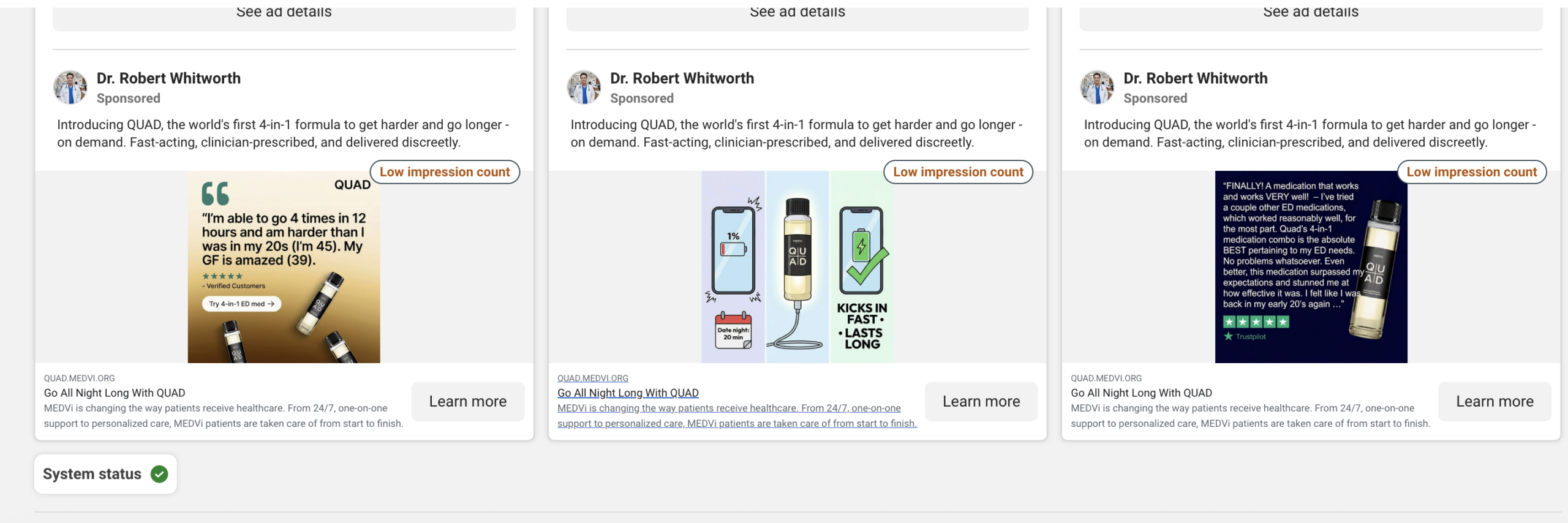

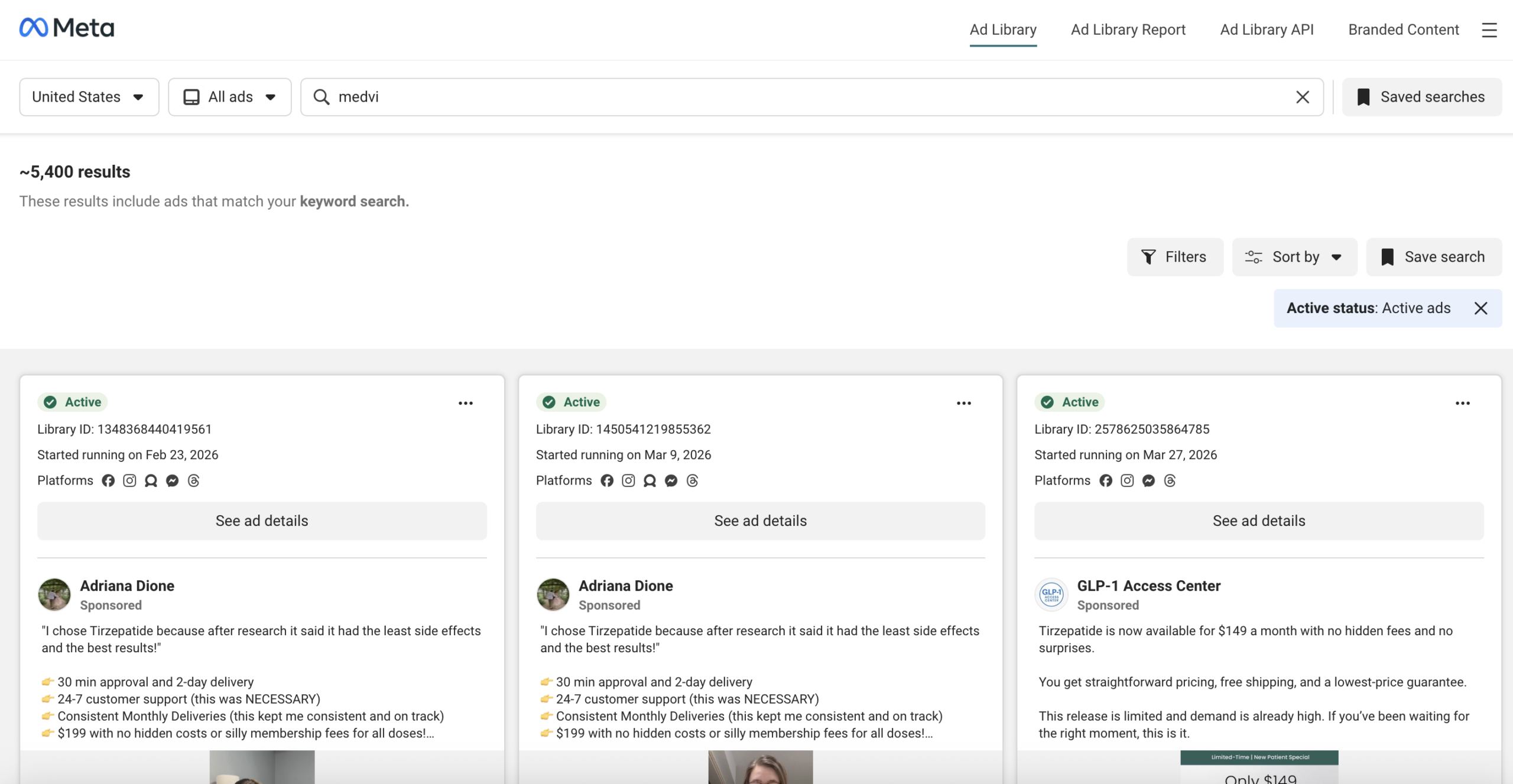

On April 3, 2026, a review by Drug Discovery & Development found extensive use of apparently AI-generated personas, some presented with medical titles, across MEDVi’s website and Facebook advertising. A search of Meta’s Ad Library turned up more than 5,000 active ads, many running under what appeared to be fabricated physician identities. For instance, a Facebook page for "Dr. Robert Whitworth," promoting MEDVi’s QUAD erectile dysfunction product, was inexplicably categorized as an "Entertainment website" and listed a non-existent address in Cameron, Montana. Other ads featured names like "Professor Albust Dongledore" and "Dr. Richard Hörzgock," with AI-generated video testimonials and identical scripts reused across fabricated personas. In several ads, a doctor’s headshot was displayed while an unrelated person delivered a patient testimonial. A follow-up review on April 6 found that many of these doctor personas had been removed.

On the same day, a Business Insider investigation examined the history of apparently AI-generated doctor profiles in Meta ads for MEDVi, many of them linked to affiliate marketers. Matthew Gallagher conceded to Business Insider that "maybe 30%" of MEDVi’s advertising ran through affiliates. Business Insider identified profiles such as "Dr. Matthew Anderson MD" (connected to an Angolan phone number and previously associated with a gospel musician) and "Dr. Spencer Langford MD" (linked to a clothing store in the Republic of Congo), along with others showing telltale signs of AI generation, such as garbled text and Gemini watermarks. After Business Insider’s inquiries, the number of active MEDVi-related ads fell from more than 5,000 to about 2,800. Gallagher told Business Insider: "In line with the FTC, we have a clear policy of providing disclosure on any actor or AI portrayal of a doctor or not using them at all. If we find an affiliate doing this we work to take these ads down." Critics, including Nancy Glick of the National Consumers League, have nonetheless raised concerns about the marketing of unregulated GLP-1 products.

Earlier, in May 2025, Futurism had already documented similar practices, including AI-generated patient photos, deepfaked before-and-after weight-loss images (traced to existing online photos with AI-swapped faces), and AI-generated Ozempic box imagery containing obvious errors. MEDVi’s initial website also falsely implied press coverage by displaying logos from The New York Times, Bloomberg, and Forbes when no such coverage existed. Futurism also found that one listed physician, osteopathic practitioner Tzvi Doron, denied any association with MEDVi and asked to be removed. Gallagher later acknowledged to The New York Times that MEDVi’s initial site had indeed used AI-generated photos and deepfaked before-and-after images.

Adding to the concerns, MEDVi’s own website features a small disclaimer at the bottom: "Individuals appearing in advertisements may be actors or AI portraying doctors and are not licensed medical professionals." This disclosure, while present, appears to be a reactive measure rather than a proactive commitment to transparent advertising, especially given the scale and nature of the deceptive ads identified.

The Compounding Conundrum and Regulatory Gaps

MEDVi’s business model relies heavily on the nuanced and often contentious regulatory landscape surrounding compounded medications, particularly GLP-1s. Federal law generally prohibits compounders from creating copies of FDA-approved drugs. However, Sections 503A and 503B of the Federal Food, Drug, and Cosmetic Act permit compounding under specific circumstances, notably during drug shortages.

A crucial development came when the FDA determined that the tirzepatide injection shortage was resolved on December 19, 2024, and the semaglutide injection shortage on February 21, 2025. These determinations significantly narrowed the legal window for compounding these drugs. In response, many sellers, including those in MEDVi’s orbit, pivoted to "personalized" formulations with added ingredients such as vitamin B-12, arguing that these variations are not "essentially a copy" of the approved drugs.

However, on April 1, 2026, the FDA clarified its position, stating that a compounded product combining semaglutide with another active ingredient, such as vitamin B-12, could still be considered "essentially a copy" unless a prescriber documented a patient-specific "significant difference" justifying the compounded version. Eli Lilly, the manufacturer of tirzepatide (Mounjaro/Zepbound), had already added to the pressure with an open letter in March 2026 warning against compounding tirzepatide with vitamin B-12 and citing potential patient-safety risks and regulatory non-compliance. Together, these developments point to a tightening regulatory environment that directly challenges the justification for many of MEDVi’s offerings.

Mounting Legal Challenges and Network Vulnerabilities

Beyond regulatory warnings and ethical concerns, MEDVi and its partners are facing a growing wave of legal action, highlighting vulnerabilities in its operational model and potential harm to consumers.

MEDVi reported an astonishing 250,000 customers by the end of 2025, a figure Gallagher described as "insane" to The New York Times, and its current homepage boasts "500,000+ MEDVi patients." The site promises "unlimited 24/7 support" and "doctor-led plans & coaching," with care purportedly provided by OpenLoop Health clinicians who "retain the decision to prescribe compounded GLP-1s to patients."

However, OpenLoop Health, a Des Moines, Iowa-based telehealth infrastructure company central to MEDVi’s operations, recently disclosed a significant data breach. On January 7, 2026, a threat actor accessed OpenLoop’s systems and claimed to have exfiltrated records on approximately 1.6 million patients, including names, contact information, dates of birth, and medical information. In March 2026, OpenLoop notified the Texas Attorney General, confirming at least 68,160 affected individuals in that state alone. The breach has prompted multiple class-action lawsuits against OpenLoop and exposed a critical security weakness in the back-end infrastructure that supports telehealth providers like MEDVi.

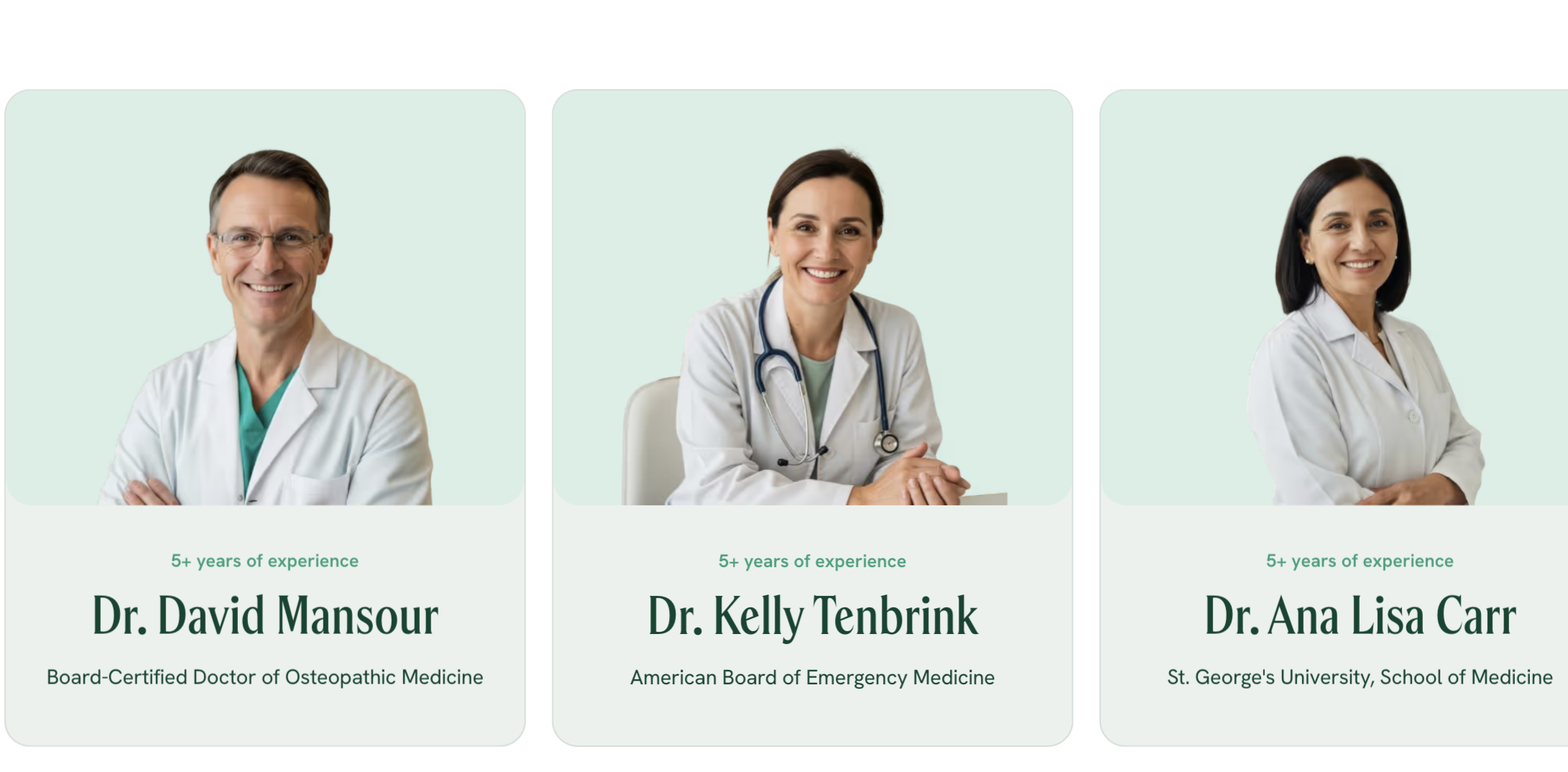

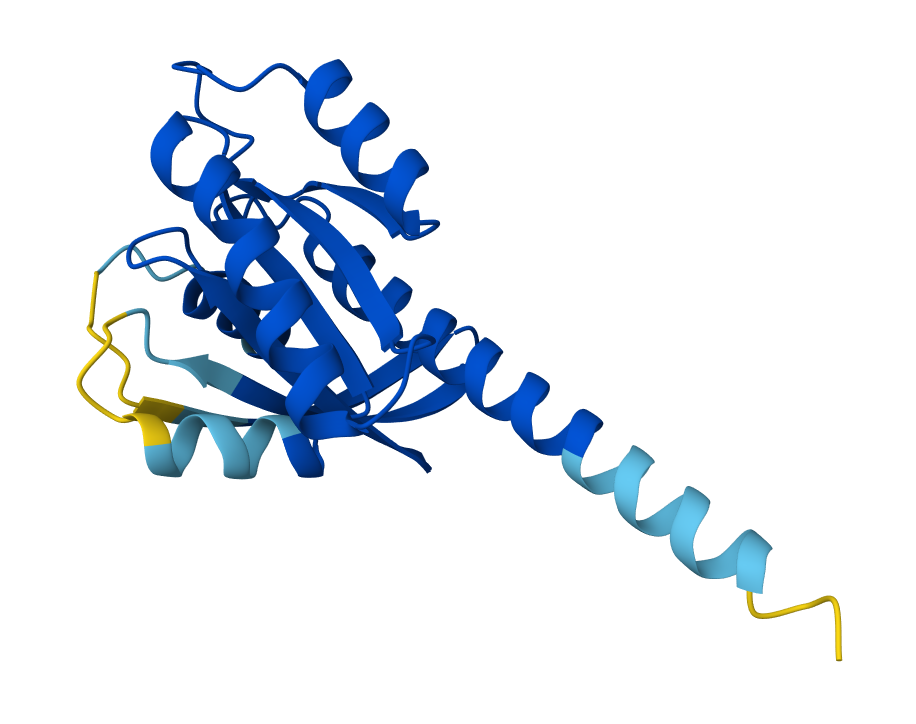

MEDVi is also indirectly implicated in a notable class-action complaint filed in November 2025 in the U.S. District Court for the District of Delaware. The lawsuit targets OpenLoop Health and compounding pharmacy Triad Rx, alleging that they manufactured and sold "modern-day snake oil": compounded oral tirzepatide tablets with "no demonstrated mechanism of absorption or efficacy." MEDVi is not named as a defendant, but the complaint states that the plaintiff bought these pills through MEDVi, describing it as one of dozens of consumer-facing telehealth storefronts operating within a network powered by OpenLoop.

The complaint lays out the scientific basis for its claims: tirzepatide, a large peptide molecule, is typically broken down by digestive enzymes before it can enter the bloodstream. The only FDA-approved oral GLP-1, Rybelsus (oral semaglutide), requires a specialized absorption enhancer (SNAC) to achieve even minimal bioavailability and must be taken under strict conditions: on an empty stomach, with no more than 4 ounces of plain water, at least 30 minutes before the first food or drink of the day. Bringing claims under the Racketeer Influenced and Corrupt Organizations (RICO) Act, state consumer protection statutes, and common-law fraud, the suit alleges that the "Meet the incredible doctors we’ve partnered with" presentation, including specific physicians such as Dr. David Mansour (who appeared in a January 2026 MEDVi press release but is no longer on the site), was part of a broader deceptive marketing scheme.

MEDVi has also faced direct legal action. The company was served in Siuksta v. MEDVi, LLC, a federal Telephone Consumer Protection Act lawsuit filed in May 2025, but failed to appear; after the court noted the default, the case was voluntarily dismissed. More recently, James v. Medvi LLC, a class action filed on March 20, 2026, in federal court in California, accuses MEDVi of profiting from affiliate spam. The suit cites emails with misleading subject lines such as "This might be the easiest way to start Ozempic," sent from nonsensical email addresses that routed recipients to MEDVi landing pages. Brought under California’s anti-spam law, it seeks statutory damages of $1,000 per offending email on behalf of a proposed class of at least 100,000 people.

The scrutiny also extended to the credentials of physicians previously listed on MEDVi’s site. Drug Discovery & Development investigated Dr. Ana Lisa Carr and Dr. Kelly Tenbrink, both linked to Ringside Health, a concierge practice in Florida. Although Dr. Tenbrink is certified in emergency medicine by the American Board of Emergency Medicine (a previous version of this story incorrectly reported otherwise), the Florida Department of Health practitioner profiles for both physicians listed no specialty board certifications recognized by the Florida board. After Drug Discovery & Development requested comment from both physicians, and after this story was first published, MEDVi removed Dr. Carr and Dr. Tenbrink from its website entirely on April 10.

Leadership’s Public Stance and Social Media Backlash

In the wake of The New York Times profile and the subsequent critical feedback, Matthew Gallagher has publicly defended MEDVi, primarily through social media. His approach has been combative, dismissing critics and equating his company’s use of AI-generated physician personas with standard commercial practices.

In a deleted X post, Gallagher mocked detractors as having "low testosterone," labeling them the "Karens of the internet." In another exchange, he pushed back against Shutterstock founder Jon Oringer, who had sarcastically proposed a "fake-doctor affiliate-as-a-service platform." Gallagher responded by equating MEDVi’s AI-generated imagery with generic medical stock art, writing, "The guy who SELLS images of doctors to marketers pretends not to understand marketing. The irony is beautiful," alongside a screenshot of a stock doctor image. This stance is difficult to reconcile with MEDVi’s official April 8 statement claiming the company had only "recently become aware" of ads featuring "potentially AI-generated medical practitioners." By April 3, Gallagher was already publicly mocking critics and comparing fake-doctor imagery to ordinary stock-art marketing, which suggests far greater awareness than the company’s statement implied.

On April 3, 2026, Gallagher posted a lengthy defense of the white-label telemedicine model on X, asserting that it is a "net positive for humanity" because it lowers prices and increases access to healthcare. He dismissed critics as "low energy people" who mischaracterize offering "life-changing weight loss medication, prescribed by a doctor," as a "pill mill."

Reactions on LinkedIn to MEDVi’s narrative were mixed. While some users congratulated Gallagher on his AI-first execution and stated philanthropic goals, others voiced significant skepticism. An SEO professional questioned whether MEDVi’s reported website traffic could plausibly support its claimed user base and revenue. A digital health founder commented, "Something doesn’t add up," and an accountant recommended an independent audit. One user linked to a viral YouTube video titled "billion dollar ai company was built on lies," which drew more than 1 million views within three days. Several people identifying as customers also posted complaints about their experiences with MEDVi.

Broader Implications for AI in Healthcare and Telehealth

The MEDVi saga is a stark illustration of the ethical and regulatory challenges of bringing rapidly advancing AI technologies into sensitive sectors like healthcare. The promised gains in efficiency and accessibility are real, but MEDVi’s case shows the potential for exploitation, misinformation, and harm when these technologies are deployed without robust oversight and ethical safeguards.

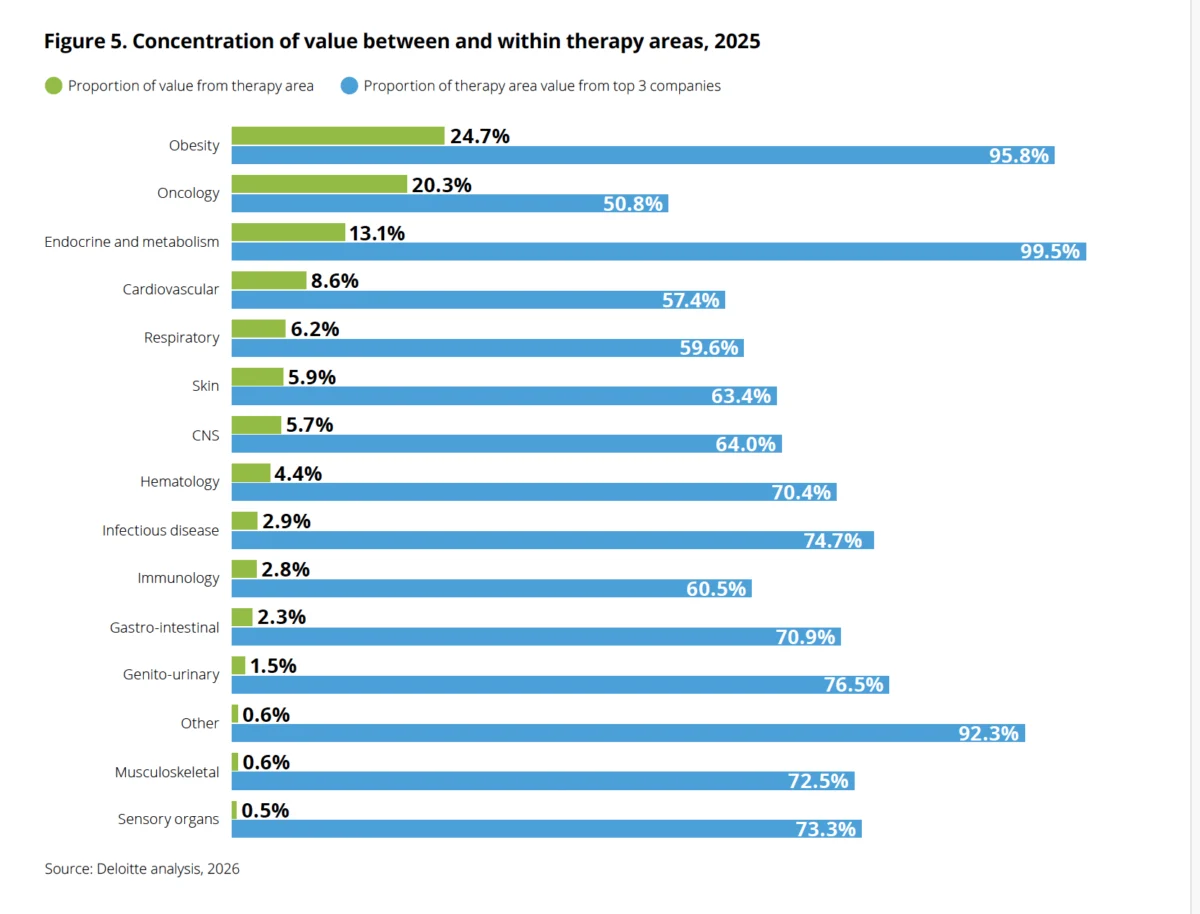

The widespread use of AI-generated personas and deepfakes in advertising, particularly for medical products, poses significant risks to public trust and consumer safety. Federal and state regulators have already identified compounded GLP-1 advertising as a category of concern. In December 2025, a bipartisan coalition of 35 attorneys general wrote to Meta, alleging that the company was permitting deceptive, AI-generated before-and-after weight-loss comparison photos. The coalition stated, "The use of artificial intelligence to fabricate images, spokespeople, and medical claims crosses a line and makes these ads particularly dangerous." A Reuters investigation in late 2025 reported that Meta was "earning a fortune on a deluge of fraudulent ads," citing internal projections that roughly one-tenth of the company’s 2024 revenue would come from "scams and banned goods," underscoring the platform’s role in enabling such practices.

The incident highlights the urgent need for clearer guidelines and stricter enforcement mechanisms to govern AI’s application in medical marketing and telehealth. It also raises questions about the responsibility of platform providers (like Meta) in vetting advertisers and the accountability of telehealth infrastructure companies (like OpenLoop Health) in ensuring the legitimacy and security of the services they enable. The balance between fostering innovation and protecting public health and consumer rights remains a critical and evolving challenge.

As investigations and legal proceedings continue, the MEDVi story is likely to become a cautionary tale for the burgeoning AI-in-healthcare industry, emphasizing that technological prowess must be coupled with unwavering ethical commitment and strict regulatory compliance. The future of AI-driven telehealth will depend not just on its ability to scale, but on its capacity to build and maintain trust through transparency, integrity, and patient safety above all else.
