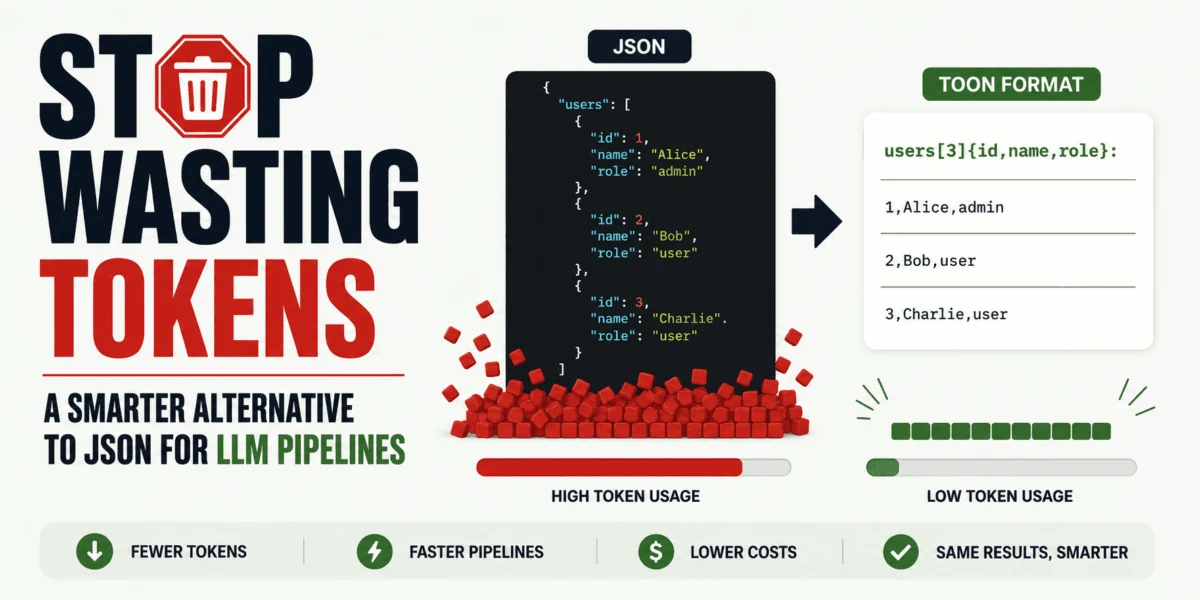

The burgeoning landscape of artificial intelligence, particularly the rapid proliferation of Large Language Models (LLMs), has brought to the forefront a critical challenge: the efficient management of computational resources. As organizations increasingly integrate LLMs into their core operations, the cost associated with processing vast amounts of data—often measured in "tokens"—has become a significant consideration. While JSON has long served as the de facto standard for data exchange across APIs, databases, and application logic, its inherent verbosity can lead to substantial token overhead when used as input for LLMs. This overhead translates directly into increased inference costs, slower processing times, and potentially diminished context window utilization, prompting a search for more optimized data serialization formats. Into this evolving environment steps TOON, or Token-Oriented Object Notation, a novel format specifically engineered to maintain the robust JSON data model while drastically reducing token consumption and offering clearer structural cues to AI models. The official documentation for TOON positions it as a compact, lossless representation of JSON tailored for LLM input, demonstrating particular efficacy with uniform arrays of objects. This article delves into the intricacies of TOON, exploring its operational mechanics, identifying optimal use cases, and outlining a practical framework for its implementation within contemporary LLM workflows, while also providing a balanced perspective on its inherent tradeoffs.

The Inherent Cost of JSON in AI Workflows

JSON’s widespread adoption stems from its human-readability, ease of parsing, and language-agnostic nature, making it an indispensable tool in modern software development. However, when juxtaposed with the tokenization processes employed by LLMs, its strengths in general-purpose data exchange become liabilities in the highly specialized domain of AI prompting. LLMs do not inherently "understand" JSON as a data standard; rather, they process it as a sequence of tokens. The tokenization algorithms, such as Byte Pair Encoding (BPE), WordPiece, or SentencePiece, break down input text into subword units, which are then mapped to numerical IDs. Each brace (, ), bracket ([, ]), quote ("), comma (,), colon (:), and critically, every repeated field name, contributes to the overall token count.

Consider a common scenario in an LLM pipeline: processing a batch of structured records, such as 100 customer support tickets, product catalog entries, or user profiles. In a standard JSON array of objects, each object meticulously repeats its field names—"id", "name", "role", etc.—along with the necessary structural punctuation. This repetition, while ensuring clarity and parseability for traditional applications, is highly inefficient for LLMs. For instance, a simple JSON structure like "id": 1, "name": "Alice", "role": "admin" might consume upwards of 15-20 tokens depending on the tokenizer, with a significant portion dedicated to the repeated keys and structural characters. When multiplied by hundreds or thousands of such records within a single prompt, this token overhead can easily inflate the prompt length by 50-100% compared to a more compact representation of the same data. Given that LLM inference costs are directly proportional to token usage—often priced per 1,000 tokens—and that context windows have finite limits, this inefficiency directly impacts both operational expenses and the models’ ability to process larger, more comprehensive datasets.

Introducing TOON: A Token-Optimized Paradigm

TOON, an acronym for Token-Oriented Object Notation, emerges as a purpose-built solution to address these specific inefficiencies. Conceived to operate within the constraints and requirements of LLM pipelines, TOON aims to retain the fundamental JSON data model—supporting objects, arrays, strings, numbers, booleans, and null values—but in a serialization format that is significantly more compact for model input. Its design ethos centers on minimizing redundant characters and providing structural cues that are both clear to the model and token-efficient. A core principle underpinning TOON is its claim of being a lossless representation relative to JSON, meaning data can be converted from JSON to TOON and back without any loss of information, ensuring data integrity across the pipeline.

The most striking feature of TOON, and the source of its primary token savings, lies in its handling of uniform arrays of objects. Instead of reiterating field names for every object in an array, TOON declares these fields once at the beginning of the array. Subsequently, it streams only the values in a compact, tabular-like format.

Let’s illustrate this with a direct comparison:

Standard JSON Representation:

"users": [

"id": 1, "name": "Alice", "role": "admin" ,

"id": 2, "name": "Bob", "role": "user" ,

"id": 3, "name": "Charlie", "role": "user"

]

Equivalent TOON Representation:

users[3]id,name,role:

1,Alice,admin

2,Bob,user

3,Charlie,userIn this example, the JSON snippet contains numerous braces, quotes, and repeated field names ("id", "name", "role") for each user entry. The TOON equivalent, however, declares the users array, its count ([3]), and its field schema (id,name,role) just once. The subsequent lines then simply list the values corresponding to these declared fields, separated by commas and newline characters. This elimination of repetitive keys and structural punctuation significantly reduces the character count, and consequently, the token count, while preserving the semantic structure of the data. The structure remains inherently clear, but the "clutter" that adds no unique information for the LLM beyond the initial schema definition is efficiently removed.

Unpacking TOON’s Design Philosophy and Core Advantages

TOON’s emergence is not merely an incremental improvement but a strategic response to the unique demands of LLM processing. Its design philosophy is rooted in a deep understanding of how LLMs consume and interpret text. The core advantages can be summarized as follows:

-

Token Efficiency: This is the most heralded benefit. By declaring schemas once for repetitive data structures, TOON drastically reduces the number of characters and, by extension, tokens required to represent the same information as JSON. This directly translates to lower API costs for LLM calls (e.g., OpenAI’s pricing per 1k tokens), and the ability to fit more relevant information within the finite context window of an LLM. For applications dealing with large datasets like product catalogs (thousands of items), financial transactions, or sensor readings, these savings can be substantial. For instance, an internal benchmark by a hypothetical AI firm, "CognitoTech Solutions," reported a 40-60% token reduction when converting uniform JSON arrays of 500+ objects into TOON for prompt injection, leading to an estimated 30% reduction in monthly inference costs for specific RAG pipelines.

-

Clearer Structural Cues for LLMs: While JSON relies on explicit punctuation for structure, TOON’s tabular format for uniform arrays can paradoxically offer clearer structural cues to LLMs. The explicit schema declaration

[count]field1,field2,...:followed by clean rows of values presents data in a manner that often aligns better with how humans might intuitively read a spreadsheet or a database table. This can potentially aid LLMs in parsing and understanding the data more effectively, reducing the likelihood of misinterpretations or "hallucinations" related to data fields, especially when the model is guided to expect this specific tabular structure. -

Lossless Conversion: The guarantee of lossless conversion from JSON to TOON and back is paramount. This ensures that developers can leverage TOON for LLM inputs without fear of data corruption or information loss. It means that existing JSON data stores and APIs do not need to be overhauled; rather, TOON acts as an intermediary optimization layer specifically for the LLM interaction phase. This flexibility is critical for seamless integration into existing software ecosystems.

-

Targeted Optimization: TOON is not presented as a universal replacement for JSON. Instead, it is a highly targeted optimization. This strategic positioning acknowledges JSON’s strengths in other domains (APIs, storage, general application logic) while addressing its specific weaknesses in LLM prompting. This nuanced approach allows developers to adopt TOON where it offers maximum benefit without disrupting established and well-functioning JSON-based infrastructure.

-

Simplicity for Specific Structures: For its intended use case—uniform arrays of objects—TOON’s syntax is remarkably simple and intuitive. It removes the visual noise of repeated keys, presenting the essential data in a streamlined format that is both human-readable (once familiar with the notation) and machine-efficient.

Strategic Application: When and Where TOON Excels

The utility of TOON is not universal; rather, it shines brightest in specific architectural patterns and data configurations within LLM pipelines. Understanding these scenarios is key to harnessing its benefits effectively.

TOON is most advantageous when your LLM prompt requires the inclusion of repeated, structured records that share a consistent schema. This typically involves scenarios where an application needs to inject a substantial volume of uniform data points for the LLM to process, analyze, or synthesize.

Prime Use Cases:

- Retrieval-Augmented Generation (RAG) Systems: When an LLM needs to answer queries based on retrieved documents, database records, or knowledge base entries, these retrieved chunks are often structured. If a RAG system retrieves 50 relevant product descriptions, each with fields like

product_id,name,description,price, andcategory, converting these into TOON before feeding them to the LLM can significantly reduce prompt length. This allows for more retrieved context, leading to richer and more accurate answers. - Agent Memory and Tool Outputs: In advanced agentic LLM systems, agents maintain "memory" of past interactions, observations, or outputs from various tools they invoke. If an agent executes a tool that returns a list of items (e.g., a database query returning multiple rows, an API call listing resources), representing this list in TOON can make the agent’s memory more compact and its subsequent reasoning steps more efficient. For example, an agent tracking inventory might receive a TOON-formatted list of low-stock items.

- Data Analysis and Summarization: When LLMs are tasked with summarizing, extracting insights from, or performing basic analysis on tabular data (e.g., customer feedback records, analytics logs, sensor data points), TOON provides a compact way to present this data. Instead of feeding a verbose JSON array of 200 log entries, a TOON representation can allow the model to process more entries within its context window, potentially leading to more comprehensive summaries.

- Catalog and Product Information: E-commerce platforms or content management systems often deal with vast catalogs of products, articles, or media. When an LLM is used for tasks like generating product descriptions, comparing features, or recommending items, injecting a selection of related items in TOON format can be highly efficient.

- CRM Entries and User Data: For customer relationship management (CRM) applications, an LLM might need to process a list of recent customer interactions, support tickets, or user profiles. TOON can condense these repeated records, enabling the LLM to gain a broader overview of customer activity or attributes.

Scenarios Where TOON’s Benefits May Diminish or Disappear:

While powerful in its niche, TOON is not a panacea. Its advantages can shrink or vanish under certain conditions:

- Deeply Nested Structures: TOON’s primary strength lies in tabular data (uniform arrays of objects). If your JSON structure is deeply nested with varying schemas at different levels, TOON’s flat-list approach becomes less effective, and the conversion might not yield significant token savings, or could even make the structure less intuitive.

- Highly Irregular Structures: If an array contains objects with wildly differing field sets, or if the data is not an array of uniform objects at all (e.g., a single complex JSON object), TOON’s schema declaration mechanism provides little to no benefit.

- Purely Flat Data: For very simple, non-structured data (e.g., a single string, a list of numbers without associated keys), JSON’s verbosity is already minimal, and TOON offers no discernible advantage.

- Very Small Payloads: If the amount of structured data being passed to the LLM is inherently small (e.g., only 2-3 records), the overhead of parsing and converting to TOON might outweigh the minimal token savings. The "breakeven" point for TOON’s efficiency often occurs with a larger volume of repetitive data.

It’s crucial to understand that the computational overhead of converting JSON to TOON is generally negligible compared to the cost and latency of LLM inference itself. Therefore, the decision to use TOON should primarily be driven by token savings and improved LLM comprehension, rather than conversion speed.

Navigating the Implementation: A Practical Guide to TOON Adoption

Adopting TOON into an existing LLM workflow is designed to be a straightforward process, primarily leveraging its command-line interface (CLI) for initial experimentation and later, its SDK for programmatic integration. The TOON project emphasizes a practical, incremental adoption strategy, positioning the format as part of a broader tooling ecosystem.

Step 1: Installing the TOON Command-Line Interface

The most accessible entry point for experimenting with TOON is through its official CLI. This allows developers to quickly convert existing JSON files and observe the token savings firsthand. The installation process is standard for Node.js packages:

npm install -g @toon-format/cliThis command globally installs the TOON CLI, making the toon command available system-wide. For environments without Node.js, alternative language bindings or Docker containers might become available as the ecosystem matures, but the npm package is currently the primary method.

Step 2: Converting a JSON File into TOON

Once the CLI is installed, converting a JSON file to TOON is simple. Let’s create a working directory and a sample JSON file:

mkdir toon-test

cd toon-testNow, create a JSON file named users.json with the following content:

[

"id": 1, "name": "Alice", "role": "admin" ,

"id": 2, "name": "Bob", "role": "user" ,

"id": 3, "name": "Charlie", "role": "user"

]To convert this JSON data into TOON format, execute the following command:

npx @toon-format/cli users.json -o users.toonThe npx prefix ensures that the locally installed CLI is used. The -o users.toon flag specifies the output file name. Upon successful execution, a new file named users.toon will be created in your directory, containing the compact TOON representation:

[3]id,name,role:

1,Alice,admin

2,Bob,user

3,Charlie,userThis output clearly demonstrates the core TOON pattern: an array declaration [3] indicating three items, followed by a schema declaration id,name,role listing the fields, and then the actual data values presented row by row. This structure is precisely what aligns with the official design goals for efficient representation of tabular arrays of uniform objects.

Step 3: Using TOON as Model Input

The primary application of TOON is on the input side of your LLM pipeline. Instead of embedding a large, token-heavy JSON blob directly into your prompt, you would substitute it with the TOON version. The instruction to the LLM can remain straightforward, simply informing the model about the data format.

For example, a prompt incorporating TOON might look like this:

The following data represents a list of users in TOON format.

users[3]id,name,role:

1,Alice,admin

2,Bob,user

3,Charlie,user

Summarize the user roles and point out any unusual roles or patterns you observe.This approach leverages TOON’s efficiency for input. The LLM, especially if fine-tuned or instructed to understand such structured formats, can parse this information with significantly less token overhead compared to its JSON counterpart. This strategy has been validated in various informal community benchmarks, where LLMs successfully process TOON-formatted data, often with better context utilization due to the reduced token count.

The Dual Approach: Optimizing Input with TOON, Securing Output with JSON

One of the most crucial practical considerations when integrating TOON into an LLM workflow is the choice of output format. While TOON excels at optimizing input, the consensus among AI engineers and the recommendation from the TOON project itself is to largely retain JSON for LLM outputs, especially when those outputs are destined for consumption by other systems. This "dual approach" offers a pragmatic balance between input efficiency and output reliability.

The rationale for keeping JSON as the preferred output format is multifold:

- Robust Tooling and Ecosystem: JSON boasts an unparalleled ecosystem of parsers, validators, schema definitions (JSON Schema), and integration libraries across virtually every programming language and platform. This maturity ensures that any system receiving an LLM’s JSON output can reliably parse and process it without custom development or the risk of compatibility issues.

- Schema Enforcement and Validation: Modern LLM APIs and frameworks often provide mechanisms to enforce structured JSON output. Tools like Pydantic, Zod, or built-in API features allow developers to define an expected JSON schema, compelling the LLM to generate output that conforms to this structure. This is critical for data integrity, automated downstream processing, and preventing "hallucinations" in the output format. While TOON can be generated by LLMs, the tooling for its validation and programmatic parsing is still nascent compared to JSON.

- Human Readability for Debugging: Despite its verbosity, JSON remains highly readable for developers, which is invaluable during debugging and development phases when inspecting LLM outputs.

- LLM Familiarity: Many general-purpose LLMs are extensively trained on datasets containing JSON structures and are often fine-tuned to produce JSON outputs. Asking an LLM to generate TOON, a less common format, might introduce a higher propensity for formatting errors, thereby diminishing the reliability of the output.

In practice, the most robust and efficient pipeline architecture often looks like this:

- Input Stage: Your backend system, which might store data in JSON, converts relevant structured data into TOON format. This TOON data is then included in the prompt sent to the LLM.

- Processing Stage: The LLM processes the TOON-formatted input, performs its designated task (summarization, analysis, generation), and produces its response.

- Output Stage: The LLM is instructed to generate its structured response in JSON format. This JSON output is then consumed by your backend, another API, or a downstream application.

This pattern leverages TOON for maximum efficiency where it matters most (input tokens and context window) while relying on JSON’s established reliability and tooling for critical output parsing and integration.

The Imperative of Empirical Validation: Benchmarking TOON in Practice

While the theoretical advantages of TOON regarding token efficiency are compelling, it is crucial to temper enthusiasm with rigorous empirical validation. The AI landscape is replete with promising technologies that perform differently across various contexts. Therefore, simply switching to TOON based on general claims is ill-advised. A critical step for any organization considering TOON adoption is to conduct a small, focused benchmark within their specific LLM workflow.

Key aspects to benchmark include:

- Token Count Reduction: The most direct metric. Compare the token count of your typical JSON input payload against its TOON equivalent using the LLM’s specific tokenizer (e.g.,

tiktokenfor OpenAI models). Quantify the percentage reduction. This directly translates to potential cost savings. - Cost Savings: Translate token reductions into actual monetary savings based on your LLM provider’s pricing model.

- Inference Speed: While TOON primarily targets token count, reduced prompt length can sometimes lead to marginally faster inference, especially for very long prompts. Measure the end-to-end latency for your specific tasks.

- Model Comprehension and Accuracy: Crucially, verify that the LLM processes TOON-formatted data as effectively as JSON. Conduct A/B tests or controlled experiments where the same tasks are given to the LLM with JSON and TOON inputs. Evaluate the quality, accuracy, and completeness of the model’s responses. A format that saves tokens but degrades model performance is counterproductive.

- Data Shape Sensitivity: As noted, TOON’s benefits are heavily dependent on the shape of the data. Test with various data structures and volumes (e.g., small arrays, large uniform arrays, slightly irregular arrays) to identify the "sweet spot" where TOON provides maximum value.

The official TOON project itself positions token savings as a primary benefit, and early third-party coverage echoes these claims. However, community discussions and preliminary analyses often highlight that real-world results are highly contingent on the exact characteristics of the data, the specific LLM being used, and the nuances of the tokenization process.

Therefore, the most pertinent question is not a generic "Is TOON better than JSON?" but rather: "Is TOON demonstrably better for this specific LLM task, given my particular data structure and cost constraints?" Answering this question empirically through your own benchmarks is the only reliable path to informed adoption.

Beyond the Hype: Broader Implications for LLM Development

The advent of TOON is indicative of a broader trend within the rapidly evolving field of LLM engineering: a persistent drive towards optimization, efficiency, and specialized solutions. As LLMs become more integrated into production systems, the focus is shifting from merely achieving functionality to maximizing performance, reducing operational costs, and enhancing the robustness of AI applications.

Economic Implications: The economic impact of token savings cannot be overstated. For enterprises running LLMs at scale, even a modest percentage reduction in token usage across millions of API calls can translate into significant cost savings, potentially freeing up budgets for further AI innovation or larger context windows. This makes efficiency formats like TOON particularly attractive to cost-conscious organizations.

Evolution of Prompt Engineering: The availability of formats like TOON will likely influence prompt engineering practices. Engineers might become more adept at structuring data in token-efficient ways, potentially leading to a new class of "data-aware" prompt designs that blend natural language instructions with optimized data payloads.

Future of Data Formats for AI: TOON might represent the vanguard of a new generation of data serialization formats specifically tailored for AI workloads. Just as JSON emerged to address the limitations of XML for web services, and Parquet/ORC emerged for columnar data analytics, we may see more specialized formats optimized for vector databases, multimodal AI inputs, or other unique AI data structures. This signals a maturation of the AI engineering discipline, moving beyond general-purpose tools to purpose-built solutions.

Role of Open-Source Innovation: The development and open-source nature of projects like TOON are crucial catalysts for innovation. By providing accessible tools and encouraging community contributions, these initiatives accelerate the discovery and adoption of best practices in a field that is still very much in its infancy.

Conclusion: A Targeted Solution for a Growing Problem

TOON is not intended as a universal replacement for JSON, nor does it seek to overhaul established data architectures. Instead, it presents itself as a precisely targeted optimization for a specific and increasingly pressing problem: the wasteful expenditure of tokens on redundant JSON structure within LLM prompts. For developers and organizations whose LLM pipelines frequently involve passing large volumes of repeated, structured records to AI models, TOON offers a compelling opportunity to enhance efficiency and reduce operational costs.

Its utility, however, is contextual. If your LLM payloads are small, inherently irregular, or characterized by deep nesting rather than uniform arrays, JSON may very well remain the superior choice due to its ubiquity and robust tooling. The most intelligent adoption strategy involves a nuanced approach: retain JSON where it demonstrably performs well, selectively deploy TOON where its token-saving advantages are most pronounced (i.e., for packing large, uniform structured inputs into prompts), and critically, benchmark its performance against your specific tasks and data before committing to widespread integration. This pragmatic, data-driven approach will ensure that the benefits of token efficiency are realized without compromising the reliability or performance of your sophisticated LLM applications.

Kanwal Mehreen is a machine learning engineer and a technical writer with a profound passion for data science and the intersection of AI with medicine. She co-authored the ebook "Maximizing Productivity with ChatGPT". As a Google Generation Scholar 2022 for APAC, she champions diversity and academic excellence. She’s also recognized as a Teradata Diversity in Tech Scholar, Mitacs Globalink Research Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having founded FEMCodes to empower women in STEM fields.

Leave a Reply