The Evolving Landscape of Data Science and the Need for a Focused Approach

The data science domain has grown rapidly over the past decade, transforming from a niche academic discipline into a cornerstone of modern business strategy. As enterprises across sectors leverage data for decision-making, the demand for skilled data scientists continues to outpace supply. Labor market analyses, including those by LinkedIn and the U.S. Bureau of Labor Statistics, consistently project double-digit growth in data science roles over the next decade. However, this rapid expansion has also created a ‘noise problem’ for beginners: the overwhelming array of tools, algorithms, and methodologies makes it hard to identify the most crucial first steps.

The "2026 Data Science Starter Kit" addresses this challenge head-on, acknowledging that many newcomers, often with foundational Python knowledge and an initial foray into libraries like Pandas, quickly encounter decision paralysis. The article’s core premise is to cut through the extraneous information, offering a clear, structured curriculum designed to transform raw data into actionable intelligence efficiently. The ultimate objective is to equip individuals with the skills necessary to extract knowledge and insights from data, driving concrete business actions and informed decisions.

Embracing the Pareto Principle: The 80/20 Rule in Data Science Education

A central tenet of the "Starter Kit" is the application of the Pareto Principle, or the 80/20 rule, to the learning journey. This principle, which posits that 80% of effects come from 20% of causes, is presented as a guiding philosophy for aspiring data scientists. In practical terms, this means that a select 20% of concepts and tools will be utilized for approximately 80% of real-world tasks encountered in the profession.

This insight serves as a critical counterpoint to the common beginner’s pitfall of attempting to master every algorithm, library, or mathematical proof. Such an exhaustive approach frequently leads to burnout and delayed entry into the workforce. Instead, the "Starter Kit" advocates for a concentrated focus on core, high-impact skills that deliver the majority of results. As industry experts frequently attest, the ability to build and deploy two tangible projects, coupled with strategic professional networking (e.g., LinkedIn engagement) and consistent job applications, forms a more effective pathway to employment than a mere accumulation of certifications. This pragmatic, project-centric methodology ensures that learning is directly aligned with industry demands, distinguishing job-ready candidates from those with purely theoretical knowledge.

The Four Pillars of Data Analytics: A Framework for Value Extraction

To provide a robust conceptual framework, the guide delves into the four types of data analytics, often referred to as the four pillars of data analytics maturity. Understanding these progressive stages is fundamental to approaching any data problem systematically and deriving maximum value.

- Descriptive Analytics: This foundational pillar answers the question, "What happened?" It involves summarizing historical data to identify trends and patterns. Examples include calculating average monthly sales, tracking website traffic, or determining customer conversion rates. Descriptive analytics provides a comprehensive snapshot of past performance, serving as the basis for further inquiry.

- Diagnostic Analytics: Moving beyond mere description, diagnostic analytics addresses, "Why did it happen?" This stage requires deeper investigation to uncover the root causes of observed outcomes. If a company experiences a decline in customer retention, diagnostic analytics would involve breaking down the problem by geographical region, product line, or customer segment to pinpoint the specific factors contributing to the decline.

- Predictive Analytics: This is where the focus shifts to the future, answering, "What is likely to happen?" Leveraging machine learning algorithms, predictive analytics identifies patterns in historical data to forecast future events. Common applications include predicting customer churn, forecasting sales, and identifying potential fraud risks. This stage transforms historical data into actionable foresight.

- Prescriptive Analytics: The most advanced stage, prescriptive analytics, tackles the question, "What should we do about it?" It employs sophisticated techniques like simulations and optimization to recommend specific actions or interventions. For instance, it might suggest the optimal pricing strategy for a new product, recommend personalized marketing offers to retain at-risk customers, or optimize supply chain logistics. Prescriptive analytics moves beyond prediction to provide concrete, data-driven recommendations for future action.

The "Starter Kit" underscores that a data scientist’s learning journey typically progresses through these pillars, beginning with descriptive tasks and gradually advancing towards predictive and prescriptive challenges, building complexity and impact along the way.
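The first two pillars can be illustrated with a minimal Pandas sketch. The dataset, column names, and figures below are invented for illustration and do not come from the guide; the point is only that a descriptive summary and a diagnostic breakdown are often the same metric viewed at different levels of detail.

```python
import pandas as pd

# Hypothetical sales records; every value is made up for illustration.
sales = pd.DataFrame({
    "month":   ["Jan", "Jan", "Feb", "Feb", "Mar", "Mar"],
    "region":  ["North", "South", "North", "South", "North", "South"],
    "revenue": [100.0, 80.0, 110.0, 60.0, 120.0, 40.0],
})

# Descriptive ("What happened?"): total revenue per month.
# sort=False keeps the months in their order of appearance.
monthly_total = sales.groupby("month", sort=False)["revenue"].sum()

# Diagnostic ("Why did it happen?"): break the same metric down by region
# and compare the first and last month for each region.
by_region = sales.pivot_table(
    index="region", columns="month", values="revenue", aggfunc="sum"
)[["Jan", "Feb", "Mar"]]
change = by_region["Mar"] - by_region["Jan"]

print(monthly_total)  # overall revenue declines month over month
print(change)         # the decline comes entirely from one region
```

In this toy data the overall decline is explained by the South region alone, which is the kind of finding a diagnostic breakdown is meant to surface before any predictive model is built.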

Prioritizing Core Skills: The First Steps Towards Proficiency

The "Starter Kit" outlines a critical set of "survival skills" to be mastered within the initial two months of dedicated learning, forming the bedrock of a successful data science career.

- Mastering Programming and Data Wrangling: Proficiency in Python is paramount. This includes not just basic syntax but also adeptness with essential libraries such as Pandas for data manipulation and NumPy for numerical operations. Data wrangling—the process of cleaning, transforming, and structuring raw data—is often cited as consuming 60-80% of a data scientist’s time, making its mastery indispensable.

- Performing Data Exploration and Visualization: The ability to explore data visually and quantitatively is crucial for uncovering insights and communicating findings. Libraries like Matplotlib and Seaborn for static visualizations, and potentially Plotly or Bokeh for interactive ones, enable data scientists to identify trends, anomalies, and relationships within datasets. Effective data visualization translates complex data into understandable narratives.

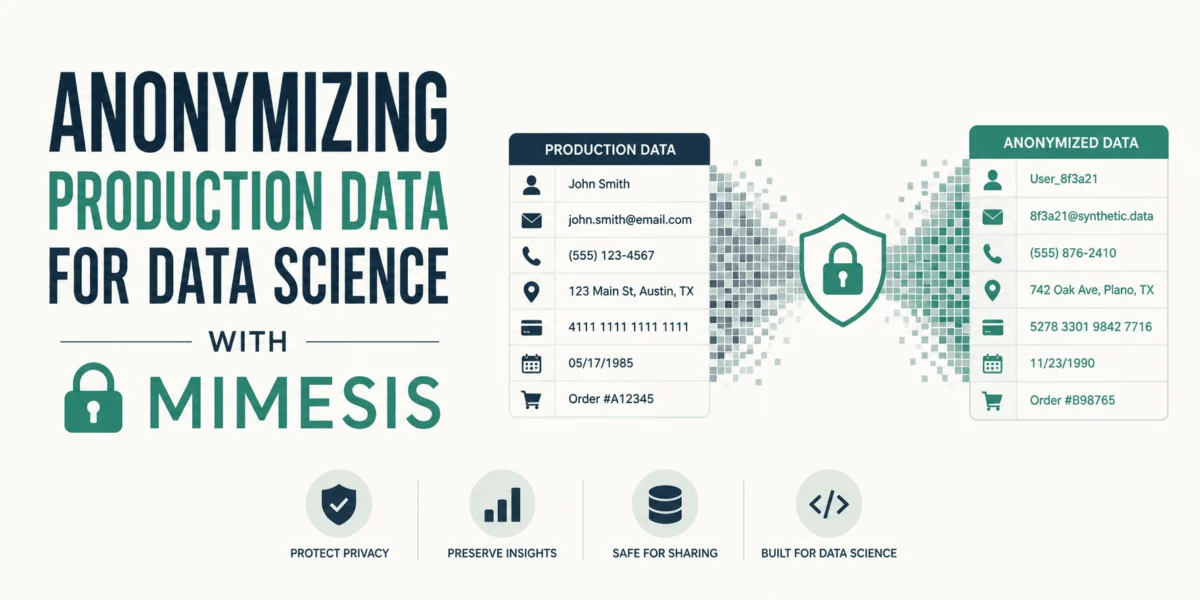

- Learning SQL and Data Hygiene: SQL (Structured Query Language) remains the universal language for interacting with databases, a ubiquitous component of almost all data-driven organizations. A solid understanding of SQL for querying, joining, and aggregating data is non-negotiable. Concurrently, practicing good data hygiene—ensuring data quality, consistency, and integrity—prevents erroneous analyses and builds trust in data-driven insights.

- Building the Statistical Foundation: While a Ph.D. in statistics is not required, a robust conceptual understanding of key statistical areas is essential. This includes descriptive statistics (mean, median, mode, variance), inferential statistics (hypothesis testing, confidence intervals), and basic probability theory. Statistical thinking allows data scientists to interpret results accurately, account for variability, and avoid drawing spurious conclusions. It provides the framework for understanding the significance and reliability of data-driven insights.
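The SQL, data hygiene, and descriptive statistics skills listed above can be sketched together using only the Python standard library. The tables, column names, and values are hypothetical, and an in-memory SQLite database stands in for whatever database an organization actually uses.

```python
import sqlite3
import statistics

# In-memory database with two hypothetical tables (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, segment TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'retail'), (2, 'retail'), (3, 'enterprise');
    INSERT INTO orders VALUES
        (1, 1, 20.0), (2, 1, 30.0), (3, 2, 25.0),
        (4, 3, 200.0), (5, 3, NULL), (6, 3, 220.0);
""")

# Data hygiene: count orders with a missing amount before analysing anything.
missing = conn.execute(
    "SELECT COUNT(*) FROM orders WHERE amount IS NULL"
).fetchone()[0]

# Querying, joining, and aggregating: order count and average amount per segment,
# excluding the rows that failed the hygiene check.
rows = conn.execute("""
    SELECT c.segment, COUNT(o.id) AS n_orders, AVG(o.amount) AS avg_amount
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    WHERE o.amount IS NOT NULL
    GROUP BY c.segment
    ORDER BY c.segment
""").fetchall()

# Descriptive statistics on the cleaned amounts.
amounts = [r[0] for r in conn.execute(
    "SELECT amount FROM orders WHERE amount IS NOT NULL ORDER BY id"
)]
mean_amount = statistics.mean(amounts)
median_amount = statistics.median(amounts)
conn.close()

print(missing, rows, mean_amount, median_amount)
```

The gap between the mean and the median here reflects two large enterprise orders pulling the mean upward, a small example of why statistical thinking matters when interpreting a single summary number.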

Python’s Dominance: A Strategic Choice for 2026 and Beyond

The perennial debate between Python and R for data science is decisively addressed, with the "Starter Kit" firmly recommending Python for beginners in 2026. While R excels in statistical analysis and niche academic research, Python’s versatility, scalability, and expansive ecosystem make it the preferred choice for real-world, large-scale applications. Python’s robust libraries for machine learning (Scikit-learn, TensorFlow, PyTorch), web development (Flask, Django), and general-purpose programming offer a seamless transition from data analysis to model deployment. For individuals already possessing some Python knowledge, doubling down on this language represents the most efficient and career-advantageous use of their learning time.

A 6-Month Action Plan for Job Readiness: A Chronological Pathway

The "2026 Data Science Starter Kit" culminates in a practical, month-by-month action plan, adapted from successful industry roadmaps, designed to transform a beginner into a job-ready candidate within half a year.

- Months 1-2: Building the Foundation. This phase is dedicated to mastering Python fundamentals, data structures, and object-oriented programming. Concurrently, intense focus is placed on Pandas for data manipulation, NumPy for numerical operations, and SQL for database interaction. Data visualization with Matplotlib and Seaborn is also prioritized. The goal is to complete several small, verifiable projects showcasing proficiency in data cleaning, exploration, and basic reporting.

- Months 3-4: Mastering Machine Learning Basics. With a solid foundation, these months introduce core machine learning concepts. This includes supervised learning (regression, classification), unsupervised learning (clustering), and model evaluation metrics. Scikit-learn becomes the primary tool for implementing algorithms. Emphasis is placed on understanding model assumptions, bias-variance trade-off, and feature engineering. Each concept learned should be immediately applied in a practical project, demonstrating the ability to build and evaluate predictive models.

- Month 5: Focusing on Deployment. This crucial stage bridges the gap between model development and real-world application. Learners are guided to understand the basics of MLOps (Machine Learning Operations) and deploy at least one machine learning model. This might involve using frameworks like Flask or Streamlit to create a simple web application that consumes the model, potentially hosted on a basic cloud platform or locally. This step demonstrates the ability to deliver value beyond just theoretical model building.

- Month 6: Creating the Job-Ready Portfolio. The final month is dedicated to refining existing projects, developing one or two capstone projects that showcase a comprehensive skill set (from data ingestion to deployment), and preparing for the job market. This includes building a strong GitHub portfolio, optimizing a LinkedIn profile, crafting a compelling resume, and practicing interview skills, particularly behavioral and technical questions related to projects. Networking and engaging with the data science community are also encouraged.
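The Month 5 deployment step might look roughly like the following Flask sketch. Everything specific here is an assumption made for illustration: the `/predict` route, the `days_inactive` feature, and the toy churn data are hypothetical, and the model is trained inline only to keep the example self-contained. A real project would more likely load a previously saved model from disk.

```python
from flask import Flask, jsonify, request
from sklearn.linear_model import LogisticRegression

# Toy training data (made up): one feature, days since last activity,
# and a label indicating whether the customer churned.
X = [[1.0], [2.0], [3.0], [10.0], [12.0], [15.0]]
y = [0, 0, 0, 1, 1, 1]
model = LogisticRegression().fit(X, y)

app = Flask(__name__)


@app.route("/predict", methods=["POST"])
def predict():
    """Return a churn probability for a JSON body like {"days_inactive": 14}."""
    payload = request.get_json(silent=True) or {}
    try:
        value = float(payload["days_inactive"])
    except (KeyError, TypeError, ValueError):
        # Reject requests that lack a usable numeric feature.
        return jsonify(error="expected a numeric 'days_inactive' field"), 400
    probability = model.predict_proba([[value]])[0][1]
    return jsonify(churn_probability=float(probability))

# To serve locally, save this file as app.py and run: flask --app app run
```

Even a small service like this exercises input validation, serialization, and a request/response contract, which are the parts of deployment that a notebook alone never touches.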

Strategic Omission: What to Ignore to Accelerate Learning

Perhaps as important as what to learn is what to consciously ignore during the initial stages. The "Starter Kit" provides invaluable guidance on avoiding common detours that can consume hundreds of hours without yielding proportional career benefits for beginners.

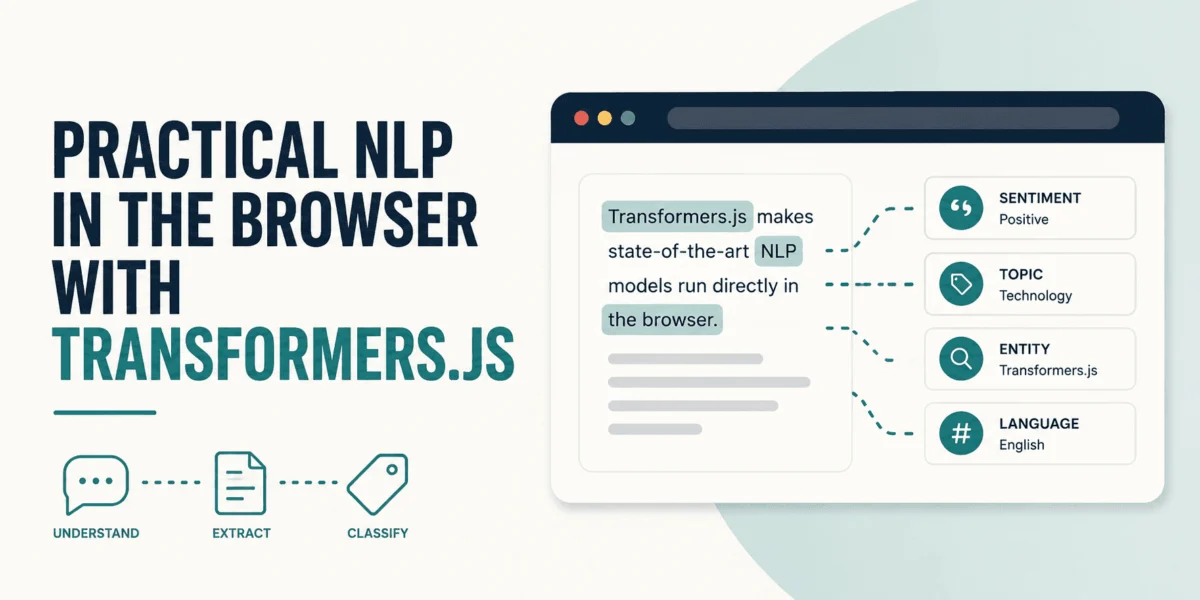

- Delaying Deep Learning: Unless targeting specialized roles in computer vision or natural language processing, beginners can safely postpone deep learning, including neural networks, transformers, and the mechanics of backpropagation. By the guide's estimate, a solid grasp of traditional machine learning algorithms via Scikit-learn is sufficient for roughly 80% of entry-level data science positions, and is far more frequently applied.

- Skipping Advanced Mathematical Proofs: While a conceptual understanding of underlying mathematical principles (e.g., gradients in optimization) is beneficial, spending extensive time proving complex theorems from scratch is unnecessary. Modern libraries abstract away much of the low-level mathematics, allowing data scientists to focus on application and interpretation.

- Avoiding Framework Hopping: The temptation to learn every new framework that emerges can be a significant time sink. The recommendation is to master one core library, such as Scikit-learn. Once the fundamental principles of model fitting, prediction, and evaluation are understood, adapting to other specialized libraries like XGBoost or LightGBM becomes a much simpler task.

- Pausing Kaggle Competitions (as a Beginner): While Kaggle offers valuable learning opportunities, beginners often fall into the trap of spending weeks optimizing models for minute accuracy gains by ensembling dozens of complex algorithms. This process, while technically challenging, rarely reflects the pragmatic, business-problem-solving nature of real-world data science. A clean, well-documented, deployable project that solves a clear business problem is far more valuable to an employer than a high Kaggle leaderboard rank achieved through esoteric methods.

- Deferring Mastery of Every Cloud Platform: The vast ecosystems of AWS, Azure, and Google Cloud Platform are complex. Aspiring data scientists do not need to be experts in all three simultaneously. A foundational understanding of cloud concepts and perhaps basic familiarity with one platform is sufficient. Specific cloud skills are often learned on the job, as companies typically standardize on a particular provider.
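The point about mastering one core library before hopping between frameworks can be made concrete with a short Scikit-learn sketch. The synthetic dataset and the choice of models are assumptions for illustration; `GradientBoostingClassifier` stands in here for libraries like XGBoost or LightGBM, which provide Scikit-learn-compatible estimator classes that follow the same fit, predict, and evaluate pattern.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic classification data, generated only for this illustration.
X, y = make_classification(
    n_samples=500, n_features=10, n_informative=5, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Two different algorithms behind the same estimator interface.
models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "gradient_boosting": GradientBoostingClassifier(random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)            # model fitting
    predictions = model.predict(X_test)    # prediction on held-out data
    scores[name] = accuracy_score(y_test, predictions)  # evaluation

print(scores)
```

Because the loop body never changes, swapping in another compatible estimator is a one-line edit to the `models` dictionary, which is why the fundamentals transfer so readily.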

Concluding Remarks and Broader Implications

The "2026 Data Science Starter Kit" presents a compelling argument for a focused, practical, and project-driven approach to entering the data science field. By strategically applying the 80/20 rule, understanding the four pillars of analytics, and adhering to a disciplined 6-month roadmap, beginners can efficiently acquire the high-impact skills demanded by the industry. The kit’s emphasis on Python, SQL, statistical fundamentals, and clear communication through deployed projects directly addresses the skill gaps frequently highlighted by employers.

This roadmap signifies a broader industry trend towards practical application and demonstrable value, moving away from purely academic or theoretical pursuits for entry-level roles. By providing a clear path and identifying common pitfalls, the "Starter Kit" helps democratize access to a high-growth career, reducing burnout and accelerating the transition from learner to practitioner. Ultimately, it reinforces the core objective of data science: to transform raw data into actionable insights that drive strategic decisions and tangible business outcomes. The journey, as the guide aptly concludes, begins with a single line of code and the commitment to build, deploy, and communicate.
