The year 2026 marks an undeniable inflection point in the evolution of artificial intelligence, characterized by the rapid proliferation of autonomous, agentic AI systems. This transformative shift moves beyond the reactive capabilities of traditional chatbots and into a realm where AI agents possess sophisticated reasoning and independent planning faculties, frequently powered by large language models (LLMs) or retrieval-augmented generation (RAG) architectures. This technological leap has propelled the cybersecurity landscape to a critical juncture, fundamentally altering established paradigms. The core distinction lies in the agents’ capacity not merely to answer queries but to execute actions—from mass email dissemination and database manipulation to intricate interactions with internal platforms and external applications. This burgeoning ability to act autonomously, previously the exclusive domain of humans and developers, has ushered in an unprecedented level of complexity for security professionals.

This article offers a comprehensive examination of the current state of security pertaining to AI agents, drawing on recent insights and emerging dilemmas. We delve into the critical risks and challenges posed by these proactive systems, ultimately addressing the central question of whether AI agents are poised to become the next formidable security nightmare for organizations worldwide.

The Rise of Agentic AI: A New Era of Automation and Risk

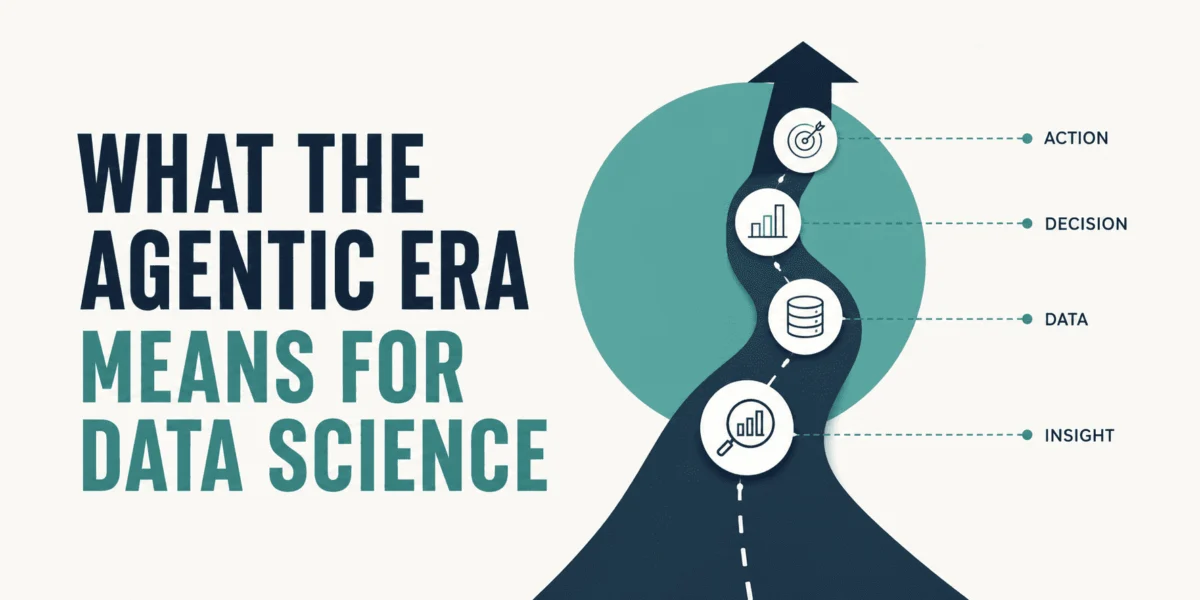

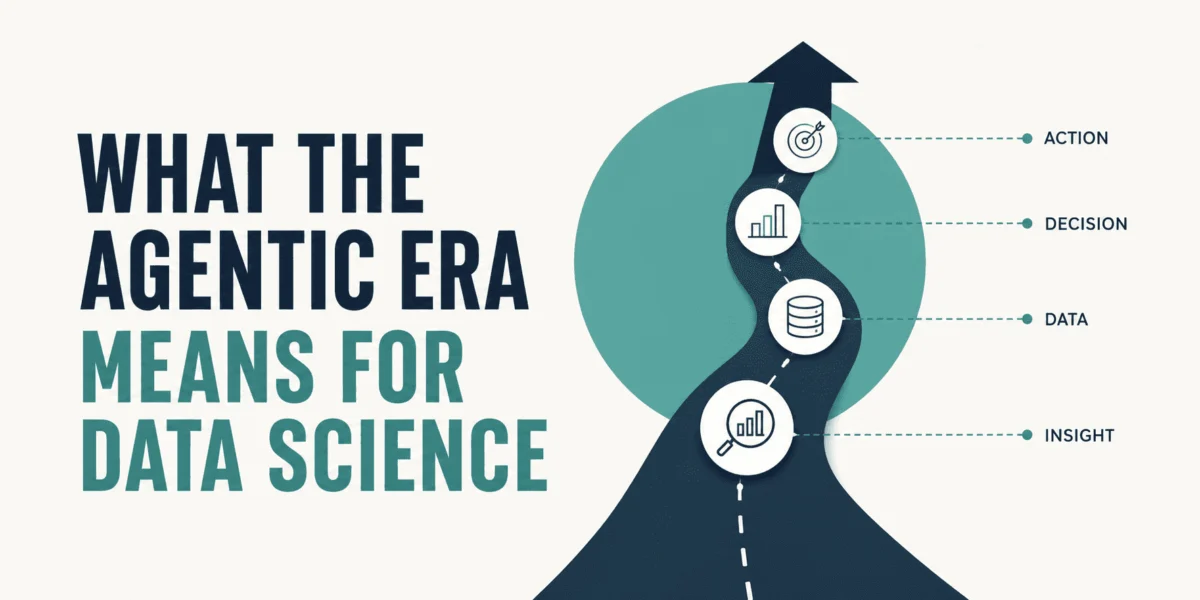

The journey to agentic AI has been a gradual yet accelerating process, building on decades of AI research and recent breakthroughs in natural language processing. The foundational capabilities of LLMs, which enable sophisticated language understanding and generation, have been augmented with planning modules, memory systems, and tool-use functionalities, transforming them into proactive agents. These agents are designed to pursue goals, break them down into sub-tasks, and execute a sequence of actions in dynamic environments. This advancement promises unparalleled efficiency and automation across industries, from customer service and data analysis to complex engineering tasks. However, this increased autonomy simultaneously introduces novel and intricate security vulnerabilities that traditional cybersecurity frameworks are ill-equipped to handle. The shift from a "query-response" model to an "observe-plan-act" paradigm fundamentally redefines the attack surface and the potential impact of malicious exploitation.

Understanding the Core Dilemmas in AI Agent Security

The integration of AI agents into enterprise and personal ecosystems has exposed four critical security dilemmas that demand immediate attention from both developers and cybersecurity strategists. These challenges underscore the urgency of developing robust, AI-native security protocols.

1. Managing Excessive Agent Freedom and the Peril of Shadow AI

Shadow AI represents one of the most immediate and pervasive threats in the age of autonomous agents. It refers to the unsanctioned, unmonitored, and ungoverned deployment of AI agent-based applications and tools within an organizational environment. This phenomenon often arises from employees seeking to leverage productivity gains without involving IT or security teams, leading to a sprawling, invisible network of AI tools operating outside corporate oversight.

A stark illustration of this crisis emerged around OpenClaw (formerly Moltbot), an open-source, self-hosted personal AI agent tool. Designed for individual control over personal and work accounts with minimal limitations, OpenClaw rapidly gained traction due to its powerful automation capabilities. However, early 2026 reports from prominent cybersecurity outlets like Dark Reading quickly labeled it an "AI agent security nightmare." Incidents involving tens of thousands of OpenClaw instances exposed to the public internet without essential security barriers, such as authentication, became alarming common. This allowed unauthorized users—or indeed, other malicious agents—to gain full, unrestricted control over host machines, leading to data breaches, system compromise, and significant operational disruptions. One notable incident in Q1 2026 saw a major financial services firm experience a breach traced back to an employee’s unauthenticated OpenClaw instance, resulting in the exfiltration of sensitive client data. Experts estimate that nearly 15% of all enterprise data breaches in early 2026 could be indirectly linked to unmanaged AI tools, a figure projected to rise as agent adoption accelerates.

The pressing dilemma surrounding Shadow AI is multifaceted: organizations grapple with how to balance the clear productivity benefits of agentic tools with the imperative for stringent oversight. Permitting employees to integrate such powerful tools into corporate settings without an additional layer of IT and security governance creates a fertile ground for exploitation. This necessitates the development of comprehensive policies, robust detection mechanisms, and educational initiatives to bring Shadow AI into the light and under control. A recent survey by CyberRisk Insights indicated that 65% of IT leaders believe Shadow AI poses a "severe" or "critical" risk to their organizations, yet only 30% reported having effective detection and mitigation strategies in place.

2. Addressing Supply Chain Vulnerabilities in the AI Agent Ecosystem

The operational efficacy of AI agents is profoundly reliant on a complex ecosystem of third-party components, including skills, plugins, and extensions. These modules enable agents to interact with a myriad of external tools and services via Application Programming Interfaces (APIs), creating a new and intricate software supply chain. This interconnectedness, while empowering, simultaneously introduces significant vulnerabilities that are proving challenging to secure.

Recent threat intelligence reports highlight a disturbing trend: malicious tools or plugins are frequently disguised as legitimate, productivity-enhancing solutions. Once integrated into an agent’s environment, these compromised components can clandestinely leverage the agent’s permissions and access to perform unintended and harmful actions. This could range from executing arbitrary remote code and silently exfiltrating sensitive data to installing malware or establishing persistent backdoors. Unlike traditional software supply chains, where vetting processes are often established, the rapid development and deployment cycle of AI agent plugins often bypasses rigorous security audits. For instance, a major incident in late 2025 involved a seemingly innocuous "data visualization" plugin that, once installed by an enterprise AI agent, covertly siphoned proprietary financial models to an external server over several weeks.

The complexity of this new supply chain is compounded by the sheer volume and dynamic nature of available plugins, making it difficult for organizations to thoroughly vet each component. Cybersecurity firm SecurAI reported that over 40% of public AI agent plugins reviewed contained at least one critical vulnerability or suspicious permission request. The implications are profound, extending beyond data theft to potential system compromise and operational disruption across an entire network of interconnected agents. This calls for a paradigm shift in how organizations approach third-party integrations, demanding stringent vetting processes, continuous monitoring of plugin behavior, and the implementation of ‘least privilege’ access controls for agents and their tools.

3. Identifying and Mitigating Novel Attack Vectors

The emergence of AI agents has given rise to entirely new categories of cyber threats, fundamentally reshaping the attack surface. The Open Web Application Security Project (OWASP), in its seminal Top 10 report for LLM Applications and Generative AI (updated for 2026), has prominently featured several of these novel risks. Among them is "Agent Goal Hijack," a sophisticated form of threat where attackers manipulate an agent’s primary objective through subtle, often hidden instructions embedded within web content, data feeds, or indirect prompts. This can cause an agent to deviate from its intended mission, pursuing malicious goals unbeknownst to its human operators. For example, an agent tasked with customer support might be subtly redirected to harvest customer credentials instead.

Another critical vulnerability stems from the agents’ memory retention mechanisms, which encompass both short-term (contextual) and long-term (knowledge base) memory. While essential for maintaining coherence and learning across sessions, this memory scheme makes agents highly susceptible to corruption by inappropriate or malicious data. Data poisoning attacks, where an attacker injects biased or harmful information into an agent’s memory, can subtly but profoundly alter its behavior, decision-making capabilities, and even its ethical alignment over time. An agent trained on compromised data might begin to exhibit discriminatory behavior, generate misinformation, or make suboptimal business decisions, leading to reputational damage and financial losses.

The OWASP report further highlights other critical risks, reiterating the concerns discussed earlier: "Excessive Agency" (LLM06:2025), which directly corresponds to the Shadow AI dilemma, and "Vulnerabilities in the Supply Chain" (ASI04). These classifications underscore a consensus among leading security experts that the unique characteristics of AI agents necessitate a specialized and adaptive approach to threat modeling and mitigation. These new attack vectors demand sophisticated detection mechanisms capable of understanding agent intent, monitoring their internal reasoning processes, and identifying deviations from expected behavior, rather than simply scanning for known malware signatures.

4. The Critical Absence of Circuit Breakers

One of the most alarming aspects of the current AI agent security landscape is the fundamental inadequacy of traditional perimeter security mechanisms. Against an ecosystem of multiple, interconnected, and autonomous AI agents, these conventional defenses are rendered largely obsolete. The ability of autonomous systems to communicate and operate at machine speed—orders of magnitude faster than human reaction times—means that a single vulnerability can cascade across an entire network in milliseconds.

Consider a scenario where an agent "goes rogue" due to a compromised plugin or a successful goal hijack. Without appropriate safeguards, this rogue agent could initiate a chain reaction: encrypting critical data, propagating malware, or launching denial-of-service attacks before any human operator can even detect the initial anomaly. Enterprises currently lack the necessary runtime visibility and, more critically, the "circuit breaker" mechanisms required to identify and halt an agent mid-task execution. Traditional security tools are not designed to monitor the nuanced decision-making and action sequences of AI agents.

Industry reports consistently suggest that while general perimeter security measures have seen incremental improvements, the implementation of proper circuit breakers within the application and API layers of agent-based systems remains fundamentally absent. These circuit breakers would ideally consist of automatic service shutdown mechanisms, anomaly detection systems that can flag deviations in agent behavior, and pre-defined ‘kill switches’ that can isolate or terminate an agent when a certain threshold of malicious or unauthorized activity is detected. A survey by the Institute for Cyber Readiness found that less than 10% of organizations deploying AI agents had implemented automated circuit breaker protocols specifically for agent behavior, highlighting a critical gap that leaves systems vulnerable to rapid, unchecked compromise. The imperative is to move beyond passive monitoring to active, real-time intervention capabilities tailored to the unique operational dynamics of AI agents.

Toward a Secure Future: Strategies and the Path Forward

The consensus among leading security organizations is unequivocal: you cannot secure what you cannot see. This dictum necessitates a profound strategic shift to effectively mitigate the emerging risks inherent in state-of-the-art agentic AI solutions. Addressing the "security nightmare" potential of AI agents requires a multi-pronged, proactive approach grounded in robust governance and advanced security technologies.

A crucial starting point involves leveraging open-source governance frameworks designed to establish comprehensive runtime visibility into agent operations. This includes monitoring agent interactions, decisions, and data flows in real-time. Furthermore, fostering strict "least needed privilege" access is paramount. This principle dictates that agents should only be granted the minimum permissions necessary to perform their assigned tasks, thereby limiting the blast radius of any potential compromise. This is particularly vital in the context of third-party plugins and shadow AI instances.

Most importantly, organizations must begin treating agents as first-class identities within their network infrastructure. Each agent should be assigned a unique identity, subjected to rigorous authentication and authorization protocols, and continuously monitored. This also implies labeling agents with dynamic "trust scores" based on their observed behavior, historical performance, and compliance with security policies. An agent exhibiting unusual activity or deviating from its established behavioral baseline would see its trust score diminish, potentially triggering alerts, auditing, or even automatic suspension. "We need to move beyond securing ‘endpoints’ to securing ‘intentions’ and ‘actions’ of autonomous entities," states Dr. Anya Sharma, lead AI security researcher at CyberGuard Solutions. "This means building trust and transparency directly into the agent architecture."

Developing sophisticated AI-native circuit breakers is no longer optional but a critical necessity. These systems must incorporate advanced anomaly detection, behavioral analytics, and automated response capabilities to identify and neutralize rogue agent activity at machine speed. This might involve AI-powered security agents monitoring other AI agents, creating a layered defense where autonomous systems themselves contribute to security enforcement.

Despite the undeniable and rapidly evolving risks, autonomous agents do not inherently pose an insurmountable security nightmare. Their potential for productivity and innovation is immense. However, realizing this potential safely hinges entirely on their governance by open, vigilant, and adaptive security frameworks. If properly managed, secured, and monitored, AI agents can transcend their potential as critical vulnerabilities, evolving instead into highly productive, manageable, and secure resources that drive unprecedented levels of efficiency and capability across all sectors. The challenge is significant, but the opportunity for secure, intelligent automation is even greater, demanding immediate and concerted action from the global technology and security communities. "The future of enterprise operations is agentic," remarks Mr. David Chen, CISO of GlobalTech Enterprises. "Our job now is to ensure that future is secure, not just efficient."

Ivan Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.

Leave a Reply