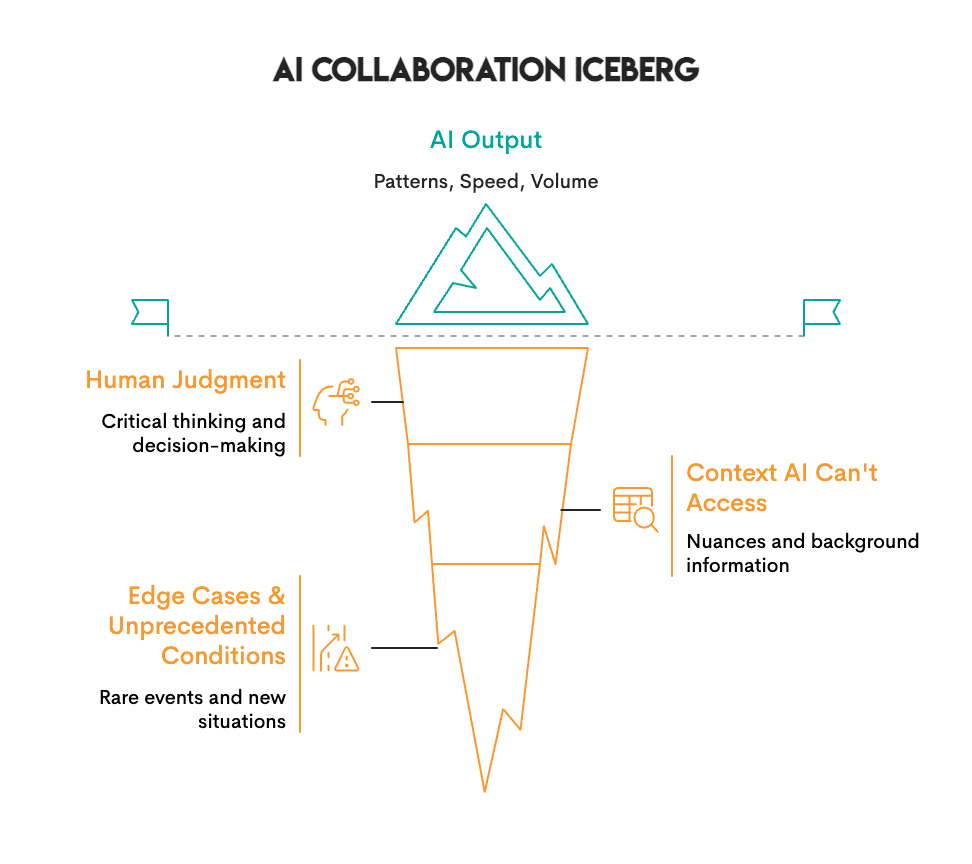

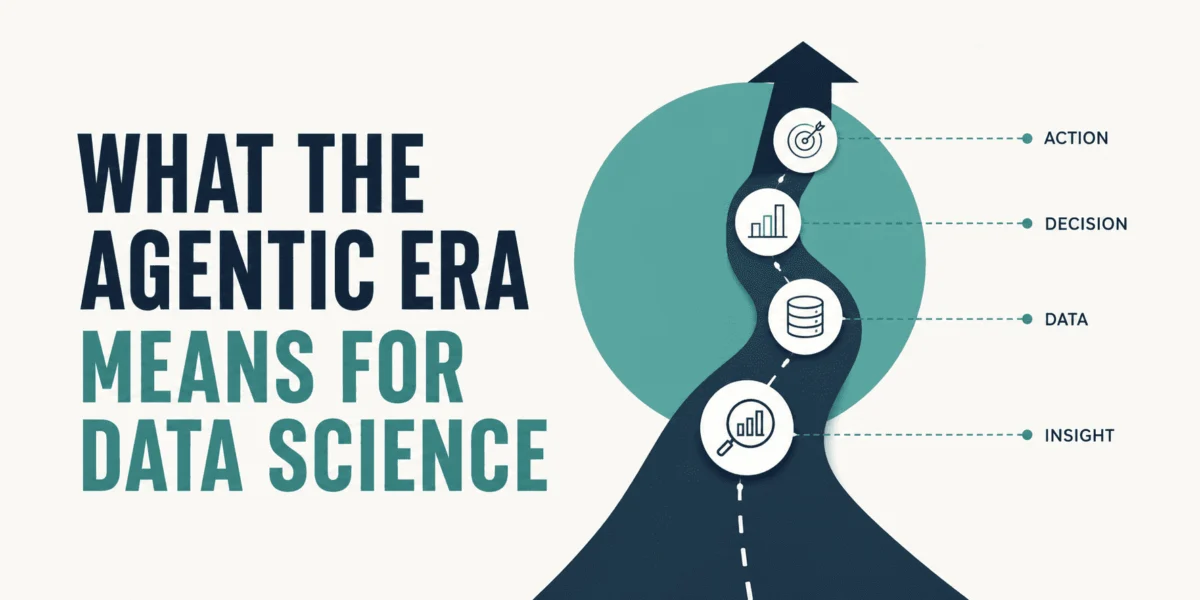

Artificial intelligence is moving beyond simple prompt-response interactions toward collaborative systems that combine human expertise with AI capabilities. While many data scientists, particularly those preparing for interviews, focus on generating immediate outputs without deeper review, leading companies are building environments where humans and AI work in concert: making decisions, surfacing insights, and verifying outcomes together. This relationship pairs AI’s ability to process vast datasets and identify patterns with human critical thinking, contextual understanding, and ethical judgment. Neither party dictates; instead, each checks and extends the other, which improves accuracy, efficiency, and innovation.

Observing Real-World Applications Across Industries

This collaborative paradigm is not merely theoretical; it is actively transforming critical sectors from scientific research and healthcare to complex business operations. The tangible benefits derived from these integrated systems underscore their profound impact.

Revolutionizing Scientific Research and Healthcare

In the realm of scientific discovery and medical diagnostics, collaborative AI systems are accelerating progress at an unprecedented pace. DeepMind’s AlphaFold, a groundbreaking AI program, exemplifies this shift by generating highly accurate protein structure predictions in hours or days, a task that traditionally consumed years of laborious laboratory research. AlphaFold’s success, initially announced in 2020 and continually refined, significantly advanced structural biology. However, the AI does not operate in isolation; human scientists remain indispensable. Their expertise is crucial for interpreting these complex predictions, discerning their biological significance, and designing the subsequent sequence of experiments to validate findings and translate them into actionable scientific knowledge. The AI provides a powerful hypothesis-generating engine, but human intellect guides the scientific inquiry.

Further illustrating this synergy, Insilico Medicine, a clinical-stage biotechnology company, has pushed the boundaries of drug development. Traditional drug discovery is an arduous process, often requiring four to five years to identify a single promising compound, involving extensive experimentation and high costs. Insilico Medicine developed an AI platform designed to generate and meticulously screen thousands of potential drug molecules, predicting their efficacy and safety profiles. This AI-driven pre-selection dramatically narrows the field of candidates. Subsequently, highly skilled medicinal chemists review the most promising molecules, leveraging their deep understanding of chemical structures and biological interactions to refine designs and formulate validation experiments. This human-AI collaboration has yielded remarkable results: the time required to discover a lead compound has been reduced by approximately 75%, shrinking the timeline from several years to just 18 months. This acceleration not only saves significant resources but also promises faster access to new therapies for patients.

The same pattern of enhanced precision and speed is evident in pathology with companies like PathAI. Diagnosing diseases like cancer from tissue samples traditionally relies on the meticulous examination of slides by pathologists, a process that can be time-consuming and susceptible to human fatigue or subtle variations in interpretation. PathAI’s AI system analyzes these tissue samples, pre-screening for anomalies and highlighting areas of concern. Pathologists then review these AI findings, integrating their vast clinical experience, contextual knowledge of patient history, and nuanced judgment to make a definitive diagnosis. A landmark study conducted at Beth Israel Deaconess Medical Center highlighted the effectiveness of this collaboration, reporting a 99.5% accuracy rate for cancer detections when pathologists worked with AI, compared to 96% when pathologists reviewed slides independently. Furthermore, the time required for slide review decreased significantly. This demonstrates that AI excels at identifying subtle patterns and reducing oversight due to fatigue, while human experts provide the critical clinical context and ultimate diagnostic responsibility.

Across these scientific and healthcare applications, a clear operational model emerges: AI excels at processing vast volumes of data, identifying intricate patterns, and operating at speeds unattainable by humans. Conversely, human experts provide invaluable judgment, contextual understanding, ethical oversight, and the ability to determine the true significance and implications of these patterns. AlphaFold predicts structures, but scientists interpret their meaning. Insilico’s AI generates drug candidates, but chemists synthesize and validate. PathAI flags suspicious cells, but pathologists provide the definitive diagnosis. In each instance, neither AI nor human effort alone could achieve the optimal outcome; it is their combined intelligence that drives superior results.

Enhancing Business Decisions and Operational Efficiency

Beyond scientific frontiers, collaborative AI is profoundly reshaping corporate operations and strategic decision-making, particularly in sectors dealing with high volumes of data and complex risk assessments.

Consider the legal department of JPMorgan Chase, one of the world’s leading financial institutions. Historically, their legal teams dedicated an astonishing 360,000 hours annually to manually reviewing contracts—a process that was not only slow and costly but also prone to human error, particularly with intricate regulatory compliance requirements. To address this challenge, JPMorgan developed COiN (Contract Intelligence), an artificial intelligence platform powered by natural language processing (NLP) and machine learning. COiN can rapidly scan legal documents, extract key provisions, identify unusual or questionable clauses, and categorize contractual terms within seconds. While COiN performs this high-speed analysis, human lawyers remain integral to the process. They review the specific items flagged by the AI, applying their legal acumen to interpret complex clauses, assess risk, and make final decisions. This collaborative approach has enabled JPMorgan to process contracts significantly faster, reduce compliance errors by an impressive 80%, and, crucially, reallocate their highly skilled attorneys’ time from repetitive document review to more strategic tasks like negotiation, client advisory, and legal strategy development.

Similarly, BlackRock, the world’s largest asset manager overseeing trillions of dollars in assets, faced an enormous challenge in managing risk across diverse global markets. Analyzing millions of potential risk scenarios daily, encompassing various asset classes and market dynamics, is a task beyond human capacity. In response, BlackRock developed Aladdin (Asset, Liability, Debt and Derivative Investment Network), an AI-based platform designed to aggregate and process immense quantities of market data, identifying potential risks and opportunities in real time. Aladdin’s sophisticated algorithms provide comprehensive analytics and scenario modeling. However, the platform does not make investment decisions autonomously. BlackRock’s experienced portfolio managers meticulously review Aladdin’s insights, combining the AI’s data-driven predictions with their own market intuition, macroeconomic understanding, and client-specific objectives to make all final asset allocations. This human-AI partnership has transformed risk analysis from a multi-day process into a real-time capability. Critically, BlackRock’s portfolios managed through this collaborative model, integrating Aladdin’s analytics with human judgment, have consistently outperformed strategies based purely on algorithmic execution or solely human intuition. The success of Aladdin has led to its widespread adoption, with over 200 other financial institutions now licensing the platform for their own risk management and investment operations.

These examples show a consistent pattern: AI excels at generating options, processing information at scale, and identifying anomalies. Yet it lacks inherent judgment and cannot question its own fundamental assumptions. JPMorgan’s lawyers ultimately decide on contract clauses, and BlackRock’s portfolio managers make the final investment calls. The AI serves as an indispensable assistant, augmenting human capability rather than replacing it.

The Design Philosophy of Collaborative AI Tools

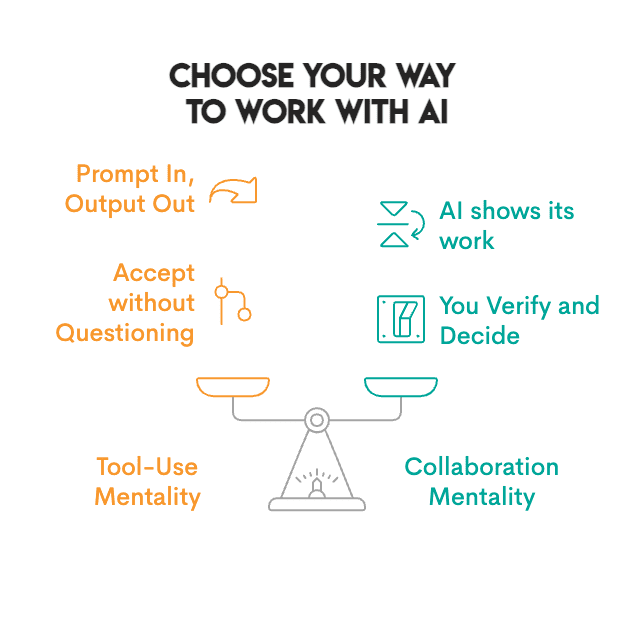

Not all AI tools are designed for effective collaboration. Many operate as "black boxes," delivering an output without transparency into their reasoning or data sources. True collaborative AI tools, however, are engineered to "show their work," fostering trust and enabling verification. This transparency is the bedrock of effective human-AI teamwork.

Collaborative AI tools empower users across various domains:

- General Purpose Assistants: Modern AI assistants built on large language models (LLMs) such as GPT-4 become collaborative when users prompt for sources, request step-by-step reasoning, or ask for alternative perspectives. The ability to audit the AI’s thought process, even if simplified, is key.

- Research and Analysis Platforms: Tools in this category often provide data lineage, source citations, or explain the statistical models used to arrive at conclusions. They might allow users to adjust parameters or explore different analytical pathways.

- Coding and Development Environments: AI-powered coding assistants like GitHub Copilot are collaborative when they suggest code snippets, explain potential bugs, or offer refactoring options, allowing developers to accept, modify, or reject suggestions based on their expertise and project requirements.

- Data Science Workflows: Automated Machine Learning (AutoML) platforms that detail feature engineering steps, explain model selection rationale, or provide interpretability tools (e.g., SHAP values) for model predictions enable data scientists to understand and validate the AI’s recommendations.

- Writing and Communication Aids: Advanced grammar and style checkers or content generation tools that not only suggest edits but also explain the reasoning behind them, or allow iterative refinement and customization, move beyond simple correction to true co-creation.

The defining characteristic of these tools is their commitment to transparency. They enable users to verify findings, scrutinize the underlying logic, and ultimately decide whether to accept, adjust, or reject the AI’s output. This interactive and verifiable process is what distinguishes a mere tool from a genuine collaborator.

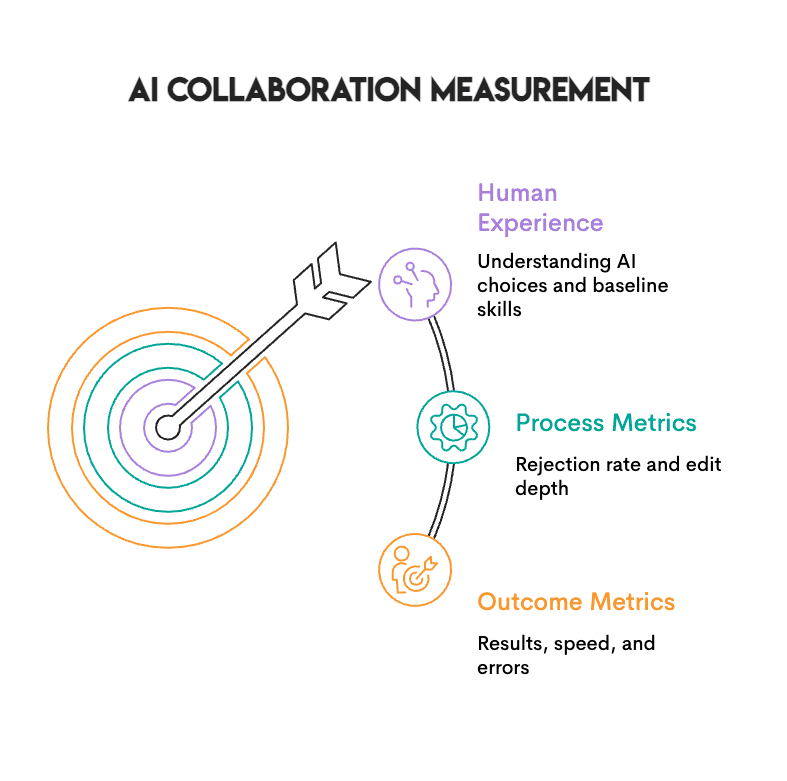

Measuring the Success of Human-AI Collaboration

Evaluating the effectiveness of human-AI collaboration requires a multi-faceted approach, moving beyond simple output metrics to assess the quality of the interaction and the overall performance enhancement. Three primary types of metrics are crucial:

- Accuracy and Quality Improvement: This involves comparing the outcomes of human-AI collaboration against purely human or purely AI-driven efforts. For instance, in diagnostic scenarios, it means measuring the reduction in false positives or negatives. In decision-making contexts, it could be the improved profitability or reduced risk. The Beth Israel Deaconess Medical Center study, showing 99.5% accuracy with AI vs. 96% independently, is a prime example.

- Efficiency Gains: Metrics here focus on time savings, resource optimization, and increased throughput. Examples include reduced processing times for legal documents (JPMorgan’s COiN), faster drug discovery timelines (Insilico Medicine), or quicker risk assessments (BlackRock’s Aladdin). Quantifying the hours saved or the percentage reduction in task completion time provides clear evidence of efficiency.

- Enhanced Human Capabilities and Satisfaction: This less tangible but equally important metric assesses how AI empowers human workers. Does it reduce cognitive load, free up time for more complex or creative tasks, or lead to higher job satisfaction? It also includes the generation of novel insights or options that humans might not have considered independently.
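The first two metric types reduce to simple arithmetic, sketched below in Python. The function names `error_reduction` and `time_saved` are hypothetical, chosen for illustration only. The accuracy figures are the ones quoted for the Beth Israel Deaconess study; the durations in the second call are made-up numbers, not figures from any of the companies discussed.

```python
def error_reduction(baseline_accuracy: float, collaborative_accuracy: float) -> float:
    """Fraction of the baseline's errors that the collaborative workflow removes."""
    baseline_error = 1.0 - baseline_accuracy
    collaborative_error = 1.0 - collaborative_accuracy
    if baseline_error <= 0:
        raise ValueError("baseline accuracy must be below 1.0")
    return (baseline_error - collaborative_error) / baseline_error


def time_saved(before: float, after: float) -> float:
    """Fraction of time saved; both durations must use the same unit."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


if __name__ == "__main__":
    # Accuracy figures quoted in the article: 96% alone vs. 99.5% with AI.
    print(f"Errors removed: {error_reduction(0.96, 0.995):.1%}")
    # Hypothetical task durations in hours, for illustration only.
    print(f"Time saved: {time_saved(40, 10):.1%}")
```

Framing accuracy as error reduction can make a gain easier to read: moving from 96% to 99.5% is a 3.5-point improvement, but it means the error rate falls from 4% to 0.5%, which removes 87.5% of the baseline errors.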

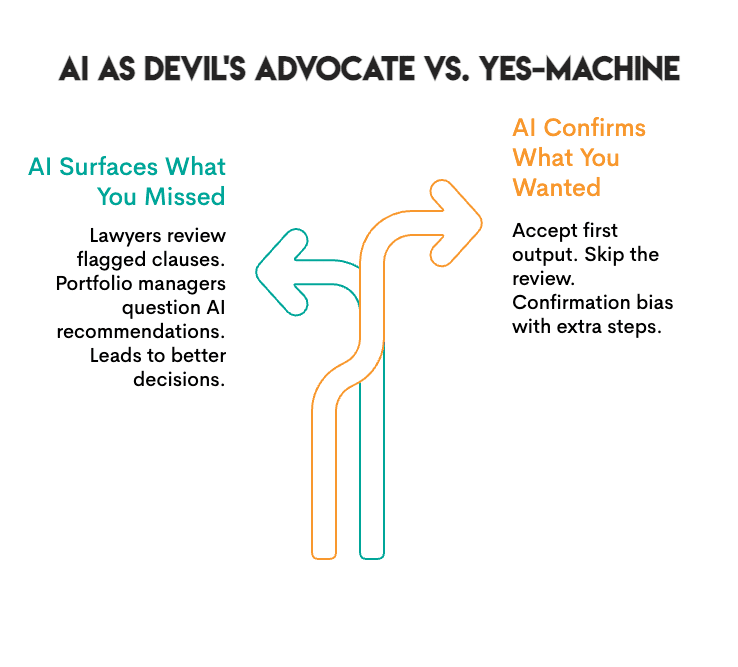

A practical "check" for genuine collaboration is to observe the frequency of output acceptance. If an individual or team consistently accepts the AI’s first output without critical review or modification, the interaction leans more towards rubber-stamping than true collaboration. To maintain a critical perspective and understand the AI’s true contribution, periodically working without AI support can help establish a baseline, allowing individuals to discern their own contributions from those provided by the tool.
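The acceptance check described above can be approximated with a small script, assuming a team logs what happened to each AI suggestion. Everything here is a hypothetical sketch: the three decision labels, the `acceptance_summary` function, and the 0.9 threshold are illustrative assumptions, not an established standard.

```python
from collections import Counter
from typing import Dict, Iterable, Union

# Hypothetical labels a team might log for each AI suggestion.
DECISIONS = {"accepted", "modified", "rejected"}


def acceptance_summary(
    decisions: Iterable[str], threshold: float = 0.9
) -> Dict[str, Union[int, float, bool]]:
    """Summarise how often AI output was accepted without any change.

    The threshold is an arbitrary illustrative cut-off, not a benchmark.
    """
    counts = Counter(decisions)
    unknown = set(counts) - DECISIONS
    if unknown:
        raise ValueError(f"unknown decision labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no decisions logged")
    rate = counts["accepted"] / total
    return {
        "total": total,
        "unmodified_acceptance_rate": rate,
        "possible_rubber_stamping": rate > threshold,
    }


if __name__ == "__main__":
    log = ["accepted"] * 19 + ["modified"]
    print(acceptance_summary(log))
```

A high rate is a prompt for a closer look rather than proof of a problem: a well-tuned tool on routine tasks may deserve frequent acceptance, which is why the no-AI baseline mentioned above remains a useful complement.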

Implementing Effective Practices for Seamless Integration

Organizations that successfully integrate human-AI collaboration often adhere to a set of common best practices designed to optimize synergy and mitigate potential pitfalls:

- Clear Role Definition and Trust Building: Establish distinct roles for AI and humans, outlining where AI provides data, patterns, or options, and where humans apply judgment, context, and make final decisions. Fostering a culture of trust requires demonstrating AI’s transparency and reliability, alongside training humans to understand its capabilities and limitations.

- Continuous Training and Skill Development: Equip employees with the necessary skills to interact effectively with AI systems. This includes training in prompt engineering for generative AI, critical evaluation of AI outputs, and understanding the statistical or algorithmic underpinnings of decision support systems.

- Robust Feedback Mechanisms: Implement systems where human feedback on AI outputs can be systematically collected and used to refine and improve the AI models over time. This iterative learning process is crucial for enhancing AI accuracy and relevance.

- Emphasis on Verification and Critical Thinking: Cultivate a workplace culture that encourages questioning AI outputs, cross-referencing information, and seeking alternative explanations. This proactive skepticism is vital for catching errors, biases, or misinterpretations that AI systems might generate.

- Ethical Frameworks and Accountability: Develop clear ethical guidelines for AI deployment, addressing issues such as bias, privacy, and accountability. Ensure that ultimate responsibility for decisions made with AI assistance always rests with human actors.
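Several of these practices (clear roles, feedback collection, and human accountability) can be reflected directly in how decisions are recorded. The sketch below is one possible shape for such a record, not a description of how any company named above implements it. The class names, the `min_confidence` cut-off of 0.8, and the assumption that the AI reports a confidence score between 0 and 1 are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Suggestion:
    item_id: str
    ai_label: str
    ai_confidence: float  # assumed to lie in [0, 1]


@dataclass
class Decision:
    item_id: str
    ai_label: str
    final_label: str
    reviewer: str     # the human accountable for the final call
    overridden: bool  # True when the human changed the AI's label


def needs_close_review(s: Suggestion, min_confidence: float = 0.8) -> bool:
    """Flag low-confidence suggestions for extra human attention."""
    return s.ai_confidence < min_confidence


def record_decision(
    s: Suggestion, reviewer: str, final_label: Optional[str] = None
) -> Decision:
    """Record the human's final call; omitting final_label keeps the AI's label."""
    if not reviewer:
        raise ValueError("every decision needs a named human reviewer")
    label = s.ai_label if final_label is None else final_label
    return Decision(s.item_id, s.ai_label, label, reviewer, label != s.ai_label)


def overrides(decisions: List[Decision]) -> List[Decision]:
    """Decisions where the human disagreed: candidate feedback for the model."""
    return [d for d in decisions if d.overridden]


if __name__ == "__main__":
    s = Suggestion("contract-2", "standard", 0.55)
    d = record_decision(s, "reviewer_b", final_label="unusual")
    print(needs_close_review(s), d.overridden)
```

Requiring a reviewer name on every record keeps responsibility with a person, and the list of overrides gives a starting point for the feedback loop described in the list above.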

Concluding Thoughts: Navigating the Future of Work

Human-AI teaming represents a fundamental shift in how work is conceived and executed. We are moving beyond simply issuing commands to systems; instead, we are learning to interact with intelligent agents that offer input, analyze, and propose solutions. This transformative approach necessitates the acquisition of new skills, particularly the discerning judgment required to understand when to trust AI and when to rigorously question its findings. It involves a continuous evaluation of processes to ensure they genuinely yield superior results, rather than merely creating an illusion of productivity. Crucially, it demands that human professionals remain intellectually sharp and engaged enough to identify and rectify errors when they inevitably occur.

Teams that successfully develop these collaborative dynamics with AI are demonstrably producing better outcomes. They are quicker to identify errors, consider a broader spectrum of options, and achieve levels of innovation previously unattainable. Conversely, organizations that fail to cultivate these skills risk either underutilizing AI, thereby missing its immense potential, or becoming overly reliant on it, rendering their human workforce incapable of independent function and critical oversight. The future of work is not human versus AI, but human with AI, forging a powerful partnership that redefines productivity, discovery, and decision-making.

Answering Common Questions about Collaborative AI

What is the fundamental difference between utilizing AI as a mere tool versus collaborating with it?

The distinction lies in transparency and agency. Utilizing AI as a tool often involves providing a command and passively accepting the generated output, akin to a "black box" operation. Collaboration, conversely, demands that the AI "show its work." This means the system provides visibility into its reasoning, data sources, code (if applicable), or the steps it took to reach a conclusion. This transparency empowers the human user to verify the AI’s findings, understand its methodology, and then consciously decide whether to accept, adjust, or reject the output. Without this ability to scrutinize the AI’s process, genuine collaboration—which implies mutual understanding and informed decision-making—is impossible.

How can professionals avoid becoming overly reliant on AI systems?

Avoiding over-reliance on AI is critical for maintaining human agency and critical thinking. One effective strategy is to periodically perform tasks or solve problems without AI assistance. This practice helps individuals understand their own baseline performance and cognitive processes. Furthermore, consistently asking why the AI provided a particular output, questioning its assumptions, and trying to articulate the reasoning behind its suggestions are crucial habits. Professionals who routinely accept the first AI-generated output without critical review, or whose performance deteriorates significantly when AI tools are unavailable, are showing strong signs of over-reliance. Continuous learning about AI’s limitations and biases also fortifies independent judgment.

Are companies actively evaluating human-AI interaction skills in job interviews?

Yes, increasingly, forward-thinking companies are integrating assessments of how candidates interact with AI tools into their interview processes, particularly for roles in data science, engineering, and strategic decision-making. Interviewers are not merely looking for candidates who can operate AI tools, but rather those who demonstrate critical judgment. Candidates who accept every AI suggestion without questioning or attempting to refine it are often perceived as lacking critical thinking and analytical rigor. Conversely, those who meticulously review AI outputs, pose probing questions, identify potential flaws or biases, and thoughtfully adjust or augment the AI’s suggestions, demonstrate superior judgment, a deeper understanding of the problem, and a collaborative mindset—qualities highly valued in the evolving workplace.

Nate Rosidi is a data scientist who works in product strategy. He’s also an adjunct professor teaching analytics, and is the founder of StrataScratch, a platform helping data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.
