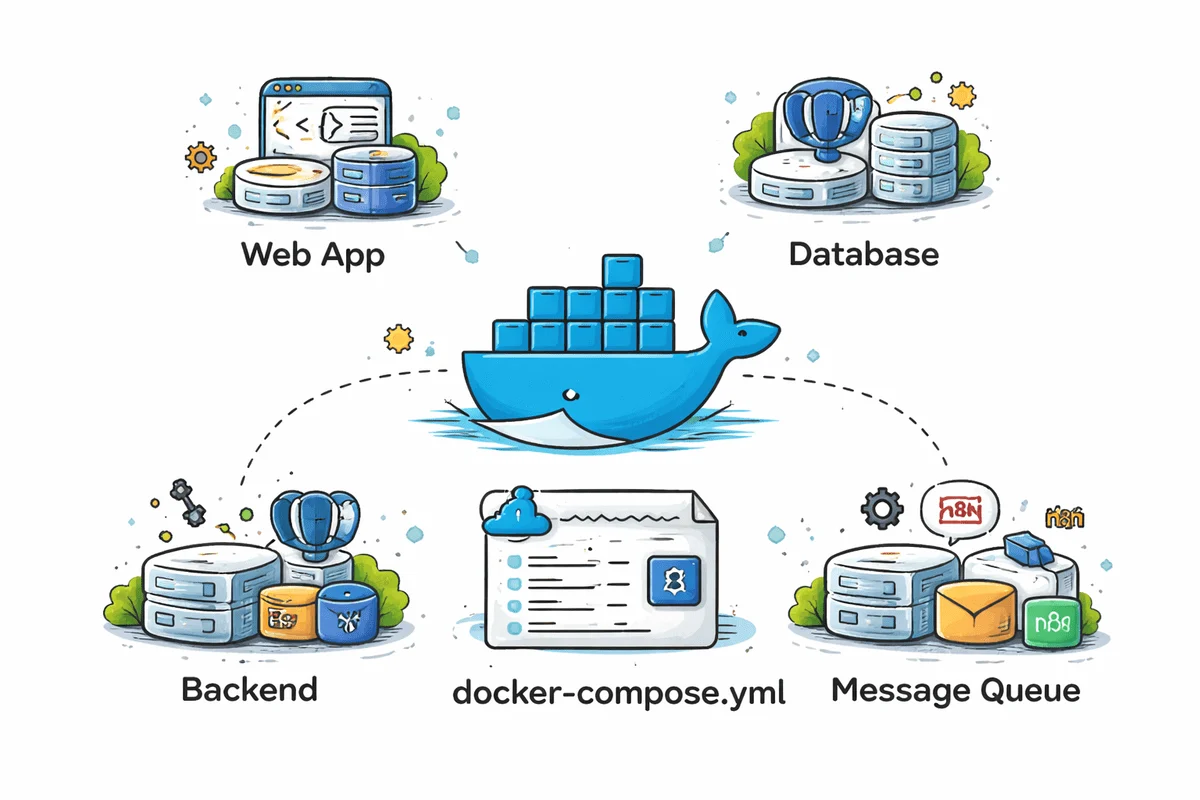

The landscape of modern software development has been profoundly reshaped by containerization, with Docker emerging as a cornerstone technology for creating consistent, portable, and isolated environments. This shift addresses the perennial "it works on my machine" problem. It has streamlined deployment and made developers more productive. Building on Docker’s foundation, Docker Compose lets developers define and run multi-container applications from a single, declarative YAML file. It is particularly useful for projects that need several interconnected services to work together, such as a web application, its database, a caching layer, and administrative interfaces.

The Containerization Revolution and Docker’s Ascent

Before the widespread adoption of containers, developers frequently grappled with environment inconsistencies. Setting up a development environment often meant intricate dependency management, operating-system-specific configuration, and version conflicts that consumed significant time and introduced errors. Docker’s release in 2013 marked a pivotal moment. It offered a lightweight, standardized way to package an application and its dependencies into an image that runs as an isolated container. Such containers run reliably on any host with a compatible Docker Engine. Because containers share the host’s kernel, that portability holds within an operating-system family and CPU architecture; Docker Desktop runs Linux containers on macOS and Windows inside a lightweight Linux VM. The approach gained traction quickly. According to a 2023 survey by Statista, Docker remains one of the most widely used container technologies among developers globally, underscoring its foundational role in contemporary software engineering.

The implications of this shift were profound. Teams could keep environments consistent from local machines to production servers, which reduced deployment friction and supported more agile development. The isolation provided by containers also added a layer of security and simplified resource management, letting multiple applications coexist on the same host without interfering with one another. However, applications grew to comprise several interdependent services, and managing each container by hand became cumbersome. Docker Compose filled that gap with a higher-level abstraction for defining and managing a multi-container application as a single unit.

Docker Compose: Orchestrating Complexity with Simplicity

Docker Compose orchestrates local development and testing environments. Developers declare all the services, networks, and volumes an application needs in a single Compose file. The file is traditionally named docker-compose.yml, and current versions of Compose also look for compose.yaml. With that file in place, three commands cover the whole stack:

- docker compose up starts it.
- docker compose up --build rebuilds it.
- docker compose down stops and removes it.

This declarative approach removes most of the manual overhead of managing containers, databases, and other services individually. It is also why Compose is widely used to run microservices locally: the dependencies between services are written down once, in the file, instead of being recreated by hand on every machine.
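The following Python sketch makes the dependency idea concrete. It is not part of Compose itself. It treats a compose file as a plain dictionary and derives a start-up order from each service’s depends_on entries using a topological sort. The service names, images, and volume name are hypothetical, and the code only approximates what Compose does internally.

```python
from graphlib import TopologicalSorter

# Hypothetical compose file as a Python dict, mirroring docker-compose.yml:
# top-level "services" and "volumes", with depends_on inside each service.
COMPOSE = {
    "services": {
        "web": {"image": "nginx:1.27", "depends_on": ["app"]},
        "app": {"build": ".", "depends_on": ["db", "cache"]},
        "db": {
            "image": "postgres:16",
            "volumes": ["db-data:/var/lib/postgresql/data"],
        },
        "cache": {"image": "redis:7"},
    },
    "volumes": {"db-data": {}},
}


def startup_order(compose: dict) -> list[str]:
    """Return service names ordered so each one follows its dependencies."""
    graph: dict[str, set[str]] = {}
    for name, spec in compose["services"].items():
        deps = spec.get("depends_on", [])
        # depends_on is either a list (short syntax) or a mapping of
        # service name -> options such as condition (long syntax);
        # set() of a mapping yields its keys.
        graph[name] = set(deps if isinstance(deps, list) else deps)
    # static_order() raises graphlib.CycleError for circular dependencies.
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(startup_order(COMPOSE))
```

Compose itself starts containers in this dependency order. By default, however, it only waits for a dependency’s container to start, not for the application inside it to be ready. The long depends_on syntax with condition: service_healthy, paired with a healthcheck on the dependency, covers that case.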

The benefits extend beyond convenience. Docker Compose promotes consistency across a team: as long as image versions are pinned, every developer runs the same environment. That shortens onboarding, because a new team member can bring up a full development environment with a single command. Compose also supports continuous integration and continuous deployment (CI/CD) pipelines by providing a reproducible environment for automated tests. Kubernetes is the usual choice for production-grade orchestration at scale. For defining and managing local multi-service development environments, Docker Compose remains a popular choice because it is simple and easy to use.
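Reproducibility depends on everyone pulling the same images. The following sketch is a hypothetical helper, not a Compose feature. It scans a compose file loaded as a Python dictionary and flags services whose image has no tag or uses latest, since those can resolve to different images on different machines.

```python
def unpinned_images(compose: dict) -> list[str]:
    """Hypothetical check: list services whose image tag may drift over time."""
    flagged = []
    for name, spec in compose.get("services", {}).items():
        image = spec.get("image")
        if image is None:
            continue  # built from a Dockerfile; pin the base image there instead
        if "@sha256:" in image:
            continue  # pinned by content digest, the strictest option
        # Only the last path segment can carry the tag, so a registry port
        # such as localhost:5000/app is not mistaken for a tag.
        last_segment = image.rsplit("/", 1)[-1]
        tag = last_segment.partition(":")[2]
        if tag in ("", "latest"):
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    example = {
        "services": {
            "db": {"image": "postgres:16"},
            "cache": {"image": "redis"},
            "proxy": {"image": "nginx:latest"},
            "app": {"build": "."},
        }
    }
    print(unpinned_images(example))  # ['cache', 'proxy']
```

A pinned tag such as postgres:16 can still move between minor releases. Pinning by digest (image@sha256:<digest>) is the strictest option.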

Pre-built Docker Compose templates add further value. They serve as robust starting points that capture good practices and common architectural patterns for various application types. Starting from a pre-configured setup lets developers skip much of the initial, often time-consuming configuration phase and move directly to application logic. This article examines seven such Docker Compose templates, each designed for a specific development need.

Case Studies in Development Efficiency: Essential Docker Compose Templates

These templates are more than just examples; they are fully functional blueprints that can be cloned, customized, and integrated into a developer’s workflow, offering a practical foundation for both development and DevOps projects.

1. WordPress Development: Streamlining CMS Environments

For the vast ecosystem of WordPress developers, managing local development environments has historically been a source of friction. The nezhar/wordpress-docker-compose template offers a comprehensive solution, enabling the rapid deployment of a full WordPress environment. This template bundles key components such as WordPress itself, a MySQL database for content storage, WP-CLI (WordPress Command Line Interface) for administrative tasks, and phpMyAdmin for visual database management.

This setup supports a variety of WordPress workflows, including:

- theme and plugin development
- client demonstrations
- testing of content management system (CMS) functionality

Because the local environment approximates a production setup, developers can check compatibility and performance before deployment. WP-CLI and phpMyAdmin are bundled alongside WordPress, so site administration and database work need no extra setup. That makes the template a repeatable foundation for WordPress projects. Being able to spin environments up and tear them down quickly also speeds up iteration and removes much of the setup overhead traditionally associated with WordPress development.
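The core of any such stack is the wiring between WordPress and its database. The sketch below is not the nezhar template’s actual configuration. It builds a hypothetical equivalent as a Python dictionary, using the environment variables documented for the official wordpress, mysql, and phpmyadmin images. It then checks that the WordPress credentials match the database’s. Image tags, ports, and passwords are placeholder assumptions.

```python
import json


def wordpress_stack(db_password: str, root_password: str) -> dict:
    """Build a hypothetical WordPress + MySQL + WP-CLI + phpMyAdmin stack."""
    db_env = {
        "MYSQL_DATABASE": "wordpress",
        "MYSQL_USER": "wordpress",
        "MYSQL_PASSWORD": db_password,
        "MYSQL_ROOT_PASSWORD": root_password,
    }
    # WordPress reaches MySQL over the Compose network, using the
    # service name "db" as the hostname.
    wp_env = {
        "WORDPRESS_DB_HOST": "db",
        "WORDPRESS_DB_NAME": db_env["MYSQL_DATABASE"],
        "WORDPRESS_DB_USER": db_env["MYSQL_USER"],
        "WORDPRESS_DB_PASSWORD": db_env["MYSQL_PASSWORD"],
    }
    return {
        "services": {
            "db": {"image": "mysql:8.0", "environment": db_env,
                   "volumes": ["db_data:/var/lib/mysql"]},
            "wordpress": {"image": "wordpress:6", "depends_on": ["db"],
                          "ports": ["8080:80"], "environment": wp_env,
                          "volumes": ["wp_data:/var/www/html"]},
            # WP-CLI shares the WordPress files and database settings.
            "wpcli": {"image": "wordpress:cli", "depends_on": ["wordpress"],
                      "environment": dict(wp_env),
                      "volumes": ["wp_data:/var/www/html"]},
            "phpmyadmin": {"image": "phpmyadmin:5", "depends_on": ["db"],
                           "ports": ["8081:80"],
                           "environment": {"PMA_HOST": "db"}},
        },
        "volumes": {"db_data": {}, "wp_data": {}},
    }


def wiring_errors(compose: dict) -> list[str]:
    """Return WordPress settings that do not line up with the db service."""
    services = compose["services"]
    db = services["db"]["environment"]
    wp = services["wordpress"]["environment"]
    expected = {
        "WORDPRESS_DB_NAME": db["MYSQL_DATABASE"],
        "WORDPRESS_DB_USER": db["MYSQL_USER"],
        "WORDPRESS_DB_PASSWORD": db["MYSQL_PASSWORD"],
    }
    errors = [key for key, value in expected.items() if wp.get(key) != value]
    # The host may include a port, e.g. "db:3306"; the name must be a service.
    if wp.get("WORDPRESS_DB_HOST", "").split(":")[0] not in services:
        errors.append("WORDPRESS_DB_HOST")
    return errors


if __name__ == "__main__":
    stack = wordpress_stack(db_password="change-me", root_password="change-me-too")
    print(json.dumps(stack, indent=2))
    print(wiring_errors(stack))  # []
```

Printing the dictionary as JSON shows the same structure a compose.yaml would express in YAML. A password that differs between the two services is a common reason a fresh WordPress container reports "Error establishing a database connection".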

2. Next.js Self-Hosting: Architecting Modern Web Applications

The leerob/next-self-host template caters specifically to developers aiming to self-host modern Next.js applications, offering a more production-oriented setup than a basic development server. This template is meticulously crafted around core technologies including Next.js for front-end rendering and server-side logic, PostgreSQL as a robust relational database, Docker for containerization, and Nginx as a high-performance web server and reverse proxy.

What distinguishes this template is its attention to practical production concerns. It covers:

- caching strategies to improve performance
- Incremental Static Regeneration (ISR), which updates statically generated pages after deployment
- environment variable handling for secure configuration management

The result goes beyond a minimal demo and offers a clear blueprint for structuring a realistic full-stack Next.js deployment. For developers starting a self-hosted Next.js project, cloning and building on this template provides a solid, performant foundation that addresses many common deployment challenges up front.
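Compose substitutes ${VAR}-style placeholders in the compose file with values from the shell or a .env file. The sketch below reimplements only two forms of that syntax, ${VAR} and ${VAR:-default}, to show how the substitution behaves. It is an illustration, not Compose’s parser, and the variable names are hypothetical.

```python
import re

# ${NAME} or ${NAME:-default}: a deliberately small subset of Compose's
# interpolation syntax (no ${NAME-default}, ${NAME:?error}, or $$ escapes).
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)(?::-([^}]*))?\}")


def parse_env_file(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    env: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        value = value.strip()
        # Drop one pair of matching surrounding quotes, if present.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        env[key.strip()] = value
    return env


def interpolate(template: str, env: dict[str, str]) -> str:
    """Replace placeholders; unset variables become empty strings."""
    def substitute(match: re.Match) -> str:
        name, default = match.group(1), match.group(2)
        value = env.get(name, "")
        # ':-' falls back to the default when the variable is unset or empty.
        if value == "" and default is not None:
            return default
        return value

    return _PLACEHOLDER.sub(substitute, template)


if __name__ == "__main__":
    env = parse_env_file("POSTGRES_USER=app\nPOSTGRES_PASSWORD='s3cret'\n")
    print(interpolate("postgresql://${POSTGRES_USER}:${POSTGRES_PASSWORD}@db:5432/app", env))
    # postgresql://app:s3cret@db:5432/app
    print(interpolate("${PORT:-3000}", env))  # 3000
```

In a Next.js app specifically, variables prefixed with NEXT_PUBLIC_ are inlined into the browser bundle at build time, so secrets should never use that prefix.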

3. Robust Data Management: PostgreSQL and pgAdmin

A reliable and easily accessible database environment is crucial for almost any application development. The postgresql-pgadmin example within Docker’s official awesome-compose repository provides an elegant solution for developers needing a quick local database setup. This template showcases a practical configuration for a PostgreSQL database, a powerful open-source relational database, paired with pgAdmin, a popular web-based administration tool.

This combination is particularly useful for tasks such as schema management, executing complex queries, and visually inspecting data, all within a browser-based interface. For developers working on data-intensive applications or simply needing a sandbox for database experimentation, this template offers an immediate and intuitive environment. Its simplicity ensures that developers can spin up a functional database instance with minimal configuration, allowing them to focus on application logic rather than intricate database setup procedures. The inclusion of pgAdmin further enhances productivity by providing a user-friendly interface for database interactions, making it an excellent choice for both experienced database administrators and developers new to PostgreSQL.
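On the Compose network, each service is reachable by its service name. pgAdmin or an application container therefore connects to PostgreSQL at a host such as postgres, while tools on the host machine use localhost and the published port. The sketch below builds a connection URI for either case. The service name postgres and the credentials are assumptions. The user, password, and database would normally match the POSTGRES_USER, POSTGRES_PASSWORD, and POSTGRES_DB variables of the official postgres image.

```python
from urllib.parse import quote


def postgres_uri(user: str, password: str, database: str,
                 host: str = "postgres", port: int = 5432) -> str:
    """Build a postgresql:// connection URI.

    The default host assumes a Compose service named "postgres"; from the
    host machine, pass host="localhost" and the published port instead.
    """
    # Percent-encode each component so characters such as '@', ':' or '/'
    # in a password cannot be confused with URI delimiters.
    return (f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}"
            f"@{host}:{port}/{quote(database, safe='')}")


if __name__ == "__main__":
    # Inside the Compose network (e.g. from an application container).
    print(postgres_uri("app", "p@ss/word", "appdb"))
    # postgresql://app:p%40ss%2Fword@postgres:5432/appdb
    # From the host, assuming the service publishes "5432:5432".
    print(postgres_uri("app", "p@ss/word", "appdb", host="localhost"))
```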

4. Django Backend: Python’s Full-Stack Powerhouse

For Python web development, particularly with the Django framework, the nickjj/docker-django-example repository offers a comprehensive starting point that goes far beyond a rudimentary demonstration. This template is designed as a foundational Docker and Django application, suitable for initiating new projects or guiding the containerization of existing ones. It expertly integrates several services and patterns commonly found in real-world Django deployments.

Key components include PostgreSQL for persistent data storage, Redis for caching and session management, and Celery for asynchronous task processing. Crucially, it also demonstrates environment-based configuration, a best practice for managing sensitive credentials and varying settings across different deployment stages. This holistic approach provides a richer local setup that developers can run, study, and extend to meet the specific requirements of their projects. For Python developers seeking to build scalable and robust web applications with Django, this template serves as an invaluable resource, offering a pre-configured, production-ready environment that can be rapidly customized.
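Environment-based configuration typically means settings.py reads everything that varies between environments from variables that the compose file sets. The sketch below is a hypothetical version of that pattern, not the code from nickjj/docker-django-example. The variable names (SECRET_KEY, DEBUG, ALLOWED_HOSTS, POSTGRES_*, REDIS_URL, CELERY_BROKER_URL) and the postgres and redis hostnames are assumptions. The Django backend paths are the standard ones, and the Redis cache backend requires Django 4.0 or later.

```python
import os
from collections.abc import Mapping


def env_bool(env: Mapping[str, str], key: str, default: bool = False) -> bool:
    """Interpret common truthy strings such as "1", "true" or "yes"."""
    value = env.get(key)
    if value is None:
        return default
    return value.strip().lower() in {"1", "true", "yes", "on"}


def load_settings(env: Mapping[str, str] = os.environ) -> dict:
    """Collect hypothetical Django settings from environment variables."""
    secret_key = env.get("SECRET_KEY")
    if not secret_key:
        # Fail fast rather than silently running with a default secret.
        raise RuntimeError("SECRET_KEY must be set")
    # "redis" is the assumed Compose service name of the Redis container.
    redis_url = env.get("REDIS_URL", "redis://redis:6379/0")
    hosts = env.get("ALLOWED_HOSTS", "localhost")
    return {
        "SECRET_KEY": secret_key,
        "DEBUG": env_bool(env, "DEBUG"),
        "ALLOWED_HOSTS": [h.strip() for h in hosts.split(",") if h.strip()],
        "DATABASES": {
            "default": {
                "ENGINE": "django.db.backends.postgresql",
                "NAME": env.get("POSTGRES_DB", "app"),
                "USER": env.get("POSTGRES_USER", "app"),
                "PASSWORD": env.get("POSTGRES_PASSWORD", ""),
                "HOST": env.get("POSTGRES_HOST", "postgres"),
                "PORT": env.get("POSTGRES_PORT", "5432"),
            }
        },
        "CACHES": {
            "default": {
                "BACKEND": "django.core.cache.backends.redis.RedisCache",
                "LOCATION": redis_url,
            }
        },
        # Celery can reuse the same Redis instance as its message broker.
        "CELERY_BROKER_URL": env.get("CELERY_BROKER_URL", redis_url),
    }
```

Passing a plain dictionary instead of os.environ keeps the function testable. In settings.py, the returned values would be assigned to the corresponding module-level names.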

5. Kafka for Event-Driven Architectures: Mastering Real-time Data

The adoption of event-driven architectures and streaming systems has surged with the rise of microservices and real-time data processing. For developers looking to learn or implement Apache Kafka, the conduktor/kafka-stack-docker-compose repository is an exceptional resource. Unlike minimal demos, this template is meticulously designed to replicate realistic Kafka deployment patterns, providing a comprehensive stack for experimentation and development.

It offers several stack options that combine Kafka with the following components:

- ZooKeeper for cluster coordination
- Schema Registry for managing data schemas
- Kafka Connect for integrating with external systems
- REST Proxy for HTTP access
- ksqlDB for stream processing
- Conduktor Platform for management and monitoring

Newer Kafka releases use KRaft mode, which removes the ZooKeeper dependency. This setup is particularly useful for developers who want a local Kafka environment. They can explore how the services interact, build a practical understanding of event-driven systems, and prototype data pipelines. It lowers the barrier to entry for Kafka, a valuable skill for many modern data and backend engineering roles.
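A frequent stumbling block in Compose-based Kafka setups is that clients inside Docker and clients on the host need different broker addresses. The usual fix is two listeners: an internal one advertised under the broker’s service name, and an external one advertised as localhost. The sketch below generates that configuration in the KAFKA_* environment-variable form used by Confluent’s Kafka images. The broker names and ports are hypothetical, and the controller listeners needed for KRaft mode are omitted.

```python
def broker_listener_env(broker: str, internal_port: int, external_port: int,
                        external_host: str = "localhost") -> dict[str, str]:
    """Environment for one broker with separate internal/external listeners.

    Containers on the Compose network use <broker>:<internal_port>; clients on
    the host machine use <external_host>:<external_port>, which must also be
    published in the service's ports section.
    """
    return {
        "KAFKA_LISTENERS": (f"INTERNAL://0.0.0.0:{internal_port},"
                            f"EXTERNAL://0.0.0.0:{external_port}"),
        "KAFKA_ADVERTISED_LISTENERS": (f"INTERNAL://{broker}:{internal_port},"
                                       f"EXTERNAL://{external_host}:{external_port}"),
        "KAFKA_LISTENER_SECURITY_PROTOCOL_MAP": "INTERNAL:PLAINTEXT,EXTERNAL:PLAINTEXT",
        "KAFKA_INTER_BROKER_LISTENER_NAME": "INTERNAL",
    }


def bootstrap_servers(brokers: dict[str, tuple[int, int]], from_host: bool,
                      external_host: str = "localhost") -> str:
    """Bootstrap list for a client; brokers maps name -> (internal, external) port."""
    if from_host:
        return ",".join(f"{external_host}:{ext}" for _, ext in brokers.values())
    return ",".join(f"{name}:{internal}" for name, (internal, _) in brokers.items())


if __name__ == "__main__":
    brokers = {"kafka1": (19092, 9092), "kafka2": (19093, 9093)}
    for name, (internal, external) in brokers.items():
        print(name, broker_listener_env(name, internal, external))
    print(bootstrap_servers(brokers, from_host=True))   # localhost:9092,localhost:9093
    print(bootstrap_servers(brokers, from_host=False))  # kafka1:19092,kafka2:19093
```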

6. AI Workflow Automation: n8n’s Self-Hosted Solution

The burgeoning field of artificial intelligence and machine learning has created a demand for robust, self-hosted AI development environments. The n8n-io/self-hosted-ai-starter-kit template from n8n stands out as one of the most practical options for developers keen on building local AI workflows and automations. Described as an open Docker Compose template, it facilitates the bootstrapping of a local AI and low-code setup.

This stack combines self-hosted n8n, an open-source workflow automation platform, with three other components:

- Ollama for running large language models (LLMs) locally
- Qdrant as a vector database, a common building block for retrieval-augmented generation
- PostgreSQL for data storage

The template is useful for experimenting with AI agents, building workflow automations on top of local models, and constructing retrieval-based pipelines without assembling each component from scratch. Because models run locally, it also addresses data privacy concerns by keeping prompts and documents on the developer’s own hardware.
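Retrieval-augmented generation rests on similarity search. Documents and the user’s question are turned into embedding vectors, and the closest documents are passed to the model as context. The sketch below shows that step with brute-force cosine similarity over toy three-dimensional vectors. In the stack above, real embeddings would come from a model, for example one served by Ollama. Qdrant would perform the search with an approximate HNSW index over far more points. The document IDs, texts, and vectors are made up.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], points: dict[str, list[float]],
          k: int = 2) -> list[tuple[str, float]]:
    """Brute-force nearest neighbours, highest similarity first."""
    scored = [(pid, cosine(query, vec)) for pid, vec in points.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


def build_prompt(question: str, docs: dict[str, str],
                 hits: list[tuple[str, float]]) -> str:
    """Place the retrieved passages in front of the question."""
    context = "\n".join(f"- {docs[pid]}" for pid, _ in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    # Made-up documents and 3-dimensional "embeddings" for illustration.
    docs = {
        "billing": "Invoices are emailed on the first day of each month.",
        "shipping": "Orders ship within two business days.",
        "returns": "Items can be returned within 30 days of delivery.",
    }
    vectors = {
        "billing": [0.9, 0.1, 0.0],
        "shipping": [0.1, 0.9, 0.0],
        "returns": [0.2, 0.7, 0.1],
    }
    query_vector = [0.0, 1.0, 0.0]  # pretend embedding of the question
    hits = top_k(query_vector, vectors)
    print(build_prompt("How fast do orders ship?", docs, hits))
```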

7. Local LLM Exploration: Ollama and Open WebUI Docker Compose

For developers exploring local AI tooling and large language models (LLMs), the ollama-litellm-openwebui setup in the ruanbekker/awesome-docker-compose repository provides a flexible, accessible starting point. The stack is built around three components:

- Ollama for running LLMs locally
- LiteLLM as a proxy that exposes many model providers, local and hosted, behind a single OpenAI-compatible API
- Open WebUI for a clean, browser-based interface to interact with these models

This combination makes it practical to experiment with different local models while also reaching external AI services through the same unified API. Open WebUI offers a more intuitive way than the command line to manage models, prompt them, and review responses. The template is a strong foundation for developers who need a flexible local AI environment for rapid prototyping and for integrating LLMs into their own applications, without constant reliance on cloud-based services.
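LiteLLM and Ollama both expose OpenAI-compatible endpoints, so an application can talk to either with the same request shape. The standard-library sketch below builds a POST to /chat/completions. Three values are assumptions that depend on how the stack is configured: the base URL http://localhost:4000/v1 (LiteLLM’s default proxy port), the model alias llama3, and the API key. Ollama’s own OpenAI-compatible endpoint is at http://localhost:11434/v1.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build a POST to an OpenAI-compatible /chat/completions endpoint."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=body, headers=headers, method="POST",
    )


def ask(base_url: str, model: str, prompt: str, api_key: str = "",
        timeout: float = 120.0) -> str:
    """Send the request and return the first choice's message text."""
    request = build_chat_request(base_url, model, prompt, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Assumed LiteLLM proxy address, model alias, and key; nothing is sent here.
    req = build_chat_request("http://localhost:4000/v1", "llama3",
                             "Say hello in one sentence.", api_key="sk-local-example")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

ask() performs the actual network call, so it works only while the stack is running. build_chat_request() can be inspected offline.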

The Broader Impact: Accelerating Innovation and Collaboration

Taken together, these Docker Compose templates give developers a significant productivity boost at the start of a project. The environments come pre-configured and are tested by their maintainers and users, so developers can shift their focus from setup to application development and problem-solving. This accelerates development cycles and encourages a more standardized, reproducible approach to software engineering.

The implications for team collaboration are particularly noteworthy. With a standardized docker-compose.yml file, every team member can spin up an identical development environment, eliminating "works on my machine" discrepancies and facilitating smoother handoffs and code reviews. For learning complex technologies like Kafka or experimenting with emerging fields like AI, these templates lower the barrier to entry, enabling faster learning and hands-on experimentation without the steep initial configuration overhead.

While Docker Compose is predominantly used for local development, its principles of defining multi-service applications declaratively are foundational to modern DevOps practices. It provides a stepping stone towards more advanced orchestration systems and promotes an infrastructure-as-code mindset. Challenges, such as managing resource consumption for very large local stacks or ensuring consistent configurations across many different projects, still exist, but the benefits in terms of efficiency and consistency largely outweigh these considerations for the typical development workflow. The vibrant open-source community continually contributes new templates and refines existing ones, ensuring that Docker Compose remains a dynamic and essential tool in the developer’s arsenal.
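One way to keep a large local stack in check is to set memory limits and verify that they add up to something the machine can spare. The hypothetical helper below sums deploy.resources.limits.memory, or the older mem_limit key, across services and lists services with no limit. It understands only simple sizes such as 512m or 2g, treated as binary units. That is an assumption, not a full implementation of Compose’s size parsing.

```python
import re

# Simple sizes only: a number plus optional k/m/g unit and optional "b",
# read as binary units (1k = 1024 bytes). This is an assumption, not a full
# reimplementation of Compose's byte-size parsing.
_SIZE = re.compile(r"^\s*(\d+(?:\.\d+)?)\s*([kmg]?)b?\s*$", re.IGNORECASE)
_FACTOR = {"": 1, "k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}


def parse_bytes(value) -> int:
    """Convert an int or a size string such as "512m" to bytes."""
    if isinstance(value, int):
        return value
    match = _SIZE.match(str(value))
    if match is None:
        raise ValueError(f"unrecognised size: {value!r}")
    number, unit = match.groups()
    return int(float(number) * _FACTOR[unit.lower()])


def memory_budget(compose: dict) -> tuple[int, list[str]]:
    """Sum per-service memory limits and list services that have none."""
    total, unlimited = 0, []
    for name, spec in compose.get("services", {}).items():
        limits = spec.get("deploy", {}).get("resources", {}).get("limits", {})
        limit = limits.get("memory", spec.get("mem_limit"))
        if limit is None:
            unlimited.append(name)
        else:
            total += parse_bytes(limit)
    return total, unlimited


if __name__ == "__main__":
    stack = {
        "services": {
            "db": {"deploy": {"resources": {"limits": {"memory": "1g"}}}},
            "app": {"mem_limit": "512m"},
            "worker": {"image": "example/worker:1.0"},
        }
    }
    total, unlimited = memory_budget(stack)
    print(f"{total / 1024 ** 3:.2f} GiB limited; no limit on: {unlimited}")
    # 1.50 GiB limited; no limit on: ['worker']
```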

In conclusion, adopting these Docker Compose templates lets developers move from concept to working code quickly and reliably. The tasks they cover range from a CMS and a full-stack web application to database workflows, a Python backend, real-time streaming, and a local AI stack. For each, the templates offer practical, immediately usable foundations. They are more than conveniences: they bring efficiency, consistency, and room for innovation to a rapidly evolving field.

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master’s degree in technology management and a bachelor’s degree in telecommunication engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.
