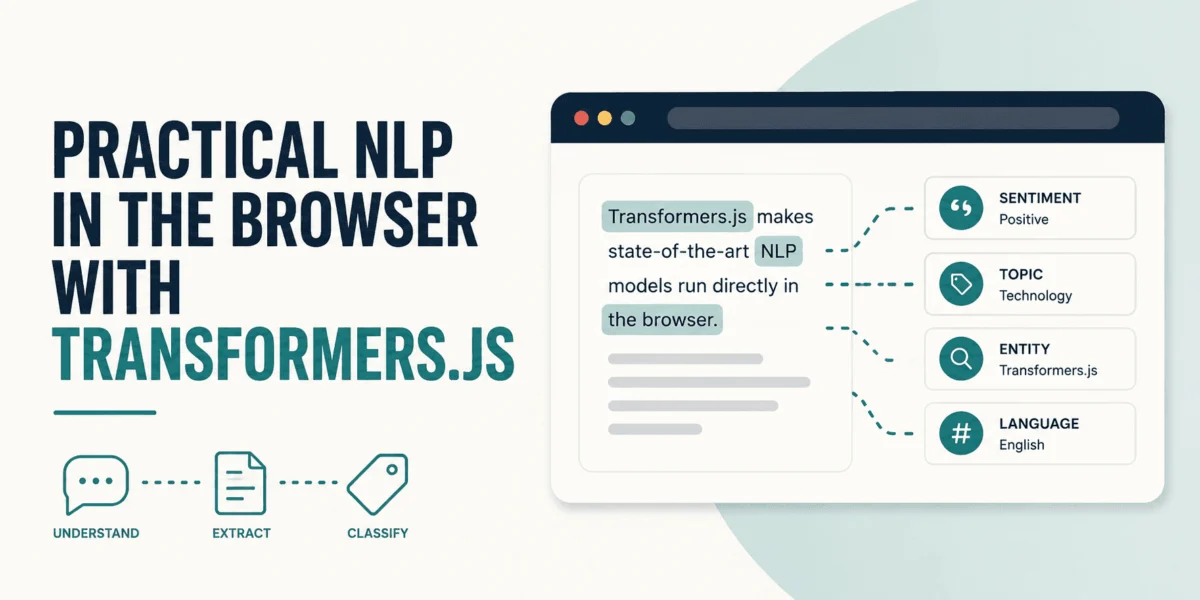

The landscape of software development is undergoing a profound transformation, ushering in what many industry experts are calling the "agent-first" artificial intelligence era. This paradigm shift moves beyond reactive code generation to sophisticated AI agents capable of genuinely understanding, planning, and executing complex software engineering processes. At the forefront of this evolution is Google Antigravity, a powerful new tool designed to empower developers with highly customizable AI agents, fundamentally altering how code is conceived, written, and maintained. This article delves into the core tenets of Antigravity—rules, skills, and workflows—and illustrates their combined potential through a practical application: building a robust code quality assurance (QA) agent for Python development.

The transition to agent-first AI marks a significant departure from previous generations of AI coding assistants, which primarily functioned as intelligent autocomplete or boilerplate generators. While valuable, these tools often required constant human intervention and lacked the contextual understanding necessary for autonomous task execution. Modern software development, characterized by increasingly complex systems, rapid iteration cycles, and a perpetual battle against technical debt, demands more. The overhead associated with manual code reviews, ensuring adherence to coding standards like PEP 8, and generating comprehensive unit tests can consume a significant portion of a developer’s time, diverting focus from innovation. According to a 2023 survey by Stack Overflow, developers spend an average of 17% of their time on debugging and testing, and a further substantial portion on code review and refactoring. This highlights a critical need for automation that can intelligently offload these labor-intensive tasks.

Google Antigravity emerges as a solution tailored for this new era, offering a framework for constructing AI agents that not only generate code but also "understand" the underlying processes and constraints. It positions itself as a key component in Google’s broader AI strategy, complementing initiatives like Gemini and Vertex AI by bringing advanced AI capabilities directly into the developer’s workspace. The tool’s emphasis on customization allows organizations to tailor agent behavior to their specific coding standards, architectural patterns, and development methodologies, ensuring that AI assistance aligns perfectly with internal best practices rather than imposing generic solutions.

Understanding the Foundational Pillars: Rules, Skills, and Workflows

To grasp Antigravity’s transformative potential, it is essential to comprehend its three cornerstone concepts: rules, skills, and workflows. These elements collectively define an agent’s intelligence, capabilities, and operational sequences, enabling unprecedented levels of automation and precision in software development.

Rules serve as the foundational principles and constraints that guide an Antigravity agent’s behavior. Unlike traditional linting tools that operate on static configurations, Antigravity rules are defined using natural language instructions, allowing for nuanced and context-aware enforcement of coding standards, architectural guidelines, security policies, and performance best practices. For instance, a rule might dictate adherence to specific design patterns, prohibit the use of certain libraries, or enforce accessibility standards in UI code. The ability to express these constraints in plain language significantly lowers the barrier to entry for defining complex governance policies, ensuring that all AI-generated or refactored code aligns with organizational mandates. This is crucial for maintaining code quality, reducing technical debt, and fostering collaborative development across diverse teams. By automatically applying these rules, Antigravity agents can preemptively catch issues that would typically require time-consuming manual code reviews, leading to more consistent and higher-quality codebases.

Skills are modular, atomic capabilities that an agent can invoke to perform specific tasks. They are the tools in an agent’s arsenal, letting it interact with the external environment, generate specific types of content, or execute specialized functions. Examples include generating unit tests (as demonstrated later), refactoring code, interacting with version control systems, querying databases, writing documentation, and making API calls to external services. The power of skills lies in their reusability and composability: once defined, a skill can be used across multiple agents and workflows, so agents can tackle a wide array of development challenges without exhaustive, explicit programming for each scenario. This modularity streamlines agent development and makes capabilities easy to update and expand as new requirements emerge. The concept mirrors human expertise, where individuals apply distinct skills as needed within their workflow.

Workflows act as the orchestrators, stitching together rules and skills into coherent, multi-step automated processes. A workflow defines a sequence of actions an agent should take to achieve a larger objective. These sequences are not merely linear scripts; they can incorporate conditional logic, branching paths, and iterative steps, allowing agents to respond dynamically to different situations and achieve complex outcomes. For example, a QA workflow might first apply a set of style rules, then identify code smells, subsequently invoke a refactoring skill, and finally call a test generation skill. Workflows represent the operational blueprint for an agent, transforming a collection of individual capabilities into a highly effective, automated pipeline. They are analogous to modern CI/CD pipelines but with an added layer of AI-driven decision-making and contextual understanding, enabling developers to automate entire development loops, from initial code commit to deployment.
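To make the idea of "steps with conditional logic" concrete, here is a minimal Python sketch of a workflow as an ordered list of steps, each with an optional guard. It is only a mental model: the `Step` and `Workflow` classes and the four stand-in functions are hypothetical and are not part of Antigravity, where workflows are written in natural language rather than code.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    """One workflow step: an action plus an optional guard condition."""
    name: str
    action: Callable[[dict], None]
    condition: Optional[Callable[[dict], bool]] = None  # step runs only if this returns True


@dataclass
class Workflow:
    """An ordered sequence of steps sharing one context dictionary."""
    name: str
    steps: list = field(default_factory=list)

    def run(self, ctx: dict) -> list:
        """Run steps in order, skipping any whose condition is False."""
        executed = []
        for step in self.steps:
            if step.condition is None or step.condition(ctx):
                step.action(ctx)
                executed.append(step.name)
        return executed


# Hypothetical stand-ins for the work an agent would actually perform.
def check_style(ctx: dict) -> None:
    ctx["style_issues"] = ctx["source"].count("( ")


def find_smells(ctx: dict) -> None:
    ctx["smells"] = [] if "raise" in ctx["source"] else ["no error handling"]


def refactor(ctx: dict) -> None:
    ctx["refactored"] = True


def generate_tests(ctx: dict) -> None:
    ctx["tests"] = f"# tests for {ctx['file']}"


qa = Workflow("qa-check", [
    Step("style", check_style),
    Step("smells", find_smells),
    # Branching: refactor only when the previous step found something.
    Step("refactor", refactor, condition=lambda ctx: bool(ctx["smells"])),
    Step("tests", generate_tests),
])

ctx = {"file": "flawed_division.py",
       "source": "def divide_numbers( x,y ):\n    return x/y\n"}
executed = qa.run(ctx)
print(executed)  # ['style', 'smells', 'refactor', 'tests']
```

The guard on the refactoring step is the part that separates a workflow from a linear script: with a source file that already raises on bad input, the same workflow would skip that step.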

Practical Application: Building a Python Code Quality Assurance Agent

To illustrate the synergy of rules, skills, and workflows, let’s walk through the step-by-step process of configuring an Antigravity agent for Python code quality assurance. This agent will automatically review Python code, enforce formatting standards, and generate comprehensive unit tests from within the Antigravity workspace. Running the generated tests still requires pytest to be installed in the project environment.

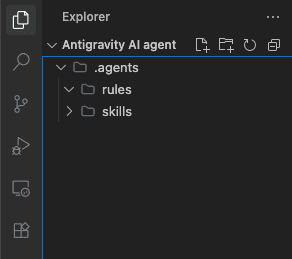

The initial setup involves downloading and installing Google Antigravity. Once installed, the desktop application allows developers to define a project workspace, typically a folder containing the Python project files. For this demonstration, we’ll assume a new, empty workspace. The first critical step in structuring our agent’s intelligence is to create a dedicated .agents directory at the root of the project. Within this, two subfolders are established: rules and skills. This hierarchical structure promotes organization and modularity, making it easy to manage and scale agent configurations.
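The folder layout can be created by hand, but a short script makes it repeatable. The sketch below uses only the standard library. The helper name `scaffold_agent_dirs` is illustrative, and the demo runs in a temporary directory rather than in a real project.

```python
import tempfile
from pathlib import Path


def scaffold_agent_dirs(project_root: Path) -> list:
    """Create .agents/rules and .agents/skills under project_root."""
    created = []
    for sub in ("rules", "skills"):
        path = project_root / ".agents" / sub
        path.mkdir(parents=True, exist_ok=True)  # safe to run more than once
        created.append(path)
    return created


# Demo in a throwaway directory so nothing is written to a real workspace.
with tempfile.TemporaryDirectory() as tmp:
    created = scaffold_agent_dirs(Path(tmp))
    layout = sorted(p.relative_to(tmp).as_posix() for p in created)

print(layout)  # ['.agents/rules', '.agents/skills']
```

In a real project, pass the workspace root (for example `Path.cwd()`) instead of the temporary directory.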

Defining the Python Style Rule:

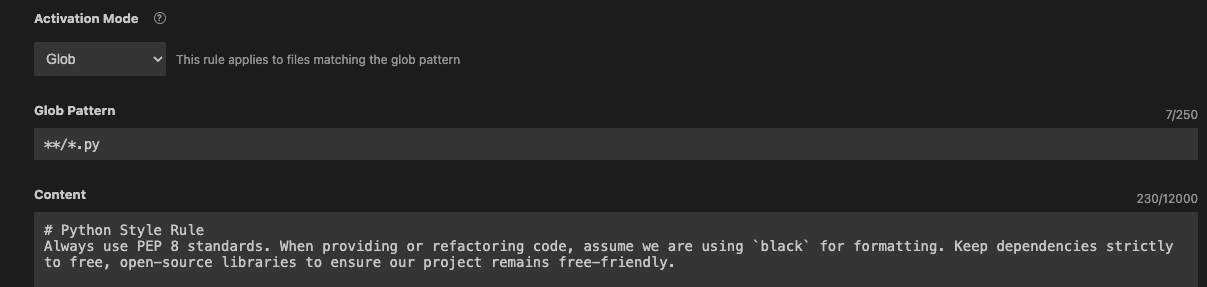

Our first task is to define a python-style.md file within the rules subfolder. This Markdown file will contain the natural language instructions that dictate our agent’s adherence to Python formatting and dependency standards.

    # Python Style Rule

    Always use PEP 8 standards. When providing or refactoring code, assume we are using `black` for formatting. Keep dependencies strictly to free, open-source libraries to ensure our project remains free-friendly.

This rule is concise yet powerful. It mandates compliance with PEP 8, Python’s official style guide, which promotes readability and consistency. It also specifies the use of black, an opinionated code formatter widely adopted in the Python community for its ability to enforce a consistent style without developer intervention. Furthermore, the rule includes a crucial constraint regarding dependencies, ensuring that the project remains reliant solely on free, open-source libraries. This decision could be driven by cost considerations, security policies, or a commitment to open-source principles.
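The dependency constraint is stated in natural language, so the agent interprets it rather than checking it mechanically. For teams that also want a quick manual audit, the following is a rough sketch using the standard library's `importlib.metadata`. It assumes that an "OSI Approved" trove classifier is an acceptable proxy for "free, open-source", which is a simplification: packages that declare their license only through a license expression or free-text field will be flagged even when they are open source, so a flagged name means "check by hand", not "proprietary". The function names are illustrative.

```python
from importlib import metadata

OSI_PREFIX = "License :: OSI Approved"


def has_osi_classifier(classifiers: list) -> bool:
    """True if any trove classifier marks an OSI-approved license."""
    return any(c.startswith(OSI_PREFIX) for c in classifiers)


def unverified_distributions() -> list:
    """Names of installed distributions lacking an OSI-approved classifier."""
    flagged = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        classifiers = dist.metadata.get_all("Classifier") or []
        if name and not has_osi_classifier(classifiers):
            flagged.add(name)
    return sorted(flagged)


if __name__ == "__main__":
    for name in unverified_distributions():
        print(f"needs a manual license check: {name}")
```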

To activate this rule, Antigravity’s customization panel lets developers choose an activation mode. Setting it to "glob" with the pattern `**/*.py` applies the rule automatically to every Python file in the workspace, at any folder depth. Quality checks therefore stay consistent across the entire codebase without manual enforcement, which sharply reduces the chance of style inconsistencies.
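To see which files a pattern like `**/*.py` would cover, the sketch below applies the same pattern with Python's `pathlib`. This uses Python's glob semantics as a stand-in: Antigravity's own matcher may differ in edge cases, and the file names in the demo are made up.

```python
import tempfile
from pathlib import Path


def files_matching(root: Path, pattern: str = "**/*.py") -> list:
    """Return workspace-relative paths under root that match the glob pattern."""
    return sorted(
        p.relative_to(root).as_posix() for p in root.glob(pattern) if p.is_file()
    )


# Build a small throwaway workspace with Python and non-Python files.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "pkg" / "sub").mkdir(parents=True)
    for name in ("app.py", "README.md", "pkg/util.py", "pkg/sub/deep.py", "pkg/data.json"):
        (root / name).touch()
    matched = files_matching(root)

print(matched)  # ['app.py', 'pkg/sub/deep.py', 'pkg/util.py']
```

The `**` segment matches zero or more directories, which is why the top-level `app.py` is included alongside the nested files.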

Teaching the Agent a Key Skill: Pytest Generation:

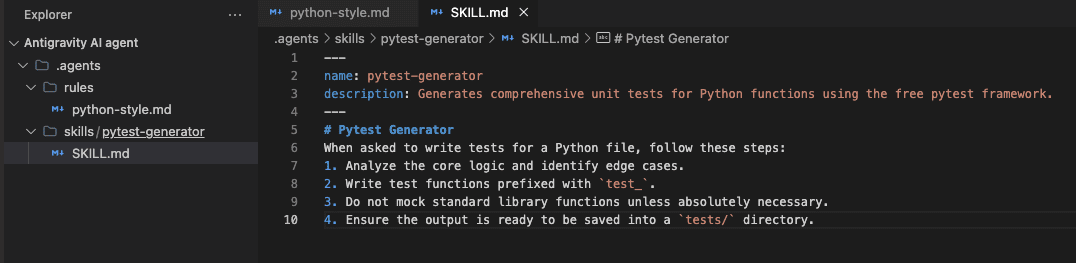

Next, we imbue our agent with a specific capability: generating robust unit tests for Python code. Inside the skills subfolder, a new directory named pytest-generator is created, and within it, a SKILL.md file defines this capability.

    # Pytest Generator Skill

    Generates comprehensive pytest unit tests for Python functions, covering various scenarios including edge cases, normal operation, and error handling.

This skill empowers the agent to analyze Python functions, identify potential test scenarios, and automatically generate pytest-compatible test cases. Unit testing is a cornerstone of modern software engineering, helping developers catch bugs early, prevent regressions, and build confidence in their codebase. Automating this process with an AI agent can significantly accelerate development cycles and improve test coverage, especially for projects with rapidly evolving codebases. The agent, equipped with this skill, understands the intricacies of test generation, considering different inputs, expected outputs, and potential failure modes, thereby reducing the manual effort traditionally associated with writing comprehensive test suites.

Orchestrating Actions with a Workflow: The QA Check:

The final step is to integrate these rules and skills into a cohesive workflow. Within Antigravity’s "Workflows" navigation pane, a new workflow named qa-check is created with the following definition:

    # Python QA Check

    # Description: Automates code review and test generation for Python files.

    Step 1: Review the currently open Python file for bugs and style issues, adhering to our Python Style Rule.

    Step 2: Refactor any inefficient code.

    Step 3: Call the `pytest-generator` skill to write comprehensive unit tests for the refactored code.

    Step 4: Output the final test code and suggest running `pytest` in the terminal.

This workflow acts as the operational script for our QA agent.

- Step 1 leverages the previously defined Python Style Rule to scan the active Python file for deviations from PEP 8 and black standards, as well as potential bugs.
- Step 2 demonstrates the agent’s inherent ability to identify and refactor inefficient or problematic code, going beyond mere formatting to improve code quality.
- Step 3 activates the pytest-generator skill, instructing the agent to produce a full suite of unit tests for the refactored code. This seamless integration showcases the power of combining rules (for guidance) with skills (for action).
- Step 4 ensures that the agent provides actionable output, presenting the generated tests and prompting the developer to execute them.

Demonstrating the Agent in Action:

To observe the qa-check workflow in action, we create a deliberately flawed Python file named flawed_division.py:

    def divide_numbers( x,y ):
        return x/y

This code snippet violates PEP 8 spacing conventions, lacks a docstring, and, critically, does not handle the ZeroDivisionError case, making it prone to runtime crashes. With this file open in the Antigravity workspace, invoking the agent via the console with the qa-check command triggers the workflow.
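The crash is easy to reproduce before the agent touches the file. This short sketch repeats the flawed function verbatim and calls it with a zero denominator. The `try`/`except` wrapper exists only to display the error and is not a suggested fix.

```python
def divide_numbers( x,y ):
    return x/y


print(divide_numbers(10, 4))  # 2.5

try:
    divide_numbers(10, 0)
except ZeroDivisionError as exc:
    message = str(exc)
    print(f"ZeroDivisionError: {message}")  # ZeroDivisionError: division by zero
```

Without the wrapper, the exception would propagate to the caller with a message that says nothing about which argument was wrong.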

Upon execution, the agent processes the file according to the defined rules and skills. It first applies the Python Style Rule, refactoring the code to conform to PEP 8 and black standards. It also identifies the lack of error handling for division by zero and introduces a more robust implementation. The refactored code suggested by the agent would appear similar to:

    def divide_numbers(x, y):
        """
        Divides two numbers.

        Args:
            x (float or int): The numerator.
            y (float or int): The denominator.

        Returns:
            float: The result of the division.

        Raises:
            ValueError: If the denominator is zero.
        """
        if y == 0:
            raise ValueError("Cannot divide by zero")
        return x / y

Beyond refactoring, the agent, leveraging the pytest-generator skill, produces a comprehensive suite of unit tests. These tests demonstrate the agent’s understanding of various scenarios, including normal operations, negative inputs, floating-point numbers, zero numerator, and crucially, the specific error handling for a zero denominator. A sample of the generated tests might include:

    import pytest

    from flawed_division import divide_numbers


    def test_divide_numbers_normal():
        assert divide_numbers(10, 2) == 5.0
        assert divide_numbers(9, 3) == 3.0


    def test_divide_numbers_negative():
        assert divide_numbers(-10, 2) == -5.0
        assert divide_numbers(10, -2) == -5.0
        assert divide_numbers(-10, -2) == 5.0


    def test_divide_numbers_float():
        assert divide_numbers(5.0, 2.0) == 2.5
        assert divide_numbers(7, 3) == pytest.approx(2.3333333333333335)  # Demonstrating precision


    def test_divide_numbers_zero_numerator():
        assert divide_numbers(0, 5) == 0.0


    def test_divide_numbers_zero_denominator():
        with pytest.raises(ValueError, match="Cannot divide by zero"):
            divide_numbers(10, 0)

        with pytest.raises(ValueError, match="Cannot divide by zero"):
            divide_numbers(-5, 0)

This demonstration vividly illustrates how Antigravity combines declarative rules, modular skills, and orchestrated workflows to deliver a complete, automated solution for code quality assurance. The agent first analyzes the code under the defined constraints, then autonomously calls a specialized skill to produce a comprehensive testing strategy tailored to the codebase, and finally suggests actionable steps to the developer.
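One detail in the generated tests deserves a note: the floating-point case compares against `pytest.approx` rather than using plain equality. The sketch below shows the underlying issue with the standard library's `math.isclose`, used here as a stand-in because it needs no third-party install. `pytest.approx` works on the same principle, with a default relative tolerance of 1e-6.

```python
import math

# Binary floating point cannot represent 0.1 or 0.2 exactly,
# so the sum is not bit-for-bit equal to 0.3.
sum_result = 0.1 + 0.2
exact_equal = sum_result == 0.3               # False
close_enough = math.isclose(sum_result, 0.3)  # True

# The same applies to a hand-typed expectation for 7 / 3.
quotient = 7 / 3
rounded_matches = quotient == 2.3333333333                               # False
tolerant_matches = math.isclose(quotient, 2.3333333333, rel_tol=1e-6)    # True

print(exact_equal, close_enough, rounded_matches, tolerant_matches)
# False True False True
```

Tolerant comparison keeps a test from failing on representation noise while still catching results that are actually wrong.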

Broader Implications and Future Outlook

The advent of tools like Google Antigravity carries significant implications for the future of software development, potentially reshaping developer roles, improving productivity, and elevating overall code quality across the industry.

Enhanced Developer Productivity: By automating repetitive and time-consuming tasks such as code formatting, basic refactoring, and test generation, Antigravity frees developers to focus on higher-level problem-solving, architectural design, and innovative feature development. This can lead to substantial gains in productivity, potentially reducing the time spent on mundane tasks by 20-30%, allowing teams to deliver projects faster and more efficiently.

Improved Code Quality and Consistency: The consistent application of rules ensures that all code adheres to predefined standards, reducing technical debt and improving maintainability. AI-generated tests, when properly supervised, can lead to higher test coverage and earlier detection of bugs, resulting in more robust and reliable software. This consistency is particularly beneficial in large organizations with multiple development teams, where maintaining a unified coding standard can be challenging.

Evolution of Developer Roles: The role of a developer may evolve from primarily coding and debugging to orchestrating and supervising AI agents. Developers will become "agent whisperers," defining rules, designing skills, and constructing workflows, essentially programming the AI to program for them. This shift demands new skills, including prompt engineering, AI system design, and a deeper understanding of automation principles.

Competitive Landscape and Google’s Vision: Google Antigravity enters a competitive landscape populated by other AI coding assistants. Its differentiator lies in its "agent-first" philosophy, emphasizing deep understanding, customizable behavior, and orchestrated workflows, moving beyond simple code completion or suggestion. This aligns with Google’s broader vision of empowering developers with advanced AI tools, making complex AI capabilities accessible and practical for everyday software engineering challenges. The potential for integration with other Google Cloud services (e.g., for security scanning, deployment, or monitoring) could further solidify its position.

Ethical Considerations and Responsible AI: As with all powerful AI tools, the use of Antigravity also necessitates consideration of ethical implications. Ensuring that AI-generated code is free from bias, adheres to security best practices, and does not introduce new vulnerabilities will be paramount. Developers must maintain oversight and critical judgment, understanding that AI is a powerful assistant, not a replacement for human intellect and accountability. The "free, open-source libraries" rule implicitly touches upon supply chain security and dependency management, crucial areas for responsible AI development.

In conclusion, Google Antigravity represents a significant leap forward in AI-assisted software development. By meticulously combining rules, skills, and workflows, it transforms generic AI capabilities into specialized, efficient, and robust "workmates" for developers. The practical demonstration of a Python QA agent highlights its ability to streamline code formatting, intelligent refactoring, and comprehensive test generation. As the agent-first AI era continues to unfold, tools like Antigravity are poised to redefine how software is built, fostering greater innovation, efficiency, and quality across the entire development lifecycle.
