In an era increasingly defined by the proliferation of sophisticated artificial intelligence, Google DeepMind has introduced SynthID, a cutting-edge framework designed to embed imperceptible digital watermarks directly into AI-generated content across various media formats. This strategic development marks a significant stride in the global effort to establish verifiable provenance for synthetic media, thereby strengthening trust in digital information and actively combating the pervasive threats of misinformation, deepfakes, and the malicious misuse of AI technologies. Unveiled in August 2023, SynthID integrates directly into Google’s suite of generative AI models, including Gemini for text, Imagen for images, Lyria for audio, and Veo for video, positioning it as a foundational layer for content authenticity within the Google ecosystem and potentially a blueprint for the broader industry.

The Urgent Imperative: Navigating the AI Content Deluge

The rapid advancements in generative AI, exemplified by models capable of producing highly realistic text, images, audio, and video, have democratized content creation but simultaneously introduced unprecedented challenges. The ease with which synthetic media can be generated and disseminated has blurred the lines between authentic and artificial, creating fertile ground for societal disruption. Reports from cybersecurity firms and media watchdogs increasingly highlight a concerning rise in deepfake incidents, with some analyses suggesting a year-over-year increase of over 500% in detected deepfake content, particularly targeting political figures, celebrities, and financial institutions. Public opinion surveys consistently reveal high levels of concern regarding the potential for AI to spread misinformation and influence elections, underscoring a growing crisis of trust in digital information.

Prior to SynthID, efforts to verify content authenticity often relied on metadata-based approaches, such as those championed by the Coalition for Content Provenance and Authenticity (C2PA) or Adobe’s Content Authenticity Initiative. While valuable, these methods typically append authenticity information after content generation, making them susceptible to removal or alteration through common editing processes. SynthID distinguishes itself by operating at a deeper, more fundamental level – directly embedding a hidden signature at the model or pixel level during the content generation process itself. This approach aims to create a more resilient and enduring mark of origin, crucial for content that may undergo subsequent transformations.

A Chronology of Innovation: Google DeepMind’s Path to SynthID

The development of SynthID can be understood within Google’s long-standing commitment to responsible AI and its continuous investment in AI safety and ethics. The genesis of Google DeepMind itself, formed in April 2023 through the merger of Google Brain and DeepMind, signaled an intensified focus on cutting-edge AI research coupled with robust safety protocols. This consolidation brought together some of the world’s leading AI scientists and engineers, accelerating the pace of innovation across various domains, including generative models and their associated ethical safeguards.

The imperative for a solution like SynthID became increasingly apparent as Google’s internal generative AI capabilities matured. As models like Imagen demonstrated the ability to create photorealistic images and Gemini showcased multimodal generation prowess, the need for an integrated provenance system became paramount. The official announcement of SynthID in August 2023 was not merely a technological unveiling but a strategic declaration by Google DeepMind of its intent to lead the industry in responsible AI deployment. This move positions Google as a proactive player in addressing the ethical dilemmas posed by its own powerful AI tools, aiming to build trust and transparency from the ground up, rather than reacting to potential misuse. The framework’s ongoing integration into Google’s flagship AI products underscores a commitment to making content provenance a standard feature, not an afterthought.

The Invisible Hand: How SynthID Embeds Provenance

At its core, SynthID leverages principles of steganography – the art of concealing information within other data in a way that its presence is imperceptible to human senses but detectable by specialized tools. The key design goals for SynthID are clear: the watermark must be invisible or inaudible to users, resilient to common distortions and transformations, and reliably detectable by algorithmic scanners.

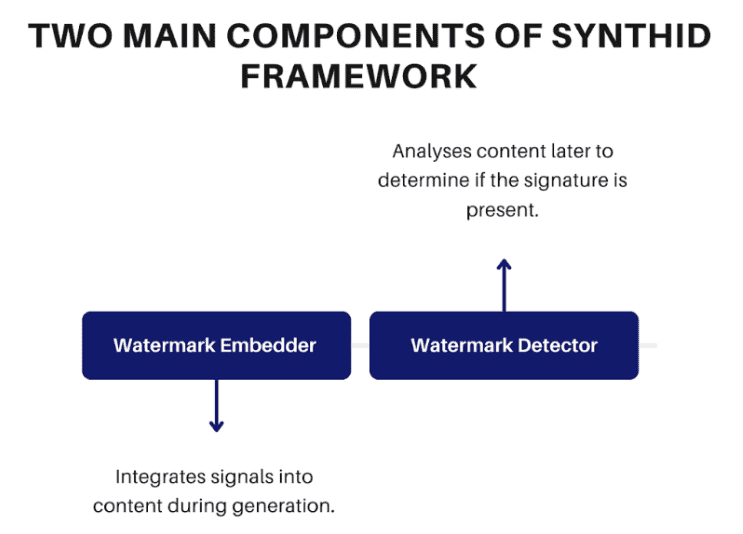

Unlike traditional watermarks that are visually obvious or easily removable, SynthID’s method is deeply integrated into the AI generation process. It operates through two main components: an embedding mechanism that injects the watermark during content creation and a detection system that identifies its presence later.


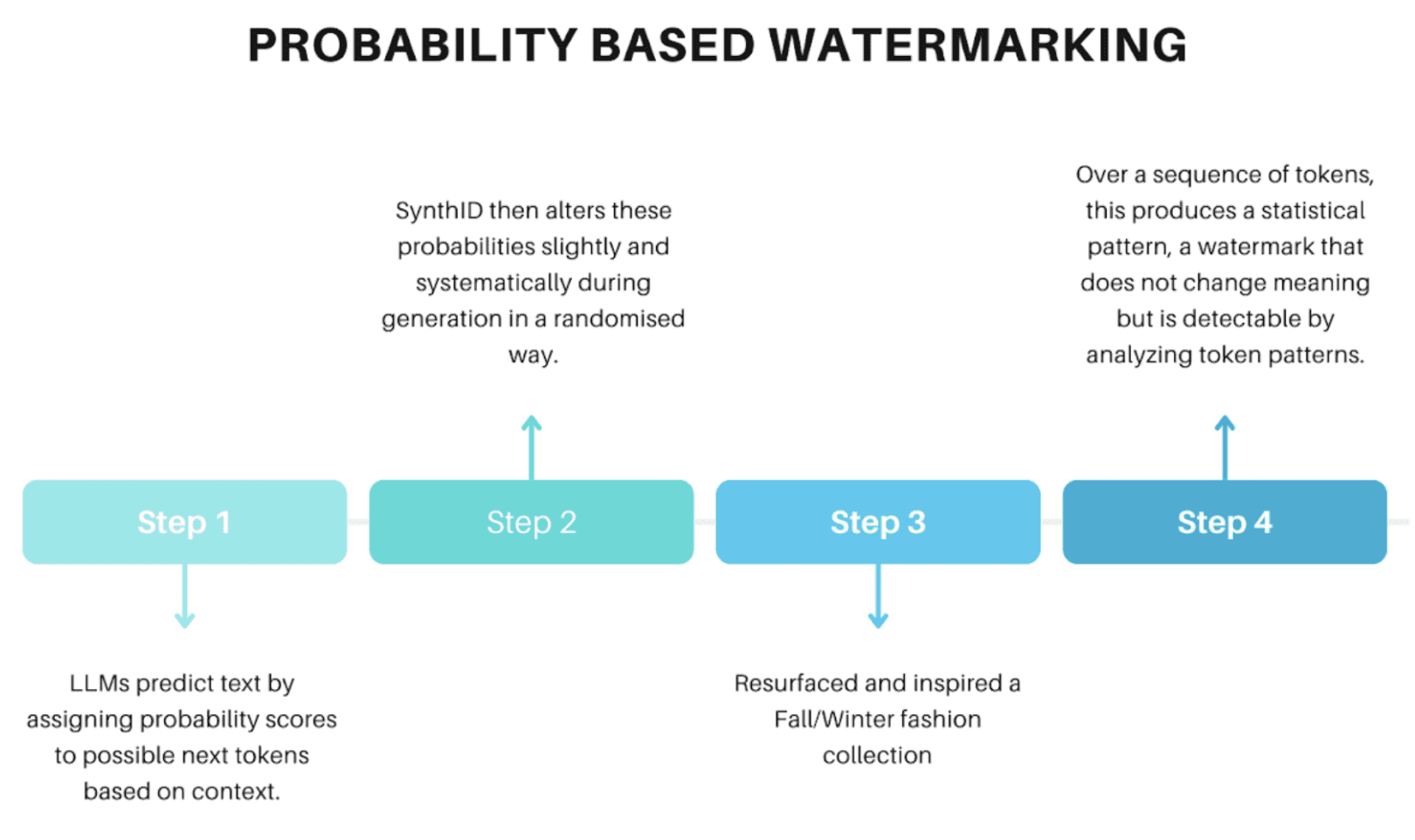

For Text Media: Probability-Based Watermarking

Large language models (LLMs) like Google’s Gemini generate text one token at a time: at each step the model assigns a probability to every candidate token, and the next token is sampled from that distribution. SynthID capitalizes on this probabilistic nature. During text generation, it makes minute, controlled adjustments to the way the next token is chosen from these probabilities, creating a statistically significant pattern over a sequence of tokens. These adjustments are designed to be small enough that they do not alter the semantic meaning, grammatical correctness, or overall quality of the generated text. For a human reader, the text remains indistinguishable from unwatermarked output. However, a specialized SynthID detector can analyze the statistical patterns of token choices within a piece of text and infer the presence of this embedded signal, even after minor edits or light paraphrasing. The method depends on the inherent variability in LLM outputs: the more freedom the model has in choosing its next token, the more room there is to carry the signal, which is why very short or highly constrained passages are harder to watermark.
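The idea of biasing token choices with a secret key can be illustrated with a toy example. The sketch below is not SynthID's actual algorithm; it swaps in a simplified "green-list" scheme of the kind described in public watermarking research, in which a keyed hash marks roughly half of the vocabulary as favored for each context. The vocabulary, the key, the uniform stand-in "model", and every function name are assumptions made for illustration.

```python
import hashlib
import math
import random

VOCAB = [f"tok{i}" for i in range(50)]  # hypothetical toy vocabulary
KEY = "demo-key"                        # hypothetical secret watermark key


def is_green(prev_token: str, token: str, key: str = KEY) -> bool:
    """Keyed pseudo-random split of the vocabulary, re-drawn for every context."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] % 2 == 0  # roughly half of the vocabulary is "green"


def sample_next(prev_token: str, rng: random.Random, bias: float) -> str:
    """Sample from a stand-in model, raising the logit of green tokens by `bias`."""
    # Stand-in for an LLM: every token starts with the same logit (0.0).
    weights = [math.exp(bias if is_green(prev_token, t) else 0.0) for t in VOCAB]
    return rng.choices(VOCAB, weights=weights, k=1)[0]


def generate(n_tokens: int, bias: float, seed: int = 0) -> list:
    """Generate a token sequence; bias=0.0 means no watermark."""
    rng = random.Random(seed)
    tokens = ["<s>"]
    for _ in range(n_tokens):
        tokens.append(sample_next(tokens[-1], rng, bias))
    return tokens[1:]


def z_score(tokens: list) -> float:
    """How far the green-token count deviates from the 50% expected by chance."""
    prev = ["<s>"] + tokens[:-1]
    greens = sum(is_green(p, t) for p, t in zip(prev, tokens))
    n = len(tokens)
    return (greens - 0.5 * n) / math.sqrt(0.25 * n)


watermarked = generate(400, bias=1.5)
plain = generate(400, bias=0.0)
print(f"watermarked z = {z_score(watermarked):.1f}, plain z = {z_score(plain):.1f}")
```

In this toy setup the watermarked sequence should score many standard deviations above chance while the unbiased sequence stays near zero. The detector needs only the key and the text, not the model. Shorter sequences give weaker evidence, because the score grows with the square root of the number of tokens.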


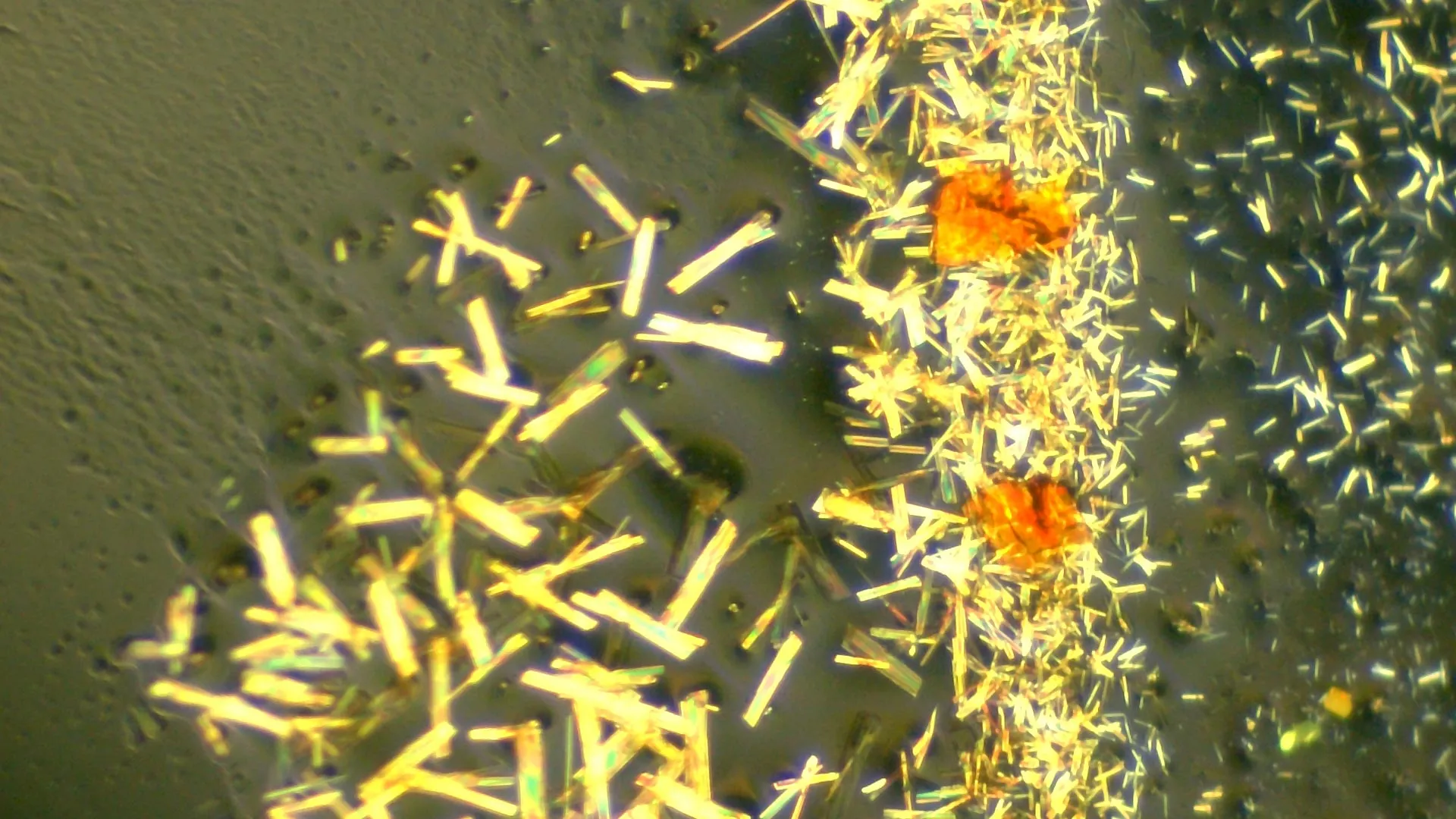

For Images and Video Media: Pixel-Level Watermarking

For visual content generated by diffusion models like Imagen and Veo, SynthID embeds its watermark directly into the pixel values. During the iterative process of image or video generation, SynthID introduces imperceptible modifications to specific pixel values across the content. These changes are below the threshold of human visual perception, meaning an observer cannot see the watermark. The pattern of these minute pixel alterations, however, encodes a machine-readable signature. For video, this watermarking is applied frame by frame, ensuring that the provenance information is present throughout the temporal dimension of the content. This allows for robust detection even after common transformations such as cropping, resizing, compression (e.g., JPEG, MPEG), noise addition, or filtering, any of which can strip or obscure metadata-based authenticity signals. The resilience stems from the watermark being intrinsic to the visual data itself, rather than an appended layer.
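A classic way to see how a faint pixel pattern can still be machine-readable is spread-spectrum watermarking. The sketch below uses that technique as a stand-in: SynthID's real embedder and detector are learned models whose details are not described here. A secret key generates a +1/-1 pattern, each pixel is shifted by two grey levels in the pattern's direction, and the detector correlates the image with the same pattern. The image (random greyscale values), the key, the threshold, and all function names are hypothetical.

```python
import random

WIDTH, HEIGHT = 256, 256
KEY = "demo-key"  # hypothetical secret key


def keyed_pattern(n_pixels, key):
    """Pseudo-random +1/-1 pattern that only the key holder can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n_pixels)]


def embed(pixels, key, strength=2):
    """Shift each pixel by +/- strength grey levels, following the keyed pattern."""
    pattern = keyed_pattern(len(pixels), key)
    return [min(255, max(0, p + strength * s)) for p, s in zip(pixels, pattern)]


def detect_score(pixels, key):
    """Correlate the image with the keyed pattern; near 0 when no mark is present."""
    pattern = keyed_pattern(len(pixels), key)
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)


def add_noise(pixels, sigma, seed=1):
    """Crude stand-in for lossy processing: Gaussian noise, then re-quantise."""
    rng = random.Random(seed)
    return [min(255, max(0, round(p + rng.gauss(0, sigma)))) for p in pixels]


# Stand-in for a generated greyscale image, stored as a flat list of 0..255 values.
image_rng = random.Random(0)
original = [image_rng.randint(60, 200) for _ in range(WIDTH * HEIGHT)]

marked = embed(original, KEY)
degraded = add_noise(marked, sigma=4.0)

THRESHOLD = 1.0  # illustrative decision threshold, halfway between 0 and strength
for name, img in [("original", original), ("marked", marked), ("degraded", degraded)]:
    score = detect_score(img, KEY)
    print(f"{name:9s} score={score:+.2f} detected={score > THRESHOLD}")
```

No single pixel reveals the mark, since each one moves by at most two grey levels. The evidence accumulates only when tens of thousands of pixels are summed against the keyed pattern, which is also why moderate noise does not erase it. This toy version would not survive cropping or resizing, because the pattern would no longer line up with the pixels. Handling such geometric changes is one reason production systems rely on trained detectors.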

For Audio Media: Spectral-Based Encoding

Audio content generated by models like Lyria utilizes a similar principle, adapted for the unique characteristics of sound. The watermarking process leverages the audio’s spectral representation, which maps sound frequencies over time. SynthID subtly manipulates the frequency-time characteristics of the audio signal. This could involve minute adjustments to specific frequency bands or the introduction of subtle, inaudible modulations within the audio spectrum. These changes are designed to be imperceptible to the human ear but form a detectable pattern for algorithmic analysis. This approach ensures the watermark remains detectable through common audio transformations, including compression (e.g., MP3), noise addition, or speed changes. While extreme alterations might weaken detectability, the core principle is to embed the signature within the very fabric of the sound wave.
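The spectral idea can be sketched in a few lines. This is an illustrative toy, not Lyria's or SynthID's actual encoder: a key selects a handful of carrier frequencies, very quiet tones are added at those frequencies, and the detector compares the energy at the keyed carriers with the energy at keyed control frequencies in the same band. The sample rate, frequency band, tone amplitude, and threshold are assumptions, and no psychoacoustic masking model is applied, so inaudibility is assumed rather than demonstrated.

```python
import cmath
import math
import random

RATE = 8000       # samples per second; one second of audio gives 1 Hz bin spacing
KEY = "demo-key"  # hypothetical secret key


def keyed_bins(key):
    """Pick 16 carrier and 16 control frequencies (Hz) from the key."""
    picks = random.Random(key).sample(range(3000, 3900), 32)
    return picks[:16], picks[16:]


def host_signal(seed=0):
    """Stand-in for generated audio: three harmonics plus a little noise."""
    rng = random.Random(seed)
    tones = [(220, 0.4), (440, 0.2), (660, 0.1)]
    return [
        sum(a * math.sin(2 * math.pi * f * n / RATE) for f, a in tones) + rng.gauss(0, 0.01)
        for n in range(RATE)
    ]


def embed(samples, key, amplitude=0.002):
    """Add a very quiet tone at each keyed carrier frequency."""
    carriers, _ = keyed_bins(key)
    return [
        x + amplitude * sum(math.sin(2 * math.pi * f * n / RATE) for f in carriers)
        for n, x in enumerate(samples)
    ]


def band_amplitude(samples, freq):
    """Amplitude of one frequency component, via a single-bin DFT."""
    acc = sum(x * cmath.exp(-2j * math.pi * freq * n / RATE) for n, x in enumerate(samples))
    return 2 * abs(acc) / len(samples)


def detect_ratio(samples, key):
    """Mean level at the carriers divided by mean level at the control bins."""
    carriers, controls = keyed_bins(key)
    carrier_level = sum(band_amplitude(samples, f) for f in carriers) / len(carriers)
    control_level = sum(band_amplitude(samples, f) for f in controls) / len(controls)
    return carrier_level / control_level


def add_noise(samples, sigma, seed=1):
    """Crude stand-in for lossy processing: additive Gaussian noise."""
    rng = random.Random(seed)
    return [x + rng.gauss(0, sigma) for x in samples]


host = host_signal()
marked = embed(host, KEY)
noisy = add_noise(marked, sigma=0.02)

THRESHOLD = 2.0  # illustrative: carriers must be twice as strong as controls
for name, audio in [("host", host), ("marked", marked), ("noisy", noisy)]:
    ratio = detect_ratio(audio, KEY)
    print(f"{name:6s} ratio={ratio:.1f} detected={ratio > THRESHOLD}")
```

Comparing carriers against control bins, rather than against a fixed level, makes the test insensitive to overall volume changes. A speed change, however, would shift every frequency and defeat this toy detector. Robustness of that kind requires a more elaborate design than the fixed carriers assumed here.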

Detection and Verification: Unmasking the Origin

Once a watermark is embedded, SynthID’s detection system is crucial for its utility. Google has developed tools, including a publicly accessible SynthID Detector portal, that allow users to upload media (text, images, audio, video) to scan for the presence of these hidden signatures. The detection process involves sophisticated algorithms trained to identify the specific patterns injected during the generation phase. Upon detection, the system can not only confirm the presence of a watermark but also indicate the confidence level of that detection (e.g., "AI-generated with high confidence"). For visual media, the detector can even highlight specific areas within an image or video where strong watermark signals are present, enabling more granular originality checks and assisting in forensic analysis. This empowers platforms, researchers, and individual users to trace the origin of content and ascertain whether it has been synthetically produced by Google’s integrated AI models.
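How a raw detection score might be turned into a graded verdict can be sketched as follows. The three-tier wording, the threshold values, and the assumption that the score behaves like a standard normal z-score on unwatermarked content are all illustrative; they are not taken from Google's detector.

```python
import math


def p_value(z):
    """One-sided chance of a score of at least z on unwatermarked content."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def verdict(z, high=1e-6, low=1e-2):
    """Map a detection z-score to a hypothetical three-tier confidence label."""
    p = p_value(z)
    if p < high:
        return "watermark detected (high confidence)"
    if p < low:
        return "possibly watermarked (uncertain)"
    return "no watermark detected"


for z in (6.0, 3.0, 0.5):
    print(f"z={z:.1f}  p={p_value(z):.2e}  {verdict(z)}")
```

Keeping an explicit "uncertain" tier lets a tool avoid forcing a yes/no answer on heavily edited content whose signal has been weakened but not erased. A "no watermark detected" result says nothing about whether the content came from a model that does not use the watermark at all.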

Strengths and Strategic Limitations

SynthID presents a formidable defense against content manipulation and misattribution, boasting several key strengths. Its primary advantage lies in its deep integration at the model level, making the watermark highly resilient to a wide array of content transformations. These include common image edits like cropping and resizing, lossy compression (e.g., JPEG), and the addition of noise or filters. For text, it can withstand minor edits and light paraphrasing, while for audio, it tolerates format conversions and subtle pitch or speed adjustments. This robustness significantly surpasses metadata-based solutions, which are often easily stripped away. By embedding its markers at the point of generation, SynthID aims to establish a new standard for content provenance, fostering greater transparency and accountability in the AI ecosystem.

However, a balanced journalistic assessment also requires acknowledging its limitations. While resilient, SynthID is not entirely invulnerable. Significant and aggressive changes to content, such as extreme editing, extensive paraphrasing (for text), or highly destructive compression algorithms, can reduce or even eliminate the detectability of the watermark. This highlights an ongoing "arms race" between AI generation, watermarking, and adversarial attempts to remove or forge such marks. More critically, SynthID’s detection capabilities are primarily effective for content generated by AI models integrated with the SynthID system. This means content created by external AI models, especially open-source or proprietary models from other developers that lack SynthID integration, will not be detectable by Google’s system. This "walled garden" aspect poses a challenge for universal content provenance, as a fragmented landscape of detection technologies could emerge. The industry will need to grapple with the need for interoperability and potentially a standardized, open protocol for AI watermarking to achieve truly global impact.

Broader Impact and Implications for the Digital Future

The introduction of SynthID carries profound implications across multiple sectors, shaping the future of digital content and trust.

- Combating Misinformation and Deepfakes: This is arguably the most immediate and critical application. By providing a reliable method to identify AI-generated content, SynthID empowers social media platforms, news organizations, and fact-checkers to quickly flag and potentially restrict the spread of malicious synthetic media, thereby mitigating its impact on public discourse and democratic processes.

- Content Authentication and Intellectual Property: For artists, creators, and businesses, SynthID offers a mechanism to distinguish AI-assisted work from purely human-created content, providing a layer of authenticity. This could be crucial for intellectual property rights, ensuring that the provenance of generated assets is clear, and potentially leading to new models for licensing and attribution.

- Ethical AI Development and Governance: Google DeepMind’s leadership in deploying SynthID sets a precedent for responsible AI development. It signals to the industry that robust safeguards are not optional but integral to the deployment of powerful generative AI. This initiative could influence future regulatory frameworks and industry best practices, potentially leading to mandates for AI models to incorporate similar provenance technologies.

- Restoring Public Trust: In a world where anything can be faked, the ability to verify the origin of digital content is paramount for restoring public trust in information. SynthID contributes to this by providing a tangible tool for transparency, allowing users to make informed judgments about the content they consume.

- Challenges for Open-Source AI and Interoperability: While powerful within its ecosystem, SynthID highlights the need for broader industry collaboration. The proliferation of open-source AI models means that a truly comprehensive solution would require either widespread adoption of SynthID’s methodology or the development of an open, interoperable standard that all AI developers can integrate. This will be a key area of discussion and development in the coming years.

Conclusion

SynthID represents a pivotal technological advancement in the ongoing battle for digital authenticity and trust. By embedding imperceptible, statistically detectable watermarks directly into AI-generated media, Google DeepMind has introduced a robust mechanism for content provenance. Its approach, which adjusts token probabilities for text, modifies pixel values for images and video, and encodes a signal in the spectrum for audio, aims for a practical balance of invisibility, resilience, and detectability without compromising content quality.

As generative AI continues to redefine the landscape of information and creativity, technologies like SynthID will play an increasingly central role. They are indispensable for ensuring responsible AI deployment, challenging the misuse of synthetic media, and ultimately maintaining a trustworthy digital environment in a future where AI-generated content becomes ubiquitous. The success of SynthID, and similar initiatives, will depend not only on their technical prowess but also on their widespread adoption and the establishment of common standards across the diverse and rapidly evolving AI industry.
