The increasing demand for personalized digital assistance has fueled the development of AI agents designed to automate tasks, provide instant information, and streamline communication. However, many existing solutions, such as OpenClaw, are often perceived as too heavyweight or resource-intensive for simple, everyday applications. This perception has left a gap for a more agile, user-friendly alternative. Nanobot, emerging from the open-source community, addresses this need by offering a streamlined approach to building and deploying AI agents. Its growing popularity, evidenced by discussions on YouTube and on its GitHub repository, points to a demand for simplicity and practical utility in personal AI.

The Evolution of Personal AI Agents and Nanobot’s Niche

The landscape of artificial intelligence has rapidly evolved, moving from large, centralized models to more distributed and personalized applications. Early AI assistants were often confined to specific platforms or required substantial computational resources, making them less accessible for individual users or developers with limited infrastructure. The advent of open-source frameworks and more efficient model architectures has begun to democratize AI, enabling a broader range of innovators to experiment and deploy custom solutions.

Nanobot positions itself uniquely within this evolving ecosystem. Its design philosophy prioritizes a minimal footprint and ease of integration, making it an ideal entry point for those new to AI agent development. Unlike comprehensive frameworks that might offer a vast array of features at the cost of increased complexity and resource consumption, Nanobot focuses on core functionalities essential for a practical personal assistant. This includes robust connectivity to multiple messaging channels—beyond WhatsApp, it supports Telegram, Slack, Discord, Feishu, QQ, and email—and compatibility with a diverse range of model providers and Model Context Protocol (MCP) tool servers. This modularity allows beginners to grasp the fundamental structure of an AI agent without being overwhelmed by an overly intricate system, facilitating a smoother learning curve and quicker deployment.

Moreover, Nanobot distinguishes itself through its emphasis on practical, real-world usability from the outset. Beyond basic conversational capabilities, it incorporates advanced features such as tool calling, enabling the agent to interact with external services; web search, providing real-time information retrieval; scheduled tasks, allowing for proactive automation; voice transcription, enhancing accessibility and natural interaction; and real-time progress streaming, offering transparency into the agent’s workflow. These features collectively transform Nanobot from a mere demo project into a highly functional personal assistant, capable of handling a wide spectrum of tasks that extend beyond simple query-response interactions.
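To make the idea of tool calling concrete, here is a minimal sketch of a tool registry and dispatcher. It is a generic illustration, not Nanobot's implementation: the `TOOLS` registry, the `tool` decorator, the `dispatch` function, and the `{"name": ..., "arguments": {...}}` call shape are all assumptions chosen for the example.

```python
import json
from typing import Callable

# Hypothetical registry mapping tool names to plain Python functions.
TOOLS: dict[str, Callable[..., str]] = {}


def tool(func: Callable[..., str]) -> Callable[..., str]:
    """Register a function under its own name so an agent could call it."""
    TOOLS[func.__name__] = func
    return func


@tool
def add(a: int, b: int) -> str:
    """Toy tool: add two integers and return the result as text."""
    return str(a + b)


def dispatch(call_json: str) -> str:
    """Run a tool call of the assumed form {"name": ..., "arguments": {...}}."""
    call = json.loads(call_json)
    func = TOOLS.get(call["name"])
    if func is None:
        return f"unknown tool: {call['name']}"
    return func(**call["arguments"])


print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))  # 5
```

In a real agent, the model would produce the call and the tool's return value would be fed back into the conversation. Here the call is written by hand to keep the sketch self-contained.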

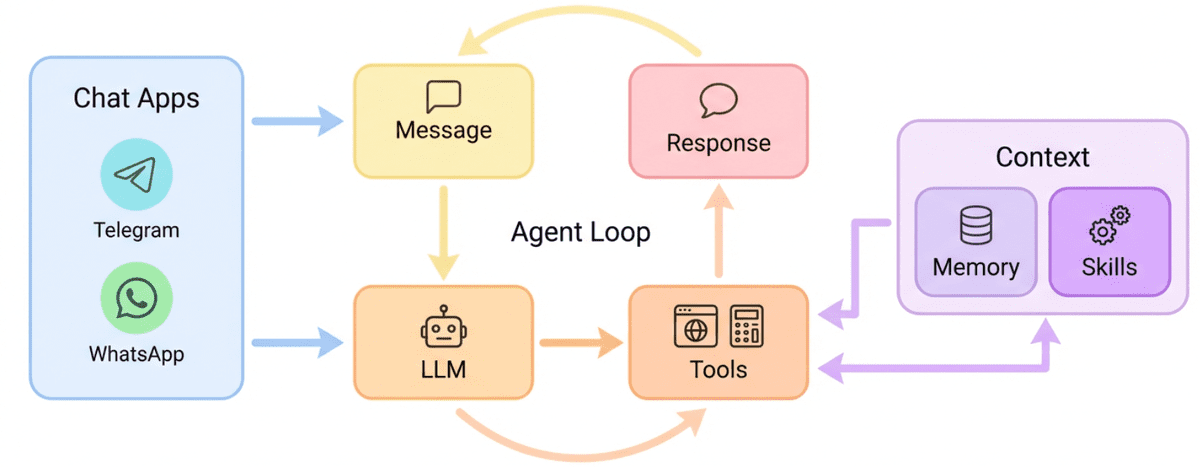

Architectural Simplicity and Operational Efficiency

The core of Nanobot’s appeal lies in its architectural elegance, designed for efficiency and adaptability. The system is structured to separate concerns, with distinct components for model providers, communication channels, and tool servers. This modularity is illustrated in its architectural diagram, which emphasizes a clear pathway from user input through agent processing to output delivery. This design principle allows users to easily swap out large language models (LLMs) from different providers (e.g., OpenAI, Anthropic, Google) or integrate various communication platforms without overhauling the entire system.
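The separation of concerns described above can be sketched in a few lines of Python. This is an illustration of the general pattern only, under the assumption that providers and channels sit behind small interfaces. The `Provider`, `Channel`, `EchoProvider`, `MemoryChannel`, and `Agent` names are hypothetical and do not come from Nanobot's codebase.

```python
from dataclasses import dataclass
from typing import Protocol


class Provider(Protocol):
    """Anything that can turn a prompt into a reply (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class Channel(Protocol):
    """Anything that can deliver a reply to a user (hypothetical interface)."""

    def send(self, recipient: str, text: str) -> None: ...


class EchoProvider:
    """Stand-in for a real LLM provider; it only echoes the prompt."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class MemoryChannel:
    """Stand-in for a messaging channel; it records outgoing messages."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def send(self, recipient: str, text: str) -> None:
        self.sent.append((recipient, text))


@dataclass
class Agent:
    provider: Provider
    channel: Channel

    def handle(self, sender: str, text: str) -> None:
        # User input -> agent processing -> output delivery.
        reply = self.provider.complete(text)
        self.channel.send(sender, reply)


channel = MemoryChannel()
agent = Agent(provider=EchoProvider(), channel=channel)
agent.handle("1234567890", "hello")
print(channel.sent)  # [('1234567890', 'echo: hello')]
```

Because `Agent` depends only on the two interfaces, swapping `EchoProvider` for a different provider, or `MemoryChannel` for a different channel, requires no change to the agent itself.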

The "lightweight" claim translates into several tangible benefits. Firstly, reduced resource consumption means Nanobot can often run on less powerful hardware, making it more accessible for individuals without access to high-end servers or cloud computing resources. Secondly, its simplified setup process minimizes the typical friction associated with configuring complex AI systems, significantly cutting down deployment time. This operational efficiency is particularly valuable for developers who wish to quickly prototype and iterate on AI agent ideas. The ability to connect to a broad array of messaging platforms ensures that the agent can be integrated seamlessly into existing communication workflows, maximizing its utility.

A Step-by-Step Guide to Deployment: Technical Nuances

Deploying Nanobot involves a series of structured technical steps, each crucial for establishing a fully functional AI agent. While presented as a tutorial, these steps also highlight key technical decisions and dependencies inherent in such a system.

Step 1: Installing uv – The Modern Python Package Manager

The initial step involves installing uv, a next-generation Python package installer and dependency manager developed by Astral. Nanobot’s reliance on uv over traditional tools like pip is indicative of a move towards more efficient and reliable dependency management in modern Python projects. uv is celebrated for its speed and determinism, often outperforming pip and conda in virtual environment creation and package installation. The installation is straightforward, typically executed via a curl command to download and run the installer script:

    curl -LsSf https://astral.sh/uv/install.sh | sh

Running uv --version verifies the installation and should display output similar to uv 0.10.9 (f675560f3 2026-03-06), confirming that uv is on the system path.
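The version check can also be scripted, which is handy in setup scripts. The sketch below assumes the output format shown above (`uv <major>.<minor>.<patch> ...`). The helper names `parse_uv_version` and `installed_uv_version` are made up for this example.

```python
import re
import shutil
import subprocess
from typing import Optional


def parse_uv_version(output: str) -> tuple[int, int, int]:
    """Extract (major, minor, patch) from text like 'uv 0.10.9 (f675560f3 2026-03-06)'."""
    match = re.match(r"uv (\d+)\.(\d+)\.(\d+)", output.strip())
    if match is None:
        raise ValueError(f"unrecognized uv version output: {output!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def installed_uv_version() -> Optional[tuple[int, int, int]]:
    """Return the installed uv version, or None if uv is not on PATH."""
    uv_path = shutil.which("uv")
    if uv_path is None:
        return None
    result = subprocess.run(
        [uv_path, "--version"], capture_output=True, text=True, check=True
    )
    return parse_uv_version(result.stdout)
```

Calling `installed_uv_version()` returns a tuple such as `(0, 10, 9)` when uv is installed, and `None` when the installer has not yet put uv on the path (for example, before the shell has been restarted).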

Step 2: Installing Nanobot – The Core Agent CLI

Once uv is operational, it is used to install the Nanobot package itself. This process adds the Nanobot command-line interface (CLI) to the system, enabling direct interaction with the agent’s functionalities from the terminal. The command uv tool install nanobot-ai uses uv’s ability to install applications that are runnable from the command line. It resolves and installs Nanobot’s dependencies within an isolated environment, which prevents conflicts with other Python projects. This isolation is a key benefit of modern package managers, supporting stability and reproducibility.

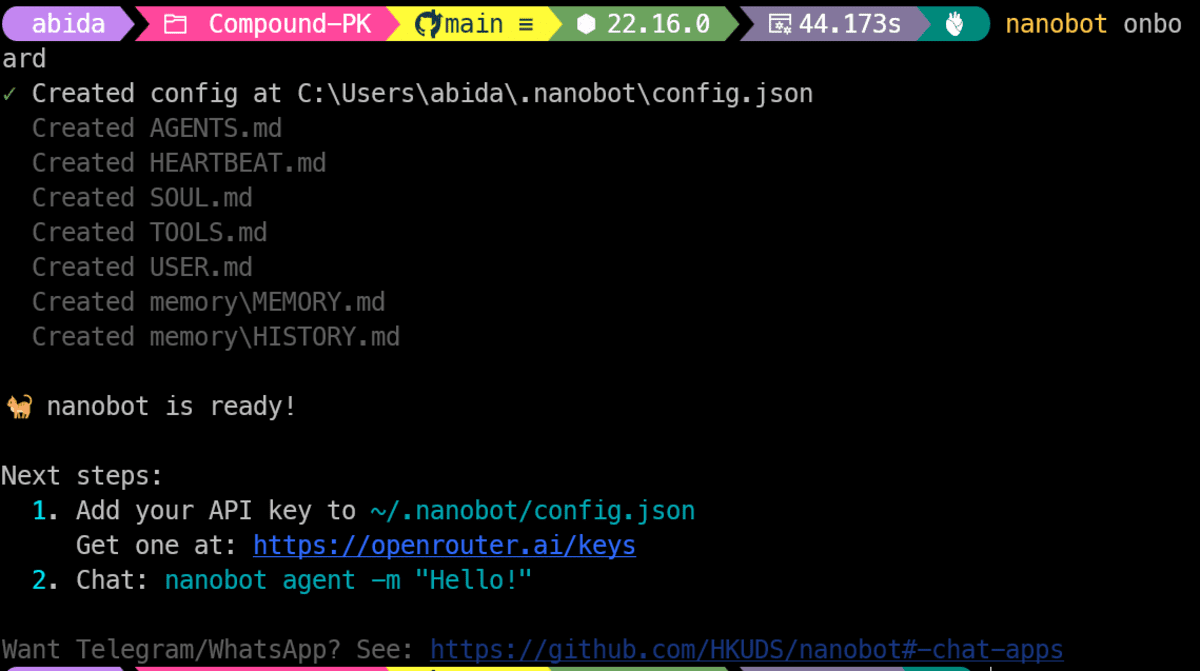

Step 3: Initializing Your Nanobot Project – Setting the Foundation

The initialization phase is critical for establishing the local environment for the Nanobot agent. Executing the nanobot onboarding command sets up the basic project structure. This typically involves creating a default configuration directory, usually located at ~/.nanobot, which serves as the central hub for storing agent configurations, logs, and other operational files. It also establishes the agent’s workspace, a dedicated area where Nanobot can store temporary data or manage files related to its operations. This structured setup ensures that Nanobot has a clear and organized environment to operate within, preparing it for subsequent configuration and channel integration.
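A quick way to confirm that initialization worked is to check for the configuration directory. The sketch below assumes the default ~/.nanobot location mentioned above and the config.json file referenced in the next step. The function name `describe_nanobot_home` is hypothetical, and the workspace location is deliberately not checked because the text does not specify it.

```python
from pathlib import Path


def describe_nanobot_home(home: Path) -> dict[str, bool]:
    """Report whether .nanobot and .nanobot/config.json exist under `home`."""
    config_dir = home / ".nanobot"
    return {
        "config_dir_exists": config_dir.is_dir(),
        "config_file_exists": (config_dir / "config.json").is_file(),
    }


print(describe_nanobot_home(Path.home()))
```

If both values are `False` after onboarding, the setup may have used a non-default location, or the command may not have completed.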

Step 4: Adding Your Nanobot Configuration – Personalizing the Agent

Configuration is where the agent truly becomes personalized. Users navigate to the ~/.nanobot/config.json file to define how their Nanobot agent will behave and interact. This JSON file dictates crucial parameters:

    {
      "providers": {
        "openai": {
          "apiKey": "sk-REPLACE_ME"
        }
      },
      "agents": {
        "defaults": {
          "model": "openai/gpt-5.3-codex",
          "provider": "openai"
        }
      },
      "channels": {
        "whatsapp": {
          "enabled": true,
          "allowFrom": ["1234567890"]
        }
      }
    }

In this configuration, the apiKey for a chosen large language model provider (e.g., OpenAI) must be replaced with a valid credential. The model field specifies which particular AI model the agent should use (e.g., openai/gpt-5.3-codex), and provider links it to the configured API key. For channel integration, specifically WhatsApp, the enabled flag activates the channel, and allowFrom is a critical security measure, specifying a list of authorized phone numbers that can interact with the agent. A notable point of friction for some users has been the formatting of allowFrom numbers for WhatsApp; while often requiring a country code, some setups reportedly work better without the leading "+" sign, highlighting a minor inconsistency in the practical implementation that users should be aware of.
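Since a leftover placeholder key or an oddly formatted phone number can both cause silent failures, a small pre-flight check on the configuration can save time. The sketch below is an assumption-laden illustration: `check_config` and `normalize_number` are hypothetical helpers, and the digits-only rule for allowFrom reflects the user reports mentioned above rather than a documented Nanobot requirement.

```python
import json


def normalize_number(number: str) -> str:
    """Keep digits only, dropping a leading '+' and any spaces or dashes."""
    return "".join(ch for ch in number if ch.isdigit())


def check_config(config: dict) -> list[str]:
    """Return human-readable problems found in a parsed config dictionary."""
    problems = []
    api_key = config.get("providers", {}).get("openai", {}).get("apiKey", "")
    if not api_key or api_key == "sk-REPLACE_ME":
        problems.append("providers.openai.apiKey is still a placeholder")
    whatsapp = config.get("channels", {}).get("whatsapp", {})
    if whatsapp.get("enabled") and not whatsapp.get("allowFrom"):
        problems.append("channels.whatsapp.allowFrom is empty")
    for number in whatsapp.get("allowFrom", []):
        # Assumed rule: flag anything that is not digits only.
        if number != normalize_number(number):
            problems.append(f"allowFrom entry {number!r} contains non-digit characters")
    return problems


sample = json.loads("""
{
  "providers": {"openai": {"apiKey": "sk-REPLACE_ME"}},
  "agents": {"defaults": {"model": "openai/gpt-5.3-codex", "provider": "openai"}},
  "channels": {"whatsapp": {"enabled": true, "allowFrom": ["+1234567890"]}}
}
""")
for problem in check_config(sample):
    print(problem)
```

For the sample above, the check reports the placeholder API key and the "+"-prefixed number. To check a real file, load it with `json.loads(Path.home().joinpath(".nanobot", "config.json").read_text())` after importing `Path` from `pathlib`.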

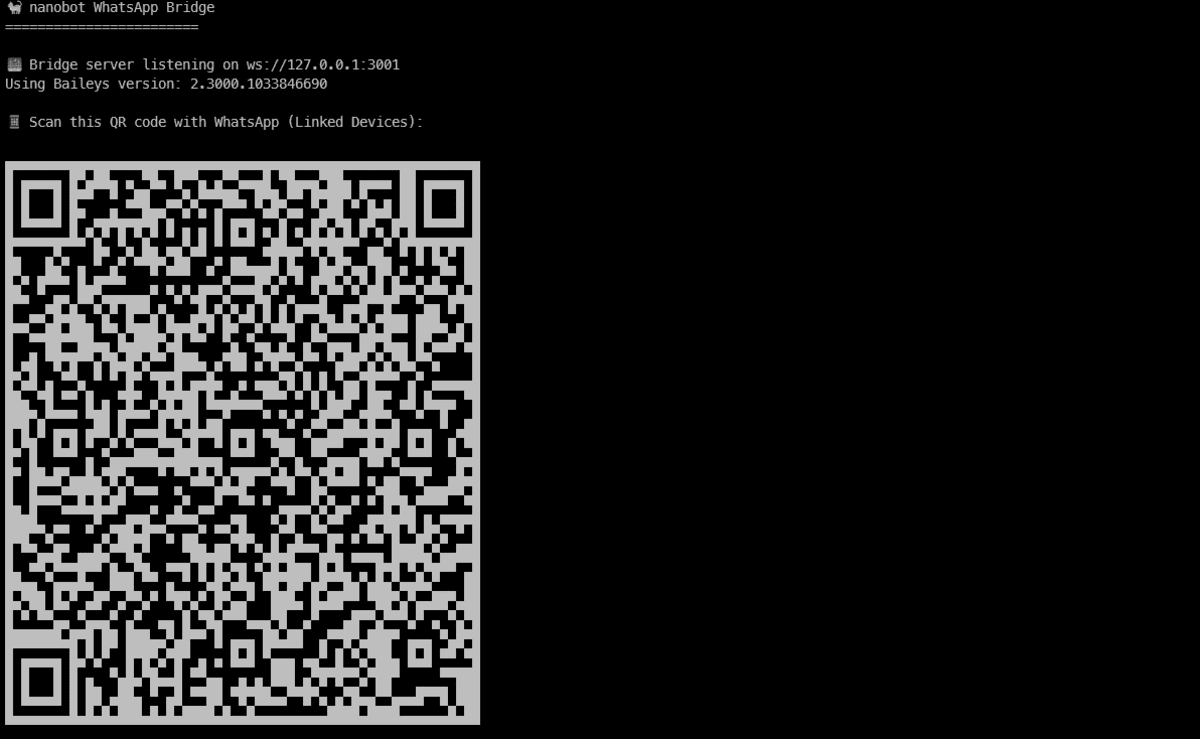

Step 5: Connecting Nanobot To WhatsApp – Bridging to Communication

Connecting Nanobot to WhatsApp requires an additional dependency: Node.js and its package manager, npm. The WhatsApp bridge operates through a Node-based process, making these installations prerequisites. The connection process involves two distinct terminal operations:

- Initiating the Login Flow: In the first terminal,

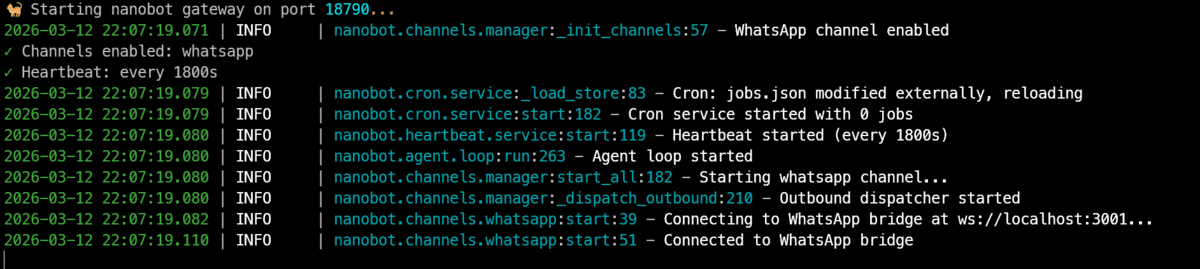

nanobot channels login whatsappis executed. This command initiates a process that generates a QR code. This QR code is crucial for linking the Nanobot agent to a WhatsApp account, mimicking the "Linked Devices" functionality within the WhatsApp mobile application. Users scan this QR code from their phone (WhatsApp -> Settings -> Linked Devices) to establish a secure connection. - Starting the Gateway: Once the device is linked, a second terminal is opened to run

nanobot gateway start. This command launches the Nanobot gateway, which is the persistent process responsible for receiving incoming WhatsApp messages, routing them to the AI agent for processing, and sending back the generated responses. This two-terminal approach ensures that the login session is established independently of the ongoing operational gateway, providing stability and resilience to the messaging channel.
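The gateway's role of receiving a message, checking the sender, handing the text to the agent, and sending the reply can be sketched as a single routing function. This is a conceptual model only, not Nanobot's gateway code. `route_message`, the stand-in agent, and the in-memory outbox are hypothetical.

```python
from typing import Callable


def route_message(
    sender: str,
    text: str,
    allow_from: set[str],
    agent: Callable[[str], str],
    send: Callable[[str, str], None],
) -> bool:
    """Handle one incoming message; return True if a reply was sent."""
    if sender not in allow_from:
        # Unknown senders are dropped before any model call is made.
        return False
    send(sender, agent(text))
    return True


outbox: list[tuple[str, str]] = []
allowed = {"1234567890"}


def reply_in_caps(text: str) -> str:
    """Stand-in for the AI agent: upper-case the incoming text."""
    return text.upper()


def deliver(recipient: str, body: str) -> None:
    """Stand-in for the channel: record the outgoing reply."""
    outbox.append((recipient, body))


route_message("1234567890", "hello", allowed, reply_in_caps, deliver)
route_message("5550000000", "hello", allowed, reply_in_caps, deliver)
print(outbox)  # [('1234567890', 'HELLO')]
```

Checking the allowlist before invoking the agent means that messages from unauthorized numbers never consume model tokens, which is one reason an allowFrom-style gate is worth configuring carefully.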

Step 6: Testing Your AI Agent On WhatsApp – Validation and Interaction

The final step is to validate the agent’s functionality. This typically involves using a separate phone number (one that has been explicitly added to the allowFrom list in the config.json) to send a message to the WhatsApp number connected to Nanobot. Upon receiving a message, Nanobot processes the request, potentially leveraging integrated tools like web search if configured, and generates a response, which it then sends back via WhatsApp. For instance, prompting the agent with "what is happening in the world" would trigger a web search, with the agent synthesizing and returning a detailed snapshot of current events.

During this interaction, the gateway terminal provides real-time visibility into the agent’s workflow. This includes timestamps for message reception, indications of tool calls, the generation of the AI response, and the dispatch of the reply. This live feedback mechanism is invaluable for debugging, understanding the agent’s decision-making process, and confirming that all components are functioning as expected.

Challenges and the Path Forward

While Nanobot offers a compelling solution for accessible AI agent deployment, early adopters have noted minor hurdles, typical of rapidly evolving open-source projects. One such challenge observed on Windows systems involved inconsistent detection of Node.js or npm by the Python script, potentially due to environmental path configurations or versioning issues. This highlights the complexities of managing cross-platform dependencies and the need for robust environmental setup guides.
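When Node.js or npm is reported missing, it helps to see what Python itself can resolve on the current PATH. The sketch below uses the standard library's `shutil.which`, which on Windows also consults PATHEXT and can therefore find wrappers such as npm.cmd. How Nanobot performs its own detection is not stated here, so this is a diagnostic aid rather than a reproduction of its logic. `find_tools` and `missing_tools` are hypothetical helper names.

```python
import shutil
from typing import Optional


def find_tools(names: list[str]) -> dict[str, Optional[str]]:
    """Map each executable name to its resolved path, or None if not on PATH."""
    return {name: shutil.which(name) for name in names}


def missing_tools(found: dict[str, Optional[str]]) -> list[str]:
    """List the names that could not be resolved, in sorted order."""
    return sorted(name for name, path in found.items() if path is None)


found = find_tools(["node", "npm"])
for name, path in found.items():
    print(f"{name}: {path or 'not found on PATH'}")
```

If a tool shows as not found here but works in an interactive shell, the Python process is likely running with a different PATH, which is a common cause of this kind of cross-platform detection problem.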

Another area for improvement lies in documentation clarity, particularly concerning WhatsApp integration. The documentation did not initially make clear that users interact with the agent by messaging the connected WhatsApp number directly, rather than through a separate bot chat interface, which caused some confusion. This distinction is crucial for user expectations and initial setup success. The aforementioned inconsistency in WhatsApp allowFrom formatting (with or without the "+" sign) also points to areas where more precise documentation or internal handling could enhance user experience.

Despite these minor friction points, which are common in the early stages of innovative software, Nanobot stands as a robust starting point. These challenges are often addressed through community contributions, updated documentation, and subsequent software releases, reflecting the dynamic nature of open-source development.

Broader Implications and Future Outlook

The advent of accessible AI agent frameworks like Nanobot carries significant implications across various domains:

- Democratization of AI: Nanobot dramatically lowers the barrier to entry for individuals and small teams to experiment with and deploy AI agents. This democratization fosters innovation, allowing a wider demographic to leverage AI for personal productivity, learning, and creative projects, rather than being restricted to large corporations or highly specialized researchers.

- Enhanced Personal Productivity: For individual users, a Nanobot-powered agent can serve as an invaluable personal assistant, capable of automating routine tasks, providing instant information, managing schedules, and facilitating communication across multiple platforms. Its ability to perform web searches, handle scheduled tasks, and transcribe voice inputs extends its utility beyond basic conversational AI.

- Potential for Small Business and Niche Applications: Small businesses or entrepreneurs could leverage Nanobot for localized customer support via WhatsApp, internal team communication management on Slack, or automated email responses. Its lightweight nature makes it cost-effective and adaptable for niche applications that might not justify the investment in more complex, enterprise-grade AI solutions.

- Security and Privacy Considerations: With the integration of personal AI agents into messaging platforms, security and privacy become paramount. Users must exercise diligence in securing their API keys and carefully consider the data that their agent processes and stores. The allowFrom feature is a critical step in controlling access, but comprehensive understanding of data flow and encryption practices remains essential.

- Contribution to the Open-Source Ecosystem: As an open-source project, Nanobot contributes to the collective knowledge and tools available to the AI community. Its modular design encourages contributions, forks, and adaptations, potentially leading to specialized versions or integrations that address even more specific needs.
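On the point about securing API keys: because the key lives in a plain JSON file, one simple precaution on POSIX systems is to make sure that file is readable only by its owner. The sketch below shows a permission check on a throwaway temporary file. `is_private` is a hypothetical helper, and the check is not meaningful on Windows, where permission bits work differently.

```python
import os
import stat
import tempfile


def is_private(path: str) -> bool:
    """True if neither group nor others have any permission bits on `path` (POSIX)."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode & (stat.S_IRWXG | stat.S_IRWXO) == 0


# Demonstrate on a throwaway file rather than a real config.
with tempfile.NamedTemporaryFile(delete=False) as handle:
    demo_path = handle.name
os.chmod(demo_path, 0o644)
print(is_private(demo_path))  # False on POSIX systems
os.chmod(demo_path, 0o600)
print(is_private(demo_path))  # True on POSIX systems
os.remove(demo_path)
```

The same function could be pointed at ~/.nanobot/config.json. If it returns `False`, running `chmod 600` on the file restricts it to the owner.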

In conclusion, Nanobot represents a meaningful step in making sophisticated AI capabilities tangible and practical for everyday use. Its commitment to a lightweight architecture, ease of setup, and multi-channel integration positions it as a strong choice for anyone looking to build their first AI agent. While minor technical rough edges are part of any fast-moving project, the immediate value and potential of a Nanobot-powered personal assistant are clear. Adaptable and accessible agents of this kind are likely to play an increasingly important role in integrating AI into our daily digital lives, pointing toward a future where intelligent automation is not a luxury but a widespread utility.
