The Dawn of Accessible Local AI: A Paradigm Shift

For years, running sophisticated AI models locally remained out of reach for most users. The computational demands of large language models (LLMs) called for powerful graphics processing units (GPUs) or expensive cloud-based services, creating a significant barrier to entry. Recent advances in model quantization, efficient inference engines, and the proliferation of smaller, highly optimized open-source models have fundamentally changed this. It is now possible to deploy capable models like Qwen3.5, whose 4B variant runs effectively in around 3.5 GB of RAM. This development marks a pivotal moment, enabling a broader range of developers, researchers, and enthusiasts to work with advanced AI without substantial financial investment or reliance on an internet connection.
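
To see why quantization makes this possible, a rough estimate helps: weight memory is approximately the parameter count times the bits per weight, divided by eight. The sketch below assumes exactly 4 billion parameters and ignores the context (KV) cache, runtime overhead, and the per-block scale factors that real quantized formats add, so it gives a lower bound rather than a prediction of Ollama's actual footprint. The function name is illustrative.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Lower-bound memory for the model weights alone, in GB (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    params = 4e9  # assumption: exactly 4 billion parameters
    for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: {weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions the weights alone come to about 8 GB at 16-bit precision and about 2 GB at 4 bits, which is why a quantized 4B model can fit in a few gigabytes of RAM once overhead is added.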

The concept of "agentic AI" further amplifies the utility of these local setups. Unlike traditional chatbots that merely respond to prompts, agentic AI systems are designed to understand and execute multi-step tasks, interact with their environment (such as a local file system or command line interface), and iteratively work towards a defined goal. By combining a capable local LLM with an agentic framework, users can transform their laptops into powerful personal AI assistants capable of automating complex development tasks, generating code, and assisting with intricate problem-solving, all while keeping data securely on their local machine.
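
The observe-decide-act cycle described above can be sketched as a small loop. Everything here is hypothetical and illustrative: the Action type, the write_file and read_file tools, and the scripted stand-in model are inventions for this example, not OpenCode's internals. A real agent would obtain each action from the LLM (typically through its tool-calling interface) rather than from a fixed plan.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    tool: str          # name of a tool to call, or "finish" to stop
    argument: str = ""


Model = Callable[[list[str]], Action]


def run_agent(model: Model, tools: dict[str, Callable[[str], str]],
              goal: str, max_steps: int = 10) -> list[str]:
    """Loop: the model picks an action, a tool executes it, the result is fed back."""
    history = [f"GOAL: {goal}"]
    for _ in range(max_steps):
        action = model(history)  # decide, based on everything observed so far
        if action.tool == "finish":
            history.append(f"DONE: {action.argument}")
            break
        tool = tools.get(action.tool)
        observation = tool(action.argument) if tool else f"unknown tool {action.tool!r}"
        history.append(f"{action.tool}: {observation}")  # observe
    return history


# Stand-ins so the sketch runs without a real LLM or real disk access.
fake_fs: dict[str, str] = {}


def write_file(arg: str) -> str:
    """Hypothetical tool: the first line is the path, the rest is the content."""
    path, _, content = arg.partition("\n")
    fake_fs[path] = content
    return f"wrote {len(content)} chars to {path}"


def read_file(path: str) -> str:
    """Hypothetical tool: return a file's content from the in-memory store."""
    return fake_fs.get(path, "<missing>")


def scripted_model(actions: list[Action]) -> Model:
    """Replays a fixed plan; a real agent would query the LLM here instead."""
    steps = iter(actions)
    return lambda history: next(steps, Action("finish", "plan exhausted"))


plan = [
    Action("write_file", "main.py\nprint('hello')"),
    Action("read_file", "main.py"),
    Action("finish", "project created"),
]
history = run_agent(scripted_model(plan),
                    {"write_file": write_file, "read_file": read_file},
                    "create a project")
for entry in history:
    print(entry)
```

The max_steps cap matters in practice: without it, a model that never chooses "finish" would keep the loop running indefinitely.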

Ollama: The Gateway to Local LLM Deployment

The foundational step in establishing a local AI environment is deploying a robust, user-friendly LLM runner. Ollama has emerged as a leading solution in this space, widely adopted by developers for its simplicity and efficiency. It packages a wide range of open-source LLMs so that they are easy to download, run, and manage locally across different operating systems. Its unified framework abstracts away the complexities of model weights, dependencies, and inference engines behind a straightforward command-line interface.
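
Besides the CLI, Ollama serves a local HTTP API (by default at http://127.0.0.1:11434), and its /api/tags endpoint returns the locally installed models, the same information that ollama list prints. The minimal sketch below uses only the Python standard library; the helper names are illustrative, and the fetch function only works while the Ollama server is running.

```python
import json
import urllib.request


def parse_model_names(tags_payload: dict) -> list[str]:
    """Return the sorted model names from an /api/tags JSON payload."""
    return sorted(model["name"] for model in tags_payload.get("models", []))


def list_local_models(base_url: str = "http://127.0.0.1:11434") -> list[str]:
    """Fetch the installed models from a running Ollama server."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return parse_model_names(json.load(resp))
```

Keeping the parsing separate from the network call means the parsing logic can be checked against a saved payload without a live server.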

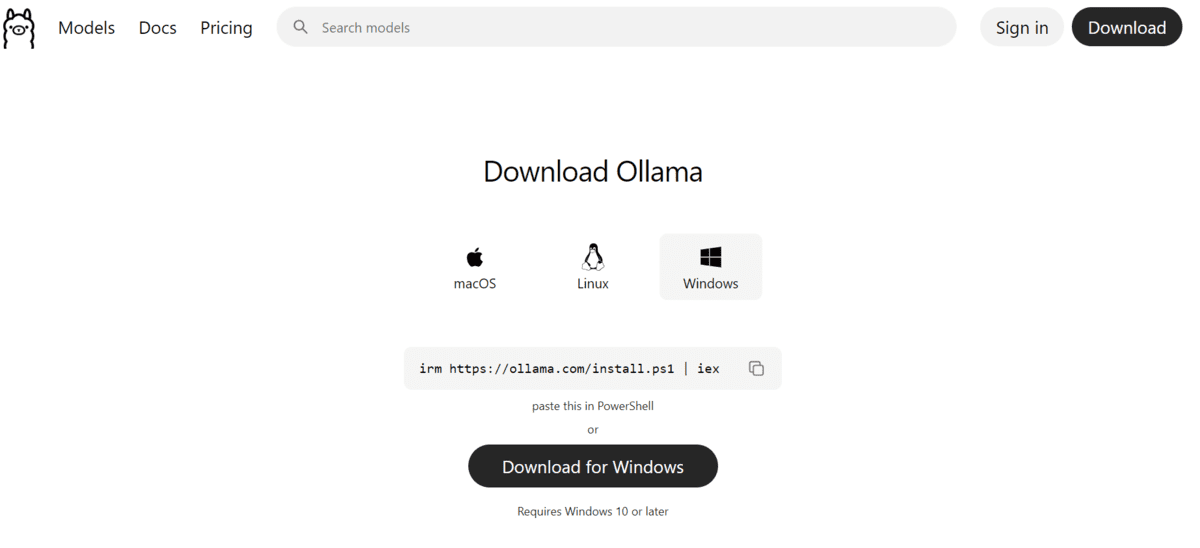

The installation process for Ollama is designed for accessibility. Windows users can download a standard installer from the official download page. Alternatively, power users can run a single PowerShell command (irm https://ollama.com/install.ps1 | iex), which automates the download and setup. Linux and macOS users are equally well served, with clear instructions on the Ollama website that typically involve a single-line command. This cross-platform support means users can get started quickly regardless of their operating system. Once installed, Ollama typically starts its server automatically, so the system is ready for local model deployment. If the server is not running, it can be started manually with the ollama serve command.
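
A quick way to confirm that the server is up is to request its root URL, which a running Ollama instance answers with a short status message. This sketch assumes the default address of 127.0.0.1:11434; adjust base_url if the server was configured differently. The function name is illustrative.

```python
import urllib.request


def is_ollama_running(base_url: str = "http://127.0.0.1:11434", timeout: float = 2.0) -> bool:
    """Return True if the server at base_url answers a plain GET with HTTP 200."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, ValueError):
        # URLError, refused connections, and timeouts are OSError subclasses;
        # ValueError covers a malformed base_url.
        return False


if __name__ == "__main__":
    if is_ollama_running():
        print("Ollama server is up")
    else:
        print("No server found; try starting it with: ollama serve")
```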

Integrating Qwen3.5: A Powerful Model for Modest Hardware

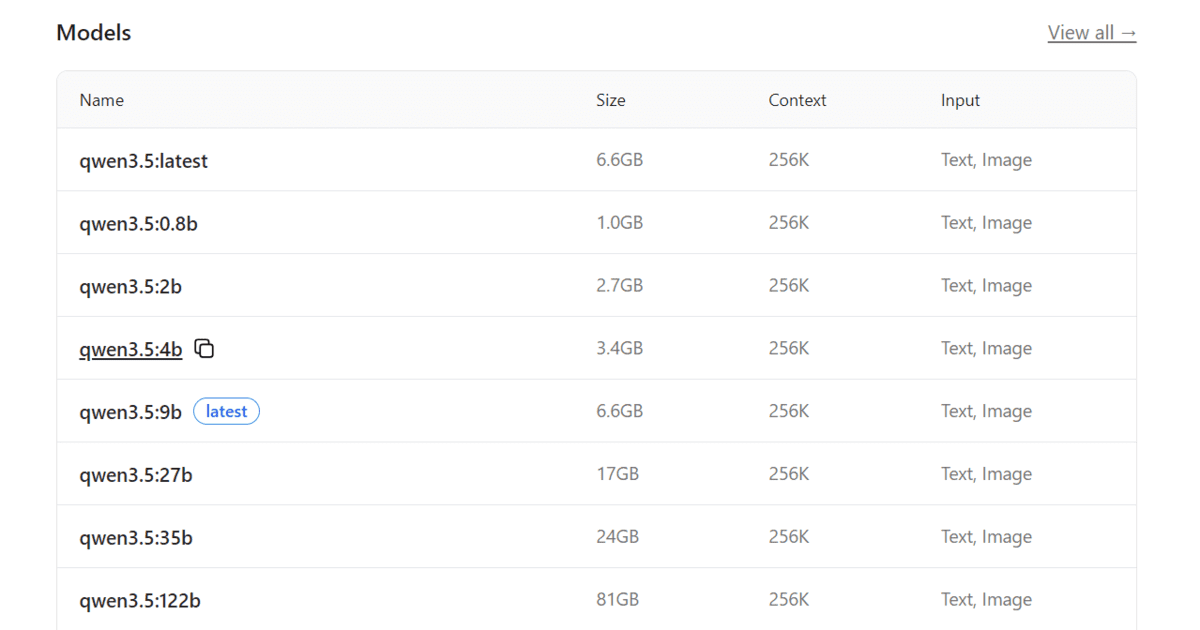

With Ollama installed and running, the next step is to select and deploy a suitable large language model. Qwen3.5, developed by Alibaba Cloud, is an excellent choice for local deployment thanks to its balance of performance and efficiency. The Qwen series has drawn significant attention in the AI community for strong results across natural language understanding, code generation, and complex reasoning, often outperforming models of similar size. Qwen3.5 further refines these attributes and comes in several sizes to suit different hardware constraints.

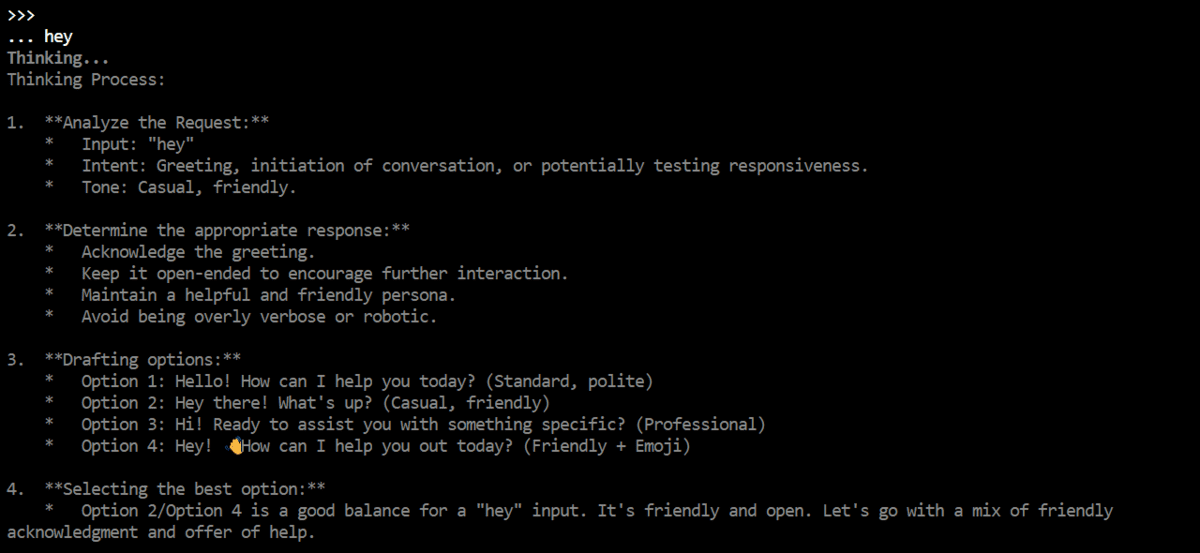

For a lightweight, high-performance local setup on an older laptop, the 4B variant of Qwen3.5 is particularly recommended. It strikes a good balance, providing substantial language and coding ability while requiring approximately 3.5 GB of RAM, well within the capabilities of many older or mid-range laptops. Downloading and launching it through Ollama takes a single terminal command, ollama run qwen3.5:4b, which pulls the model if it is not already present and then runs it. The first execution downloads the model files, so how long it takes depends on internet speed. Once the download completes, Ollama loads the model into memory and opens an interactive chat session in the terminal. From there, users can hold direct conversations with Qwen3.5, run quick tests, and ask for basic coding help, laying the groundwork for more advanced agentic workflows.
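
The same model can also be reached programmatically. This sketch sends one message to Ollama's /api/chat endpoint with streaming disabled and returns the reply text. It assumes the qwen3.5:4b tag has already been pulled and that the server is listening on its default port; the function names are illustrative.

```python
import json
import urllib.request

OLLAMA_URL = "http://127.0.0.1:11434"  # Ollama's default local address


def build_chat_payload(prompt: str, model: str = "qwen3.5:4b") -> dict:
    """Request body for /api/chat asking for a single, non-streamed reply."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }


def chat(prompt: str, model: str = "qwen3.5:4b") -> str:
    """Send one user message and return the assistant's reply (needs a running server)."""
    data = json.dumps(build_chat_payload(prompt, model)).encode("utf-8")
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/chat",
        data=data,  # supplying data makes this a POST request
        headers={"Content-Type": "application/json"},
    )
    # Generous timeout: the first request may have to load the model into memory.
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)["message"]["content"]
```

For example, chat("Write a Python function that reverses a string.") returns the model's answer as a plain string.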

OpenCode: Empowering Agentic Development Locally

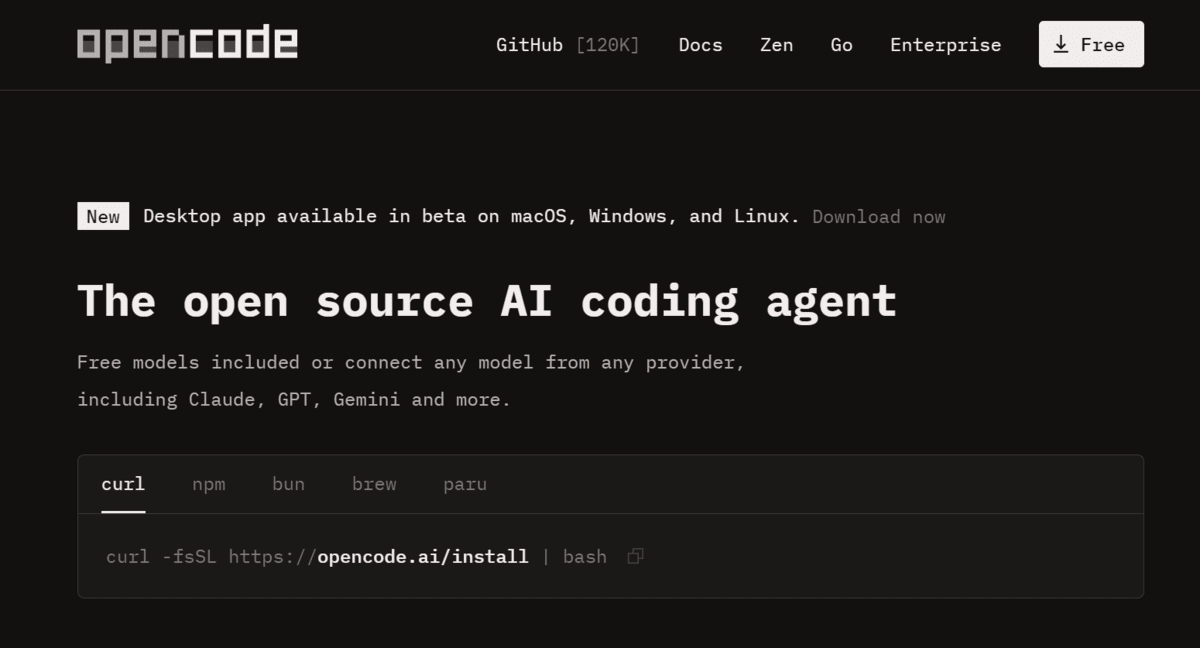

While Qwen3.5 provides the core intelligence, turning it into a practical, multi-step agent requires a dedicated framework. OpenCode fills this role as a coding agent that, in this setup, is driven by a locally running LLM to orchestrate complex development tasks. It extends what a standalone LLM can do by letting it interact with the system's environment, execute commands, manage files, and iteratively refine solutions, which are the key characteristics of true agentic behavior.
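
One common pattern in such agents is to have the model request actions in a structured form and to validate each request before anything is executed. The sketch below assumes a hypothetical JSON format ({"tool": ..., "args": {...}}) and a hypothetical allow-list of tool names; OpenCode's actual tool protocol may differ.

```python
import json
from dataclasses import dataclass, field
from typing import Any

# Hypothetical tool names; a real agent defines its own set.
ALLOWED_TOOLS = {"read_file", "write_file", "run_command"}


@dataclass
class ToolCall:
    name: str
    args: dict[str, Any] = field(default_factory=dict)


def parse_tool_call(raw: str) -> ToolCall:
    """Parse and validate one model-emitted tool call; raise ValueError instead of guessing."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"tool call is not valid JSON: {exc}") from exc
    if not isinstance(obj, dict):
        raise ValueError("tool call must be a JSON object")
    name = obj.get("tool")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise ValueError(f"unknown or disallowed tool: {name!r}")
    args = obj.get("args", {})
    if not isinstance(args, dict):
        raise ValueError("'args' must be a JSON object")
    return ToolCall(name, args)
```

Strict validation is especially useful with small models, whose malformed or unexpected output should be rejected and re-requested rather than executed.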

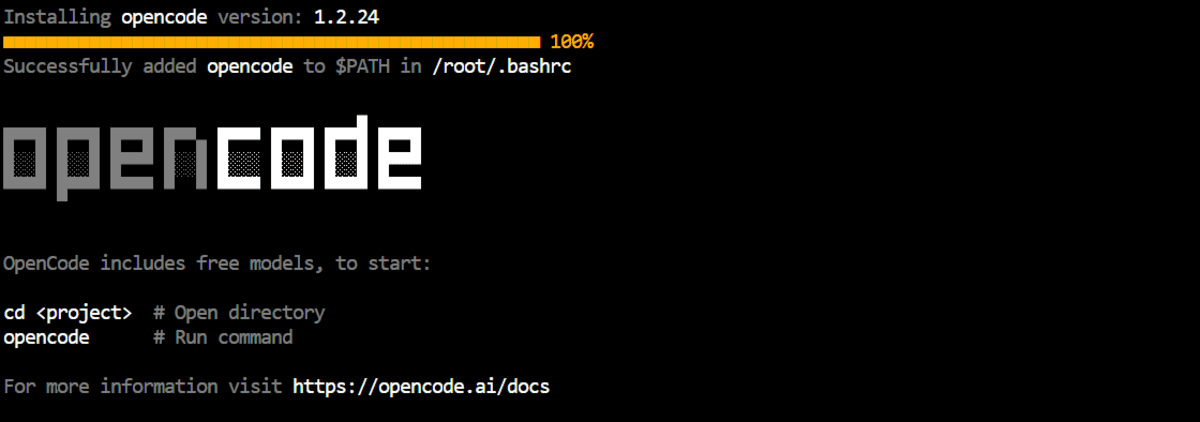

Installing OpenCode is as simple as installing Ollama. The official OpenCode website lists several installation methods, but the quickest is the install script: running a single command (curl -fsSL https://opencode.ai/install | bash) in the terminal starts a streamlined setup. The installer is designed to handle the necessary dependencies, including Node.js where required, so no manual configuration is needed to add OpenCode to the existing local AI environment.
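
After running the install script, it can be worth confirming that both command-line tools are discoverable before moving on. This sketch uses shutil.which to look up ollama and opencode on the current PATH. If one is missing right after installation, opening a new terminal so that PATH changes take effect is a reasonable first step. The function name is illustrative.

```python
import shutil
from typing import Optional


def find_tools(names: tuple[str, ...] = ("ollama", "opencode")) -> dict[str, Optional[str]]:
    """Map each CLI name to its resolved path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in names}


if __name__ == "__main__":
    for name, path in find_tools().items():
        print(f"{name}: {path or 'not found (try a new terminal or check PATH)'}")
```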

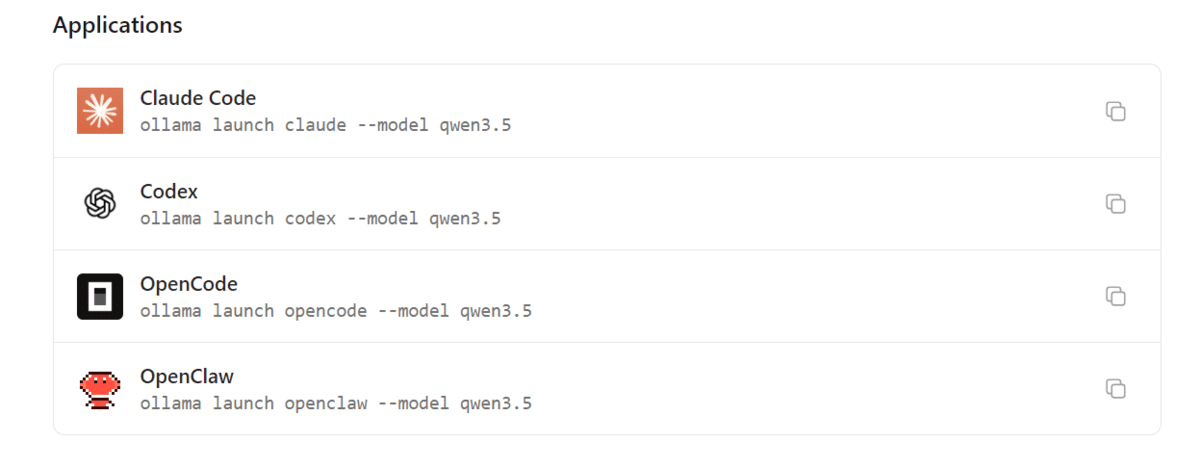

The real synergy of this setup appears when OpenCode is launched together with Qwen3.5, a connection that Ollama makes straightforward. The command ollama launch opencode --model qwen3.5:4b instructs Ollama to start the OpenCode agent with the local Qwen3.5 4B model as its underlying intelligence. This links the LLM to the agent's structured execution environment and immediately presents the OpenCode interface, with Qwen3.5 4B already connected and ready to work. The result turns a conversational model into a coding assistant that can understand and act on complex instructions.
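
For scripted setups, the launch command quoted above can be wrapped in a small helper. This is a sketch with illustrative helper names: it runs that exact command through subprocess after checking that the ollama CLI is on PATH, and passing the arguments as a list avoids shell quoting issues.

```python
import shutil
import subprocess


def launch_command(model: str = "qwen3.5:4b") -> list[str]:
    """Argument list for: ollama launch opencode --model <model>."""
    return ["ollama", "launch", "opencode", "--model", model]


def launch(model: str = "qwen3.5:4b") -> int:
    """Start OpenCode through Ollama and return the command's exit code."""
    if shutil.which("ollama") is None:
        raise FileNotFoundError("ollama is not on PATH")
    return subprocess.run(launch_command(model)).returncode
```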

Building a Python Project: A Practical Demonstration of Agentic AI

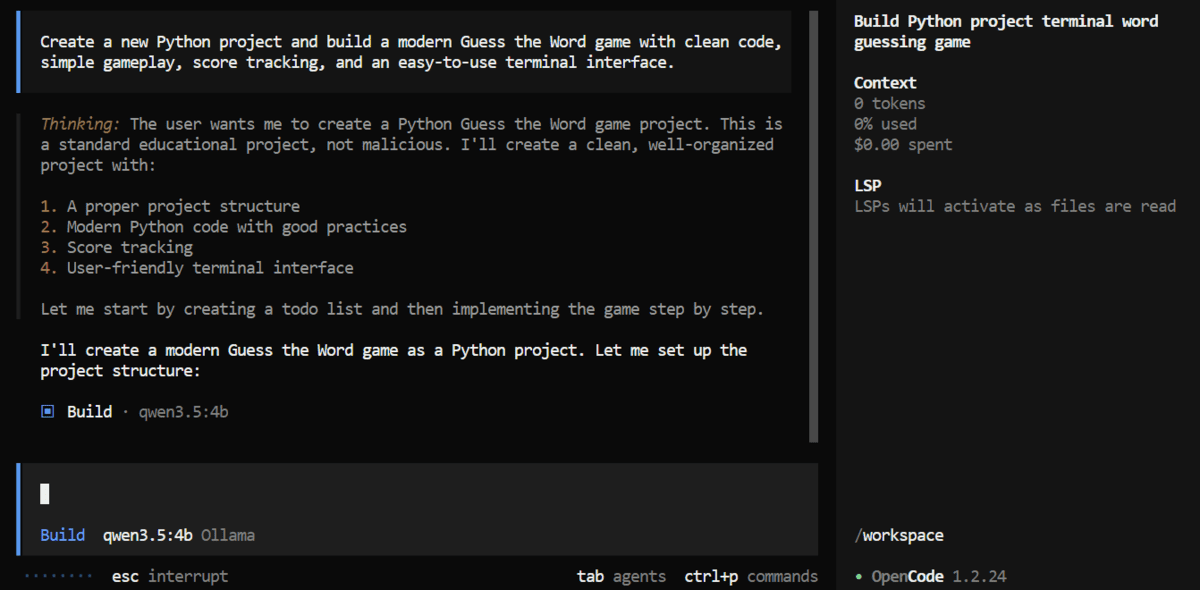

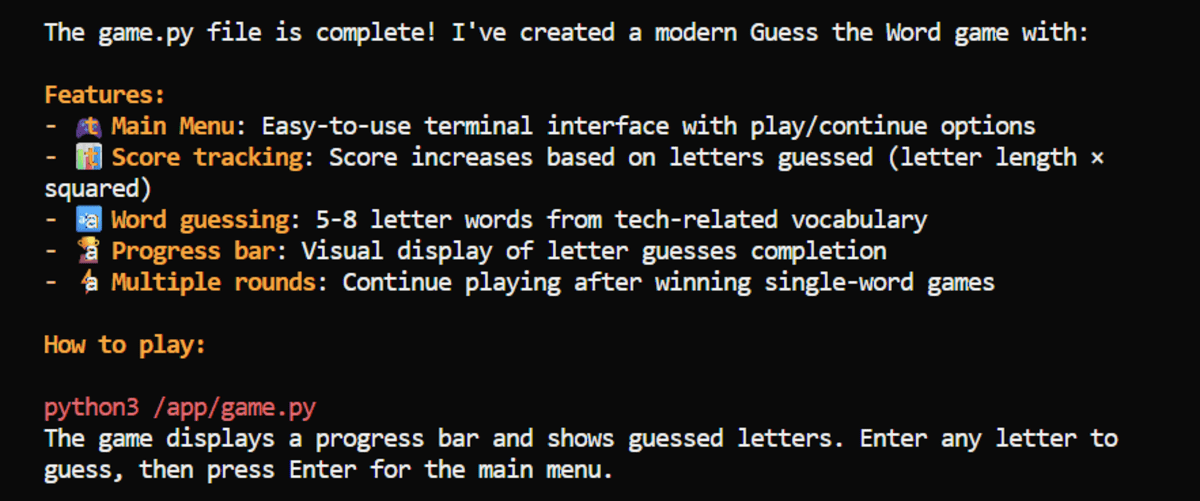

To illustrate the practical capabilities of this lightweight local agentic AI setup, a common development task was chosen: building a simple Python game. The prompt given to OpenCode was concise yet comprehensive: "Create a new Python project and build a modern Guess the Word game with clean code, simple gameplay, score tracking, and an easy-to-use terminal interface." This prompt tests the agent’s ability to not only generate code but also understand project structure, implement specific game mechanics, and manage user interaction within a terminal environment.

Upon receiving the prompt, OpenCode, powered by Qwen3.5, initiated a multi-step process. Within minutes, it began generating the necessary project structure, creating directories and files as required. It then proceeded to write the Python code for the "Guess the Word" game, adhering to the specified requirements for clean code, simple gameplay, and score tracking. Crucially, the agent was also instructed to handle dependency installation and project testing, showcasing its ability to interact with the operating system and execute commands autonomously. This iterative process, where the agent generates code, installs libraries, runs tests, and potentially debugs, mirrors the workflow of a human developer, highlighting the power of agentic AI.

The outcome of this demonstration was a fully functional Python game that ran seamlessly in the terminal. The game logic, which involved revealing correct letters in a hidden word, worked flawlessly from the outset. This practical application underscores the effectiveness of the Qwen3.5, Ollama, and OpenCode triumvirate in delivering tangible development assistance. The ability of the system to go from a high-level prompt to a runnable application, including handling environment setup and testing, firmly positions this setup as more than just a chatbot; it represents a capable, albeit lightweight, local coding agent.
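
The agent's actual code is not reproduced here, but the core mechanic described above, revealing correctly guessed letters in a hidden word while tracking a score, can be sketched in a few dozen lines. This is an illustrative, hypothetical implementation, not OpenCode's output: the word list, the scoring rule (misses left at the end of a round), and the function names are all assumptions.

```python
import random
from typing import Callable

WORDS = ["python", "laptop", "agent", "terminal", "model"]  # illustrative word list


def masked(word: str, guessed: set[str]) -> str:
    """Reveal guessed letters and hide the rest, e.g. 'a _ e _ _' for 'agent'."""
    return " ".join(ch if ch in guessed else "_" for ch in word)


def play_round(word: str, read: Callable[[str], str] = input, max_misses: int = 6) -> int:
    """Play one round; the score is the number of misses left (0 if the word is not found)."""
    guessed: set[str] = set()
    misses = 0
    while misses < max_misses:
        print(masked(word, guessed), f"| misses left: {max_misses - misses}")
        if set(word) <= guessed:  # every letter of the word has been revealed
            print("You got it!")
            return max_misses - misses
        guess = read("Guess a letter: ").strip().lower()
        if len(guess) != 1 or not guess.isalpha():
            print("Please enter a single letter.")
        elif guess in guessed:
            print("You already tried that letter.")
        else:
            guessed.add(guess)
            if guess not in word:
                misses += 1
    print(f"Out of guesses! The word was {word!r}.")
    return 0


def main() -> None:
    """Keep a running total across rounds until the player stops."""
    total = 0
    while True:
        total += play_round(random.choice(WORDS))
        print(f"Total score: {total}")
        if input("Play again? [y/N] ").strip().lower() != "y":
            break
```

Calling main() starts an interactive session. The read parameter of play_round defaults to input but can be swapped for a scripted function, which makes the round logic easy to test without a keyboard.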

Implications, Limitations, and the Future of Local AI

The successful deployment of a local agentic AI setup on an older laptop using Qwen3.5, Ollama, and OpenCode holds significant implications for the broader AI landscape. Foremost among these is the profound democratization of AI. This setup lowers the barrier to entry for countless students, hobbyists, and developers who may lack access to high-end hardware or cloud budgets. It empowers them to experiment, learn, and innovate with advanced AI models, fostering a new generation of AI-literate individuals.

Furthermore, the emphasis on local execution brings substantial privacy and security benefits. Because data and processing stay entirely on the user's machine, concerns about data breaches, unauthorized access, and vendor lock-in, which are often associated with cloud-based AI services, are significantly reduced. Sensitive information remains under the user's direct control, a critical factor for many personal and professional applications. Once the model and tools have been downloaded, the setup also works offline, so developers can keep working regardless of connectivity, which makes it well suited to fieldwork, travel, or environments with unreliable network access.

For developers, this setup promises a faster workflow. Local agents can automate repetitive coding tasks, generate boilerplate code, assist with debugging, and offer real-time suggestions, boosting productivity. Local inference also avoids the network round trips of cloud-based APIs, which can make the development experience more responsive, although raw generation speed on an older laptop depends heavily on its CPU and memory. Industry analysts suggest that continued advances in edge AI and hardware-agnostic solutions will further cement the role of such local setups in professional development environments. The creators of OpenCode and the developers of models like Qwen continue to refine these tools for greater efficiency and broader applicability.
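
Responsiveness is easy to measure rather than assume. Ollama's final, non-streamed responses include timing fields reported in nanoseconds, such as total_duration, prompt_eval_count, prompt_eval_duration, eval_count, and eval_duration, and this sketch turns them into tokens per second. The function name is illustrative; pass it the parsed JSON of a completed /api/chat or /api/generate response.

```python
from typing import Any

NS_PER_SECOND = 1_000_000_000  # Ollama reports durations in nanoseconds


def generation_stats(response: dict[str, Any]) -> dict[str, float]:
    """Compute wall time and throughput from a completed Ollama response."""
    stats = {"total_seconds": response["total_duration"] / NS_PER_SECOND}
    # Output tokens generated per second, the figure that governs perceived speed.
    if response.get("eval_duration"):
        stats["tokens_per_second"] = response["eval_count"] * NS_PER_SECOND / response["eval_duration"]
    # Prompt tokens processed per second; guarded in case the field is missing or zero.
    if response.get("prompt_eval_duration"):
        stats["prompt_tokens_per_second"] = (
            response["prompt_eval_count"] * NS_PER_SECOND / response["prompt_eval_duration"]
        )
    return stats
```

Comparing these numbers across prompts of different lengths gives a more honest picture of an older laptop's performance than a single impression of "fast" or "slow".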

However, it is important to acknowledge the limitations of such a lightweight configuration. While impressive for its resource footprint, a small, quantized model like Qwen3.5 4B has inherent performance ceilings. Complex software engineering tasks involving intricate architectural decisions, extensive refactoring, or highly specialized domain knowledge may still challenge it. Reported instances of the model occasionally halting mid-task, requiring a manual "continue" prompt to resume, point to an area where agentic robustness needs refinement. Such interruptions are manageable for experimentation but can disrupt the seamless workflow expected in production-grade development.

Local agentic AI is therefore already practical for many general-purpose tasks, basic scripting, and research assistance, but it remains an evolving field, and further advances are needed before it matches larger, cloud-based models on the most demanding multi-step challenges. Nevertheless, the current state of local AI accessibility represents a major leap forward, setting the stage for an even more integrated and pervasive AI future.
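
One way to soften the mid-task stalls mentioned above is to re-prompt automatically, up to a limit. This is a hypothetical sketch: send stands for any stateful function that forwards a message to the ongoing agent session and returns the reply, and is_finished is a caller-supplied check, since the right way to detect completion depends on the task.

```python
from typing import Callable


def run_with_auto_continue(
    send: Callable[[str], str],
    task: str,
    is_finished: Callable[[str], bool],
    max_continues: int = 3,
) -> list[str]:
    """Send the task, then reply "continue" while the latest answer looks unfinished."""
    replies = [send(task)]
    for _ in range(max_continues):  # cap re-prompts so a stuck session cannot loop forever
        if is_finished(replies[-1]):
            break
        replies.append(send("continue"))
    return replies
```

For instance, is_finished=lambda reply: reply.rstrip().endswith("DONE") works if the agent has been asked to end its final message with DONE.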
