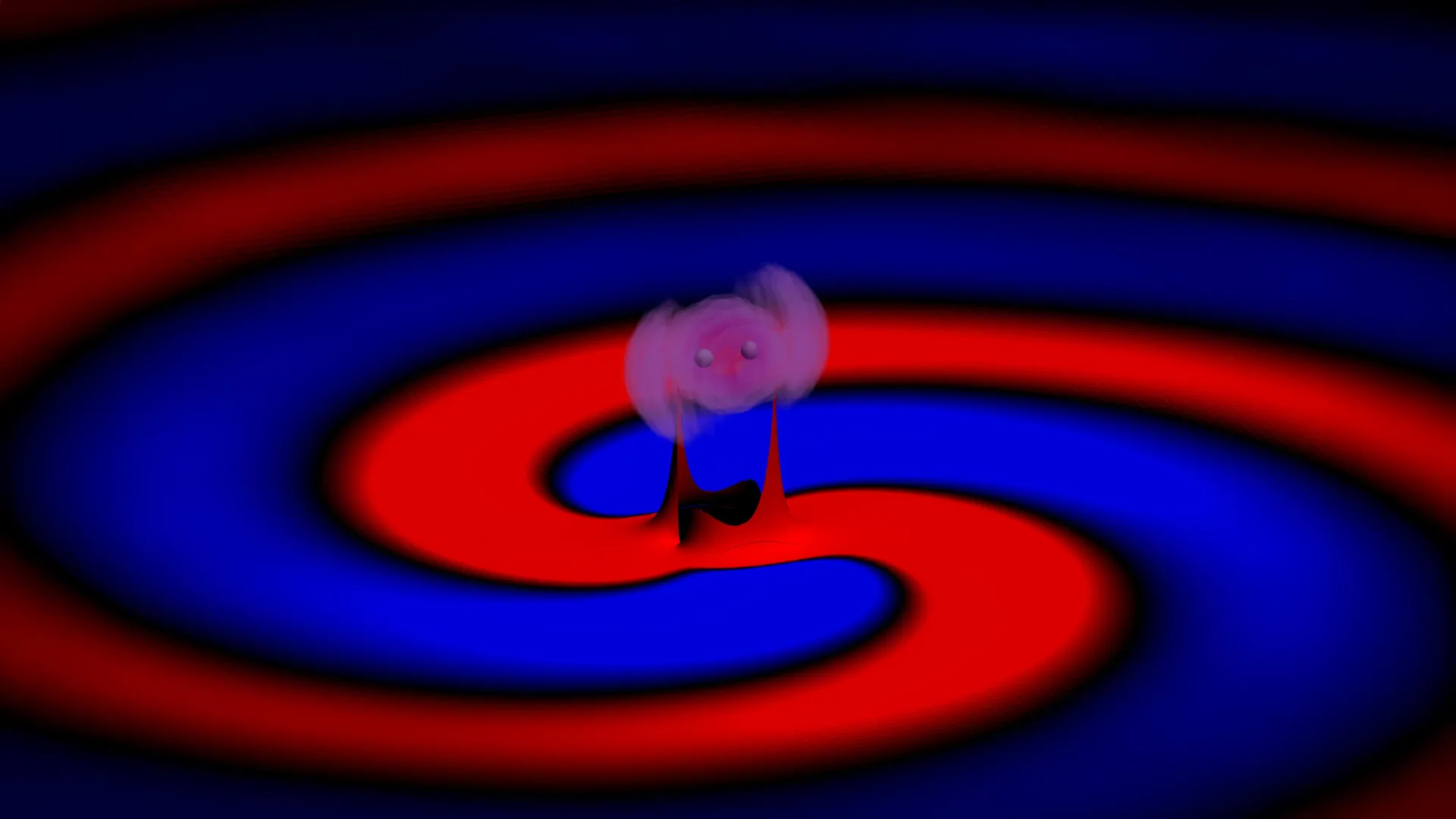

The natural world has long served as the ultimate laboratory for camouflage and adaptive surfaces. Among the most sophisticated practitioners of these biological arts are cephalopods—octopuses, cuttlefish, and squid—which possess the uncanny ability to vanish into their environment in a fraction of a second. This feat is achieved through a complex coordination of skin cells that alter color and muscular organs called papillae that change physical texture. While scientists have spent decades attempting to replicate these dual capabilities in synthetic materials, they have often been limited by scale, speed, or the inability to combine texture and color into a single, flexible system.

In a landmark study published in the journal Nature, a multidisciplinary team of researchers at Stanford University has announced a significant leap forward in materials science. They have developed a soft, flexible material capable of rapidly shifting its surface topography and visual properties at the micron scale—features smaller than the diameter of a human hair. By utilizing water-responsive polymers and precision engineering, the team has created a platform that could revolutionize fields ranging from military camouflage and soft robotics to wearable electronics and advanced nanophotonics.

The Science of Synthetic Mimicry: Bridging Biology and Engineering

In nature, the octopus relies on a hierarchical system of organs to achieve its "disappearing act." Chromatophores, tiny sacs filled with pigment, expand or contract to change color, while iridophores and leucophores reflect light to create iridescent or white patterns. Simultaneously, the animal’s nervous system triggers the papillae to rise or flatten, mimicking the jagged edges of coral or the smoothness of a pebble.

The Stanford team, led by doctoral student Siddharth Doshi and professors Nicholas Melosh and Mark Brongersma, sought to replicate this combined control of appearance and texture in a synthetic material. "Textures are crucial to the way we experience objects, both in how they look and how they feel," said Doshi, the paper’s first author. "These animals can physically change their bodies at close to the micron scale, and now we can dynamically control the topography of a material, and the visual properties linked to it, at this same scale."

The breakthrough lies in the ability to manipulate "swellable" materials with extreme precision. Previous attempts at creating adaptive skins often relied on bulky mechanical actuators or slow chemical reactions. The Stanford approach instead uses a soft polymer film that responds to environmental triggers, allowing rapid transformations that are both reversible and highly detailed.

A Serendipitous Discovery in the Lab

The path to this innovation was not entirely linear. As is often the case in high-level research, a moment of accidental observation provided the necessary spark. While working on an earlier experiment involving nanostructures on polymer films, Doshi was using a scanning electron microscope (SEM) to examine his samples. Rather than discarding the polymer after the imaging process, he decided to reuse it for subsequent tests.

During these follow-up observations, Doshi noticed something unusual: the areas of the film that had been previously exposed to the electron beam of the microscope behaved differently than the unexposed regions. Specifically, they displayed distinct colors and swelled at different rates when exposed to moisture.

"We realized that we could use these electron beams to control topography at very fine scales," Doshi noted. "It was definitely serendipitous." This realization shifted the focus of the research toward using electron-beam lithography—a standard tool in semiconductor manufacturing—to "program" the polymer film. By directing a focused beam of electrons at specific points on the material, the researchers could alter the polymer’s cross-linking density. This, in turn, dictated how much water each specific region could absorb.

Mechanics of the Material: Swelling and Structural Color

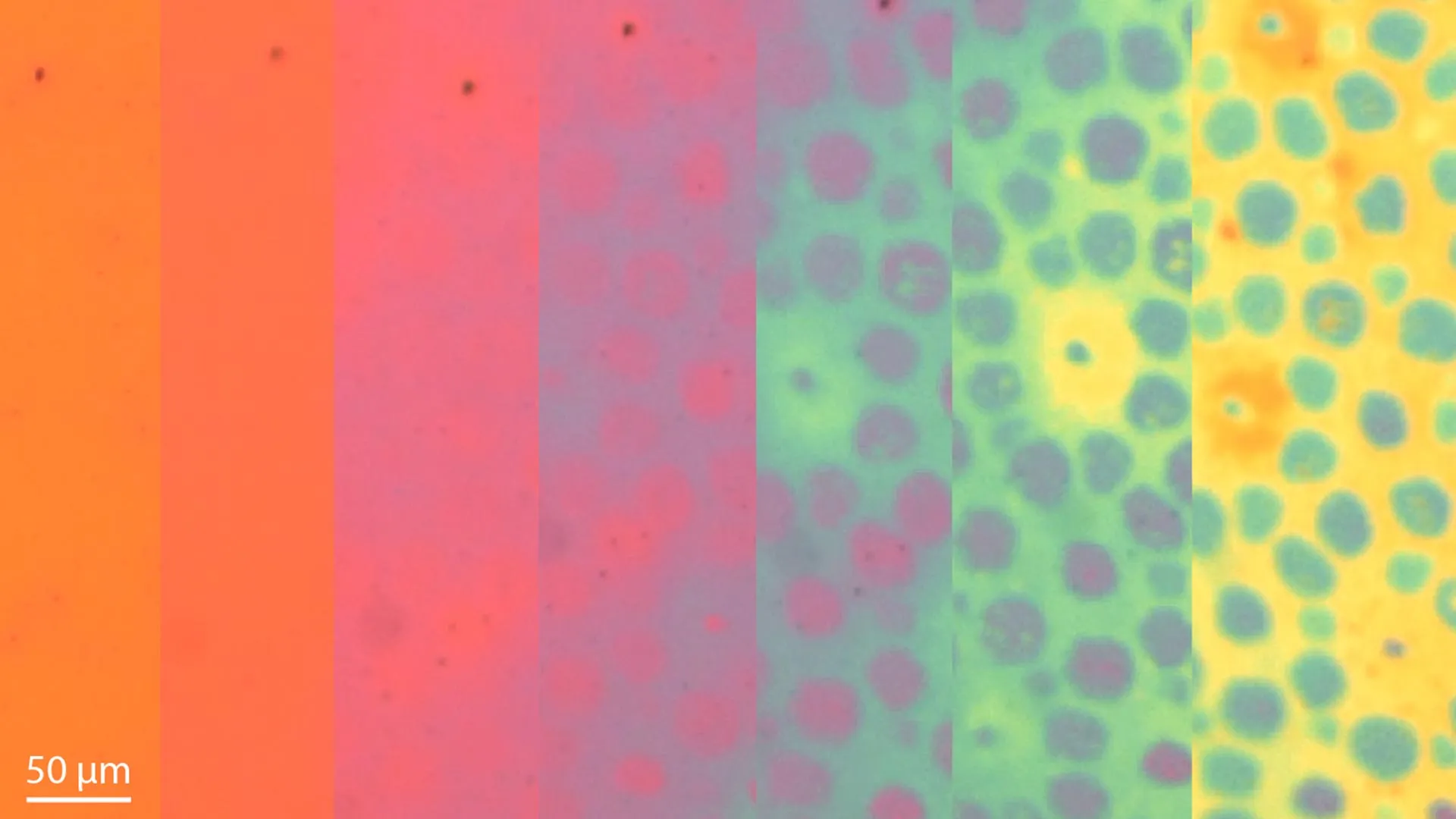

The core of the technology involves a water-responsive polymer film that acts as a dynamic canvas. When the film is exposed to water, the "programmed" regions swell at different rates based on the amount of electron-beam exposure they received. This differential swelling transforms a flat, two-dimensional surface into a complex three-dimensional landscape.

To demonstrate the precision of this technique, the researchers created a microscopic relief of Yosemite’s famous El Capitan. In its dry state, the polymer film appears as a perfectly flat, translucent sheet. When moisture is introduced, the "mountain" rises from the surface, forming a detailed 3D structure. The process is entirely reversible: applying an alcohol-based solvent draws the water out, and the material returns to its original flat state.
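The differential swelling described above can be pictured as a map from electron-beam dose to surface height. The sketch below is a toy model only: the dry thickness, the swelling ratios, and the linear dose response are hypothetical values chosen for illustration, and the article does not say whether more exposure increases or decreases swelling.

```python
# Toy model: electron-beam dose -> local swelling -> surface height.
# Every number here, and the linear dose response, is an illustrative
# assumption rather than a value reported in the study.

DRY_THICKNESS_NM = 200.0  # hypothetical thickness of the dry film
MAX_SWELL_RATIO = 2.0     # hypothetical wet/dry ratio for unexposed film
MIN_SWELL_RATIO = 1.0     # hypothetical wet/dry ratio at full dose


def swell_ratio(dose: float) -> float:
    """Map a normalized dose in [0, 1] to a wet/dry thickness ratio.

    Assumes more exposure means more cross-linking and less water uptake.
    The real response may differ in shape and even in direction.
    """
    if not 0.0 <= dose <= 1.0:
        raise ValueError("dose must be normalized to [0, 1]")
    return MAX_SWELL_RATIO - dose * (MAX_SWELL_RATIO - MIN_SWELL_RATIO)


def wet_height_map(dose_map):
    """Return the swollen thickness in nm for each pixel of a 2D dose map."""
    return [[DRY_THICKNESS_NM * swell_ratio(d) for d in row] for row in dose_map]


# A 3x3 "programmed" pattern: lightly exposed center, heavily exposed corners.
dose_map = [
    [1.0, 0.5, 1.0],
    [0.5, 0.0, 0.5],
    [1.0, 0.5, 1.0],
]
heights = wet_height_map(dose_map)
for row in heights:
    print(row)
```

In this toy pattern the film is flat when dry (200 nm everywhere) and forms a single peak at the center when wet, which is the same idea as the El Capitan relief at a far coarser resolution.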

Beyond physical texture, the material also manipulates light through structural color. By integrating the polymer between thin layers of metal, the researchers created Fabry-Pérot resonators. These are optical cavities that selectively reflect specific wavelengths of light based on the thickness of the material. As the polymer swells or contracts, the distance between the metal layers changes, causing the material to shift through a vibrant spectrum of colors. This allows the researchers to control not just the shape of the surface, but also its "finish"—switching between glossy, matte, and various chromatic hues.
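The link between thickness and color can be illustrated with the textbook resonance condition for an idealized Fabry-Pérot cavity at normal incidence, 2·n·d = m·λ. The sketch below is a simplification under stated assumptions: it ignores the phase shift on reflection from the metal layers, holds the refractive index fixed at a hypothetical 1.5 even though water uptake would change it, and uses made-up thicknesses. Whether a resonance appears as a bright or dark band in reflection depends on the mirror design, which the article does not describe.

```python
# Idealized Fabry-Perot cavity at normal incidence: 2 * n * d = m * wavelength.
# Phase shifts at the metal mirrors are ignored, and the refractive index and
# thicknesses are hypothetical values, not figures from the study.

def resonant_wavelengths_nm(thickness_nm, refractive_index=1.5,
                            band=(400.0, 700.0)):
    """List resonant wavelengths in nm that fall inside the given band."""
    optical_path = 2.0 * refractive_index * thickness_nm
    lo, hi = band
    result = []
    m = 1
    # Higher orders give shorter wavelengths; stop once below the band.
    while optical_path / m >= lo:
        wavelength = optical_path / m
        if wavelength <= hi:
            result.append(round(wavelength, 1))
        m += 1
    return result


# As the film swells, the cavity thickens and the resonance moves to
# longer wavelengths.
for d in (140.0, 180.0, 220.0):
    print(d, resonant_wavelengths_nm(d))
```

With these assumed numbers, swelling from 140 nm to 220 nm moves the first-order resonance from 420 nm to 660 nm, which is the kind of thickness-driven color shift the paragraph above describes.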

Technical Data and Experimental Results

The study in Nature highlights several properties that distinguish this material from earlier adaptive "skins":

- Scale of Control: The material can form features at the micron and sub-micron scale, allowing for resolutions that exceed the capabilities of traditional 3D printing or mechanical embossing.

- Reversibility: The transition between states can be repeated numerous times without significant degradation of the polymer structure, a critical requirement for long-term applications in robotics or wearables.

- Dual-Modality: Unlike materials that can only change color (electrochromics) or only change shape (shape-memory alloys), this system links topography and optical properties, mimicking the integrated nature of cephalopod skin.

- Energy Efficiency: The material does not require a constant power source to maintain its state. Swelling is a passive response to the presence of a solvent or moisture, and the electron-beam "programming" is done in advance, during manufacturing.

Professor Nicholas Melosh, a senior author on the paper, emphasized the uniqueness of the system. "There’s just no other system that can be this soft and swellable, and that you can pattern at the nanoscale," Melosh said. "You can imagine all kinds of different applications."

Broader Implications: From Camouflage to Bioengineering

The potential applications for this technology are vast and span multiple industries. In the realm of defense, the material could be used to create advanced camouflage for both personnel and equipment. Unlike current camouflage patterns, which are static, a suit made from this material could theoretically adjust its texture to match the roughness of a rocky terrain or the smoothness of a leaf, while simultaneously shifting its color to match the environment.

In robotics, the ability to dynamically alter surface texture means that a robot’s "skin" could change its friction coefficient on demand. A small search-and-rescue robot could become "sticky" to climb a vertical wall and then "slick" to slide through a narrow pipe.

"Small changes in the properties of soft materials over micron distances are finally possible, which will open up all sorts of possibilities," Melosh added. This includes bioengineering, where the material could be used to study how cells respond to changing physical environments. Since cells are highly sensitive to the topography of the surfaces they grow on, a dynamic material could allow researchers to "steer" cell growth or differentiation in real time.

Furthermore, the field of nanophotonics stands to benefit from this breakthrough. By controlling light at such small scales, the material could be used to create new types of optical switches, high-security encryption labels that only appear under specific conditions, or flexible displays for wearable devices that are more durable and versatile than current OLED technology.

The Road Ahead: AI and Automation

While the current version of the material requires manual tuning of water and solvent levels to achieve specific patterns, the Stanford team is already looking toward automation. The next phase of research involves integrating computer vision and artificial intelligence to create a fully autonomous system.

"We want to be able to control this with neural networks, basically an AI-based system, that could compare the skin and its background, then automatically modulate it to match in real time, without human intervention," Doshi explained. By pairing the material with a camera and a machine-learning algorithm, the "synthetic skin" could sense its surroundings and trigger the chemical or moisture adjustments needed to match them.

Professor Mark Brongersma sees the move to soft materials as a major shift for optics. "The introduction of soft materials that can expand, contract, and alter their shape opens up an entirely new toolbox in the world of optics to manipulate how things look," he said.
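The compare-and-adjust loop Doshi describes can be sketched in a few lines. This is not the team's planned system: it swaps the neural network for a plain proportional controller, and the camera reading, the linear color response, and the `gain` value are all hypothetical stand-ins.

```python
# Hypothetical closed-loop sketch: nudge a hydration level until the skin's
# measured color matches the background. Both the sensor and the actuator
# are simulated; nothing here comes from the study.

def simulated_skin_wavelength_nm(hydration: float) -> float:
    """Stand-in for a camera reading of the skin's dominant color.

    Assumes color shifts linearly from 420 nm (dry) to 660 nm (fully wet).
    """
    return 420.0 + 240.0 * hydration


def match_background(target_nm, hydration=0.0, gain=0.002,
                     tolerance_nm=1.0, max_steps=100):
    """Proportional control loop; returns (hydration, steps_taken)."""
    for step in range(max_steps):
        error = target_nm - simulated_skin_wavelength_nm(hydration)
        if abs(error) <= tolerance_nm:
            return hydration, step
        # Move hydration toward the target, clamped to the range [0, 1].
        hydration = min(1.0, max(0.0, hydration + gain * error))
    return hydration, max_steps


final_hydration, steps = match_background(target_nm=540.0)
print(final_hydration, steps, simulated_skin_wavelength_nm(final_hydration))
```

A learned controller would replace the fixed `gain` and the single-number color comparison with a model that reads whole images, but the sense, compare, and adjust structure would stay the same.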

Research Support and Collaborative Effort

The success of this project is a testament to the interdisciplinary environment at Stanford. The research team included experts from materials science, applied physics, photonics, and microfluidics. Co-authors included Alberto Salleo, Polly Fordyce, Nicholas A. Güsken, Gerwin Dijk, Jennifer E. Ortiz-Cárdenas, Johan Carlström, Peter Suzuki, and Bohan Li.

The work was supported by the Stanford Graduate Fellowship, the Meta PhD Fellowship, the Wu Tsai Human Performance Alliance, the Joe and Clara Tsai Foundation, and the German National Academy of Sciences Leopoldina. Federal funding was provided by the Department of Energy, the Air Force Office of Scientific Research, and the National Science Foundation.

As the team continues to refine the material and explore its integration with AI, the boundary between biological capability and engineered reality continues to blur. The Stanford "octopus skin" represents not just a new material, but a new philosophy in design—one where the static becomes dynamic, and the inanimate begins to mimic the complex, adaptive beauty of life itself.
